Run:AI automates resource allocation and workload orchestration for deep learning inference infrastructure. You can now automate resource management and workload orchestration for machine learning infrastructure without compromising performance. This allows you to focus on building models while Run:AI handles the rest.

With Run:AI, you don’t have to worry about manually managing resources across multiple GPUs, VMs, or containers.

Instead, you can simply use Run:AI to manage everything for you. And since Run:AI is built on Kubernetes, you can scale out easily to meet demand.

Here are some of the capabilities that come with Run:AI:

Automatically allocate resources based on usage patterns Set up guaranteed quotas of GPU memory, CPU cores, and storage space Dynamically adjust resource allocations per job Scale out seamlessly to support large volumes of data and jobs Keep track of costs and ensure optimal pricingLearn More About Deep Learning GPU

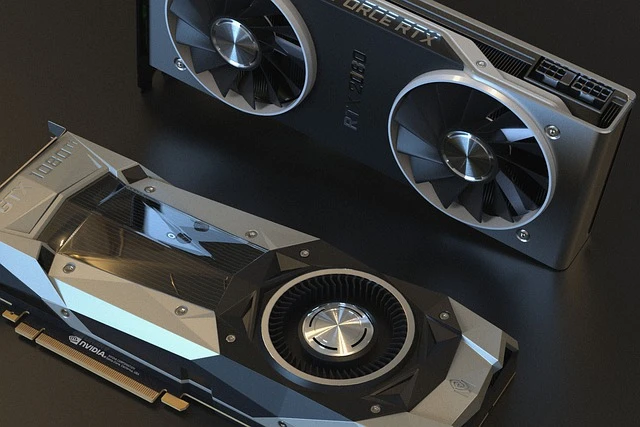

Deep Learning GPU are the latest generation of graphics processing units (GPU). They're designed specifically to handle machine learning tasks like object recognition, speech recognition, natural language understanding, etc.

While there are many types of deep learning GPUs, we'll focus on those used for training neural networks. These models are trained using large amounts of data and require high performance computing power.

The NVIDIA Tesla K80 is one of the most powerful GPUs you can buy today. It has 448 CUDA cores and 8 GB of GDDR5 memory.

This device supports both CUDA 9.0 and cuDNN 7.6.1. In addition, it has support for NVENC video encoding and decoding up to 4K resolution at 60 frames per second.

Best GPU for Deep Learning

GPUs are critical components of deep learning systems, especially those used for large-scale applications such as self-driving cars and autonomous drones.

To make sure you choose the right GPU for your application, it’s important to understand what makes a good GPU and how to evaluate different models.

In this article we cover some of the most common types of GPUs, including consumer and data center GPUs, and discuss factors to consider when choosing the right GPU for your needs.

PyTorch GPU: Working with CUDA in PyTorch

The PyTorch team recently announced support for NVIDIA's CUDA standard. This includes automatic parallelization of operations across multiple GPUs, multi-GPU training, and single-node inference. In addition, we've added some simple examples demonstrating how to use CUDA with PyTorch.

NVIDIA Deep Learning GPU

The NVIDIA deep learning software development kit (SDK) provides developers with access to powerful computing resources for training neural networks. This guide explains how to choose the right NVIDIA GPU for your project.

You'll learn about the different types of NVIDIA GPUs, including consumer and professional models, and find out whether you should use one type over another.

You'll discover why choosing a specific model matter, and why some models perform better than others under certain conditions.

Finally, we cover best practices for working with NVIDIA GPUs, such as selecting appropriate libraries and frameworks, optimizing code, and dealing with hardware failures.

FPGA for Deep Learning

The field-programmable gate array chip enables you to reprogram logic circuits. For example, it allows you to change the functionality of one or multiple gates without changing the physical design of the circuit.

A field programmable gate array provides flexibility and scalability to digital designs. They allow engineers to customize hardware quickly and easily.

In addition to programming logic gates, FPGAs are used to implement complex algorithms such as artificial neural networks (ANN). An ANN is a type of machine learning algorithm inspired by biological neurons.

These algorithms can learn from data and help machines perform tasks like speech recognition, image classification, and natural language processing. In fact, FPGAs are often used for implementing ANNs because of their high performance and low power consumption.

However, FPGAs do come with some drawbacks. Unlike CPUs, GPUs, and ASICs, FPGAs cannot run software directly. Instead, they must be programmed via HDL code. This process takes longer than running native code. Also, FPGAs require specialized tools to develop and debug applications.

Finally, FPGAs aren't always well suited for deep learning implementations. Some deep learning frameworks don't support FPGAs, and others have limited support. However, there are some frameworks that can take advantage of FPGAs' strengths. One of those is TensorFlow Lite.

0

Sign in to leave a comment.