Introduction

Ever wondered why a high-performing model makes a wrong prediction? As artificial intelligence continues to shape industries, a critical challenge has emerged: understanding how complex models make decisions. That’s where model interpretability techniques step in—offering tools to understand, explain, and trust your machine learning models.

While models like neural networks and ensemble methods deliver impressive accuracy, they often operate as black boxes, producing predictions without showing how they were reached. This guide walks you through the most widely used techniques for interpreting models, from simple linear regressions to deep neural networks. You'll learn when to use each method, how it works, and what it adds.

Whether you're a data scientist, analyst, or decision-maker, this article will help you bring transparency and trust to your machine learning workflows.

What Is Model Interpretability?

Model interpretability refers to how easily a human can understand the predictions made by a machine learning model. It answers the key question: "Why did the model make that prediction?"

Why Interpretability Matters

- Trust and Accountability: Users and stakeholders are more likely to trust interpretable models.

- Compliance: Regulated industries like healthcare and finance often require clear explanations.

- Debugging and Improvement: Helps identify errors, biases, or unexpected behavior in models.

Two Types of Interpretability

- Intrinsic Interpretability: Built-in clarity from models like decision trees and linear regression.

- Post-Hoc Interpretability: External techniques used to explain complex models after training.
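
To make intrinsic interpretability concrete, here is a minimal sketch using scikit-learn's `LinearRegression`. The data is synthetic and the feature names `x0` to `x2` are made up for illustration; the point is only that the fitted coefficients can be read directly as the explanation.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)
X = rng.normal(size=(500, 3))
# Hypothetical ground truth: y depends on x0 and x1 only; x2 is irrelevant.
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(scale=0.1, size=500)

model = LinearRegression().fit(X, y)

# Each coefficient is the change in the prediction per unit change in that feature.
for name, coef in zip(["x0", "x1", "x2"], model.coef_):
    print(f"{name}: {coef:+.2f}")
```

No extra tooling is needed here: the model's own parameters are the explanation. Post-hoc methods exist for models where no such direct reading is possible.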

Interpretable vs. Complex Models

Different models vary in how transparent their decision processes are:

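| Model Type | Interpretability Level | Example Models |
| --- | --- | --- |
| Linear Models | High | Linear Regression, Logistic Regression |
| Decision Trees | Medium to High | CART (single trees, especially shallow ones) |
| SVMs & Ensembles | Medium to Low | Kernel SVMs, Random Forest, XGBoost, Gradient Boosting |
| Neural Networks | Low | CNNs, RNNs, DNNs |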

Understanding your model type helps in selecting the right interpretability technique.

Global vs. Local Interpretability

Global Interpretability

Focuses on understanding the model as a whole.

Use Cases:

- Knowing which features are most important overall

- Explaining the model’s average behavior

Local Interpretability

Focuses on individual predictions.

Use Cases:

- Justifying why a loan was denied

- Explaining a specific patient’s diagnosis

Both perspectives are crucial for building fair and transparent systems.
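
The difference between the two views can be sketched with a plain linear model fitted on synthetic data. All names and numbers below are illustrative assumptions. The global view ranks features across the whole dataset, while the local view breaks one row's prediction into per-feature contributions.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(400, 3))
# Synthetic target: x0 has the strongest effect, x2 has none.
y = 1.5 * X[:, 0] - 0.5 * X[:, 1] + rng.normal(scale=0.1, size=400)

# Fit a linear model with an intercept using ordinary least squares.
A = np.column_stack([np.ones(len(X)), X])
coef, *_ = np.linalg.lstsq(A, y, rcond=None)
intercept, weights = coef[0], coef[1:]

# Global view: how strongly each feature moves predictions across the dataset.
global_importance = np.abs(weights) * X.std(axis=0)

# Local view: how each feature moves ONE prediction away from the average.
row = X[0]
local_contrib = weights * (row - X.mean(axis=0))
prediction = intercept + weights @ row
average_prediction = (A @ coef).mean()

print("global:", np.round(global_importance, 3))
print("local: ", np.round(local_contrib, 3))
```

For a linear model, the local contributions add up exactly to the gap between this row's prediction and the average prediction. Post-hoc methods such as SHAP aim for the same kind of additive breakdown on models where it is not available directly.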

Top Model Interpretability Techniques Explained

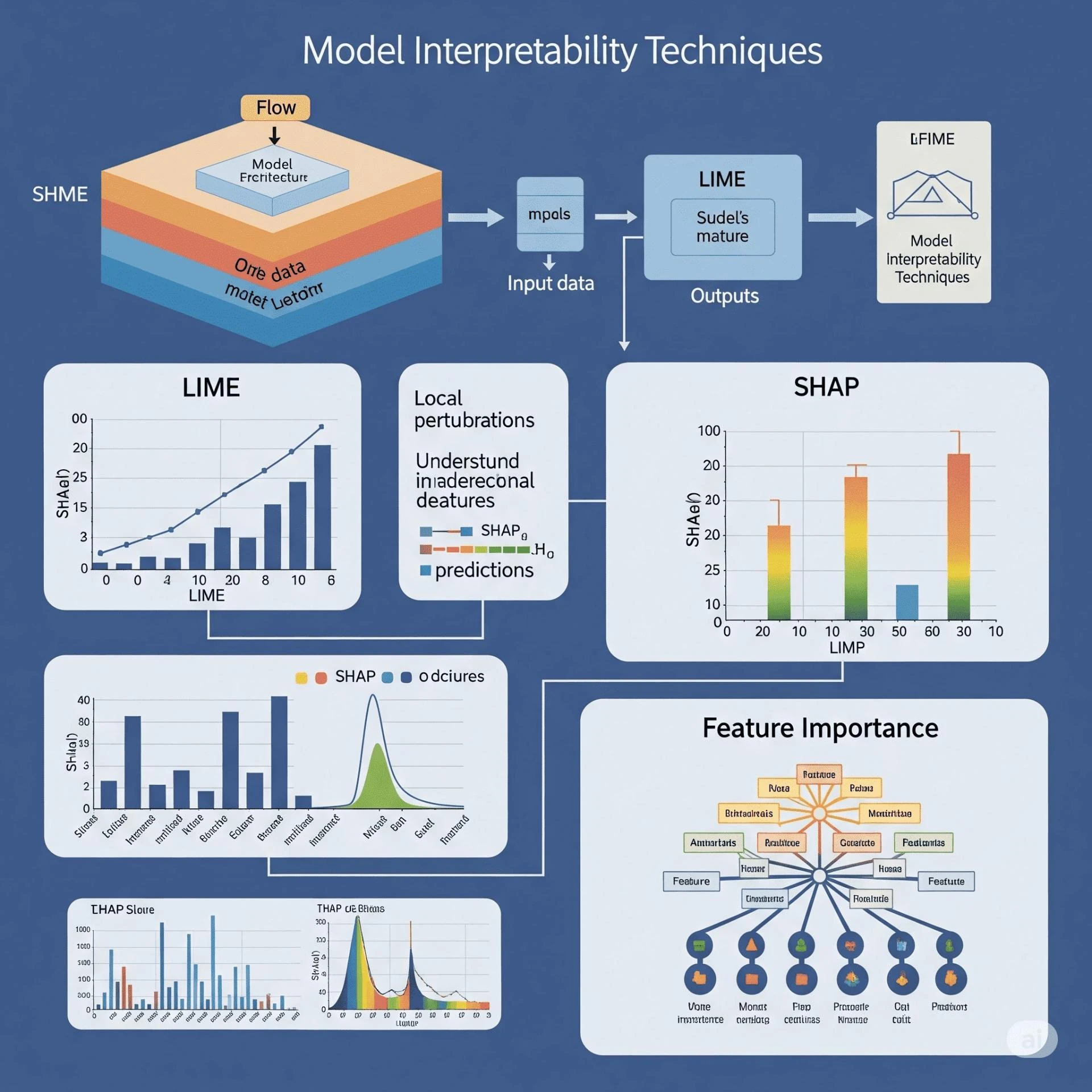

1. Feature Importance

Ranks features based on their influence on predictions.

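- Permutation Importance: Shuffles one feature's values and measures how much the model's score (for example, accuracy) drops, ideally on held-out data.

- Gini Importance: Adds up the impurity reduction from every tree split that uses the feature. It is computed from training data and tends to favor features with many distinct values.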

Use Case: Understand which features are driving the model.
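
Both rankings can be compared side by side. The sketch below assumes scikit-learn and uses a synthetic classification task in which `x0` matters most, `x1` matters less, and `x2` is noise; the dataset and settings are illustrative only.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 3))
# Synthetic labels: x0 has the largest effect, x1 a smaller one, x2 none.
y = (2.0 * X[:, 0] + 1.0 * X[:, 1] > 0).astype(int)

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)
model = RandomForestClassifier(n_estimators=100, random_state=0).fit(X_train, y_train)

# Gini (impurity-based) importance, derived from the training-time splits.
gini_importance = model.feature_importances_

# Permutation importance: mean drop in held-out accuracy when a feature is shuffled.
perm = permutation_importance(
    model, X_test, y_test, scoring="accuracy", n_repeats=10, random_state=0
)

for i in range(X.shape[1]):
    print(f"x{i}: gini={gini_importance[i]:.3f}  "
          f"permutation={perm.importances_mean[i]:.3f}")
```

When the two rankings disagree, the permutation result is usually the safer one to report, because it is measured on data the model has not seen.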

2. Partial Dependence Plots (PDPs)

Show how the average prediction changes as one feature varies. The other features are not held at fixed values; the prediction is averaged over their observed values in the data.

Benefits:

- Highlights non-linear effects

- Useful for understanding global relationships

Limitations:

- Assumes the plotted feature is independent of the others; with correlated features, the average includes unrealistic combinations
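
The averaging step is easy to write by hand. The following is a minimal sketch, not a replacement for the partial dependence utilities in `sklearn.inspection`; the helper name `partial_dependence_1d` and the synthetic data are assumptions made for this example.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor

def partial_dependence_1d(model, X, feature, grid):
    """Average prediction with `feature` forced to each grid value in turn."""
    averages = []
    for value in grid:
        X_mod = X.copy()
        X_mod[:, feature] = value  # overwrite the feature for every row
        averages.append(model.predict(X_mod).mean())
    return np.array(averages)

rng = np.random.default_rng(0)
X = rng.uniform(-2, 2, size=(500, 2))
# Synthetic target with a non-linear (quadratic) effect of feature 0.
y = X[:, 0] ** 2 + X[:, 1] + rng.normal(scale=0.1, size=500)

model = GradientBoostingRegressor(random_state=0).fit(X, y)

grid = np.linspace(-2, 2, 9)
pdp = partial_dependence_1d(model, X, feature=0, grid=grid)
for value, avg in zip(grid, pdp):
    print(f"x0={value:+.1f}  average prediction={avg:.2f}")
```

Plotting `pdp` against `grid` should show a U-shaped curve here, which is the non-linear effect a single coefficient could not express.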

3. Individual Conditional Expectation (ICE) Plots

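Plot one curve per instance, showing how that instance's prediction changes as a single feature varies. Averaging the curves gives the partial dependence plot.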

Best For:

- Complex datasets with varying patterns across subgroups

- Comparing individual behaviors
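
A small sketch shows why per-instance curves matter. The data below is synthetic and deliberately built so that feature 0 pushes predictions up for one subgroup and down for the other; the helper `ice_curves` is a hypothetical name, not a library function.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

def ice_curves(model, X, feature, grid):
    """Return an (n_samples, n_grid) array: one prediction curve per row."""
    curves = np.empty((X.shape[0], len(grid)))
    for j, value in enumerate(grid):
        X_mod = X.copy()
        X_mod[:, feature] = value
        curves[:, j] = model.predict(X_mod)
    return curves

rng = np.random.default_rng(0)
X = rng.uniform(-1, 1, size=(800, 2))
# Feature 1 defines two subgroups with opposite responses to feature 0.
group = np.where(X[:, 1] > 0, 1.0, -1.0)
y = group * X[:, 0] + rng.normal(scale=0.05, size=800)

model = RandomForestRegressor(n_estimators=100, random_state=0).fit(X, y)

grid = np.linspace(-1, 1, 5)
curves = ice_curves(model, X, feature=0, grid=grid)

slopes = curves[:, -1] - curves[:, 0]   # rise of each individual curve
pdp = curves.mean(axis=0)               # the PDP is the average ICE curve

print("mean rise, subgroup x1 > 0:", slopes[X[:, 1] > 0].mean().round(2))
print("mean rise, subgroup x1 < 0:", slopes[X[:, 1] < 0].mean().round(2))
print("rise of the averaged (PDP) curve:", (pdp[-1] - pdp[0]).round(2))
```

In this setup the averaged curve is nearly flat even though almost every individual curve has a clear slope, which is exactly the pattern a PDP alone would hide.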

4. SHAP (SHapley Additive Explanations)

A game-theory-based method that uses Shapley values to split the difference between one prediction and the average prediction fairly among the features.

Advantages:

- Supports both global and local explanations

- Model-agnostic in its kernel-based form, with faster dedicated algorithms for tree ensembles and neural networks

Use Case: High-stakes domains like credit scoring, healthcare, fraud detection
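
The idea behind Shapley values can be sketched without any library by enumerating every feature coalition. This is not how the `shap` package computes its values in practice (exact enumeration needs on the order of 2^n model evaluations); the function `shapley_values`, the toy `predict` function, and the background data are all assumptions for illustration.

```python
import itertools
import math
import numpy as np

def shapley_values(predict, x, background):
    """Exact Shapley values for one row `x`, enumerating all coalitions."""
    n = x.shape[0]

    def value(subset):
        # Features in `subset` take x's values; the rest keep background values.
        data = background.copy()
        idx = np.array(subset, dtype=int)
        data[:, idx] = x[idx]
        return predict(data).mean()

    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = (math.factorial(size) * math.factorial(n - size - 1)
                      / math.factorial(n))
            for subset in itertools.combinations(others, size):
                phi[i] += weight * (value(subset + (i,)) - value(subset))
    return phi

def predict(data):
    # Toy black box: one additive term plus an interaction between x1 and x2.
    return 2.0 * data[:, 0] + data[:, 1] * data[:, 2]

rng = np.random.default_rng(0)
background = rng.normal(size=(200, 3))
x = np.array([1.0, 2.0, 3.0])

phi = shapley_values(predict, x, background)
baseline = predict(background).mean()
print("attributions:", np.round(phi, 3))
print("baseline + sum of attributions:", round(baseline + phi.sum(), 3))
```

The attributions add up to the prediction for `x` minus the baseline, which is the property that makes SHAP explanations easy to present as a breakdown of a single decision.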

5. LIME (Local Interpretable Model-agnostic Explanations)

Fits a simple interpretable model, usually a sparse linear one, to the black box's outputs on perturbed samples around one specific prediction.

Strengths:

- Simple to apply

- Works with any black-box model

Drawbacks:

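- Explanations can vary across runs because they are fitted on randomly sampled perturbations

- Generally less stable than SHAP, and sensitive to how the local neighborhood is defined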
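
The core of the approach fits in a few lines of NumPy. The sketch below is a simplified stand-in for the `lime` package, not its actual implementation: it perturbs with Gaussian noise, weights samples with an exponential kernel, and fits a weighted linear model. The function name, the `scale` and `kernel_width` defaults, and the toy black box are assumptions.

```python
import numpy as np

def local_linear_explanation(predict, x, n_samples=2000, scale=0.5,
                             kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear model around `x`; return its slopes."""
    rng = np.random.default_rng(seed)
    Z = x + rng.normal(scale=scale, size=(n_samples, x.shape[0]))  # perturbations
    preds = predict(Z)

    # Samples closer to x get more weight in the local fit.
    dist = np.linalg.norm(Z - x, axis=1)
    weights = np.exp(-(dist ** 2) / kernel_width ** 2)

    # Weighted least squares via rescaling rows by sqrt(weight).
    A = np.hstack([np.ones((n_samples, 1)), Z - x])
    sw = np.sqrt(weights)[:, None]
    coef, *_ = np.linalg.lstsq(A * sw, preds * sw[:, 0], rcond=None)
    return coef[1:]  # drop the intercept

def black_box(Z):
    # Toy model: non-linear in feature 0, linear in feature 1.
    return Z[:, 0] ** 2 + 3.0 * Z[:, 1]

x = np.array([2.0, 0.0])
coef_a = local_linear_explanation(black_box, x, seed=0)
coef_b = local_linear_explanation(black_box, x, seed=1)
print("seed 0:", np.round(coef_a, 3))
print("seed 1:", np.round(coef_b, 3))
```

Near `x`, the toy model's true slopes are 4 and 3, and both runs should land close to them. The small gap between the two seeds is the run-to-run variation noted in the drawbacks above.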

6. Surrogate Models

Train a simpler model (e.g., decision tree) to mimic the complex one.

Best When:

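- A human-readable approximation of the whole model is needed, and the surrogate reproduces the original model's predictions closely enough to be trusted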

- Explaining model behavior to non-technical stakeholders
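
A global surrogate can be sketched with scikit-learn as follows. The gradient boosting model stands in for any black box, the data is synthetic, and the depth limit of 3 is an arbitrary choice for readability. Note that the surrogate is trained on the black box's predictions, not on the original labels.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import r2_score
from sklearn.tree import DecisionTreeRegressor, export_text

rng = np.random.default_rng(0)
X = rng.uniform(-2, 2, size=(1000, 3))
# Synthetic target: a step in x0 plus a linear effect of x1; x2 is noise.
y = np.where(X[:, 0] > 0, 2.0, -2.0) + X[:, 1] + rng.normal(scale=0.1, size=1000)

black_box = GradientBoostingRegressor(random_state=0).fit(X, y)
bb_pred = black_box.predict(X)

# Train a shallow tree to mimic the black box's outputs.
surrogate = DecisionTreeRegressor(max_depth=3, random_state=0).fit(X, bb_pred)

# Fidelity: how much of the black box's behavior the surrogate reproduces.
fidelity = r2_score(bb_pred, surrogate.predict(X))
print(f"fidelity (R^2 against black-box predictions): {fidelity:.3f}")
print(export_text(surrogate, feature_names=["x0", "x1", "x2"]))
```

Report the fidelity score alongside the printed rules. A surrogate with low fidelity explains itself, not the model it is supposed to mimic.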

Choosing the Right Technique

Match your interpretability method to your data and needs.

Questions to Ask:

- Is the decision high-stakes?

- Do I need to explain the whole model or a single prediction?

- What kind of data am I working with?

| Goal | Recommended Techniques |
| --- | --- |
| Audit a single decision | SHAP, LIME |
| Understand overall behavior | PDP, Feature Importance |
| Visualize decision rules | Surrogate Models, Trees |
| Analyze complex interactions | SHAP, ICE Plots |

Best Practices for Applying Interpretability

- Collaborate with Domain Experts: Validate explanations with people who know the field.

- Use Multiple Techniques: Combine global and local insights.

- Test Consistency: Make sure your explanations are repeatable across random seeds, data samples, and retrained models.

- Tailor to Your Audience: Use visuals and simple terms for business stakeholders.

Model Interpretability in Practice

Real-World Scenario

Use Case: A bank uses a credit-scoring AI. Customers denied loans request reasons.

Interpretability Approach:

- SHAP values highlight key factors like credit score and income

- PDPs show general income-approval trends

Result:

- Customer trust improved

- Compliance reporting simplified

Conclusion

Model interpretability techniques help transform opaque AI systems into clear, trustworthy tools. From SHAP and LIME to PDPs and surrogate models, each approach offers valuable insight into your model’s decisions.

By mastering these tools, you not only increase transparency but also make it easier to act on your models' outputs and to spot unfair behavior.

Start applying these techniques today and share this guide with others striving for responsible AI.

Pull Quotes

"Model interpretability is the bridge between machine learning accuracy and real-world trust."

"The right interpretability technique depends on both your model and your audience."

"SHAP and LIME have become industry standards for explaining complex AI decisions."

FAQs

What are model interpretability techniques?

They are methods used to explain how machine learning models make predictions, helping identify the influence of each input feature.

Why is model interpretability important?

It builds trust, ensures compliance in regulated fields, and helps improve models by revealing errors or bias.

What is the difference between SHAP and LIME?

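SHAP is based on game theory and offers consistent, mathematically grounded explanations whose attributions sum to the prediction's deviation from a baseline. LIME fits a simple local approximation, which is easier to set up but can give different results from run to run.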

Can neural networks be interpreted?

Yes. Post-hoc techniques such as SHAP, LIME, ICE plots, and surrogate models can approximate and explain a network's decision logic, even though they do not expose it exactly.

How do I choose the best interpretability technique?

Choose based on your model type, data, and whether you need global or local explanations.
