Modern AI applications require massive amounts of training and test data to work. Machine learning algorithms identify patterns in that data, and those patterns are the foundation of their capabilities. An AI model performs only as well as the data feeding it. Incomplete, erroneous, or inaccurate training data produces unreliable models that make poor decisions in production environments.

Public web data has emerged as the main source for training AI systems. Improving model performance generally requires training on larger datasets, and the quality and depth of website datasets play a major role in the performance and precision of AI algorithms. Models also need access to information that is updated frequently. Otherwise, the model is already out of step with current conditions by deployment time.

Significance of Web Scraping Services in AI Projects

Web scraping services for AI projects refer to platforms and APIs designed to extract and prepare data for machine learning model training. These services go beyond simple data collection. They handle the complexities of gathering, cleaning, and structuring information in formats that AI systems can process. AI-focused services deliver datasets ready for immediate model consumption, which sets them apart from simple scraping operations that just pull HTML content from pages.

Traditional web scraping relies on hard-coded rules and fixed patterns. A scraper targets specific HTML tags or CSS classes to extract information. These scrapers break when a website changes its layout. The code then requires manual updates that create maintenance headaches for development teams. This rigidity makes traditional approaches unsuitable for AI projects that need continuous and reliable data flows.
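The brittleness described above can be illustrated with a minimal sketch. It uses only Python's standard `html.parser` module; the `price` class name, the two sample layouts, and the helper names are hypothetical and chosen purely for illustration.

```python
from html.parser import HTMLParser


class ClassTextExtractor(HTMLParser):
    """Collects the text of elements whose class attribute contains a given name.

    Simplification: nested elements with the same tag name are not handled.
    """

    def __init__(self, target_class):
        super().__init__()
        self.target_class = target_class
        self._capturing_tag = None  # tag name of the element being captured
        self.matches = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self.target_class in classes:
            self._capturing_tag = tag
            self.matches.append("")

    def handle_data(self, data):
        if self._capturing_tag is not None:
            self.matches[-1] += data

    def handle_endtag(self, tag):
        if tag == self._capturing_tag:
            self._capturing_tag = None


def extract_price(html, css_class="price"):
    """Hard-coded rule: the price lives in an element with this exact class."""
    parser = ClassTextExtractor(css_class)
    parser.feed(html)
    return parser.matches[0].strip() if parser.matches else None


# Hypothetical page before and after a redesign that renames the class.
OLD_LAYOUT = '<div><span class="price">$19.99</span></div>'
NEW_LAYOUT = '<div><span class="product-cost">$19.99</span></div>'

print(extract_price(OLD_LAYOUT))  # the rule matches
print(extract_price(NEW_LAYOUT))  # the rule silently finds nothing
```

The price is still on the page after the redesign, but the rule no longer finds it, and nothing signals the failure unless the pipeline checks for empty results.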

AI-powered web scraping operates differently. AI agents in web data collection are reshaping how enterprises approach this entire process:

- Scraping service providers incorporate machine learning algorithms that understand webpage context rather than following rigid instructions.

- The technology recognizes content patterns visually, much as humans do when reading a page.

- An AI scraper identifies a product price based on its relationship to surrounding elements, not because a developer specified a particular HTML class name.

This contextual understanding provides resilience. AI web data scrapers adapt without manual intervention when websites update their designs. They handle JavaScript-heavy sites and dynamic content that traditional methods struggle with. The adaptability proves critical for AI training pipelines that cannot afford data collection disruptions.
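As a rough illustration of why content-based extraction tolerates layout changes, the sketch below looks for something that reads like a price in the visible text instead of relying on a class name. This is a plain regular-expression heuristic standing in for the idea, not a machine learning model and not any provider's actual method; the pattern, sample layouts, and function names are assumptions.

```python
import re
from html.parser import HTMLParser


class TextCollector(HTMLParser):
    """Gathers the text nodes of a page and ignores all markup."""

    def __init__(self):
        super().__init__()
        self.chunks = []

    def handle_data(self, data):
        if data.strip():
            self.chunks.append(data.strip())


# A currency symbol followed by an amount, e.g. $19.99 or $1,299.00.
PRICE_PATTERN = re.compile(r"[$€£]\s?\d+(?:,\d{3})*(?:\.\d{2})?")


def find_price_by_content(html):
    """Return the first price-like string in the page text, or None."""
    collector = TextCollector()
    collector.feed(html)
    match = PRICE_PATTERN.search(" ".join(collector.chunks))
    return match.group(0) if match else None


# Hypothetical page before and after a redesign that renames the class.
OLD_LAYOUT = '<div><span class="price">$19.99</span></div>'
NEW_LAYOUT = '<div><span class="product-cost">$19.99</span></div>'

print(find_price_by_content(OLD_LAYOUT))
print(find_price_by_content(NEW_LAYOUT))
```

Both layouts yield the same value because the extraction keys on what the content looks like. A real AI scraper would weigh far more context, such as position, neighboring labels, and rendered appearance, and would need to tell apart several prices on one page.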

Value of Working with Web Scraping Services Providers

Building internal web scraping capabilities diverts engineering resources from core product development. Most enterprises use web data to inform decisions rather than as their main offering. Investing heavily in scraping infrastructure pulls focus away from what drives revenue. A partnership with the best web scraping services allows organizations to get the necessary data without the distraction of maintaining complex extraction systems.

The cost structure favors outsourcing for most use cases. In-house teams need data scientists skilled in machine learning and experienced engineers familiar with anti-bot technologies. You also need legal experts versed in data compliance regulations. Salaries, infrastructure costs, and ongoing tool maintenance add up fast. Top web scraping services providers spread these expenses across multiple clients and deliver expertise at a fraction of the internal investment.

Top Five Web Scraping Companies That Enterprises Can Hire

Choosing from a list of top web scraping services providers means you need to understand what separates industry leaders from simple services. The companies below represent the best web scraping services available for enterprises building AI solutions. Each brings distinct advantages to data extraction projects.

1. Damco Solutions

Damco Solutions is ranked as the top web scraping company for enterprises that prioritize data precision and compliance. With around three decades of experience and a global workforce, Damco extracts and delivers consistent datasets for clients with a high degree of precision. Their dedicated workforce ensures uninterrupted data flows across time zones. Damco's focus on ethical scraping practices and compliance with international data protection regulations makes them well suited to enterprises operating under strict compliance conditions.

2. Bright Data

Bright Data operates the world's largest proxy network and serves over twenty thousand customers around the world. The company's infrastructure provides access to hundreds of millions of IP addresses each month in almost every country. Experts at Bright Data ensure legitimate data collection. The company's patent portfolio demonstrates technological excellence in the domain.

3. Oxylabs

Oxylabs serves fifteen thousand clients with a residential proxy network spanning one hundred seventy-five million IPs. The OxyCopilot feature is an AI assistant that generates scraping code from natural language prompts and reduces development time. ISO and SOC certifications verify the company's security standards for enterprise deployments.

4. Zyte

Zyte brings fifteen years of web data expertise and maintains Scrapy, the popular open-source scraping framework. The company's patented AI technology and legal compliance leadership position them among the best web scraping companies for organizations concerned about regulatory risks. Managed data services eliminate infrastructure maintenance burdens.

5. Apify

Apify provides a marketplace with thousands of pre-built scraping tools called Actors. The platform offers no vendor lock-in since customers own the code. SOC compliance and GDPR adherence meet enterprise security requirements, and the platform supports projects from the European Commission, which demonstrates reliability at scale.

How to Evaluate and Choose the Best Web Scraping Companies

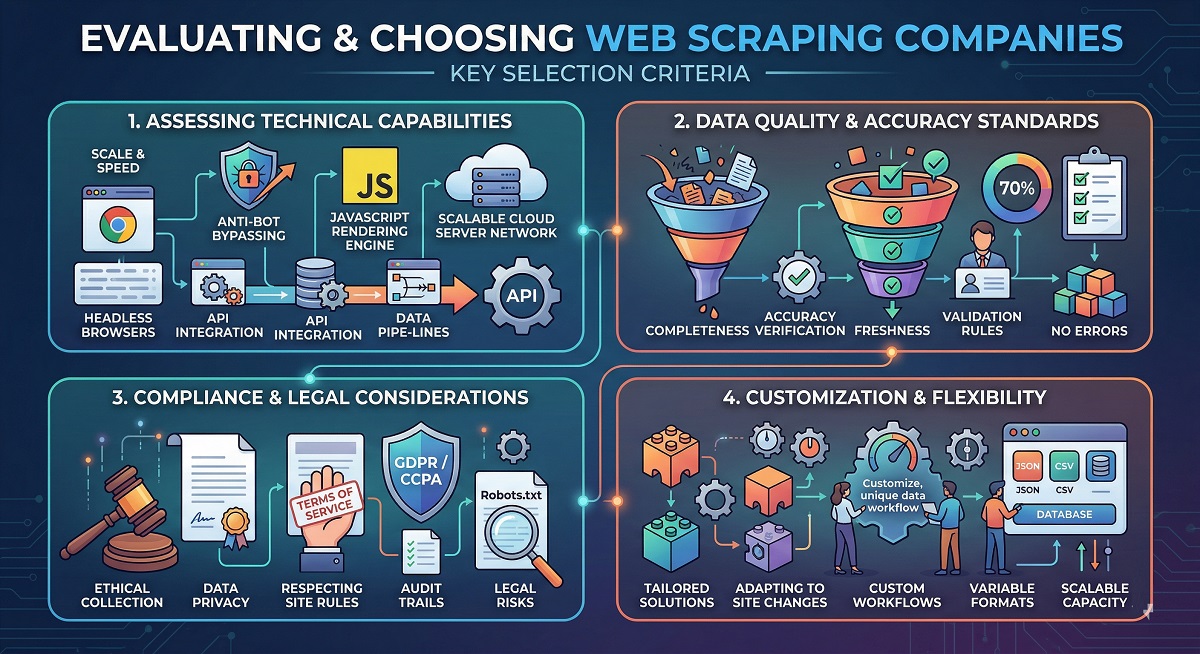

Assessing vendors requires you to look at multiple dimensions beyond basic capabilities. The strongest providers balance technical performance with governance, support structures, and operational maturity.

I. Assessing Technical Capabilities

Technical reliability separates functional services from dependable partners. Assess how providers handle JavaScript-heavy sites and bot-protected pages. Ask about success rate definitions and reporting transparency. Determine who owns responsibility when target websites change their structure.

Operating models vary a lot. Some scraping service providers offer self-service tools while others provide managed services. A hybrid approach lets enterprises combine automation with expert support without switching vendors. Enterprises should hire scraping firms that handle monitoring, quality assurance, and ongoing maintenance fixes.

II. Data Quality and Accuracy Standards

Request details about validation processes and quality monitoring systems. The best web scraping services implement multiple QA layers that combine automated validation with manual review. Understand how providers detect anomalies, handle completeness checking, and manage data type validation. Stakeholders should evaluate whether a provider has robust mechanisms for checking quality and monitoring data precision over time.
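To make these three checks concrete, here is a minimal sketch of what an automated validation layer might look like. The schema, the field names, and the median-based anomaly threshold are all assumptions for illustration; a provider's actual QA pipeline will differ.

```python
from statistics import median

# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"url": str, "title": str, "price": float}


def validate_record(record):
    """Completeness and type checks for one scraped record."""
    problems = []
    for name, expected_type in REQUIRED_FIELDS.items():
        value = record.get(name)
        if value is None or value == "":
            problems.append(f"missing {name}")
        elif not isinstance(value, expected_type):
            problems.append(f"{name} should be {expected_type.__name__}")
    return problems


def flag_price_anomalies(records, max_ratio=10.0):
    """Flag records whose price differs from the batch median by more than max_ratio."""
    numeric = [r for r in records if isinstance(r.get("price"), float)]
    positive = [r["price"] for r in numeric if r["price"] > 0]
    if not positive:
        return []
    mid = median(positive)
    return [
        r for r in numeric
        if r["price"] > mid * max_ratio or r["price"] < mid / max_ratio
    ]


# Made-up sample batch with one clean record and three problematic ones.
records = [
    {"url": "https://example.com/a", "title": "Widget A", "price": 19.99},
    {"url": "https://example.com/b", "title": "", "price": 21.50},
    {"url": "https://example.com/c", "title": "Widget C", "price": "24.00"},
    {"url": "https://example.com/d", "title": "Widget D", "price": 1999.0},
]

for record in records:
    print(record["url"], validate_record(record))
print("anomalies:", [r["url"] for r in flag_price_anomalies(records)])
```

Checks like these catch structural problems automatically. Records that pass but still look suspicious are the ones a manual review layer would typically sample.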

III. Compliance and Legal Considerations

Legal and ethical frameworks should be critical considerations for enterprises. Verify whether providers document their data collection principles and participate in industry standards. Enterprise-grade compliance in web scraping goes well beyond simply checking a vendor's certifications. Check their alignment with data standards and industry regulations. Understand how the top web scraping company candidates respect robots.txt files and website terms of service. Confirm that contracts define legal responsibilities.
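Respecting robots.txt is one of the few compliance points that can be checked in code. The sketch below uses Python's standard `urllib.robotparser` module against an inline sample file so that it runs without network access; the sample rules, the `example-research-bot` user agent, and the URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content. A live crawler would fetch the real file
# with RobotFileParser.set_url(...) followed by read().
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

USER_AGENT = "example-research-bot"  # assumed crawler name


def build_parser(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS_TXT)

# Ask before each request whether the path is allowed for this user agent.
allowed = parser.can_fetch(USER_AGENT, "https://example.com/products/1")
blocked = parser.can_fetch(USER_AGENT, "https://example.com/private/data")

# Seconds to wait between requests, or None if the file does not say.
delay = parser.crawl_delay(USER_AGENT)

print(allowed, blocked, delay)
```

Terms of service cannot be verified this way. They require legal review, which is why the contract language matters as much as the crawler's behavior.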

IV. Customization and Flexibility

Delivery capabilities determine integration ease. Assess supported formats, scheduling options, and API designs for production environments. Think about how data fits into analytics, business intelligence, or machine learning workflows.

Scalability matters as requirements evolve. The best web scraping companies provide flexible solutions that grow with business needs without forcing platform migrations.

Final Words

The performance of AI models depends on the data feeding them. For most enterprises, collaborating with professional scraping partners delivers better results than building complex scraping infrastructure in-house. Enterprises should review the technical capabilities and compliance standards of scraping partners before engaging them. The right web scraping partner becomes an extension of internal data departments and ensures AI projects receive the structured data they require for effective operations.
