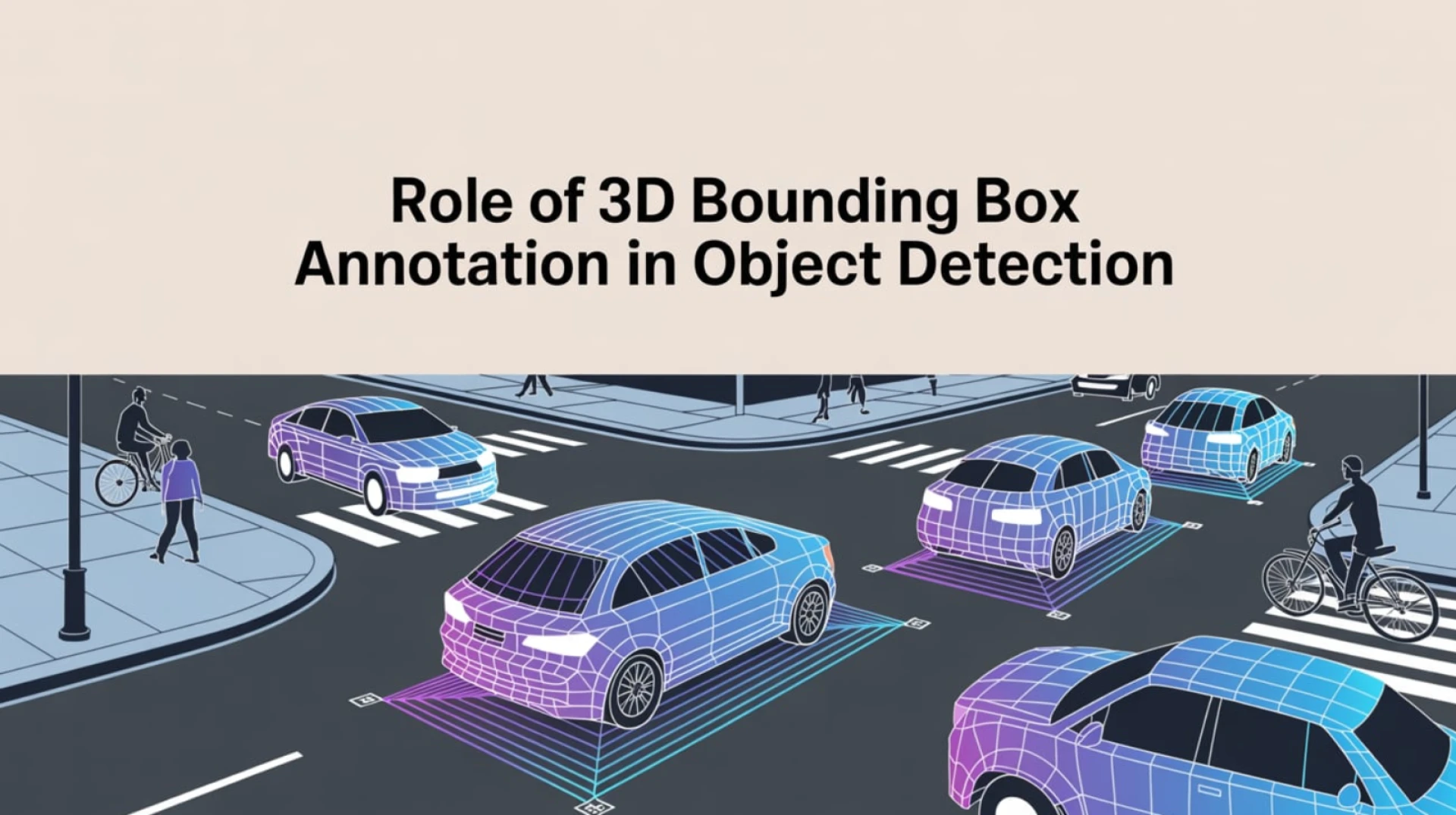

How can autonomous vehicles accurately detect objects in real-time without compromising safety or efficiency?

The answer lies in 3D bounding box annotation, a technique that enables AI models to understand the precise location, size, and orientation of objects in 3D space. Raw sensor data from LiDAR, cameras, and radar serves as the foundation, but this unstructured data must be annotated before it can be used to train an AI model.

This blog explores how 3D bounding box annotation enhances object detection in autonomous vehicles, highlighting its role in object localization, depth perception, and tracking, the best practices for data annotation, and how outsourcing image annotation services helps. Let’s get started!

Ground Truth Labeling: The Bedrock of AI Learning

AI models for autonomous vehicles require labeled data, known as ‘ground truth’, to learn from observations and detect relevant objects. Raw data from LiDAR, radar, cameras, and ultrasonic sensors, together with vehicle control signals, provides a comprehensive view of the vehicle and its environment. However, this unstructured data must be labeled to train machine learning models that can recognize and respond to objects in real time.

Use Case: Data captured by cameras and LiDAR may include pedestrians, other vehicles, and road signs. Annotators label these objects by drawing bounding boxes around them and classifying them as "pedestrian," "car," or "stop sign." This labeled data helps the AI model learn to detect these objects in real-world conditions, enabling the vehicle to respond appropriately, such as slowing down when approaching a stop sign or yielding to pedestrians.
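To make the idea of a label concrete, here is a minimal sketch of what a single 3D bounding box annotation might look like in code. The `Box3D` class, its field names, and the choice of a yaw-only rotation are assumptions for illustration; real annotation tools and datasets each define their own schema.

```python
import math
from dataclasses import dataclass


@dataclass
class Box3D:
    """One hypothetical ground-truth label: a class name plus a 3D box."""
    label: str      # e.g. "pedestrian", "car", "stop sign"
    cx: float       # box center in metres
    cy: float
    cz: float
    length: float   # extent along the box's own x axis
    width: float    # extent along the box's own y axis
    height: float   # extent along the vertical (z) axis
    yaw: float      # rotation about the vertical axis, in radians

    def corners(self):
        """Return the 8 corner points as (x, y, z) tuples."""
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        pts = []
        for dx in (-self.length / 2, self.length / 2):
            for dy in (-self.width / 2, self.width / 2):
                for dz in (-self.height / 2, self.height / 2):
                    # Rotate the local offset by yaw, then shift to the center.
                    x = self.cx + c * dx - s * dy
                    y = self.cy + s * dx + c * dy
                    pts.append((x, y, self.cz + dz))
        return pts


car = Box3D("car", cx=10.0, cy=2.0, cz=0.8,
            length=4.5, width=1.8, height=1.6, yaw=0.0)
for corner in car.corners():
    print(corner)
```

The center, dimensions, and yaw together capture the location, size, and orientation that the model is expected to learn.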

Ethical Considerations: Data Collection for Object Detection in Autonomous Driving

1. Data Privacy and Consent

Autonomous vehicle sensors, such as cameras, LiDAR, and radar, may unintentionally capture personal information like faces, license plates, or private activities. While the primary goal is to enhance vehicle safety, this raises significant privacy concerns.

Ethical Consideration: Data collected by these sensors should be anonymized, and privacy regulations such as the General Data Protection Regulation (GDPR) and California Consumer Privacy Act (CCPA) must be adhered to.

2. Bias in Data Collection

If the data collected by sensors primarily reflects certain environments, demographics, or weather conditions (e.g., sunny urban streets or lighter skin tones), the resulting dataset may fail to represent other, equally important scenarios, leading to biased AI models.

Ethical Consideration: Datasets should be representative of diverse environments, demographics, and contextual factors to ensure that the collected data can support AI models that perform accurately across various real-world conditions.
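One simple way to spot gaps in representation is to count how often each condition appears in the dataset. The sketch below assumes each frame carries metadata tags such as `weather`; the function name `underrepresented`, the tag names, and the 10% threshold are illustrative assumptions, not an established standard.

```python
from collections import Counter


def underrepresented(samples, key, expected, min_share=0.10):
    """Return the expected values of `key` whose share falls below `min_share`.

    Values that never appear in the data count as a share of zero.
    """
    total = len(samples)
    if total == 0:
        return sorted(expected)
    counts = Counter(s[key] for s in samples)
    return sorted(v for v in expected if counts[v] / total < min_share)


# Hypothetical frame metadata: 80 sunny, 15 rainy, 5 snowy frames.
frames = (
    [{"weather": "sunny"}] * 80
    + [{"weather": "rain"}] * 15
    + [{"weather": "snow"}] * 5
)

print(underrepresented(frames, "weather", ["sunny", "rain", "snow", "fog"]))
# → ['fog', 'snow']
```

A check like this only covers the factors that are tagged, so the metadata schema itself needs to reflect the environments and demographics the model is expected to handle.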

3. Transparency and Accountability in Data Collection

The data collection process must be transparent so that the authenticity and relevance of the data can be verified and bias can be mitigated.

Ethical Consideration: Maintain clear documentation that includes the data’s origin, characteristics, collection methods, and any cleaning or labeling procedures. Ensure accountability by implementing traceability mechanisms for data decisions, such as maintaining logs of data sources, selection criteria, and dataset changes. This ensures that decisions on data collection can be traced and that any issues, such as bias or privacy concerns, can be identified and addressed promptly.
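As a rough illustration of a traceability mechanism, the sketch below records each data decision as one JSON line in an append-only log. The field names, the `log_entry` and `append_log` helpers, and the example values are all hypothetical; a production setup would more likely rely on a dataset versioning tool.

```python
import json
from datetime import datetime, timezone


def log_entry(action, source, criteria, author):
    """Build one traceability record for a data decision."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,        # e.g. "add", "remove", "relabel"
        "source": source,        # where the data came from
        "criteria": criteria,    # why it was selected or changed
        "author": author,        # who made the decision
    }


def append_log(path, entry):
    """Append the record as a single JSON line so history is never rewritten."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")


entry = log_entry(
    action="add",
    source="fleet-drive-batch-A",          # hypothetical batch name
    criteria="night-time rural scenes",
    author="data-team",
)
print(json.dumps(entry, indent=2))
```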

The Role of 3D Bounding Box Annotation in Object Detection

Source: ResearchGate

- Object Localization: Determines an object's position within the environment, which is crucial for navigation and collision avoidance. For instance, when approaching an intersection, the system must accurately localize pedestrians crossing the street to ensure a safe stop.

- Object Classification: Enables AI models to distinguish among objects such as pedestrians, vehicles, and road signs, improving decision-making. For example, when the vehicle detects an object, it can classify it as a pedestrian at a crosswalk or a stationary object at the side of the road, which determines whether the vehicle slows down or continues at its current speed.

- Depth Perception: Provides critical depth data to estimate distance to nearby objects, enabling real-time adjustments to vehicle speed, steering, and braking. For example, the vehicle uses depth perception to maintain safe distances from vehicles ahead by dynamically adjusting speed based on their depth and proximity.

- Tracking: Enhances the vehicle’s ability to track and anticipate the movement of dynamic objects (e.g., pedestrians) and to recognize and respond to static objects (e.g., road signs). For instance, as a vehicle approaches a busy intersection, it predicts pedestrian movement, adjusts speed accordingly, and recognizes static road signs such as “Yield” to make accurate decisions.
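The depth and tracking roles above can be sketched with a few lines of arithmetic on box centers. This example assumes the ego vehicle sits at the origin and that objects move at constant velocity between frames, which is a deliberate simplification; the function names are illustrative, and real trackers typically use filtering techniques rather than a two-frame difference.

```python
import math


def distance_to(center, ego=(0.0, 0.0, 0.0)):
    """Euclidean distance in metres from the ego vehicle to a box center."""
    return math.dist(center, ego)


def estimate_velocity(prev_center, curr_center, dt):
    """Per-axis velocity (m/s) from two box centers observed dt seconds apart."""
    return tuple((c - p) / dt for p, c in zip(prev_center, curr_center))


def predict_center(center, velocity, horizon):
    """Extrapolate a box center `horizon` seconds ahead at constant velocity."""
    return tuple(c + v * horizon for c, v in zip(center, velocity))


# A pedestrian box center in two consecutive annotated frames, 0.5 s apart.
prev, curr = (12.0, 3.0, 0.0), (12.0, 2.5, 0.0)

velocity = estimate_velocity(prev, curr, dt=0.5)
print(velocity)                               # → (0.0, -1.0, 0.0)
print(predict_center(curr, velocity, 2.0))    # → (12.0, 0.5, 0.0)
print(round(distance_to(curr), 2))
```

Here the pedestrian is moving toward the vehicle's lane at 1 m/s, which is the kind of signal a planner could use to start slowing down early.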

Best Practices for 3D Bounding Box Annotation in Object Detection

1. Establish Clear and Detailed Annotation Guidelines

Define comprehensive, standardized guidelines for annotators to ensure uniformity across datasets. This consistency is crucial for training models that generalize well across various scenarios and for preventing discrepancies in the labeled data.
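Some guideline rules can be encoded as automated checks that run on every submitted label. The sketch below assumes the guidelines specify plausible size ranges per class; the `GUIDELINES` table and its numbers are invented for illustration and would need to come from the project's actual annotation specification.

```python
# Hypothetical per-class size limits in metres: (minimum, maximum).
GUIDELINES = {
    "pedestrian": {"length": (0.2, 1.5), "width": (0.2, 1.5), "height": (0.5, 2.3)},
    "car": {"length": (2.5, 6.5), "width": (1.3, 2.6), "height": (1.0, 2.5)},
}


def check_label(label, dims):
    """Return a list of guideline violations for one annotated box."""
    rules = GUIDELINES.get(label)
    if rules is None:
        return [f"unknown class: {label}"]
    problems = []
    for name, (lo, hi) in rules.items():
        value = dims[name]
        if not lo <= value <= hi:
            problems.append(f"{name}={value} outside [{lo}, {hi}]")
    return problems


print(check_label("car", {"length": 4.5, "width": 1.8, "height": 1.6}))
# → []
print(check_label("car", {"length": 12.0, "width": 1.8, "height": 1.6}))
# → ['length=12.0 outside [2.5, 6.5]']
```

Checks like this catch obvious slips, such as a box accidentally stretched across two vehicles, before they reach a human reviewer.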

2. Implement Robust Quality Control Measures

Use consensus scoring and metric-based evaluation to assess the quality of annotations. Regularly audit annotations to catch errors and ensure accuracy. Incorporate inter-annotator agreement (IAA) to measure consistency between different annotators and ensure reliable, standardized labeling.
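One common metric for comparing two annotators' boxes is intersection over union (IoU). The sketch below is a simplified version that treats boxes as axis-aligned and ignores yaw, so it is only an approximation for rotated boxes; the tuple layout and the 0.7 agreement threshold are assumptions for illustration.

```python
def overlap_1d(a_min, a_max, b_min, b_max):
    """Length of the overlap between two intervals (0 if they do not touch)."""
    return max(0.0, min(a_max, b_max) - max(a_min, b_min))


def iou_3d(a, b):
    """IoU of two axis-aligned boxes given as (cx, cy, cz, length, width, height)."""
    inter = 1.0
    for i in range(3):
        a_min, a_max = a[i] - a[i + 3] / 2, a[i] + a[i + 3] / 2
        b_min, b_max = b[i] - b[i + 3] / 2, b[i] + b[i + 3] / 2
        inter *= overlap_1d(a_min, a_max, b_min, b_max)
    union = a[3] * a[4] * a[5] + b[3] * b[4] * b[5] - inter
    return inter / union if union > 0 else 0.0


def agreement_rate(pairs, threshold=0.7):
    """Share of box pairs (annotator A, annotator B) with IoU >= threshold."""
    if not pairs:
        return 0.0
    return sum(iou_3d(a, b) >= threshold for a, b in pairs) / len(pairs)


annotator_a = (10.0, 2.0, 0.8, 4.5, 1.8, 1.6)
annotator_b = (10.2, 2.0, 0.8, 4.5, 1.8, 1.6)   # same car, shifted 20 cm
print(round(iou_3d(annotator_a, annotator_b), 3))
```

Pairs that fall below the threshold are good candidates for an audit or a guideline clarification.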

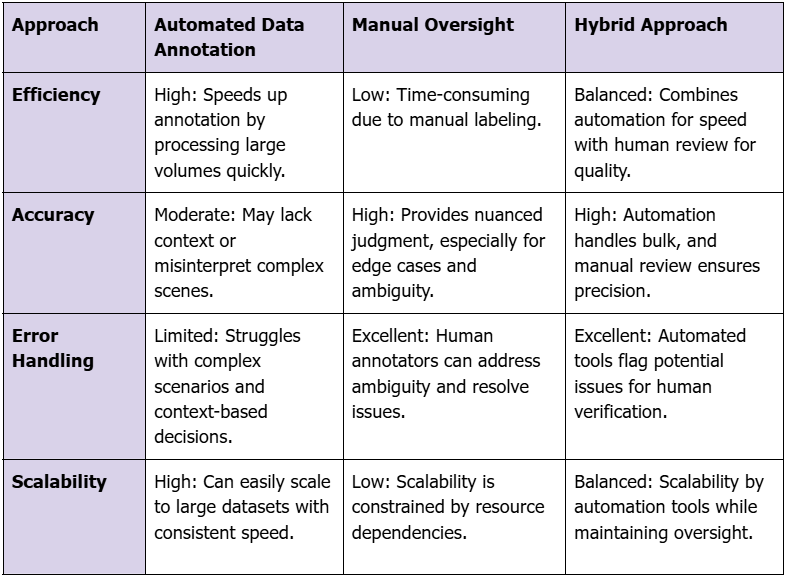

3. Leverage a Hybrid Annotation Approach

Automated tools can accelerate annotation, but manual oversight is essential to ensure contextual judgment, accuracy, and handling of edge cases. Combining the efficiency of automation with the accuracy of human review helps identify and correct errors, ensuring the final dataset is precise and reliable.
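A hybrid pipeline can be as simple as routing machine-generated pre-labels by confidence. The sketch below assumes the automated tool attaches a `score` between 0 and 1 to each pre-label; the `route` function, the record fields, and the 0.9 cut-off are hypothetical and would need tuning against audited data.

```python
def route(pre_labels, auto_accept=0.9):
    """Split pre-labels into auto-accepted ones and ones needing human review."""
    accepted, review = [], []
    for item in pre_labels:
        if item["score"] >= auto_accept:
            accepted.append(item)
        else:
            review.append(item)
    return accepted, review


# Hypothetical output of an automated pre-labeling tool.
pre_labels = [
    {"id": 1, "label": "car", "score": 0.97},
    {"id": 2, "label": "pedestrian", "score": 0.62},   # partly occluded
    {"id": 3, "label": "stop sign", "score": 0.91},
    {"id": 4, "label": "car", "score": 0.40},          # unusual vehicle
]

accepted, review = route(pre_labels)
print([item["id"] for item in accepted])   # → [1, 3]
print([item["id"] for item in review])     # → [2, 4]
```

In practice, a sample of the auto-accepted labels would usually still be spot-checked by a human, since a high score does not guarantee a correct box.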

4. Standardize Annotation Workflows

Define clear, repeatable workflows for annotators to ensure consistency and quality across datasets. Standardized workflows help reduce errors, maintain data integrity, and support model generalization across diverse environments.

5. Adhere to Regulatory Compliance

Ensure that the annotation process complies with industry standards and regulatory requirements, such as the General Data Protection Regulation (GDPR), the California Consumer Privacy Act (CCPA), and, where health data is involved, the Health Insurance Portability and Accountability Act (HIPAA). This includes protecting data privacy, obtaining informed consent, anonymizing personal data, and maintaining transparency in data labeling.

The Strategic Imperative: Managing 3D bounding box annotation in-house poses significant challenges. In-house teams often struggle to maintain consistent annotation quality, scale operations effectively, and fill gaps in domain-specific expertise. These obstacles lead to higher costs, longer timelines, and an increased risk of errors, ultimately hindering the effectiveness of AI models.

By outsourcing image annotation services, organizations can overcome these challenges by leveraging structured workflows, specialized expertise, and the ability to scale operations efficiently. This approach enables businesses to focus on developing robust AI/ML models while reducing resource constraints and accelerating time-to-market, ultimately improving the accuracy and performance of object detection in autonomous driving systems.
