Data integration rarely gets the attention it deserves. It sits quietly behind dashboards, reports, and applications, doing the heavy lifting that makes everything else work. When it fails, teams notice immediately. When it works, it is often taken for granted. I see data integration as the foundation that decides whether analytics and decision making move smoothly or constantly hit roadblocks.

For years, integration work followed a predictable pattern. Teams manually mapped source systems, wrote transformation logic, and fixed breaks whenever schemas changed. It was detailed, repetitive work that depended heavily on tribal knowledge. As data volumes and sources grew, this approach started showing clear limits. What once felt manageable became slow, fragile, and expensive to maintain. The pressure to deliver faster insights made those weaknesses impossible to ignore.

This is where AI in data integration starts to matter, not as a shiny concept but as a practical shift in how data flows are built and maintained. Instead of relying only on predefined rules, systems can now observe patterns, suggest mappings, and adapt when structures evolve. The goal is not to remove human control but to reduce the manual effort that drains time and focus.

Traditional data mapping is a good example of the problem. When a new source system comes in, engineers inspect fields, compare formats, and decide how each element fits into the target model. That process repeats every time something changes. Intelligent integration platforms can now analyze schemas automatically, recognize similarities, and propose mappings that would take hours to create by hand. People still review and approve the logic, but they no longer start from a blank page.
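To make the idea of proposed mappings concrete, here is a minimal sketch that scores field-name similarity with Python's standard `difflib` module. It is only an illustration, not how any particular platform works: the function names, the sample field names, and the 0.6 threshold are all assumptions, and real tools typically also look at data types and sample values.

```python
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    """Lowercase a field name and drop separators so 'Cust_ID' and 'custId' compare closely."""
    return "".join(ch for ch in name.lower() if ch.isalnum())


def suggest_mappings(source_fields, target_fields, threshold=0.6):
    """Propose the most similar target field for each source field.

    Returns {source_field: (target_field_or_None, score)}. A target of None
    means nothing cleared the (assumed) similarity threshold, so a person
    has to map that field by hand.
    """
    suggestions = {}
    for src in source_fields:
        best_target, best_score = None, 0.0
        for tgt in target_fields:
            score = SequenceMatcher(None, normalize(src), normalize(tgt)).ratio()
            if score > best_score:
                best_target, best_score = tgt, score
        if best_score < threshold:
            best_target = None
        suggestions[src] = (best_target, round(best_score, 2))
    return suggestions


# Hypothetical source and target schemas, for illustration only.
proposed = suggest_mappings(
    ["cust_id", "EmailAddr", "zzz"],
    ["customer_id", "email_address", "created_at"],
)
for source, (target, score) in proposed.items():
    print(f"{source} -> {target} (similarity {score})")
```

The output is a list of suggestions with scores, which is exactly what a reviewer needs: obvious matches can be approved quickly, and fields with no confident match are left for a person to decide.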

Another area where the shift is visible is data quality. Manual rules can catch known issues, but they struggle with edge cases and evolving data patterns. Intelligent systems learn what normal data looks like over time. They flag anomalies early and adapt as behavior changes. This leads to cleaner pipelines without endless rule tuning. It also reduces the number of late-night fixes caused by unexpected source changes.
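One simple way to picture "learning what normal looks like" is a rolling baseline with a z-score check. The sketch below is an assumption-laden illustration, not a description of any specific product: the class name, the window size, the threshold of 3 standard deviations, and the daily row counts are all made up for the example.

```python
from collections import deque
from statistics import mean, stdev


class RollingBaseline:
    """Tracks recent values of one metric and flags values far from the recent norm."""

    def __init__(self, window=50, z_threshold=3.0, min_history=5):
        self.values = deque(maxlen=window)  # old values fall out, so the baseline adapts
        self.z_threshold = z_threshold
        self.min_history = min_history

    def check(self, value):
        """Return True if value looks anomalous against the current window, then record it."""
        anomalous = False
        if len(self.values) >= self.min_history:
            mu = mean(self.values)
            sigma = stdev(self.values)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.z_threshold
            else:
                # All recent values were identical, so any deviation is notable.
                anomalous = value != mu
        self.values.append(value)
        return anomalous


# Hypothetical daily row counts from a source system; the last one is a spike.
baseline = RollingBaseline(window=10)
for count in [100, 102, 98, 101, 99, 100, 103, 500]:
    if baseline.check(count):
        print(f"Row count {count} looks unusual compared with recent loads")
```

Because the window is bounded, the notion of "normal" shifts as the data shifts, with no one rewriting a rule. This sketch records every value, including flagged ones; a stricter design might hold anomalies out of the baseline until someone confirms them.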

I also see a clear impact on pipeline maintenance. In older setups, even a small schema update could break downstream processes. Someone had to investigate, adjust mappings, and redeploy. With more intelligence built into the integration layer, systems can detect changes, assess their impact, and suggest fixes automatically. This does not remove accountability, but it does shorten recovery time and reduce operational noise.
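The detect-and-assess step can be sketched in a few lines. This is a simplified illustration under stated assumptions: schemas are represented as plain `{column: type}` dictionaries, the dependency map of pipelines to columns is maintained somewhere, and every name below (functions, columns, pipelines) is hypothetical.

```python
def diff_schema(old, new):
    """Compare two {column: type} dicts and report added, removed, and retyped columns."""
    added = sorted(set(new) - set(old))
    removed = sorted(set(old) - set(new))
    retyped = sorted(col for col in set(old) & set(new) if old[col] != new[col])
    return {"added": added, "removed": removed, "retyped": retyped}


def affected_pipelines(changes, dependencies):
    """List pipelines that read any removed or retyped column.

    dependencies maps a pipeline name to the columns it reads. Added columns
    are treated as safe here, which is itself an assumption.
    """
    risky = set(changes["removed"]) | set(changes["retyped"])
    return sorted(name for name, cols in dependencies.items() if risky & set(cols))


# Hypothetical schema versions and downstream dependencies.
old_schema = {"id": "int", "email": "string", "amount": "float"}
new_schema = {"id": "int", "email_address": "string", "amount": "decimal"}
dependencies = {
    "billing_report": ["id", "amount"],
    "crm_sync": ["id", "email"],
    "audit_log": ["id"],
}

changes = diff_schema(old_schema, new_schema)
print(changes)
print(affected_pipelines(changes, dependencies))
```

Here the rename of `email` shows up as one removed and one added column, which is the kind of case where a suggested fix still needs a person to confirm that the two columns are really the same thing.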

From a business perspective, this shift matters because integration speed directly affects decision making. When teams spend weeks preparing data, insights arrive too late to influence outcomes. Faster, more adaptive pipelines allow organizations to respond while opportunities still exist. That is one of the strongest arguments for AI for data integration in modern environments.

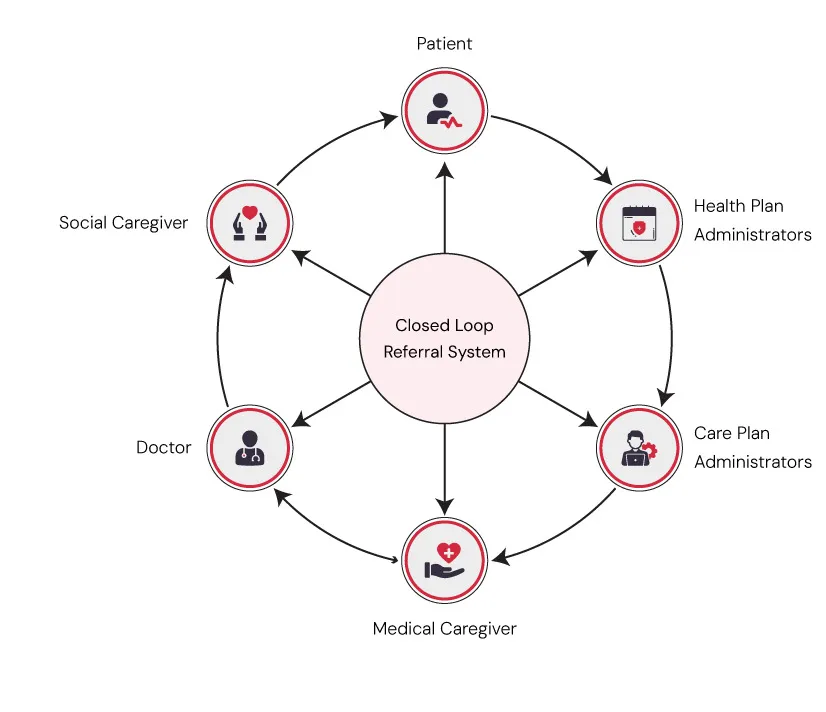

There is also a collaboration benefit that often gets overlooked. Data engineers, analysts, and business users tend to operate in silos because integration logic is hard to understand and modify. Intelligent tools can surface explanations, lineage, and impact analysis in clearer ways. This shared visibility helps teams align on definitions and trust the data they are using. It moves integration closer to the business instead of keeping it locked away in technical workflows.

That said, intelligent data flows are not about full automation. Human judgment remains critical, especially when business rules and compliance requirements are involved. The real value comes from shifting effort away from repetitive tasks toward design, validation, and improvement. Integration teams spend less time fixing breaks and more time shaping data to support meaningful outcomes.

As organizations adopt cloud platforms and lakehouse architectures, the relationship between data integration and AI becomes even more important. Data is no longer centralized in a single warehouse. It flows across services, regions, and formats. Intelligent integration acts as the connective tissue that keeps everything consistent without slowing innovation. It allows teams to scale without multiplying complexity.

I also believe this approach changes how success is measured. Instead of counting how many pipelines exist, teams start looking at stability, adaptability, and time to insight. Intelligent flows reduce friction across all three. They make integration less of a bottleneck and more of an enabler for analytics and operational systems alike.

Looking ahead, the transition from manual mapping to intelligent data flows feels less like a technology upgrade and more like a mindset change. Integration is no longer just about moving data from point A to point B. It is about understanding patterns, anticipating change, and building systems that evolve alongside the business. When data integration and AI work together in this way, organizations gain something far more valuable than efficiency. They gain resilience in how their data supports decisions every day.

Common Questions Answered

1. How does AI improve data integration workflows?

AI improves data integration by reducing manual mapping, learning data patterns, and adapting to schema changes automatically. This helps teams build stable pipelines faster while spending less time fixing breaks caused by evolving source systems.

2. Can AI help improve data quality in pipelines?

Yes, AI continuously monitors data patterns to detect anomalies, inconsistencies, and unexpected changes. Instead of relying only on fixed rules, it adapts over time, helping teams maintain cleaner and more reliable data across pipelines.

3. Is AI-driven data integration scalable for enterprises?

AI-driven data integration scales well across cloud and hybrid environments. It handles growing data volumes, multiple sources, and changing schemas while reducing operational effort, making it suitable for enterprise-level analytics platforms.

4. What are the future trends in AI and data integration?

5. Why are intelligent data flows important for modern analytics?

Intelligent data flows reduce pipeline failures, improve adaptability, and speed up access to insights. They allow teams to focus on analytics and decision making instead of constant maintenance and manual troubleshooting.
