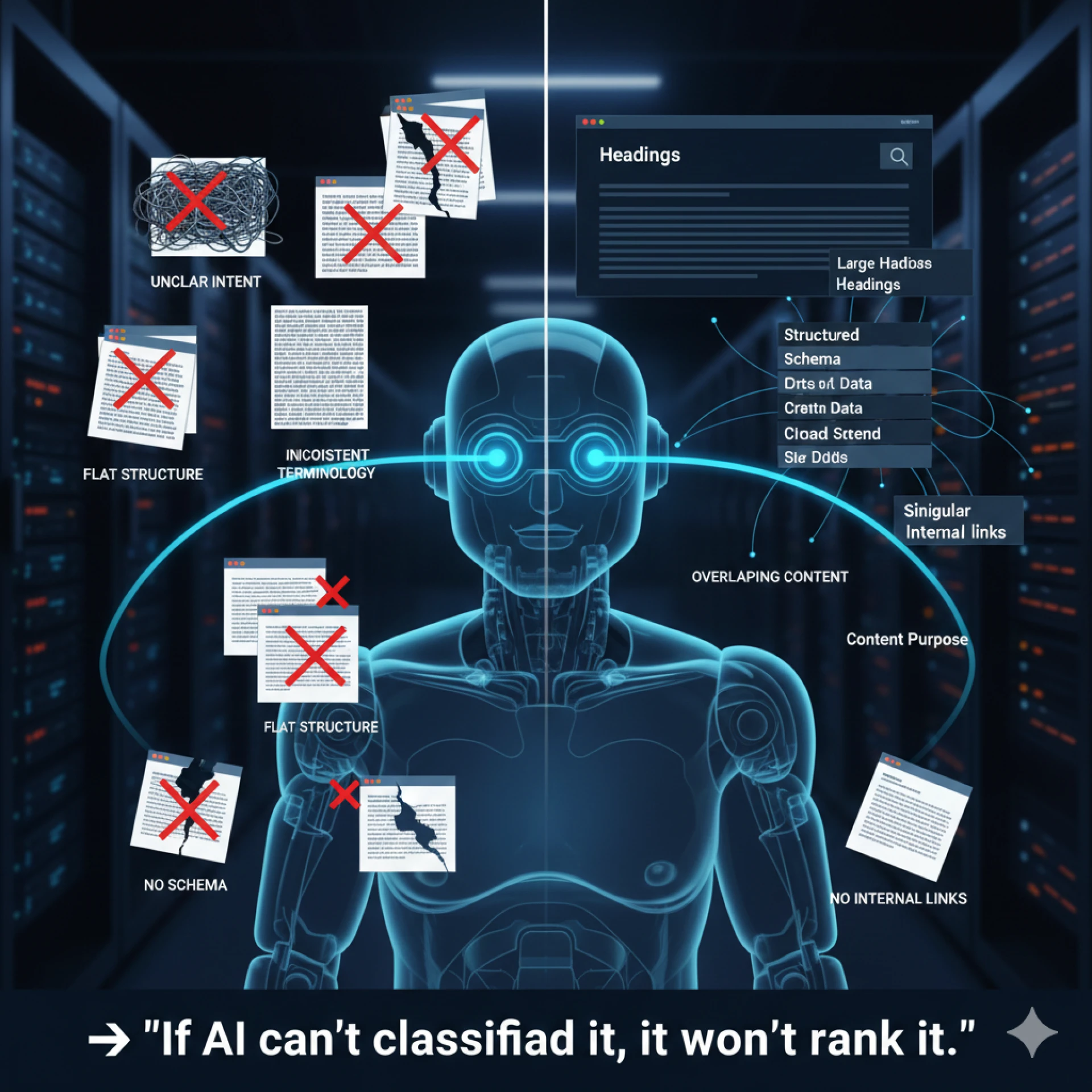

Many websites fail in AI-driven search not because of weak content, but because machines struggle to interpret it. Small structural and semantic mistakes often block visibility without teams realizing it.

The following are the most common issues that prevent search engines and AI systems from understanding, classifying, and trusting content.

- Unclear page purpose: Pages that mix multiple intents confuse AI systems. When a page tries to sell, educate, and rank for unrelated queries, machines struggle to classify its role.

- Inconsistent terminology: Using different terms for the same concept across pages breaks entity recognition. AI systems rely on consistency to build topic authority.

- Flat content structure: Walls of text without clear headings reduce interpretability. Machines need visible hierarchy to extract meaning and context.

- Overlapping or duplicate content: Similar pages that cover the same topic without distinction dilute clarity. AI systems may ignore all versions instead of choosing one.

- Missing structured data: Without schema markup (for example, schema.org types embedded as JSON-LD), machines must infer meaning. This increases the risk of misclassification and exclusion from enriched results.

- Disconnected internal linking: Pages that lack contextual links appear isolated. AI systems read internal links as signals of topic relationships and authority.
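
To make the structured data point concrete, here is a minimal sketch that builds a schema.org `Article` description as JSON-LD. The function name `build_article_jsonld` and all sample values (headline, author, date, URL) are hypothetical placeholders, not taken from any real page. The properties shown are a small illustrative subset. Which fields a given search engine or AI system actually uses is not something this sketch can confirm.

```python
import json


def build_article_jsonld(headline, author_name, date_published, url):
    """Return a <script> tag holding a schema.org Article as JSON-LD.

    All arguments are plain strings supplied by the caller; nothing is
    fetched or validated against the live page.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    body = json.dumps(data, indent=2)
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    # Hypothetical example values for illustration only.
    print(
        build_article_jsonld(
            headline="Common Machine Readability Mistakes",
            author_name="Example Author",
            date_published="2024-01-01",
            url="https://example.com/machine-readability",
        )
    )
```

The resulting tag would typically be placed in the page's HTML so that the page states its type and key facts explicitly instead of leaving them to inference.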

Machine readability breaks when structure, consistency, and intent fall apart. Fixing these issues often delivers faster gains than publishing more content.
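
One way to start finding structural problems such as flat content is a small heading audit. The sketch below uses only Python's standard `html.parser` module. The names `HeadingCollector` and `audit_headings` are hypothetical, and the rules it applies (exactly one `<h1>`, no skipped heading levels) are common conventions assumed here for illustration, not requirements stated by any search engine.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect the levels (1-6) of all <h1>..<h6> tags in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "h1".."h6" is enough.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def audit_headings(html):
    """Return a list of human-readable problems with the heading outline."""
    parser = HeadingCollector()
    parser.feed(html)
    levels = parser.levels

    if not levels:
        return ["no headings found (flat content structure)"]

    problems = []
    # Assumed convention: a page has exactly one top-level heading.
    if levels.count(1) != 1:
        problems.append(f"expected exactly one <h1>, found {levels.count(1)}")
    # Assumed convention: a heading may go at most one level deeper
    # than the heading before it.
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            problems.append(f"heading level jumps from h{prev} to h{cur}")
    return problems


if __name__ == "__main__":
    sample = "<h1>Guide</h1><p>Intro</p><h3>Details</h3>"
    for problem in audit_headings(sample):
        print(problem)
```

An empty result means the page passed these two checks only. It says nothing about terminology, intent, or linking, which need separate review.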


Content Source: https://bit.ly/46DxzQy
