If you are considering enrolling in a Data Engineering course in Hyderabad, real-time data intake will be one of the most important and challenging subjects you will study.

Businesses now require real-time insights and data-driven decisions, so the old batch-processing method is insufficient.

Success in today's world hinges on your ability to capture, process, and route streaming data quickly and consistently.

Financial analytics, fraud detection, e-commerce personalization, IoT monitoring, and other fields utilize modern data pipelines built on real-time data ingestion.

Let's explore the nature of modern data pipelines, their functioning, the leading tools at our disposal, and strategies for efficiently handling high-throughput data.

What Is Real-Time Data Ingestion?

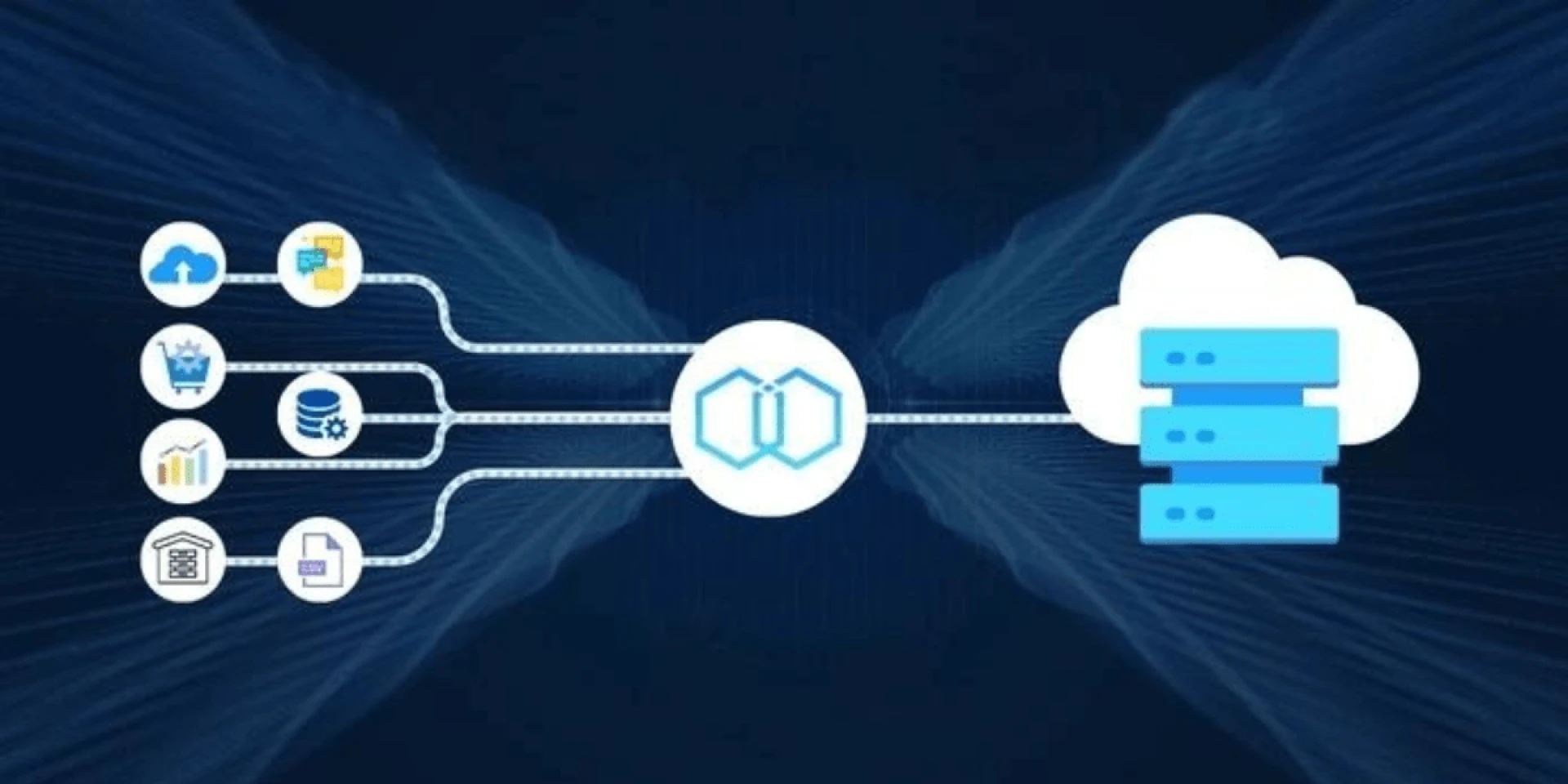

The process of gathering and transferring data simultaneously, or with little delay, from several sources to target systems (data lakes, warehouses, and analytics platforms) is known as real-time data intake.

Real-time ingestion is perfect for time-sensitive applications because it pushes data as it comes in, unlike batch ingestion, which collects data at regular intervals.

It consists of three main parts:

- Databases, logs, IoT devices, and APIs are examples of source systems.

- The ingestion layer comprises brokers or stream processors.

- Target Analytics/Storage Systems (warehouses, data lakehouses, or visualization tools).

Why Real-Time Ingestion Matters in Today’s Data Ecosystem?

Managing real-time data input effectively can significantly impact your data strategy. Take into account the following advantages:

- Making Decisions in Real Time: Milliseconds can make a big difference in the healthcare and financial industries.

- Enhancement of User Experience: Consider e-commerce's dynamic product suggestions.

- Operational Monitoring: Spot irregularities, malfunctions, or opportunities early on and take appropriate action.

Any advanced Data Engineering course in Hyderabad should include real-time ingestion since it will help you create scalable, next-generation systems.

Core Tools Used for Real-Time Data Ingestion

Here are some commonly used technologies in the field that aid in creating real-time pipelines:

1. The Apache Kafka

Kafka is a distributed streaming technology made for fault-tolerant, high-throughput messaging. Data producers and consumers frequently use it as a central ingestion point.

- Per second, it processes millions of messages.

- It offers features such as message replay, durability, and persistence.

- It easily interacts with various processing engines, including Spark and Flink.

2. The Apache Flink

Complex event management and real-time stream processing are done with Flink.

- Data that arrives late can be handled by event-time processing.

- Aggregation windowed operations.

- It is compatible with exactly-once semantics.

3. Scalability, elasticity, and close connection with their respective ecosystems are provided by cloud-native ingestion services like Google Pub/Sub, Amazon Kinesis, and Azure Event Hubs.

Designing Real-Time Ingestion Architecture

A robust real-time ingestion pipeline design necessitates meticulous consideration in several areas:

a. Sources of Data

Determine the incoming data's volume, frequency, and format (JSON, CSV, or Avro). IoT devices' sensor data, for instance, might generate thousands of records every second.

b. Brokers for messages

Select resiliency and dependability by buffering incoming data with systems like RabbitMQ or Kafka.

C. Stream Processors

Utilize tools like as Spark Streaming or Apache Flink to clean, transform, and enhance the moving data.

d. Systems for Storage

Amazon S3 (for lake storage) is one example of a destination where:

- Data may need to be written.

- For analytics, use BigQuery or Snowflake.

- Elasticsearch is used for searching.

Knowing this architecture helps you get ready for large-scale deployment in production systems and is an essential skill in any Data Engineering course in Hyderabad.

Challenges and How to Handle Them

In real-time data ingestion, the following are some typical problems and solutions:

- Use concurrent processing and lightweight data formats, such as Avro/Parquet, to reduce high latency. Deploy your systems nearer the edge computing data source as well.

- Scalability Solution: Use consumer groups and partition your Kafka topics to parallelize processing. When feasible, create microservices that are stateless.

- Real-time validation, dead-letter queues, and the schema registry (Confluent) are solutions for data quality problems.

Use idempotent writes or apply deduplication logic using timestamps or unique IDs as a solution to data duplication.

Middle Focus: Scaling Real-Time Ingestion with Modern Cloud Platforms

The use of cloud platforms has transformed real-time Data Engineering. Serverless computing, controlled streaming services, and auto-scaling capabilities allow engineers to concentrate more on logic and less on infrastructure.

The curriculum of Hyderabad's Data Engineering courses increasingly uses these environments to model actual large data pipelines.

Examples consist of:

- Azure Functions + Event Hubs + Synapse Analytics + AWS Lambda + Kinesis

- Google Cloud Functions + BigQuery + Pub/Sub

Working directly with these configurations teaches fault recovery, monitoring, and real-time orchestration.

Conclusion

Real-time data ingestion handling is now required, not optional. Whether it's financial transactions, telemetry from linked devices, or clickstream data from websites, real-time data processing gives companies a competitive advantage.

Exploring structured programs like a Data Engineering course in Noida or Hyderabad, which provide hands-on training on Kafka, Flink, Spark Streaming, and other topics, is strongly advised if you want to master these skills.

These applications frequently mimic real-world issues, providing students with the advantage to tackle challenging ingestion use cases.

You will become a valued data engineer and be able to design systems that drive the next generation of real-time decision-making if you can master these systems and methodologies.

Sign in to leave a comment.