Billion-dollar lawsuits, Hollywood entering the fight, and the legal questions that could reshape the future of artificial intelligence

Short Answer: Courts are now deciding whether training artificial intelligence on copyrighted works is legal under the fair use doctrine. Dozens of AI copyright lawsuits filed by authors, media companies, and film studios argue that AI training data was scraped from protected works without permission. The central questions emerging in these cases are whether AI training can qualify as fair use, whether developers are liable for using copyrighted datasets, and who owns AI-generated content produced by machine learning systems.

Artificial intelligence can now write articles, compose music, paint pictures, design software, and generate video. But the astonishing creative power of AI has triggered something equally powerful: a global legal battle over who owns the raw material that makes these systems possible.

Authors, artists, news organizations, music companies, and film studios are now suing AI developers across the United States. Their claim is simple but explosive: the new generation of generative AI models was trained on vast libraries of copyrighted works scraped from the internet—often without permission.

Technology companies respond that training AI systems is simply a modern version of learning. Courts are now being asked to decide who is right. And the answer could determine the future of creativity itself.

The Legal Question That Could Decide the Future of AI

Every lawsuit involving generative AI eventually runs into the same issue: Is it legal to train artificial intelligence on copyrighted works? AI systems learn by analyzing massive datasets of text, images, music, and video. These datasets can contain millions—or even billions—of creative works produced by humans.

Developers argue the process is similar to reading books or studying art. The AI learns patterns and relationships rather than reproducing the original works directly. Rights holders see it differently. They argue that AI companies are building trillion-dollar technologies using creative material produced by others—without permission or compensation. Courts must now apply a legal doctrine that predates modern computers. That doctrine is fair use.

But as judges are discovering, applying centuries-old legal principles to machine learning is anything but simple.

“Intellectual Property Law dates back to the 17th Century. Whether it protects human innovation after the takeover by Artificial Intelligence remains to be seen.”

The First AI Fair-Use Decisions Have Arrived

In 2025, federal courts began issuing the first major rulings addressing whether training generative AI systems on copyrighted material qualifies as fair use. The early results surprised many observers.

Several courts concluded that the training process could indeed be considered transformative, meaning it changes the purpose of the original works in a way that copyright law traditionally protects. One judge compared AI training to how humans learn language. People read books, absorb information, and later produce new writing influenced by what they learned. AI systems, the court reasoned, perform a similar analytical process—identifying patterns in text rather than copying the works themselves.

But that judge's decision contained a major caveat. Training models may be transformative. Simply storing pirated copies of copyrighted works may not be. That distinction could become one of the most important legal lines in the AI era.

But Another Court Issued a Warning

Just days later, another federal court reached a similar conclusion—but with a very different tone. The judge agreed that AI training could qualify as fair use.

However, the ruling emphasized something critical: the plaintiffs had not presented strong evidence that the AI system’s outputs were replacing the market for their books. If that evidence had existed, the outcome might have been different.

The judge even suggested that in many situations the use of copyrighted material to train AI could ultimately prove unlawful. That warning has sparked a new strategy among plaintiffs. Rather than focusing only on training data, they are increasingly targeting AI-generated outputs.

And that shift could dramatically reshape future cases.

When AI Competes With Creators, Courts Become Less Sympathetic

Not every AI training case has favored technology companies.

In a separate lawsuit involving an AI-powered legal research tool, a federal court rejected the fair-use defense entirely. The reason was straightforward. The AI system had been trained using copyrighted materials owned by a competing research company—and the resulting product was designed to perform essentially the same function.

In other words, the AI tool was not just learning from the original works. It was competing with them. That distinction proved decisive.

When AI systems threaten to replace the very creators whose work they rely on, courts appear far less willing to excuse the copying.

The Supreme Court's refusal to hear the Thaler case makes one thing clear: as of today, copyright law does not protect any expression of an idea not originally created by a human. Content created by AI alone, with no human author, effectively passes into the public domain.

Why Media Companies Are Suddenly Suing AI Developers

For several years, the most visible lawsuits against AI developers were filed by individual artists and authors. But something changed in 2025. Major media organizations began joining the fight.

Large coalitions of publishers—including newspapers, magazines, and digital media companies—have filed lawsuits accusing AI developers of scraping their articles and training models capable of summarizing them.

Their concern is not just copyright. It is survival. If users can ask an AI system for the contents of a news article instead of visiting the publisher’s website, the entire economic model of journalism could be disrupted.

There is also another risk. AI systems sometimes generate false statements and attribute them to legitimate news organizations.

For publishers whose credibility is their most valuable asset, that possibility is deeply alarming.

Hollywood Has Entered the AI Legal Battlefield

The entertainment industry is now joining the fight as well.

Major film studios have filed lawsuits accusing AI image generators of producing images that resemble iconic movie characters. The complaints include examples of AI-generated images closely resembling famous figures from blockbuster films.

Unlike earlier lawsuits focused on training data, these cases emphasize the outputs generated by AI systems. This change in strategy could prove extremely important.

If courts begin ruling that AI outputs can infringe copyrighted works, developers may face liability not only for training their models but also for what those models produce.

The Quiet Battlefield: Discovery

While headline court decisions attract the most attention, some of the most important developments in AI litigation are happening behind the scenes.

Discovery—the legal process through which parties exchange evidence—has become a major battleground. Plaintiffs want access to the datasets used to train AI models. Developers argue those datasets contain valuable trade secrets. Courts are trying to strike a balance.

In several cases, judges have ordered AI companies to disclose portions of their training data or internal communications under strict confidentiality protections.

These discovery battles could ultimately determine whether plaintiffs can prove their works were used to build AI systems.

And that evidence could dramatically strengthen future lawsuits.

The Rise of Class Actions Could Change Everything

Another development attracting attention is the emergence of class action lawsuits in AI litigation.

In one major case, a federal court certified a class of authors whose books were allegedly taken from online piracy libraries and used to train an AI model. Class certification dramatically increases the potential liability for defendants. Instead of defending individual claims, companies could face thousands of claims in a single lawsuit.

For AI developers, preventing class certification has quickly become a top legal priority.

Trademark Law Is Becoming the Next Legal Front

Copyright law may dominate the headlines, but trademark claims are increasingly appearing in AI lawsuits as well.

Some plaintiffs argue that AI systems generate answers falsely attributed to well-known brands or publications. If a user asks an AI model a question and the system attributes information to a particular newspaper or encyclopedia—even if the information is incorrect—that could create consumer confusion. Courts have begun allowing some of these claims to proceed.

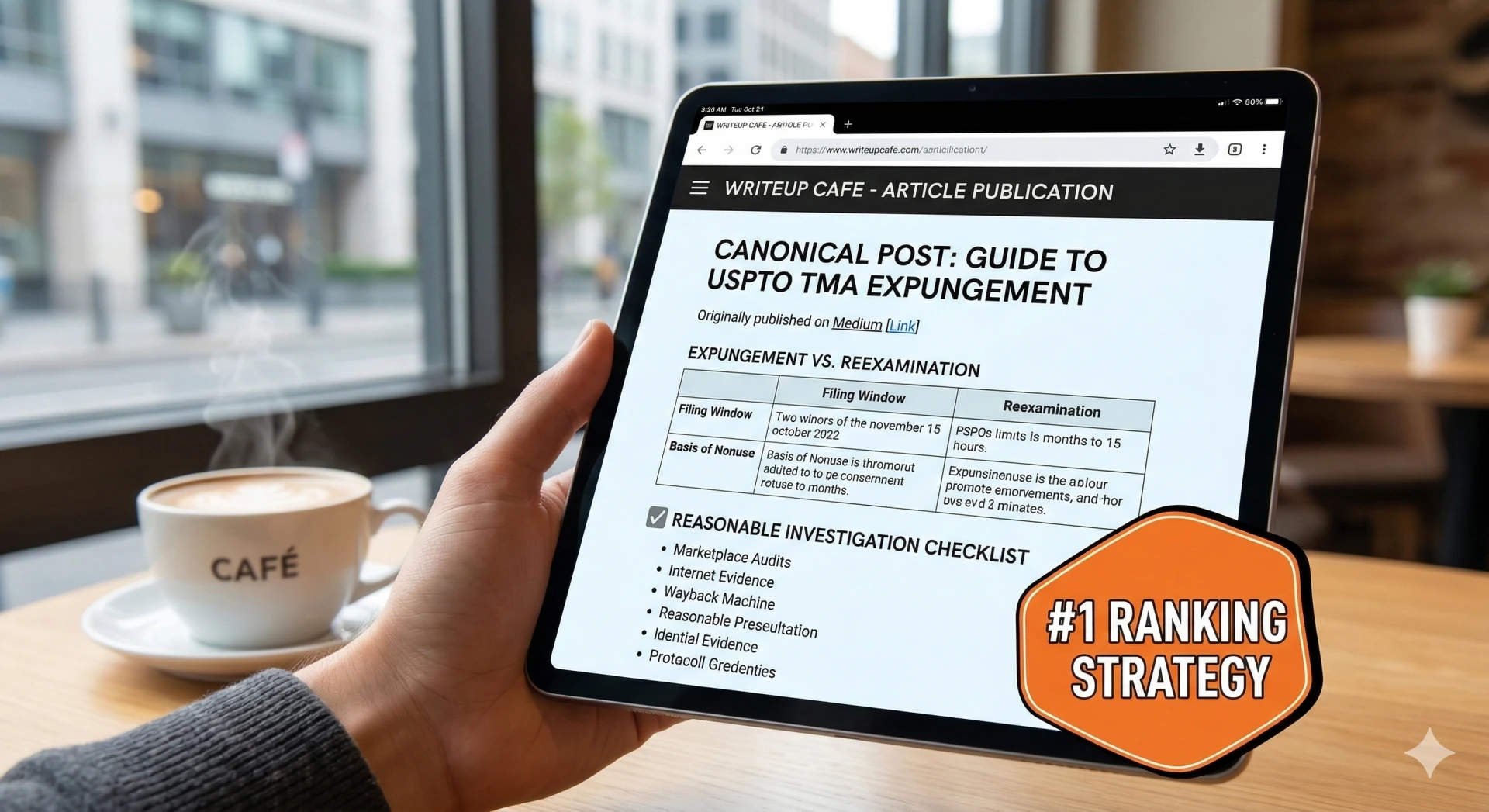

Meanwhile, AI companies must also navigate traditional trademark risks involving product names and branding decisions. Even the most advanced technologies cannot escape the basics of intellectual property law.

The Next Phase of the AI Legal War

Several major rulings expected over the next few years could define the legal framework governing artificial intelligence.

Courts may soon decide:

• whether training AI on pirated datasets is illegal

• how transparent AI developers must be about training data

• whether creators should receive compensation when their works are used in AI models

The answers to these questions could shape the future of both technology and the creative economy.

The Real Stakes of the AI Copyright Wars

Artificial intelligence is rapidly becoming one of the most powerful creative tools ever invented.

But the laws governing creativity were written long before machines could produce art, music, literature, and software. And “produce” is the key word: it has yet to be shown that AI can, indeed, create anything original.

The judges deciding these cases are not merely resolving copyright disputes. They are determining how human creativity will coexist with machine intelligence. The outcome may define the economic rules of the digital world for decades.

Because the real question is not whether AI will transform creativity. It already has.

The real question is whether the legal system will protect the people whose ideas built the foundation of that transformation, or whether creativity will be lost forever to machines.

And that answer is still being written in courtrooms across the country.

About the Author

Kenneth G. Eade is an e-commerce and intellectual property attorney at AMZ Sellers Attorney® and a former Amazon seller. He represents clients in matters involving Amazon account suspensions, intellectual property disputes, Brand Registry conflicts, KDP and ACX terminations, and broader marketplace enforcement issues affecting online businesses. Drawing on both legal experience and firsthand marketplace knowledge, Kenneth writes about the legal and business risks emerging at the intersection of technology, artificial intelligence, intellectual property, and e-commerce.