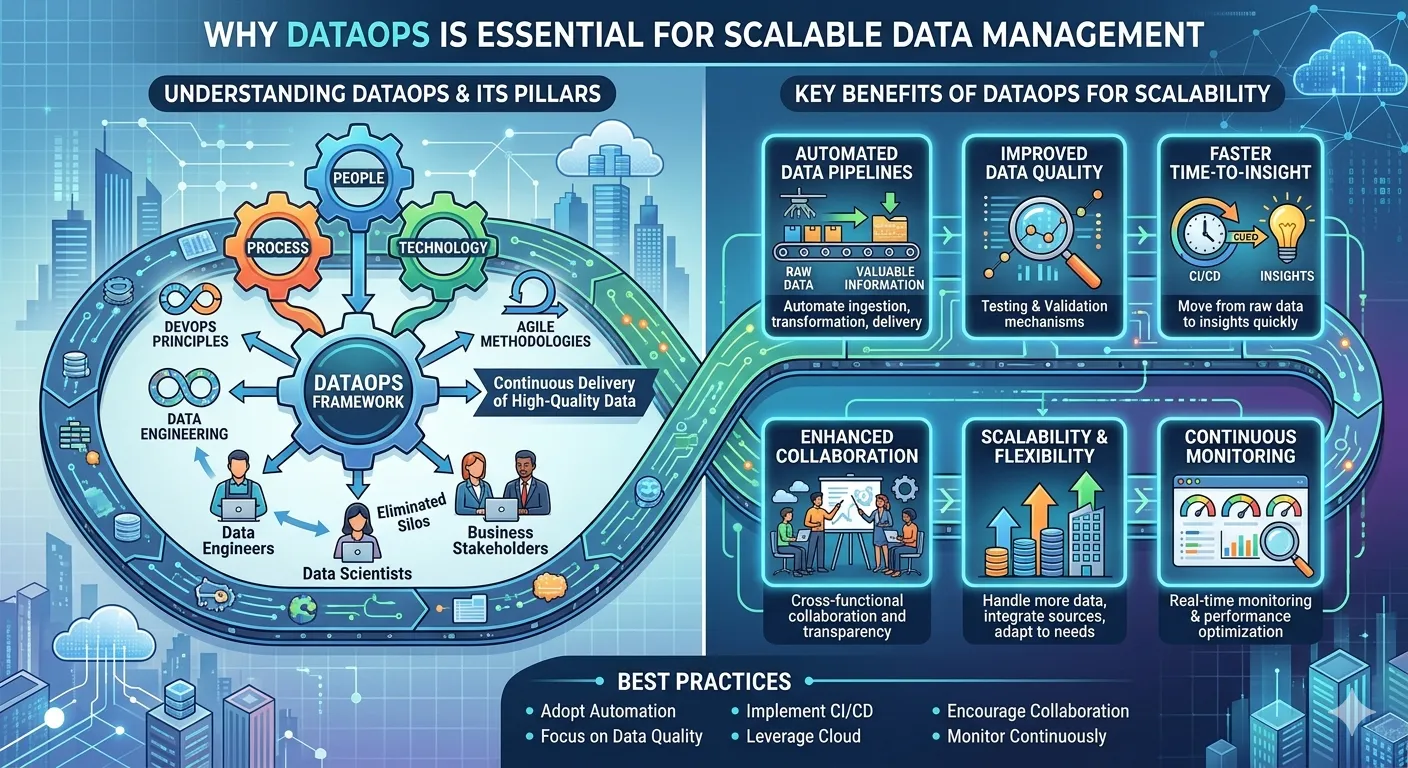

In today’s data-driven economy, organizations produce and consume data on an unprecedented scale. From customer interactions and operational metrics to IoT and analytics data, the volume, velocity, and variety of data keep growing. Yet managing that data efficiently and converting it into useful information remains a challenge. This is where DataOps, a framework built on DevOps principles, data engineering, and agile methodologies, plays a vital role.

This blog explores how DataOps enables scalable data management. It covers data workflows, data quality, and collaboration, then outlines the main advantages and best practices of DataOps and explains why the practice is becoming increasingly important.

Understanding DataOps

DataOps, or Data Operations, is a collaborative practice focused on improving the speed, quality, and reliability of data analytics. It brings together people, processes, and technology to streamline and automate data workflows and to ensure the continuous delivery of high-quality data. DataOps aims to eliminate silos between data engineers, data scientists, and business stakeholders.

It emphasizes the importance of automation, monitoring, testing, and continuous improvement to ensure the scalability and reliability of the data pipeline.

The Need for Scalable Data Management

As organizations grow, so does the complexity of their data ecosystems. Traditional data management approaches struggle to keep up with rapidly growing data volumes from many sources, the need for real-time processing, and persistent data quality issues. Modern data management services and solutions respond to this by giving organizations structured frameworks to work within. DataOps addresses the challenges of efficiency, accuracy, and scalability by introducing standardized processes and automation into data management.

Key Benefits of DataOps for Scalability

1. Automated Data Pipelines

One of the major advantages of DataOps is automation. Manual data handling does not scale: it is time-consuming and error-prone. DataOps helps organizations automate the ingestion, transformation, and delivery of data.

This automation lets organizations handle growing data volumes without a proportional increase in headcount.
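As a rough illustration of the ingest, transform, and deliver stages, here is a minimal sketch in Python. The sample data, the field names (`order_id`, `amount`), and the function names are all hypothetical; a real pipeline would read from and write to actual systems and would usually run under an orchestration tool.

```python
import csv
import io
import json

# Hypothetical raw input; a real pipeline would read from a file, queue, or API.
RAW_CSV = "order_id,amount\n1,19.99\n2,5.00\n3,12.50\n"


def ingest(raw_text):
    """Parse raw CSV text into a list of row dictionaries (all values are strings)."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def transform(rows):
    """Cast fields to proper types and convert the amount to integer cents."""
    return [
        {
            "order_id": int(row["order_id"]),
            "amount_cents": round(float(row["amount"]) * 100),
        }
        for row in rows
    ]


def deliver(rows):
    """Serialize the cleaned rows to JSON; a real step might load a warehouse table."""
    return json.dumps(rows)


def run_pipeline(raw_text):
    """Chain the three stages so the whole flow runs without manual steps."""
    return deliver(transform(ingest(raw_text)))


if __name__ == "__main__":
    print(run_pipeline(RAW_CSV))
```

Keeping each stage as a small function with a single responsibility makes it easier to test stages in isolation and to schedule the whole flow automatically.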

2. Improved Data Quality

Scalability is not just about handling more data. It also means maintaining data quality as volumes grow.

DataOps builds testing and validation mechanisms into the pipeline itself. These checks help organizations identify data errors early, before they reach the people and systems that consume the data.
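A validation step of this kind might look like the sketch below. The rules (required fields, non-negative amounts, unique IDs) and the field names are assumptions chosen for illustration, not a prescribed DataOps rule set.

```python
def validate_rows(rows, required_fields=("order_id", "amount")):
    """Return a list of human-readable error messages; an empty list means the batch passed."""
    errors = []
    seen_ids = set()
    for index, row in enumerate(rows):
        # Completeness check: every required field must be present and non-empty.
        for field in required_fields:
            if row.get(field) in (None, ""):
                errors.append(f"row {index}: missing {field}")

        # Range check: numeric amounts must not be negative.
        amount = row.get("amount")
        if isinstance(amount, (int, float)) and amount < 0:
            errors.append(f"row {index}: negative amount {amount}")

        # Uniqueness check: each order_id may appear only once per batch.
        order_id = row.get("order_id")
        if order_id in seen_ids:
            errors.append(f"row {index}: duplicate order_id {order_id}")
        elif order_id not in (None, ""):
            seen_ids.add(order_id)
    return errors


if __name__ == "__main__":
    batch = [
        {"order_id": 1, "amount": 10.0},
        {"order_id": 1, "amount": -5},
        {"order_id": 2, "amount": None},
    ]
    for message in validate_rows(batch):
        print(message)
```

A pipeline could refuse to publish a batch whenever this function returns any errors, so bad records are stopped at the point where they are cheapest to fix.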

3. Faster Time-to-Insight

Staying competitive depends on how quickly an organization can act on its data. DataOps enables faster data processing and delivery.

It does so by applying continuous integration and continuous deployment (CI/CD) to data pipelines, so that changes are tested and released in small, frequent steps. As a result, teams move from raw data to insights more quickly.

4. Enhanced Collaboration

DataOps helps create a collaborative work culture among data engineers, analysts, data scientists, and business teams. This ensures all parties are working towards common goals by eliminating silos.

A collaborative approach helps create greater transparency, avoids duplication of work, and helps solve problems more quickly. This is critical when working with large-scale data environments.

5. Scalability and Flexibility

DataOps solutions are built to scale with the organization. Whether the task is handling more data, integrating new data sources, or adapting to changing business requirements, DataOps offers the flexibility to keep up.

Cloud-based solutions used in DataOps add further scalability because resources can be allocated on demand.

6. Continuous Monitoring and Optimization

Scalable systems require continuous monitoring to ensure optimal performance. DataOps offers real-time monitoring and alerting capabilities to quickly identify any performance degradation before it impacts business operations.

Continuous feedback loops help teams optimize performance, improve delivery, and ensure system reliability as data workloads grow.
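To make the monitoring idea concrete, the sketch below checks the metrics of a single pipeline run against thresholds and returns any alerts. The `RunMetrics` fields and the threshold values are hypothetical; in practice they would come from the team's own service-level targets and monitoring stack.

```python
from dataclasses import dataclass


@dataclass
class RunMetrics:
    """Metrics collected for one pipeline run (hypothetical field set)."""
    rows_processed: int
    duration_seconds: float
    failed_rows: int


def check_run(metrics, max_duration_seconds=300.0, max_failure_rate=0.01):
    """Compare one run against thresholds and return a list of alert messages."""
    alerts = []
    if metrics.duration_seconds > max_duration_seconds:
        alerts.append(
            f"slow run: {metrics.duration_seconds:.0f}s exceeds {max_duration_seconds:.0f}s"
        )
    if metrics.rows_processed == 0:
        # An empty run often signals an upstream outage rather than a quiet period.
        alerts.append("no rows processed")
    else:
        failure_rate = metrics.failed_rows / metrics.rows_processed
        if failure_rate > max_failure_rate:
            alerts.append(
                f"failure rate {failure_rate:.1%} exceeds {max_failure_rate:.1%}"
            )
    return alerts


if __name__ == "__main__":
    for alert in check_run(RunMetrics(rows_processed=1000, duration_seconds=400.0, failed_rows=50)):
        print(alert)
```

Returning alerts as plain data keeps the check separate from the delivery channel, so the same function can feed a dashboard, a chat notification, or a paging system.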

Best Practices for Implementing DataOps

To use DataOps effectively for scalable data management, organizations should consider the following best practices:

- Adopt Automation: Automate processes such as ingestion, testing, and deployment.

- Implement CI/CD for Data Pipelines: Apply continuous integration and continuous delivery to data pipelines.

- Focus on Data Quality: Build validation and monitoring tools into the pipeline.

- Encourage Collaboration: Promote collaboration and shared ownership across teams.

- Leverage Cloud Technologies: Use cloud technologies to gain flexibility and scalability.

- Monitor Continuously: Put real-time monitoring in place.
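As one small example of what CI for a data pipeline can mean in practice, a transformation step can ship with unit tests that run on every change. The sketch below uses Python's standard `unittest` module; `normalize_email` is a hypothetical transformation invented for this illustration.

```python
import unittest


def normalize_email(value):
    """Hypothetical transformation step: trim whitespace and lowercase an email address."""
    return value.strip().lower()


class NormalizeEmailTest(unittest.TestCase):
    def test_trims_and_lowercases(self):
        self.assertEqual(normalize_email("  Ana@Example.COM "), "ana@example.com")

    def test_is_idempotent(self):
        # Running the step twice must give the same result as running it once.
        once = normalize_email(" Bob@Example.com")
        self.assertEqual(normalize_email(once), once)
```

Saved as a test module, this can be run with `python -m unittest` in a CI job, so a change that breaks the transformation is caught before it is deployed.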

The Future of DataOps

As the importance of data continues to grow, the role of DataOps will become increasingly essential. Businesses are increasingly adopting DataOps as a service because it lowers the barrier to implementing DataOps and lets them use its capabilities sooner. With advancements in artificial intelligence and machine learning, DataOps will continue to evolve.

Conclusion

DataOps has become a necessity for businesses to scale their data management capabilities. By leveraging automation, collaboration, and continuous improvement, DataOps provides a powerful platform for managing the complexities of the data ecosystem.

In the age of data-driven decision-making, DataOps enables businesses to efficiently manage their data assets while maintaining data quality and speed. Ultimately, DataOps helps businesses leverage the full potential of their data assets amid ever-changing business dynamics.
