Introduction

Data flows through organizations constantly, from application logs and IoT devices to customer transactions and third-party APIs. The challenge is not just collecting this data but moving, transforming, and managing it reliably without creating bottlenecks or security risks.

This is where Apache NiFi stands out. It provides a visual and highly configurable way to design and manage data pipelines. Unlike traditional ETL tools that often require heavy coding, NiFi allows teams to build complex flows with clarity and control.

This blog explores how to design effective data pipelines using Apache NiFi, along with proven best practices and architectural insights drawn from real-world implementations.

Understanding Apache NiFi Architecture

Before building pipelines, it is important to understand how NiFi works under the hood.

Core Components of NiFi

FlowFiles

FlowFiles are the units of data that move through the system. Each FlowFile pairs content (the payload bytes) with attributes, which are key-value pairs carrying metadata such as a filename and a unique identifier.
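To make the content-plus-attributes idea concrete, here is a minimal Python sketch of a FlowFile-like object. It is only an illustration, not NiFi's actual Java implementation; the class, the helper names, and the `source.system` attribute are hypothetical, while `uuid` and `filename` mirror attributes NiFi sets on every FlowFile.

```python
from dataclasses import dataclass, field
from typing import Dict
import uuid


@dataclass(frozen=True)
class FlowFile:
    """Illustrative stand-in for a FlowFile: payload bytes plus string attributes."""
    content: bytes
    attributes: Dict[str, str] = field(default_factory=dict)

    def with_attribute(self, key: str, value: str) -> "FlowFile":
        # Return a modified copy instead of mutating the original object.
        return FlowFile(self.content, {**self.attributes, key: value})


def new_flowfile(content: bytes, filename: str) -> FlowFile:
    # Hypothetical helper: assigns a unique id and a filename attribute.
    return FlowFile(content, {"uuid": str(uuid.uuid4()), "filename": filename})


order = new_flowfile(b'{"order_id": 42}', "order-42.json")
tagged = order.with_attribute("source.system", "orders-api")
print(tagged.attributes["filename"], tagged.attributes["source.system"])
```

Attributes are what routing and naming decisions usually key on, so the payload itself rarely needs to be parsed just to decide where a FlowFile goes next.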

Processors

Processors perform operations such as data ingestion, transformation, routing, and delivery.

Connections

Connections link processors and act as queues between them, buffering FlowFiles and controlling the flow of data.

Controller Services

These provide shared services, such as database connection pools or SSL/TLS context configurations, that multiple processors can reuse.

Provenance Data

NiFi tracks every step of data movement, offering full visibility into where data came from and how it changed.
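As a rough mental model of provenance, the Python sketch below keeps an append-only list of events and reconstructs the lineage of one FlowFile from it. The classes and component names are hypothetical; the event type strings (`RECEIVE`, `CONTENT_MODIFIED`, `SEND`) are borrowed from NiFi's provenance event types, but NiFi's real repository is far richer than this.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ProvenanceEvent:
    flowfile_id: str
    event_type: str   # e.g. RECEIVE, CONTENT_MODIFIED, SEND
    component: str    # name of the processor that emitted the event


class ProvenanceLog:
    """Append-only event log; a toy model, not NiFi's provenance repository."""

    def __init__(self) -> None:
        self._events: List[ProvenanceEvent] = []

    def record(self, flowfile_id: str, event_type: str, component: str) -> None:
        self._events.append(ProvenanceEvent(flowfile_id, event_type, component))

    def lineage(self, flowfile_id: str) -> List[str]:
        # Events are returned in the order they were recorded.
        return [
            f"{e.event_type}@{e.component}"
            for e in self._events
            if e.flowfile_id == flowfile_id
        ]


log = ProvenanceLog()
log.record("ff-1", "RECEIVE", "Fetch_Customer_API_Data")
log.record("ff-1", "CONTENT_MODIFIED", "Convert_To_Analytics_Format")
log.record("ff-1", "SEND", "Deliver_To_Warehouse")
print(log.lineage("ff-1"))
```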

Why Architecture Matters

A poorly designed pipeline can lead to delays, data loss, or system overload. A well-structured NiFi architecture ensures scalability, fault tolerance, and easy maintenance.

Designing Scalable Data Pipelines

Break Pipelines into Logical Segments

Instead of building one large flow, divide pipelines into smaller, reusable process groups.

For example, an e-commerce company processing order data might structure flows like this:

- Data ingestion from APIs

- Data validation and enrichment

- Transformation into analytics format

- Delivery to data warehouse

This modular approach simplifies debugging and improves reusability.
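The same idea can be sketched outside NiFi as a chain of small, independently testable stages. The Python below is an analogy for process groups, assuming a hypothetical order record with `order_id`, `amount`, and an optional `currency` field; none of these names come from a real system.

```python
from typing import Callable, Dict, List

Record = Dict[str, object]
Stage = Callable[[List[Record]], List[Record]]


def validate(records: List[Record]) -> List[Record]:
    # Keep only orders that have an id and a positive amount.
    return [r for r in records if r.get("order_id") is not None and r["amount"] > 0]


def enrich(records: List[Record]) -> List[Record]:
    # Fill in a default currency when the source omitted it (assumed default).
    return [{**r, "currency": r.get("currency", "USD")} for r in records]


def to_analytics_format(records: List[Record]) -> List[Record]:
    # Reshape into the (hypothetical) schema the warehouse expects.
    return [
        {"id": r["order_id"], "amount_cents": round(r["amount"] * 100), "currency": r["currency"]}
        for r in records
    ]


def run_pipeline(records: List[Record], stages: List[Stage]) -> List[Record]:
    # Each stage consumes the previous stage's output, like linked process groups.
    for stage in stages:
        records = stage(records)
    return records


raw_orders = [
    {"order_id": 1, "amount": 19.99},
    {"order_id": None, "amount": 5.0},
    {"order_id": 3, "amount": -1.0},
]
print(run_pipeline(raw_orders, [validate, enrich, to_analytics_format]))
```

Because each stage has a single responsibility, a failing stage can be debugged or swapped without touching the others, which is the same benefit process groups give inside NiFi.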

Use Back Pressure and Flow Control

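NiFi lets you set back pressure thresholds on each connection, both for the number of queued FlowFiles and for their total data size (by default, 10,000 objects and 1 GB). When a threshold is reached, NiFi stops scheduling the upstream processor until the queue drains, which keeps one slow stage from overloading the system.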

A financial services firm processing real-time transactions used back pressure to ensure their system remained stable even during peak trading hours.
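The mechanism can be approximated with a bounded queue that refuses new items once either threshold is hit. This Python sketch is a simplified model of a connection, not NiFi code; the class and method names are hypothetical, and the default limits are set to match NiFi's default thresholds only for illustration.

```python
from collections import deque
from typing import Deque, Optional


class Connection:
    """Toy queue with object-count and data-size back pressure thresholds."""

    def __init__(self, max_objects: int = 10_000, max_bytes: int = 1024 ** 3) -> None:
        self.max_objects = max_objects
        self.max_bytes = max_bytes
        self._queue: Deque[bytes] = deque()
        self._bytes = 0

    @property
    def back_pressure_engaged(self) -> bool:
        return len(self._queue) >= self.max_objects or self._bytes >= self.max_bytes

    def offer(self, payload: bytes) -> bool:
        # The upstream side checks the threshold before adding more work.
        if self.back_pressure_engaged:
            return False
        self._queue.append(payload)
        self._bytes += len(payload)
        return True

    def poll(self) -> Optional[bytes]:
        # The downstream side drains the queue, which releases back pressure.
        if not self._queue:
            return None
        payload = self._queue.popleft()
        self._bytes -= len(payload)
        return payload


conn = Connection(max_objects=2, max_bytes=100)
print(conn.offer(b"a"), conn.offer(b"b"), conn.offer(b"c"))
conn.poll()
print(conn.offer(b"c"))
```

In this model the producer simply gets `False` and has to try again later; the point is that pressure propagates upstream instead of the queue growing without bound.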

Enable Parallel Processing

NiFi supports concurrent task execution. Raise a processor's Concurrent Tasks setting where appropriate so that it runs on multiple threads and increases throughput.

Be careful not to over-allocate threads, as this can lead to CPU contention.
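As an analogy for a processor running with several concurrent tasks, the Python sketch below applies one transformation across a bounded thread pool. The `transform` function and the `concurrent_tasks` parameter name are hypothetical stand-ins; in NiFi itself this is a scheduling setting, not code you write.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List


def transform(record: str) -> str:
    # Placeholder for per-record work such as parsing or enrichment.
    return record.strip().upper()


def process_concurrently(records: List[str], concurrent_tasks: int = 4) -> List[str]:
    # max_workers caps the thread count, much like a Concurrent Tasks setting.
    with ThreadPoolExecutor(max_workers=concurrent_tasks) as pool:
        # pool.map returns results in the same order as the input.
        return list(pool.map(transform, records))


print(process_concurrently([" order-1 ", "order-2", " order-3"], concurrent_tasks=2))
```

Raising the worker count helps only while there is spare CPU or the work is I/O bound; past that point extra threads mostly add contention.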

Best Practices for Apache NiFi Pipelines

1. Prioritize Data Provenance

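NiFi’s data lineage tracking is one of its strongest features. Provenance events are recorded by default, so for critical pipelines the practical task is sizing the provenance repository and its retention period to cover your audit window.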

This helps in auditing and debugging, especially in regulated industries like healthcare and finance.

2. Standardize Naming Conventions

Use clear and consistent names for processors, connections, and process groups.

Instead of naming a processor "Processor1", use "Fetch_Customer_API_Data". This makes collaboration easier and reduces onboarding time for new team members.
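A convention is easier to keep when it can be checked automatically. The Python sketch below assumes one possible convention, a capitalized verb followed by underscore-separated words, which matches the example above; the pattern and function name are hypothetical and should be adapted to whatever your team agrees on.

```python
import re

# Assumed convention: Verb_Word_Word..., e.g. Fetch_Customer_API_Data.
NAME_PATTERN = re.compile(r"^[A-Z][a-z]+(?:_[A-Za-z0-9]+)+$")


def is_valid_component_name(name: str) -> bool:
    return NAME_PATTERN.fullmatch(name) is not None


for candidate in ["Fetch_Customer_API_Data", "Processor1", "fetch_data"]:
    print(candidate, is_valid_component_name(candidate))
```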

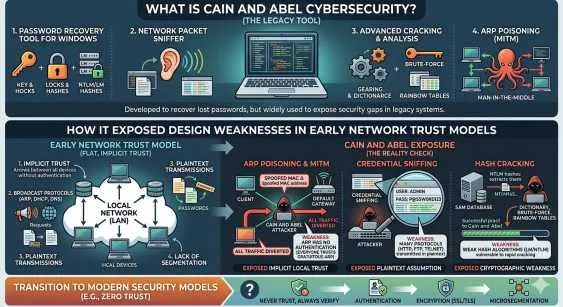

3. Secure Data Flows

Security should be built into the pipeline, not added later.

- Use TLS (SSL) for secure communication

- Implement role-based access control

- Encrypt sensitive data

Organizations handling personal data must ensure compliance with regulations such as GDPR.

4. Monitor Performance Regularly

NiFi provides built-in monitoring tools. Use them to track:

- Queue sizes

- Processor performance

- Error rates

Set up alerts to proactively identify issues before they escalate.
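One simple alerting rule is to flag any queue that is approaching its back pressure threshold. The Python sketch below assumes queue statistics have already been collected into a hypothetical `QueueStatus` structure; how you obtain them (for example from NiFi's status reporting) is outside the sketch, and the 80% warning ratio is an arbitrary starting point.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class QueueStatus:
    name: str                 # connection name (hypothetical field names)
    queued_count: int         # FlowFiles currently queued
    back_pressure_count: int  # configured object threshold


def queues_needing_attention(statuses: List[QueueStatus], warn_ratio: float = 0.8) -> List[str]:
    # Flag queues filled to at least warn_ratio of their threshold.
    return [
        s.name
        for s in statuses
        if s.back_pressure_count > 0
        and s.queued_count / s.back_pressure_count >= warn_ratio
    ]


snapshot = [
    QueueStatus("Validate_To_Enrich", 9_200, 10_000),
    QueueStatus("Enrich_To_Deliver", 150, 10_000),
]
print(queues_needing_attention(snapshot))
```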

5. Use Templates and Version Control

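Save reusable pipeline patterns so teams do not rebuild them from scratch. In NiFi 1.x this can be done with templates; templates were removed in NiFi 2.0, where versioned flows and exported flow definitions fill that role.

NiFi Registry provides version control for flows, making it easier to manage updates and rollbacks.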

Real-World Use Cases

Case 1: Log Aggregation for IT Operations

A large enterprise used NiFi to collect logs from multiple servers, transform them into a standard format, and push them to a centralized monitoring system.

Result:

- Faster troubleshooting

- Reduced downtime

- Improved system visibility

Case 2: IoT Data Processing

A manufacturing company implemented NiFi to process sensor data from production lines.

Pipeline steps included:

- Data ingestion from IoT devices

- Filtering anomalies

- Sending alerts for threshold breaches
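The filtering and alerting steps can be pictured as a simple range check. The Python sketch below is an illustration only, not the company's actual flow; the reading fields (`sensor_id`, `value`) and the threshold values are hypothetical.

```python
from typing import Dict, List, Tuple

Reading = Dict[str, object]


def split_readings(readings: List[Reading], low: float, high: float) -> Tuple[List[Reading], List[Reading]]:
    # Separate in-range readings from threshold breaches.
    normal: List[Reading] = []
    breaches: List[Reading] = []
    for reading in readings:
        if low <= reading["value"] <= high:
            normal.append(reading)
        else:
            breaches.append(reading)
    return normal, breaches


def format_alert(reading: Reading, low: float, high: float) -> str:
    return f"ALERT sensor={reading['sensor_id']} value={reading['value']} outside [{low}, {high}]"


readings = [
    {"sensor_id": "s1", "value": 42.0},
    {"sensor_id": "s2", "value": 120.5},
]
normal, breaches = split_readings(readings, low=10, high=90)
for breach in breaches:
    print(format_alert(breach, 10, 90))
```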

Result:

- Improved operational efficiency

- Reduced equipment failures

Case 3: Data Migration to Cloud

A retail organization used NiFi to migrate legacy database records to a cloud-based data warehouse.

NiFi handled:

- Data extraction

- Transformation into modern schema

- Secure transfer

Result:

- Seamless migration with minimal downtime

Common Challenges and How to Overcome Them

Handling High Volume Data

Large data volumes can overwhelm pipelines if not managed properly.

Solution:

- Use load balancing

- Optimize processor configurations

- Scale NiFi clusters horizontally
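Two common ways to spread work across cluster nodes are round robin and partitioning by an attribute, both of which NiFi offers as connection load balancing strategies. The Python sketch below models them with plain lists; the function names and the `customer.id` attribute are hypothetical, and CRC32 is used here only as a stable hash for the illustration.

```python
import zlib
from typing import Dict, List


def round_robin(items: List[object], node_count: int) -> List[List[object]]:
    # Deal items out to nodes in turn for an even spread.
    nodes: List[List[object]] = [[] for _ in range(node_count)]
    for index, item in enumerate(items):
        nodes[index % node_count].append(item)
    return nodes


def partition_by_attribute(flowfiles: List[Dict[str, str]], attribute: str, node_count: int) -> List[List[Dict[str, str]]]:
    # Items sharing an attribute value always land on the same node.
    nodes: List[List[Dict[str, str]]] = [[] for _ in range(node_count)]
    for flowfile in flowfiles:
        key = flowfile[attribute].encode("utf-8")
        nodes[zlib.crc32(key) % node_count].append(flowfile)
    return nodes


print(round_robin(list(range(5)), 2))
orders = [{"customer.id": "c1"}, {"customer.id": "c2"}, {"customer.id": "c1"}]
print(partition_by_attribute(orders, "customer.id", 3))
```

Round robin gives the most even distribution, while partitioning matters when related records must be processed together on one node.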

Managing Complex Workflows

As pipelines grow, complexity increases.

Solution:

- Use process groups for organization

- Document workflows clearly

- Maintain a centralized repository of templates

Error Handling

Without proper error handling, pipelines may fail silently.

Solution:

- Use failure relationships in processors

- Route errors to separate queues

- Implement retry mechanisms
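The three points above combine naturally: try the operation, retry a bounded number of times, and route anything that still fails to its own queue instead of dropping it. The Python sketch below models this with hypothetical function names; the `success` and `failure` keys imitate processor relationships, but this is not NiFi's API.

```python
from typing import Callable, Dict, List, Tuple


def process_with_retry(item: str, handler: Callable[[str], object], max_retries: int = 3) -> Tuple[str, object]:
    """Return ("success", result) or ("failure", last error message)."""
    last_error = ""
    for attempt in range(1, max_retries + 1):
        try:
            return "success", handler(item)
        except Exception as exc:  # broad on purpose: any error counts as a failed attempt
            last_error = f"attempt {attempt}: {exc}"
    return "failure", last_error


def route(items: List[str], handler: Callable[[str], object], max_retries: int = 3) -> Dict[str, List[Tuple[str, object]]]:
    # Failed items are kept with their error, so nothing fails silently.
    routed: Dict[str, List[Tuple[str, object]]] = {"success": [], "failure": []}
    for item in items:
        relationship, payload = process_with_retry(item, handler, max_retries)
        routed[relationship].append((item, payload))
    return routed


result = route(["1", "x", "3"], int)
print(result["success"])
print(result["failure"])
```

A production version would usually add a delay between attempts and retry only errors that are likely to be transient; both are omitted here to keep the sketch short.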

Apache NiFi vs Traditional ETL Tools

| Feature | Apache NiFi | Traditional ETL |
| --- | --- | --- |
| Development Approach | Visual, low-code | Code-heavy |
| Real-Time Processing | Strong support | Limited |
| Data Provenance | Built-in | Often limited |
| Flexibility | High | Moderate |

NiFi is particularly effective for real-time data flows and streaming use cases, while traditional ETL tools may still be suitable for batch-heavy workloads.

When to Consider Apache NiFi Development Services

While NiFi is user-friendly, enterprise-grade implementations often require expertise.

Businesses typically seek Apache NiFi Development Services when:

- Building large-scale data ecosystems

- Integrating multiple data sources

- Ensuring compliance and security

- Optimizing performance for high throughput

An experienced team can help design robust pipelines and avoid costly mistakes.

Conclusion

Apache NiFi offers a powerful and flexible way to design modern data pipelines. Its visual interface, strong data lineage tracking, and scalability make it a preferred choice for organizations handling complex data workflows.

The key to success lies in thoughtful architecture, clear organization, and adherence to best practices. From modular design to proactive monitoring, every decision contributes to the reliability and performance of your pipelines.

For businesses aiming to unlock the full potential of their data infrastructure, partnering with an experienced Apache NiFi development company can support not only smooth implementation but also long-term scalability and efficiency.
