Introduction

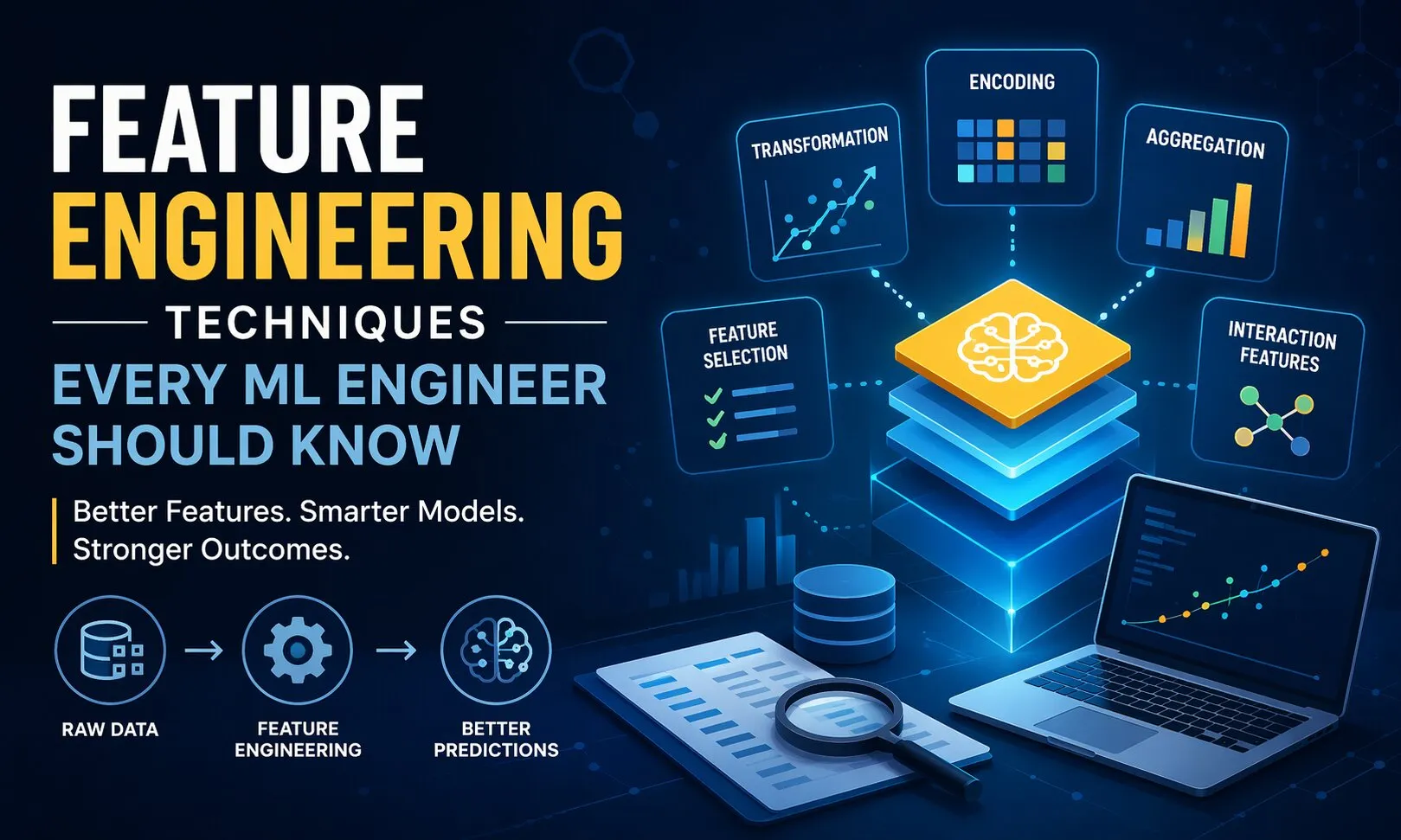

A machine learning model is only as good as the data it learns from and, more importantly, how that data is shaped. Many high-performing ML systems don’t rely on complex algorithms alone; they succeed because of carefully engineered features that capture meaningful patterns.

Across top-ranking industry blogs and resources, one thing is consistent: feature engineering is often the silent differentiator between an average model and a production-ready solution. Yet most content barely scratches the surface, focusing on definitions rather than practical application.

This guide goes deeper. It breaks down essential feature engineering techniques, explains when to use them, and illustrates how they impact real-world ML outcomes, especially in business-driven environments.

Why Feature Engineering Still Matters

Even with the rise of automated ML and deep learning, feature engineering remains critical in:

- Structured/tabular data problems (finance, healthcare, retail)

- Improving model interpretability

- Reducing overfitting

- Enhancing training efficiency

Case Insight:

A fintech company improved its fraud detection accuracy by 18% simply by creating time-based behavioral features, without changing the model.

Core Feature Engineering Techniques

1. Handling Missing Data Intelligently

Missing values are inevitable, but how you handle them defines model reliability.

Common Approaches:

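- Mean/median imputation (for numerical features; the median is more robust to outliers)

- Mode imputation (for categorical features)

- Predictive imputation (estimating missing values from the other features with a model, such as k-nearest neighbors or regression)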

Best Practice:

Avoid blindly filling missing values; first understand why the data is missing. Sometimes missingness itself is a signal, and a binary “was missing” indicator column preserves it for the model.
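A minimal sketch of median imputation that also keeps missingness as a feature, written in plain Python (standard library only) so it runs anywhere. The function name `impute_with_indicator` and the sample `incomes` list are hypothetical. In a real pipeline, the median would be computed on the training split only and then reused for validation and test data.

```python
from statistics import median

def impute_with_indicator(values):
    """Fill None entries with the median of the observed values.

    Returns (filled_values, was_missing) so the model still sees
    which rows were originally missing.
    """
    observed = [v for v in values if v is not None]
    fill_value = median(observed)  # less sensitive to outliers than the mean
    filled = [fill_value if v is None else v for v in values]
    was_missing = [1 if v is None else 0 for v in values]  # binary indicator feature
    return filled, was_missing

# Hypothetical example: annual income with two missing entries
incomes = [42_000, None, 55_000, 61_000, None, 38_000]
filled, was_missing = impute_with_indicator(incomes)
print(filled)       # [42000, 48500.0, 55000, 61000, 48500.0, 38000]
print(was_missing)  # [0, 1, 0, 0, 1, 0]
```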

2. Encoding Categorical Variables

Most machine learning models can’t consume text labels directly. Encoding transforms categories into numerical representations.

Techniques:

- One-Hot Encoding: Works well for low-cardinality features

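- Label Encoding: Useful for ordinal relationships, provided the integer codes follow the categories’ natural order (e.g., low < medium < high)

- Target Encoding: Well suited to high-cardinality features, but it must be fitted on training data only (ideally out-of-fold, often with smoothing) to avoid target leakage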

Example:

In e-commerce, encoding product categories properly can significantly influence recommendation engines.
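A standard-library sketch of the three encodings. `fit_target_encoding` uses one common smoothing scheme (each category’s target mean is blended with the global mean, weighted by a `smoothing` count); that scheme, the function names, and the toy data are assumptions for illustration. To respect the leakage caution above, the mapping is fitted on training rows only and then applied to new rows, with unseen categories falling back to the global mean.

```python
from collections import defaultdict

def one_hot(values):
    """One binary column per distinct category (sorted for a stable column order)."""
    categories = sorted(set(values))
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return categories, rows

def ordinal_encode(values, order):
    """Map each category to its position in an explicit, meaningful order."""
    rank = {category: i for i, category in enumerate(order)}
    return [rank[v] for v in values]

def fit_target_encoding(train_categories, train_target, smoothing=1.0):
    """Smoothed per-category target mean, fitted on training rows only.

    Rare categories are pulled more strongly toward the global mean.
    """
    global_mean = sum(train_target) / len(train_target)
    sums, counts = defaultdict(float), defaultdict(int)
    for category, y in zip(train_categories, train_target):
        sums[category] += y
        counts[category] += 1
    mapping = {
        c: (sums[c] + smoothing * global_mean) / (counts[c] + smoothing)
        for c in counts
    }
    return mapping, global_mean

cats, rows = one_hot(["red", "blue", "red"])
print(cats, rows)  # ['blue', 'red'] [[0, 1], [1, 0], [0, 1]]
print(ordinal_encode(["low", "high", "medium"], ["low", "medium", "high"]))  # [0, 2, 1]
mapping, fallback = fit_target_encoding(["a", "a", "b", "b"], [1, 1, 0, 0], smoothing=2.0)
print(mapping, mapping.get("unseen", fallback))  # {'a': 0.75, 'b': 0.25} 0.5
```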

4. Feature Transformation Techniques

Transformations reshape a feature’s distribution or derive new terms from it so that the data better suits the model’s assumptions.

Popular Transformations:

- Log transformation (for skewed data)

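- Box-Cox transformation (a generalization of the log transform for strictly positive data)

- Polynomial features (squares and interaction terms)

Log and Box-Cox transformations reduce skew and dampen extreme values, while polynomial features let linear models capture non-linear relationships and interactions.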
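The three transformations can be sketched with the standard library. Box-Cox is defined as (x^λ - 1) / λ for λ ≠ 0 and ln x for λ = 0, for strictly positive x. Here λ is passed in by hand as a simplifying assumption; in practice it is usually estimated by maximum likelihood (for example by `scipy.stats.boxcox`). `polynomial_features` follows the same idea as scikit-learn’s `PolynomialFeatures` but omits its bias column; all function names are illustrative.

```python
import math
from itertools import combinations_with_replacement

def log_transform(values):
    """log(1 + x): compresses large values and handles zeros (requires x > -1)."""
    return [math.log1p(v) for v in values]

def box_cox(values, lam):
    """Box-Cox with a hand-picked lambda; inputs must be strictly positive."""
    if any(v <= 0 for v in values):
        raise ValueError("Box-Cox requires strictly positive values")
    if lam == 0:
        return [math.log(v) for v in values]
    return [(v ** lam - 1) / lam for v in values]

def polynomial_features(row, degree=2):
    """Original features plus all products up to `degree` (squares and interactions)."""
    out = list(row)
    for d in range(2, degree + 1):
        for combo in combinations_with_replacement(range(len(row)), d):
            out.append(math.prod(row[i] for i in combo))
    return out

print(log_transform([0, 9, 99]))    # ln(1), ln(10), ln(100)
print(box_cox([1, 4, 9], lam=0.5))  # [0.0, 2.0, 4.0]
print(polynomial_features([2, 3]))  # [2, 3, 4, 6, 9]: x1, x2, x1², x1·x2, x2²
```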

5. Feature Selection Methods

Not all features add value. Some introduce noise or redundancy.

Key Techniques:

- Filter Methods: Correlation, Chi-square

- Wrapper Methods: Recursive Feature Elimination (RFE)

- Embedded Methods: Selection built into model training, such as L1 (Lasso) regularization or tree-based feature importance

Business Impact:

Removing irrelevant features speeds up training and improves model interpretability, which is critical for enterprise adoption.
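A filter method can be sketched in a few lines: score each feature by its absolute Pearson correlation with the target and keep the top k. The column names and values are hypothetical toy data, and correlation only captures linear relationships. For chi-square scoring and wrapper methods, scikit-learn provides `SelectKBest` (with `chi2`) and `RFE`.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    # A constant column has zero spread; treat it as uncorrelated.
    return cov / (spread_x * spread_y) if spread_x and spread_y else 0.0

def select_by_correlation(features, target, k):
    """Filter method: keep the k features with the largest |correlation| to the target."""
    scores = {name: abs(pearson(column, target)) for name, column in features.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]

# Hypothetical toy data: five customers, churned = 1
features = {
    "tenure_days": [10, 200, 35, 400, 90],
    "support_calls": [5, 1, 4, 0, 3],
    "random_noise": [3, 5, 0, 1, 3],
}
churned = [1, 0, 1, 0, 1]
print(select_by_correlation(features, churned, k=2))  # ['support_calls', 'tenure_days']
```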

6. Time-Based Feature Engineering

For time-series or event-driven data, time plays a crucial role.

Examples:

- Extracting calendar components (day of week, month, hour)

- Creating lag features (values from previous time steps)

- Rolling averages (means over a trailing window)
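A minimal sketch of these three time-based features using only the standard library; the function names and the `daily_orders` series are hypothetical. This rolling mean includes the current value, so when the feature is used to predict that same time step it should be computed from lagged values instead, to avoid leakage.

```python
from datetime import datetime

def calendar_features(ts):
    """Calendar components of a timestamp (Monday is day_of_week 0)."""
    return {"day_of_week": ts.weekday(), "month": ts.month, "hour": ts.hour}

def lag(series, k=1):
    """Value from k steps earlier (k >= 1); None where no history exists yet."""
    return [None] * k + series[:-k]

def rolling_mean(series, window):
    """Mean of the trailing `window` values, including the current one;
    None until the window is full."""
    out = []
    for i in range(len(series)):
        if i + 1 < window:
            out.append(None)
        else:
            out.append(sum(series[i + 1 - window : i + 1]) / window)
    return out

daily_orders = [12, 15, 9, 21, 18]  # hypothetical daily order counts
print(calendar_features(datetime(2024, 3, 15, 17, 30)))  # {'day_of_week': 4, 'month': 3, 'hour': 17}
print(lag(daily_orders, 1))           # [None, 12, 15, 9, 21]
print(rolling_mean(daily_orders, 3))  # [None, None, 12.0, 15.0, 16.0]
```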

Case Insight:

A logistics company reduced delivery delays by analyzing time-based patterns such as peak hours and seasonal variations.

7. Handling Imbalanced Data with Feature Engineering

In problems like fraud detection or disease prediction, class imbalance is common.

Techniques:

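- Creating ratio-based features (e.g., a transaction amount relative to the customer’s average)

- Combining domain knowledge with feature creation

- Using SMOTE (Synthetic Minority Over-sampling Technique) alongside engineered features, applied to the training data only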
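A sketch of two of these ideas: a ratio feature (a transaction amount relative to the customer’s own average) and a stripped-down version of SMOTE’s core step, which creates synthetic minority samples by interpolating between a minority point and one of its nearest minority neighbors. Function names and data are hypothetical, and picking the base point at random is a simplification of the original algorithm. For real work, the `SMOTE` class in the imbalanced-learn library is the usual choice, and resampling should happen after the train/test split, on the training data only.

```python
import math
import random

def amount_to_avg_ratio(amount, customer_history):
    """Ratio feature: this transaction's amount relative to the customer's average."""
    avg = sum(customer_history) / len(customer_history)
    return amount / avg if avg else 0.0

def smote(minority, n_new, k=2, seed=0):
    """Minimal SMOTE sketch: each synthetic sample lies on the line segment
    between a random minority sample and one of its k nearest minority neighbors."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples")
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        neighbors = sorted(
            (p for p in minority if p is not base),
            key=lambda p: math.dist(base, p),
        )[:k]
        neighbor = rng.choice(neighbors)
        gap = rng.random()  # uniform in [0, 1)
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbor)])
    return synthetic

print(amount_to_avg_ratio(300, [100, 120, 80]))  # 3.0
# Hypothetical fraud cases described by two engineered features
fraud_cases = [[1.2, 0.8], [1.5, 1.1], [0.9, 0.7]]
print(smote(fraud_cases, n_new=2))
```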

Comparing Manual vs Automated Feature Engineering

| Aspect | Manual Feature Engineering | Automated Feature Engineering |
|---|---|---|
| Control | High | Moderate |
| Speed | Slower | Faster |
| Interpretability | Strong | Sometimes limited |
| Use Case | Domain-specific problems | Large-scale ML pipelines |

Takeaway:

Automation helps, but domain-driven feature engineering still provides a competitive edge.

Real-World Example: Customer Churn Prediction

Let’s break down how feature engineering impacts a real use case:

Raw Data:

- Customer ID

- Subscription date

- Last activity date

- Purchase history

Engineered Features:

- Customer lifetime (days)

- Average purchase value

- Time since last activity

- Engagement frequency

Result:

These engineered features typically improve churn prediction accuracy significantly compared to raw inputs, because identifiers and raw dates carry little signal on their own.
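The step from raw columns to engineered features can be sketched as a single function. The `as_of` snapshot date, the `(date, amount)` purchase format, and the definition of engagement frequency as purchases per 30 days of lifetime are assumptions for illustration; the example does not specify them. Only purchases before `as_of` should be passed in, so the features never see the future.

```python
from datetime import date

def churn_features(subscription_date, last_activity_date, purchases, as_of):
    """Turn raw customer fields into churn features.

    `purchases` is a list of (purchase_date, amount) tuples (assumed format);
    `as_of` is the snapshot date the features are computed for.
    """
    lifetime_days = (as_of - subscription_date).days
    days_since_last_activity = (as_of - last_activity_date).days
    amounts = [amount for _, amount in purchases]
    avg_purchase_value = sum(amounts) / len(amounts) if amounts else 0.0
    # Assumed definition: number of purchases per 30 days of customer lifetime
    engagement_per_30_days = (
        len(purchases) * 30 / lifetime_days if lifetime_days > 0 else 0.0
    )
    return {
        "lifetime_days": lifetime_days,
        "days_since_last_activity": days_since_last_activity,
        "avg_purchase_value": avg_purchase_value,
        "engagement_per_30_days": engagement_per_30_days,
    }

# Hypothetical customer snapshot
customer_features = churn_features(
    subscription_date=date(2024, 1, 1),
    last_activity_date=date(2024, 5, 20),
    purchases=[
        (date(2024, 1, 10), 40.0),
        (date(2024, 3, 2), 60.0),
        (date(2024, 5, 20), 80.0),
    ],
    as_of=date(2024, 6, 29),
)
print(customer_features)
# {'lifetime_days': 180, 'days_since_last_activity': 40, 'avg_purchase_value': 60.0, 'engagement_per_30_days': 0.5}
```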

Best Practices for Feature Engineering

- Start with domain understanding, not tools

- Avoid data leakage at all costs: fit imputers, encoders, and scalers on training data only

- Validate features using cross-validation

- Document feature logic for reproducibility

- Continuously monitor feature performance in production
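Leakage avoidance and cross-validation meet in target encoding: if a row’s own label is used to compute its encoded value, the feature quietly leaks the answer. A minimal out-of-fold sketch follows; the function name is hypothetical, and assigning folds by row index modulo `n_folds` is a simplifying assumption (real pipelines usually shuffle or stratify). scikit-learn’s `TargetEncoder` applies a similar cross-fitting idea.

```python
from collections import defaultdict

def out_of_fold_target_encode(categories, target, n_folds=3):
    """Target-encode every row using only rows from the *other* folds,
    so no row's own label leaks into its feature value."""
    n = len(categories)
    encoded = [0.0] * n
    for fold in range(n_folds):
        # Assumed fold assignment: row index modulo n_folds (no shuffling)
        train_idx = [i for i in range(n) if i % n_folds != fold]
        held_out_idx = [i for i in range(n) if i % n_folds == fold]
        global_mean = sum(target[i] for i in train_idx) / len(train_idx)
        sums, counts = defaultdict(float), defaultdict(int)
        for i in train_idx:
            sums[categories[i]] += target[i]
            counts[categories[i]] += 1
        for i in held_out_idx:
            c = categories[i]
            # Categories never seen in the training folds fall back to the global mean
            encoded[i] = sums[c] / counts[c] if counts[c] else global_mean
    return encoded

print(out_of_fold_target_encode(["x", "y", "y"], [1, 0, 1]))  # [0.5, 1.0, 0.0]
```

Each `y` row is encoded from the other `y` row’s label, not its own; a naive in-sample encoding would have given both rows 0.5 and let each row partly see its own label.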

Conclusion: Turning Data into Business Value

Feature engineering is where business understanding meets machine learning expertise. It’s not just about transforming data; it’s about extracting meaningful signals that drive decisions.

For organizations aiming to scale intelligent systems, investing in the right feature engineering strategy is non-negotiable. This is where partnering with a Top AI/ML Consulting Company becomes valuable, bringing domain expertise, structured methodologies, and proven frameworks.

Whether you're building predictive models, recommendation systems, or automation pipelines, robust AI & ML Solutions rely heavily on well-engineered features. And with the growing demand for production-ready models, leveraging expert-led AI & ML Development Services ensures that your data translates into measurable outcomes.
