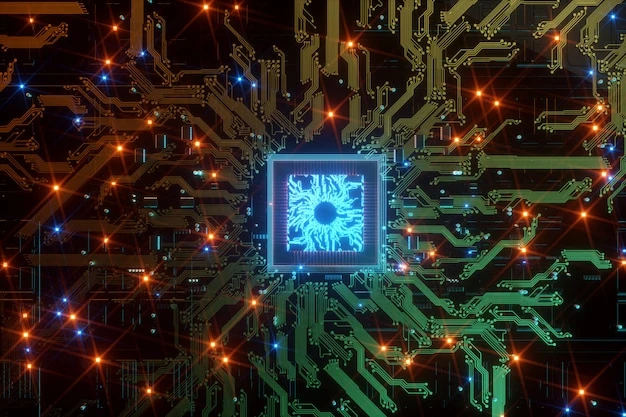

1. The rise of domain-specific silicon

In the era of accelerating compute demands, general-purpose processors are increasingly challenged by the sheer scale of data, AI workloads, and the power/thermal constraints of modern data-centres. Custom silicon enables organisations to tailor architectures, optimise power/performance, and embed domain-specific accelerators for high-performance computing (HPC) and AI inference. A specialist such as Cyient Semiconductors positions itself to design and deliver custom ASICs covering power-management, high-speed connectivity, mixed-signal blocks and compute accelerators that meet the unique needs of data-intensive infrastructure.

2. Why custom silicon matters in HPC, AI and data-centre environments

High-performance computing, AI workloads and data-centres place heavy demands on silicon: massive parallelism, low latency, high bandwidth, stringent power and thermal envelopes, and long operational lifetimes. In such environments, custom silicon provides critical advantages:

2.1 Tailored compute accelerators

Off-the-shelf chips can perform many tasks, but when workloads demand tens or hundreds of TOPS (tera operations per second), custom ASICs optimised for deep-learning inference or high-throughput compute become essential. These architectures integrate accelerators, memory controllers, and specialised data-paths that standard processors cannot match.

2.2 High-speed connectivity and interconnect scaling

Data-centre infrastructure requires high-bandwidth interconnects: chip-to-chip links, optical coherent communication, high-speed SerDes, and low-latency fabric. Custom ASICs allow designers to embed advanced mixed-signal blocks, SerDes lanes, equalisation logic and bandwidth-optimised analog/digital blocks directly into the die. One cited example is a high-speed 3 nm ASIC for coherent optical communications supporting big-data processing across metro, campus and data-centre interconnects.

2.3 Power and thermal efficiency at scale

In data-centres, energy cost and thermal management are major considerations. Custom silicon enables power and system management solutions such as custom PMICs, voltage-regulators, domain-isolation, and dynamic power control that tightly align with the compute fabric. This tight alignment helps reduce overall power draw and improve sustainability of large-scale deployments.

2.4 System integration and lifecycle readiness

Data centres and AI infrastructure demand long lifecycle support, variant scaling, reliability, supply-chain readiness and manufacturing maturity. Custom ASIC development supports these through architecture tailored for manufacturability, testability, low-voltage retention modes, robust IP reuse and supply-chain-ready infrastructure.

3. End-to-end success through expert chip design services

Achieving the performance, power and scale goals of HPC/AI silicon requires a partner capable of delivering full-flow development. Firms providing comprehensive chip design services cover system architecture, analog/mixed-signal design, digital logic, layout, verification, DFT, post-silicon validation, packaging, manufacturing ramp and supply-chain support. Leveraging such services enables organisations to focus on system and algorithm differentiation while the silicon foundation is executed by domain-expert engineering teams.

4. Key architecture and implementation strategies for HPC/AI silicon

4.1 Define system-level architecture with compute/task partitioning

At the outset, architects must characterise the workload: operation count, memory bandwidth, I/O requirements, latency tolerance and power budget. Partitioning the architecture involves defining accelerator cores, memory hierarchies, interface blocks, data-paths, domain isolation and retentive power modes. Early architecture definition ensures that IP selection, floor-planning, and verification flow are aligned.
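As a rough illustration of this kind of workload characterisation, the sketch below applies the standard roofline bound (attainable throughput is the lesser of peak compute and memory bandwidth multiplied by arithmetic intensity). The class name, field names and all numbers are hypothetical and chosen only for the example; they do not describe any real device or workload.

```python
from dataclasses import dataclass


@dataclass
class WorkloadBudget:
    """Hypothetical workload and candidate-silicon figures (illustrative only)."""
    ops_per_inference: float    # operations needed per inference
    bytes_per_inference: float  # bytes moved to/from external memory per inference
    peak_tops: float            # candidate accelerator peak, in tera-ops per second
    mem_bandwidth_gbs: float    # candidate memory bandwidth, in GB/s


def attainable_tops(w: WorkloadBudget) -> float:
    """Roofline bound: min(peak compute, bandwidth * arithmetic intensity)."""
    intensity = w.ops_per_inference / w.bytes_per_inference  # ops per byte
    bandwidth_bound_tops = w.mem_bandwidth_gbs * 1e9 * intensity / 1e12
    return min(w.peak_tops, bandwidth_bound_tops)


def min_latency_ms(w: WorkloadBudget) -> float:
    """Lower bound on per-inference latency at the attainable rate."""
    return w.ops_per_inference / (attainable_tops(w) * 1e12) * 1e3


# Assumed example figures, not measurements.
example = WorkloadBudget(
    ops_per_inference=8e9,
    bytes_per_inference=4e7,
    peak_tops=100.0,
    mem_bandwidth_gbs=400.0,
)

if __name__ == "__main__":
    print(f"attainable: {attainable_tops(example):.1f} TOPS")
    print(f"latency floor: {min_latency_ms(example):.3f} ms")
```

With these assumed figures the design is memory-bound rather than compute-bound, which is the sort of finding that would steer partitioning toward a deeper memory hierarchy rather than more accelerator cores.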

4.2 Reusable silicon IP and modular sub-systems

High-performance ASICs benefit from re-using proven IP (accelerator cores, SerDes, PMICs, high-speed analog blocks and memory controllers) to reduce risk, improve verification coverage and shorten the schedule. Modules with silicon heritage accelerate development and reduce surprises in layout, timing closure and yield.

4.3 Mixed-signal, analog, high-speed interface co-design

Custom HPC/AI silicon often combines digital logic with high-speed analog/SerDes blocks, mixed-voltage domains, clock-domain crossings and tight analog/digital coupling. The architecture must integrate analog front-ends, equalisation, calibration loops, analog power islands and high-speed data converters. Layout and verification must anticipate noise, crosstalk, power-delivery integrity and mixed-domain interactions.

4.4 Power-domain and voltage-island planning

Advanced ASICs for data-centres must support active compute, retention, sleep, wake-up modes and multiple voltage domains. Strategic power-island design, domain isolation, dynamic voltage/frequency scaling (DVFS), and thermal management are foundational. By aligning architecture with these constraints early, the implementation is more likely to meet power and thermal targets.
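To make the DVFS trade-off concrete, the following minimal sketch uses the first-order CMOS switching-power relation (dynamic power is proportional to activity × capacitance × voltage² × frequency). The operating points, activity factor and switched capacitance are assumed values for illustration, and leakage power is deliberately ignored.

```python
from typing import Optional


def dynamic_power_w(activity: float, cap_farads: float,
                    vdd: float, freq_hz: float) -> float:
    """First-order CMOS switching power: alpha * C * V^2 * f (leakage ignored)."""
    return activity * cap_farads * vdd ** 2 * freq_hz


# Hypothetical operating points: name -> (supply voltage in V, clock in Hz).
OPERATING_POINTS = {
    "turbo": (0.90, 2.0e9),
    "nominal": (0.75, 1.5e9),
    "eco": (0.60, 1.0e9),
}


def pick_operating_point(power_budget_w: float,
                         activity: float = 0.2,
                         cap_farads: float = 1e-7) -> Optional[str]:
    """Return the fastest operating point whose dynamic power fits the budget."""
    fitting = [
        (freq, name)
        for name, (vdd, freq) in OPERATING_POINTS.items()
        if dynamic_power_w(activity, cap_farads, vdd, freq) <= power_budget_w
    ]
    if not fitting:
        return None  # no point fits; the domain would have to be gated or retained
    return max(fitting)[1]


if __name__ == "__main__":
    for budget in (40.0, 20.0, 5.0):
        print(budget, "W ->", pick_operating_point(budget))
```

Because voltage enters quadratically, lowering voltage and frequency together cuts power much faster than it cuts throughput, which is why voltage islands and DVFS are usually planned at the architecture stage rather than retrofitted.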

4.5 Verification, test-program readiness and manufacturing ramp

Verification flows must include functional, timing, analog/digital interaction, mixed-signal co-simulation, multi-corner analysis, and yield modelling. Test program development (DFT, BIST, scan, parametric measurement, board-level debug) must be planned in parallel. Manufacturing readiness includes packaging, board-level integration, supply-chain qualification, variant scalability and field reliability profiling.
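The yield-modelling step can be illustrated with the classic Poisson die-yield approximation, Y = exp(-A × D0), where A is die area and D0 is defect density. This is one simple textbook model, not necessarily the one any particular foundry or design team uses, and the function names and inputs below are hypothetical.

```python
import math


def poisson_yield(die_area_cm2: float, defect_density_per_cm2: float) -> float:
    """Poisson die-yield approximation: Y = exp(-A * D0)."""
    return math.exp(-die_area_cm2 * defect_density_per_cm2)


def good_dies_per_wafer(gross_dies: int, die_area_cm2: float,
                        defect_density_per_cm2: float) -> int:
    """Expected good dies, rounded down, from a gross die count."""
    return math.floor(
        gross_dies * poisson_yield(die_area_cm2, defect_density_per_cm2)
    )


if __name__ == "__main__":
    # Assumed inputs: 1 cm^2 versus 4 cm^2 dies at 0.1 defects per cm^2.
    for area in (1.0, 4.0):
        print(f"{area} cm^2 -> yield {poisson_yield(area, 0.1):.3f}")
```

The exponential dependence on area is one reason large infrastructure dies are sensitive to process maturity, and why yield estimates belong in the plan alongside test-program development.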

5. Business impact and strategic value

5.1 Time-to-market advantage

Custom silicon with aligned design and full-flow execution enables companies to bring differentiated products to market faster, especially in rapidly evolving AI/data-centre markets. The ability to build optimized silicon supports market leadership and accelerates adoption by hyperscalers and infrastructure providers.

5.2 Cost and energy efficiency at scale

With billions of operations and large server farms, energy consumption and cost per operation matter. Custom ASICs tuned for the workload deliver better performance-per-watt, reduced cooling overhead, and lower infrastructure cost, providing a competitive advantage at scale.

5.3 Differentiation and IP leadership

In AI and cloud infrastructure, what the hardware can enable becomes a competitive differentiator. Custom ASICs let companies embed unique features (accelerator architectures, data-paths, interconnects and mixed-signal integration) that off-the-shelf chips cannot match. This leads to system-level performance gains and market differentiation.

5.4 Lifecycle and supply-chain readiness

Data-centre infrastructure requires production-scale silicon, ramp-ready manufacturing, variant scalability, long-lifetime deployment (5-10 years or more), and global logistics. Full-flow development models help ensure the silicon is production-ready, supply-chain aligned and field-serviceable.

6. Real-world case studies and outcomes

In the high-performance computing and AI domain, Cyient Semiconductors has highlighted solutions such as:

- Custom ASICs for data-centre networking built at advanced nodes such as 3 nm, 5 nm, 7 nm and 16 nm, addressing high-speed data processing and low-power consumption.

- Mixed-signal ASICs enabling high-bandwidth optical interconnects and chip-to-chip communication for data-intensive workloads.

These examples demonstrate how a tailored silicon architecture, combining compute, connectivity and power management, answers the demands of modern infrastructure workloads.

7. Challenges and mitigation in delivering HPC/AI custom silicon

7.1 Complexity of multi-domain integration

HPC/AI chips bring together analog, digital, high-speed SerDes, power-islands, memory hierarchies and packaging constraints. The risk of integration failure is high. Architectural and verification discipline plus IP reuse mitigate this risk.

7.2 Power/thermal constraints

With high compute density, managing power consumption, heat dissipation and cooling becomes vital. Early architecture work must factor in power domains, packaging, the board interface, thermal paths and lessons from system integration.

7.3 Manufacturing scale and yield

High-volume infrastructure chips must ramp reliably. Yield, test-program development, supply-chain readiness and qualification are all critical. Engaging full-flow development models ensures manufacturing readiness and traceability.

7.4 Time and schedule pressure

Competition in the AI/data-centre space intensifies schedule demands. By reusing IP, aligning design flows, integrating packaging and test early, and using an end-to-end partner, time-to-market can be reduced.

8. Future outlook for custom silicon in HPC/AI

As AI models grow larger, data-centres expand, and workloads diversify, the role of custom ASICs will continue to grow. Hardware-aware architectures, chiplets, 2.5D/3D integration, optical interconnects, embedded AI accelerators and power-efficient compute fabrics will define the next generation of infrastructure silicon. Companies capable of delivering full-flow custom silicon will lead this transformation.

9. Conclusion: From design to deployment in next-gen infrastructure

Delivering custom silicon for high-performance computing, data-centres and AI workloads is not just about designing logic; it is about solving the full system challenge: compute, connectivity, power, integration, manufacturing and scale. By embracing architecture optimised for the workload, leveraging design flows aligned to production, and partnering with full-service development capabilities, organisations can unlock significant performance, efficiency and differentiation. Custom ASICs become the technology foundation for next-generation infrastructure and system-level leadership.
