With machine learning driving vital decisions in healthcare, finance, law enforcement, and self-driving cars, one question must be asked: how can we trust these models when we do not know how they work? Enter Explainable AI (XAI), a field of artificial intelligence concerned with making machine learning models transparent, interpretable, and understandable to humans.

Understanding XAI is no longer optional; it is a necessity, especially for businesses, regulators, and learners. Whether you're a data scientist, a policymaker, or a student taking a machine learning course in Canada, grasping the concepts behind explainability can empower you and may make the difference between your model being accepted and being discarded.

Why Trust Matters in Machine Learning

Consider a situation in which a machine learning model rejects a person's loan application. A black-box model offers no information about the reasons behind that decision, and this lack of transparency breeds mistrust. When models make crucial decisions that affect users, customers, and regulators, there must be a way to explain their predictions in plain, human-readable language.

This is why demand for explainable models is growing. Organizations worldwide are adopting XAI practices not only for compliance and fairness but also to improve performance and user trust. Educational programs are likewise integrating these principles; a machine learning course in Canada, for example, may also cover the ethics, accountability, and transparency of models.

Real-World Use Cases of XAI

1. Healthcare

In medical diagnosis, confidence in a model's prediction is essential. When a model suggests a treatment or flags a disease, physicians must understand why. For example, SHAP values can let doctors visualize which symptoms or test results had the most influence on the model's output, helping them reach better-informed medical conclusions.
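To make the SHAP idea more concrete, here is a minimal sketch that computes exact Shapley values by brute force for a tiny risk-scoring function. The feature names, weights, and baseline patient are hypothetical, and features left out of a coalition are filled in from the baseline, which is one common convention. This is the textbook Shapley definition that SHAP approximates, not the shap library's own implementation.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values of model(x) relative to model(baseline).

    Features outside a coalition take their baseline value. All 2**n
    coalitions are enumerated, so this only suits a handful of features.
    """
    n = len(x)

    def value(coalition):
        # Use x for features in the coalition and the baseline for the rest.
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight: |S|! * (n - |S| - 1)! / n!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical risk score with an interaction between glucose and BMI.
def risk_score(z):
    glucose, bmi, age = z
    return 0.02 * glucose + 0.05 * bmi + 0.01 * age + 0.001 * glucose * bmi


features = ["glucose", "bmi", "age"]
patient = [160.0, 32.0, 58.0]
baseline = [100.0, 25.0, 50.0]  # hypothetical "typical patient"

phi = shapley_values(risk_score, patient, baseline)
for name, contribution in sorted(zip(features, phi), key=lambda t: -abs(t[1])):
    print(f"{name:>8}: {contribution:+.3f}")
```

The attributions sum to the difference between the patient's score and the baseline score, so a clinician can read each one as how far that input moved this prediction away from a typical case. The interaction term is split between glucose and BMI rather than assigned to either alone.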

2. Finance

Machine learning plays a central role in credit scoring, fraud detection, and algorithmic trading. Regulators often require financial institutions to give customers reasons for automated decisions. XAI tools help institutions meet these requirements while also improving internal decision-making.
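One common way to turn a scoring model into customer-facing reasons is to rank each feature's contribution to the score and report the ones that hurt the applicant most. The sketch below assumes a hypothetical linear credit model; the weights, feature names, and reference applicant are invented for illustration. For a linear model, weight × (applicant value − reference value) is exactly that feature's contribution relative to the reference.

```python
from typing import Dict, List, Tuple

# Hypothetical linear credit model: positive weights raise the score.
WEIGHTS: Dict[str, float] = {
    "income_k": 0.8,              # annual income, in thousands
    "debt_to_income": -120.0,     # ratio between 0 and 1
    "late_payments": -25.0,       # count over the last two years
    "credit_history_years": 4.0,
}

# Hypothetical reference applicant that explanations are measured against.
REFERENCE: Dict[str, float] = {
    "income_k": 60.0,
    "debt_to_income": 0.30,
    "late_payments": 0.0,
    "credit_history_years": 8.0,
}


def contributions(applicant: Dict[str, float]) -> Dict[str, float]:
    """Per-feature contribution to the score, relative to REFERENCE."""
    return {f: w * (applicant[f] - REFERENCE[f]) for f, w in WEIGHTS.items()}


def reason_codes(applicant: Dict[str, float], top_k: int = 2) -> List[Tuple[str, float]]:
    """Return the top_k features that lowered the score the most."""
    negative = [(f, c) for f, c in contributions(applicant).items() if c < 0]
    negative.sort(key=lambda item: item[1])  # most negative first
    return negative[:top_k]


applicant = {
    "income_k": 55.0,
    "debt_to_income": 0.52,
    "late_payments": 2.0,
    "credit_history_years": 3.0,
}
for feature, delta in reason_codes(applicant):
    print(f"{feature} lowered the score by {abs(delta):.1f} points")
```

The choice of reference matters: the same applicant can receive different reasons if the reference changes, so it should be chosen deliberately and documented.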

3. Legal Systems

Recidivism-prediction models and predictive policing can affect an individual's freedom. Without transparency, such tools can be hazardous and discriminatory. XAI opens these models to scrutiny and makes them accountable, which is particularly important in systems that directly affect human rights and freedom.

Understanding how XAI is used across industries is an essential part of advanced training programs. If you want to dive deeper, you can enroll in a machine learning course in Canada and gain the practical and theoretical experience needed to apply these methods in real life.

The Ethics Behind Explainability

Explainability is closely connected with ethics and fairness. Biased data can lead to biased predictions, and when a model is opaque, these issues can be hard to identify or correct. Beyond explaining the rationale behind decisions, XAI can help surface implicit biases in the training data and the model specification.
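A simple first check for the kind of bias described above is to compare how often a model gives the favorable outcome to different groups. The sketch below computes per-group selection rates, the gap between them (demographic parity difference), and their ratio, using hypothetical predictions and group labels. These are only two of many fairness metrics, and a gap on its own does not explain where the bias comes from.

```python
from collections import defaultdict
from typing import Dict, Sequence


def selection_rates(predictions: Sequence[int], groups: Sequence[str]) -> Dict[str, float]:
    """Fraction of favorable (1) predictions within each group."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def parity_report(predictions: Sequence[int], groups: Sequence[str]) -> Dict[str, object]:
    rates = selection_rates(predictions, groups)
    lowest, highest = min(rates.values()), max(rates.values())
    return {
        "rates": rates,
        # Demographic parity difference: gap between the highest and lowest rate.
        "parity_difference": highest - lowest,
        # Ratio of the lowest to the highest rate; values well below 1 deserve a closer look.
        "impact_ratio": lowest / highest if highest > 0 else float("nan"),
    }


# Hypothetical model outputs (1 = approved) for applicants in groups "A" and "B".
preds = [1, 1, 0, 1, 1, 0, 1, 0, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "A", "A", "B", "B", "B", "B", "B", "B"]

report = parity_report(preds, groups)
print(report)
```

Here group A is approved twice as often as group B. For comparison, the "four-fifths rule" used in U.S. employment-selection guidance treats a ratio below 0.8 as a possible sign of adverse impact.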

This is especially relevant in jurisdictions where regulations such as the EU's General Data Protection Regulation (GDPR) and Canada's proposed Artificial Intelligence and Data Act (AIDA) address transparency and fairness in automated decision-making. Graduates who understand these nuances are likely to be in high demand, and AI and ML courses in Canada are evolving to include units on ethical AI and XAI.

Challenges in Implementing XAI

Although explainability is essential, it comes with costs. Accuracy and interpretability often pull in opposite directions: more interpretable models (e.g., decision trees) can be less accurate than less interpretable ones (e.g., neural networks). On top of that, some XAI tools are computationally expensive, which makes them hard to deploy at scale. Finally, there is a usability problem: explanations must be tailored to the audience, whether a data scientist, a business analyst, or an end user, each of whom needs a different level of detail and abstraction.
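The computational cost is easy to see with Shapley-value explanations: computing them exactly means evaluating the model on every subset of features, 2^n coalitions for n features. A common workaround is to estimate them by sampling random feature orderings and averaging each feature's marginal contribution. The sketch below illustrates that sampling approach; the model, inputs, and sample count are hypothetical, and absent features take a baseline value. Model calls are counted so the cost is visible.

```python
import random
from typing import Callable, List, Sequence, Tuple


def sampled_shapley(
    model: Callable[[List[float]], float],
    x: Sequence[float],
    baseline: Sequence[float],
    n_permutations: int = 200,
    seed: int = 0,
) -> Tuple[List[float], int]:
    """Monte Carlo Shapley estimate: average marginal contributions over random orderings.

    Each permutation costs n + 1 model calls, instead of enumerating 2**n coalitions.
    """
    rng = random.Random(seed)
    n = len(x)
    phi = [0.0] * n
    calls = 0
    for _ in range(n_permutations):
        order = list(range(n))
        rng.shuffle(order)
        z = list(baseline)  # start from the reference input
        prev = model(z)
        calls += 1
        for i in order:
            z[i] = x[i]  # add feature i to the coalition
            current = model(z)
            calls += 1
            phi[i] += current - prev
            prev = current
    return [p / n_permutations for p in phi], calls


def linear_model(z: List[float]) -> float:
    # Hypothetical additive model: feature i's exact Shapley value is w_i * (x_i - baseline_i).
    weights = [0.5, -1.0, 2.0, 0.25]
    return sum(w * v for w, v in zip(weights, z))


x = [4.0, 1.0, 3.0, 8.0]
baseline = [0.0, 0.0, 0.0, 0.0]
estimate, calls = sampled_shapley(linear_model, x, baseline, n_permutations=50)
print(estimate, calls)
```

For an additive model every ordering yields the same marginal contributions, so the estimate here is exact; models with feature interactions give estimates that vary with the sample and tighten as `n_permutations` grows. Sampling only pays off for larger n: four features need just 16 coalition evaluations exhaustively, but 30 features would need 2^30 (over a billion), while the sampled cost grows linearly in n.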

Technical prowess alone cannot overcome these challenges. A multidisciplinary approach that draws on data science, psychology, and ethics is better suited to addressing them, and it is the kind of applied skill set that AI and ML courses in Canada may teach.

The Future of XAI

The next generation of AI will not just consist of models that perform well, but of models that can explain how and why they make their decisions. As AI grows more autonomous and powers everything from self-driving vehicles to personalized learning tools, the ability to explain and trust it becomes essential.

Glass-box models, which are designed with transparency in mind from the start, are already becoming more common. At the same time, there is active research on hybrid models that balance complexity and interpretability. Growing interest in these areas is also shaping academic programs: many organizations offering machine learning courses in Canada are broadening their curricula to cover XAI tools, regulatory compliance, and fairness metrics.

How to Get Started with XAI

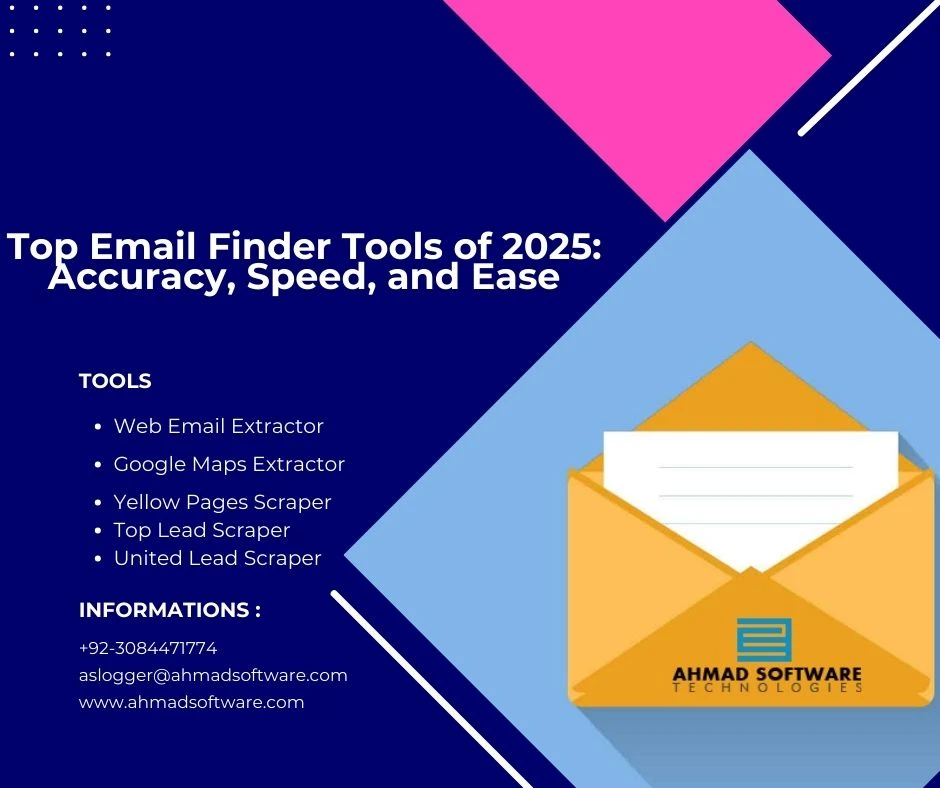

If you are new to the field and unsure where to start, a few steps can help. First, learn the fundamentals of machine learning, including algorithms such as decision trees, random forests, and neural networks. Then study interpretability methods such as LIME, SHAP, and Integrated Gradients, and learn how to apply them to models built with Python libraries such as scikit-learn, TensorFlow, or PyTorch.
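Of the methods listed above, Integrated Gradients is compact enough to sketch without a deep learning framework. It attributes a prediction by averaging the model's gradient along a straight path from a baseline input to the actual input, then multiplying by the difference between the two. The sketch below assumes a hypothetical logistic model whose gradient can be written by hand and approximates the path integral with a midpoint sum; in practice the gradients would come from automatic differentiation in TensorFlow or PyTorch.

```python
import math
from typing import List, Sequence

# Hypothetical logistic model F(x) = sigmoid(w . x + b).
W = [1.5, -2.0, 0.5]
B = 0.1


def sigmoid(t: float) -> float:
    return 1.0 / (1.0 + math.exp(-t))


def model(x: Sequence[float]) -> float:
    return sigmoid(sum(w * v for w, v in zip(W, x)) + B)


def gradient(x: Sequence[float]) -> List[float]:
    # dF/dx_i = s * (1 - s) * w_i, where s = F(x).
    s = model(x)
    return [s * (1.0 - s) * w for w in W]


def integrated_gradients(
    x: Sequence[float], baseline: Sequence[float], steps: int = 200
) -> List[float]:
    """IG_i = (x_i - x'_i) * integral over a in [0, 1] of dF/dx_i(x' + a(x - x')), midpoint rule."""
    n = len(x)
    avg_grad = [0.0] * n
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        for i, g in enumerate(gradient(point)):
            avg_grad[i] += g / steps
    return [(xi - b) * g for xi, b, g in zip(x, baseline, avg_grad)]


x = [2.0, 0.5, 1.0]
baseline = [0.0, 0.0, 0.0]
attributions = integrated_gradients(x, baseline)
print([round(a, 4) for a in attributions])
# Completeness check: attributions should sum to F(x) - F(baseline).
print(round(sum(attributions), 4), round(model(x) - model(baseline), 4))
```

The completeness check is a useful sanity test when implementing or tuning Integrated Gradients: if the attributions do not sum to roughly F(x) − F(baseline), the number of steps is too small or the gradients are wrong.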

Next, explore the ethical implications of AI through case studies of algorithmic bias and fairness. For a well-rounded perspective, consider enrolling in a formal training program: a recognized machine learning course in Canada can provide both the theoretical knowledge and the practical skills of explainable AI. Finally, keep up with the latest findings from major institutions and industry publications, since XAI is developing quickly.

Conclusion

Explainable AI is not merely a technical necessity but a mechanism of trust. As AI systems become further integrated into our lives, the need for interpretability will only grow. Practitioners and researchers should equip themselves with the awareness and the tools to build intelligible, responsible models.

If you want to build a career in ethical and trustworthy AI, now is a good time to consider AI and ML courses in Canada that focus on XAI. With technical expertise and a solid grasp of interpretability, you will be able to lead the development of AI that is not only innovative but also fair, transparent, and human-centered.
