Introduction

Search engines like Google, Bing, and Yahoo process billions of web pages daily to deliver accurate and relevant search results. But how do they discover, analyze, and store website data? The process starts with crawling, in which search engine bots systematically browse the web, and continues with indexing, which organizes the collected information for fast retrieval. So what technology do search engines use to 'crawl' websites? They rely on web crawlers, artificial intelligence (AI), machine learning, and large-scale indexing algorithms to scan and organize web content efficiently. Understanding this technology helps website owners optimize their sites for better rankings and visibility.

How Search Engines Explore the Web: The Crawling and Indexing Process

Search engines follow a structured workflow to scan, store, and rank web content.

1. Crawling: How Search Engines Discover Content

Crawling is the first step in the search engine process. Web crawlers, also known as spiders or bots, navigate the internet to discover and update content.

How Crawling Works:

- Starting the Crawl – Search engine bots access a list of known URLs, such as:

  - Previously indexed web pages.

  - Websites submitted via Google Search Console.

  - Links found on already indexed pages.

- Following Links to Find New Pages – Crawlers move from one page to another by following internal and external links.

- Reading and Analyzing Content – The crawler scans and collects data from:

  - HTML structure (headings, metadata, text, and images).

  - Internal and external links.

  - Schema Markup and structured data.
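The crawl steps above (start from known URLs, follow links, read each page) can be sketched as a breadth-first traversal. This is a minimal illustration, not how Googlebot or any other production crawler is implemented: it assumes a hypothetical in-memory site (`PAGES`, on example.com) in place of real HTTP requests, and the `PageParser` and `crawl` names are illustrative. A real crawler would also fetch pages over HTTP, honor robots.txt, and throttle its requests.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse

# Hypothetical in-memory site standing in for real HTTP responses.
PAGES = {
    "https://example.com/": "<title>Home</title><h1>Welcome</h1>"
    '<a href="/about">About</a> <a href="/blog">Blog</a>',
    "https://example.com/about": '<title>About</title><h1>About us</h1><a href="/">Home</a>',
    "https://example.com/blog": "<title>Blog</title><h1>Blog</h1>"
    '<a href="/blog/post-1#top">First post</a>',
    "https://example.com/blog/post-1": "<title>Post 1</title><h2>Hello</h2>",
}


class PageParser(HTMLParser):
    """Collects the title, headings, and link targets from one HTML page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.headings = []
        self.links = []
        self._current = None  # tag whose text is currently being captured

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)
        elif tag in ("title", "h1", "h2", "h3"):
            self._current = tag

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        if self._current == "title":
            self.title += data
        elif self._current is not None and data.strip():
            self.headings.append(data.strip())


def crawl(seed_urls, fetch, max_pages=100):
    """Breadth-first crawl: start from known URLs and follow links to new ones."""
    frontier = deque(seed_urls)  # URLs waiting to be visited
    seen = set(seed_urls)        # URLs already queued, to avoid revisits
    collected = {}               # URL -> data extracted from that page
    while frontier and len(collected) < max_pages:
        url = frontier.popleft()
        html = fetch(url)
        if html is None:  # unreachable page, e.g. a broken link
            continue
        parser = PageParser()
        parser.feed(html)
        collected[url] = {"title": parser.title, "headings": parser.headings}
        for href in parser.links:
            # Resolve relative links and drop #fragments so each page is queued once.
            absolute, _fragment = urldefrag(urljoin(url, href))
            # Stay on the same host, as a site-level crawl would.
            if urlparse(absolute).netloc == urlparse(url).netloc and absolute not in seen:
                seen.add(absolute)
                frontier.append(absolute)
    return collected


result = crawl(["https://example.com/"], PAGES.get)
for page_url, data in result.items():
    print(page_url, data)
```

Passing `PAGES.get` as the `fetch` function keeps the sketch offline; replacing it with a function that performs HTTP requests would turn it into a very basic real crawler.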

2. Indexing: How Search Engines Organize Data

Once a page is crawled, the search engine analyzes its content and stores it in a massive database known as the search index. Only indexed pages are eligible to appear in search results; ranking algorithms then decide where each indexed page appears for a given query.
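A search index is commonly built around an inverted index: a map from each word to the pages that contain it, so a query can be answered without rescanning every page. The toy version below is a sketch under simplifying assumptions (tiny hypothetical documents, exact-word matching, no ranking); production indexes typically also store word positions, handle stemming and synonyms, and are distributed across many machines.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(docs):
    """Map each term to the set of document IDs that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in tokenize(text):
            index[term].add(doc_id)
    return index


def search(index, query):
    """Return IDs of documents that contain every query term (boolean AND)."""
    terms = tokenize(query)
    if not terms:
        return set()
    results = set(index.get(terms[0], set()))
    for term in terms[1:]:
        results &= index.get(term, set())
    return results


# Hypothetical pages for illustration.
docs = {
    "page-1": "How search engines crawl websites",
    "page-2": "Mobile-first indexing explained",
    "page-3": "How crawlers read robots.txt files",
}
index = build_index(docs)
print(sorted(search(index, "how crawl")))  # ['page-1']
```

Note that "crawl" does not match "crawlers" here; handling word variants like that is one of the jobs real indexing pipelines add on top of this basic structure.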

Factors That Affect Indexing:

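- Content Quality – Unique, informative, and well-structured content is more likely to be indexed and to rank well.

- Page Relevance – Search engines assess whether the page content matches what users are searching for.

- Mobile-Friendliness – Google uses mobile-first indexing, so the mobile version of a page is what it primarily indexes.

- Page Speed – Fast-loading pages tend to rank better, and a quickly responding server lets crawlers fetch more pages in the same time.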

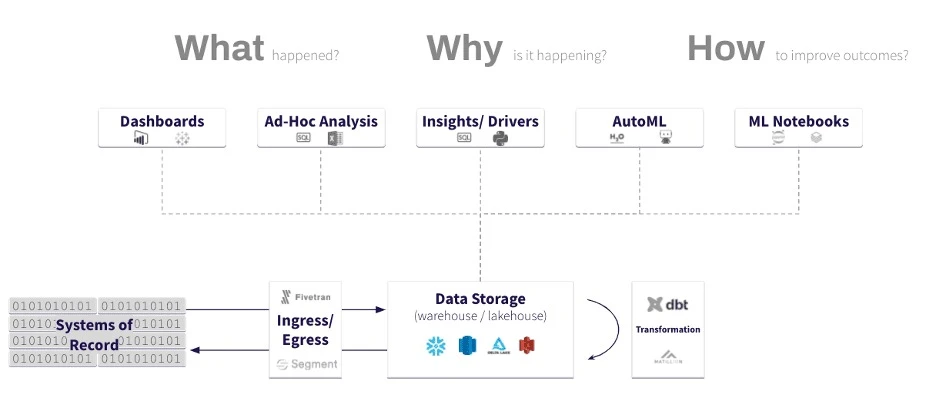

What Technology Do Search Engines Use to 'Crawl' Websites?

Search engines combine their own crawling and ranking technology with signals that website owners control, such as robots.txt files, sitemaps, and structured data.

1. Web Crawlers (Spiders, Bots)

Web crawlers are specialized programs designed to scan websites and retrieve data. Some well-known crawlers include:

- Googlebot – Google’s primary crawler.

- Bingbot – The main crawler for Microsoft Bing.

- DuckDuckBot – DuckDuckGo’s privacy-focused search bot.

- Baiduspider – Used by Baidu, China’s leading search engine.

2. Artificial Intelligence (AI) and Machine Learning

AI and machine learning help search engines interpret and rank crawled content by:

- Understanding content relevance beyond keywords.

- Filtering spam and duplicate content.

- Identifying user intent to enhance search accuracy.
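Search engines do not publish exactly how they detect duplicate content, so the following is only an illustration of one classic technique: split each text into overlapping word sequences ("shingles") and compare the sets with Jaccard similarity. The function names and the 0.8 threshold are assumptions chosen for this example.

```python
def shingles(text, k=3):
    """Return the set of k-word shingles (overlapping word sequences) in text."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)}
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Jaccard similarity: size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def is_near_duplicate(text_a, text_b, threshold=0.8):
    """Treat two texts as near-duplicates if their shingle sets overlap enough."""
    return jaccard(shingles(text_a), shingles(text_b)) >= threshold


original = "search engines use web crawlers to discover and index new pages on the web"
copy = "search engines use web crawlers to discover and index new pages on the internet"
other = "canonical tags tell crawlers which version of a page is preferred"

print(round(jaccard(shingles(original), shingles(copy)), 2))  # 0.85
print(is_near_duplicate(original, copy))   # True
print(is_near_duplicate(original, other))  # False
```

Changing a single word leaves most shingles intact, which is why this approach catches lightly edited copies that an exact-match comparison would miss.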

Google’s RankBrain is an AI-based system that helps analyze and process search queries more effectively.

3. Robots.txt and Meta Tags

Website owners can control how search engines crawl their sites using:

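- robots.txt – A plain-text file at the root of a site that tells search engine bots which URLs they may or may not crawl. It controls crawling, not indexing; to keep a page out of search results, use noindex instead.

- Robots meta tags (noindex, nofollow) – HTML tags that tell crawlers whether a page should be indexed (noindex) and whether its links should be followed (nofollow).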
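Python's standard library can evaluate both controls the way a well-behaved crawler would: `urllib.robotparser` checks robots.txt rules, and `html.parser` can read robots meta tags. The robots.txt content, the "SomeBot" user agent, and the `RobotsMetaParser` class are hypothetical. Note that `urllib.robotparser` implements simple prefix matching and does not support every extension Google does (such as `*` wildcards inside paths).

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for example.com.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart

User-agent: Googlebot
Disallow: /drafts/
"""

robots = RobotFileParser()
robots.parse(ROBOTS_TXT.splitlines())

# A crawler obeys the group that names it; Googlebot matches its own group here,
# so only the /drafts/ rule applies to it, while other bots use the * group.
print(robots.can_fetch("SomeBot", "https://example.com/admin/settings"))    # False
print(robots.can_fetch("SomeBot", "https://example.com/blog/post"))         # True
print(robots.can_fetch("Googlebot", "https://example.com/drafts/new"))      # False
print(robots.can_fetch("Googlebot", "https://example.com/admin/settings"))  # True


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            content = attributes.get("content") or ""
            self.directives.update(
                d.strip().lower() for d in content.split(",") if d.strip()
            )


page = '<head><meta name="robots" content="noindex, nofollow"></head>'
meta = RobotsMetaParser()
meta.feed(page)
print(sorted(meta.directives))  # ['nofollow', 'noindex']
```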

4. XML Sitemaps

A sitemap is an XML file that lists a website’s important pages, helping crawlers:

- Discover new content quickly.

- Understand the structure of a website.

- Identify which pages the site owner considers most important.

Submitting a sitemap through Google Search Console can help Google discover new pages sooner, although it does not guarantee that they will be indexed.
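A sitemap follows the sitemaps.org protocol: a `<urlset>` element in the `http://www.sitemaps.org/schemas/sitemap/0.9` namespace containing one `<url>` entry per page, each with a required `<loc>` and an optional `<lastmod>`. The minimal sketch below builds one with Python's standard `xml.etree.ElementTree`; the URLs, dates, and the `build_sitemap` helper are hypothetical, and large sites would also need to respect the protocol's limit of 50,000 URLs per sitemap file.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last-modified date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = loc          # absolute URL of the page
        ET.SubElement(entry, "lastmod").text = lastmod  # W3C date, e.g. YYYY-MM-DD
    body = ET.tostring(urlset, encoding="unicode")      # escapes &, <, > automatically
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages and dates for illustration.
sitemap = build_sitemap([
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/blog/how-crawling-works", "2024-02-01"),
])
print(sitemap)
```

The resulting file is typically served at a URL such as `/sitemap.xml` and then submitted in Google Search Console or Bing Webmaster Tools.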

5. Structured Data and Canonical Tags

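- Schema Markup – Structured data, usually written in JSON-LD with the schema.org vocabulary, that describes a page's content explicitly and can make it eligible for rich results.

- Canonical Tags – A <link rel="canonical"> tag tells crawlers which URL is the preferred version when several pages have the same or very similar content.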
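Both tags go in a page's HTML `<head>`. The sketch below generates a canonical link and a schema.org `Article` object in JSON-LD; the URL, headline, date, and author are placeholder values, and `structured_data_tags` is a hypothetical helper. Which schema.org properties a search engine actually uses for rich results is defined in that engine's own documentation, so treat this as a starting point rather than a complete template.

```python
import html
import json


def structured_data_tags(canonical_url, headline, date_published, author_name):
    """Build a canonical <link> tag and a schema.org Article JSON-LD <script> tag."""
    article = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "author": {"@type": "Person", "name": author_name},
        "mainEntityOfPage": canonical_url,
    }
    # Escape "<" as a JSON unicode escape so the data can never close <script> early.
    json_ld = json.dumps(article, indent=2).replace("<", "\\u003c")
    canonical = f'<link rel="canonical" href="{html.escape(canonical_url, quote=True)}">'
    script = f'<script type="application/ld+json">\n{json_ld}\n</script>'
    return canonical + "\n" + script


# Placeholder values for illustration.
print(structured_data_tags(
    "https://example.com/blog/how-crawling-works",
    "What Technology Do Search Engines Use to Crawl Websites?",
    "2024-01-15",
    "Jane Doe",
))
```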

6. Mobile-First Indexing

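With mobile-first indexing, Google primarily crawls pages with its smartphone crawler and uses their mobile versions for indexing and ranking. If the mobile version of a page is missing content, that content may not be indexed.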

7. Page Speed and Content Delivery Networks (CDNs)

Search engines favor fast-loading websites, and a quick, stable server also lets crawlers fetch more pages. Common factors that affect speed include:

- Optimized images and scripts.

- CDNs for better content distribution.

- Browser caching to reduce load times.

How Search Engines Decide What to Index

After crawling, search engines assess whether to index a page based on:

- Content originality and relevance.

- User experience and mobile optimization.

- Website authority and backlink profile.

- Proper use of structured data and metadata.

Best Practices to Improve Website Crawling and Indexing

1. Submit a Sitemap to Search Engines

- Use Google Search Console and Bing Webmaster Tools to submit a sitemap.

- Keep your sitemap updated with new content.

2. Optimize Website Speed

- Compress images and enable browser caching.

- Minimize CSS, JavaScript, and unnecessary scripts.

- Use a Content Delivery Network (CDN) to reduce server load.
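Alongside minifying, servers commonly compress text responses such as HTML, CSS, and JavaScript with gzip (or Brotli) and label them with a `Content-Encoding` header. This sketch uses Python's `gzip` module only to show the size reduction on a hypothetical, highly repetitive HTML fragment; in practice compression is usually switched on in the web server or CDN configuration rather than written by hand, and real pages compress less dramatically than this example.

```python
import gzip

# Hypothetical, highly repetitive HTML fragment (real pages compress less well).
html_page = (
    '<div class="product-card"><h2>Product</h2><p>Description</p></div>\n' * 200
).encode("utf-8")

compressed = gzip.compress(html_page)
print(f"original: {len(html_page)} bytes, gzip: {len(compressed)} bytes")

# Compression is lossless: the browser gets back exactly the same bytes.
assert gzip.decompress(compressed) == html_page
```

Images are a different case: formats like JPEG, PNG, and WebP are already compressed, so they are optimized by resizing or re-encoding rather than by gzip.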

3. Ensure Mobile-Friendliness

- Implement responsive web design for different screen sizes.

- Avoid intrusive pop-ups and autoplay media.
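A quick, rough check for responsive design is whether a page declares the standard viewport meta tag with `width=device-width`; without it, mobile browsers lay the page out at a desktop-like width and scale it down. This heuristic and the `has_responsive_viewport` helper are assumptions for illustration: the tag is needed for a responsive layout, but it does not prove that the CSS actually adapts to small screens.

```python
from html.parser import HTMLParser


class ViewportParser(HTMLParser):
    """Records the content of the first <meta name="viewport"> tag, if any."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if (self.viewport is None and tag == "meta"
                and (attributes.get("name") or "").lower() == "viewport"):
            self.viewport = attributes.get("content") or ""


def has_responsive_viewport(html_text):
    """Heuristic: True if the viewport tag sets width=device-width."""
    parser = ViewportParser()
    parser.feed(html_text)
    if parser.viewport is None:
        return False
    settings = {}
    for part in parser.viewport.split(","):
        key, sep, value = part.partition("=")
        if sep:
            settings[key.strip().lower()] = value.strip().lower()
    return settings.get("width") == "device-width"


print(has_responsive_viewport(
    '<meta name="viewport" content="width=device-width, initial-scale=1">'))  # True
print(has_responsive_viewport("<head><title>No viewport</title></head>"))    # False
```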

4. Improve Internal Linking

- Link important pages together to help crawlers navigate efficiently.

- Use descriptive anchor text so crawlers understand what the linked page is about.
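One way to audit internal linking is to treat the site as a graph and run a breadth-first search from the home page. The result shows how many clicks each page is from the home page, and any sitemap URL that is never reached is an orphan page that crawlers can only discover through the sitemap or external links. The site structure, sitemap list, and function names below are hypothetical.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search over internal links: clicks from the home page to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def find_orphans(links, all_pages, home):
    """Pages (for example, from a sitemap) that no chain of internal links reaches."""
    reachable = click_depths(links, home)
    return sorted(set(all_pages) - set(reachable))


# Hypothetical site structure: page -> pages it links to.
site_links = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/crawling", "/"],
    "/blog/crawling": ["/blog/indexing"],
}
sitemap_pages = ["/", "/about", "/blog", "/blog/crawling", "/blog/indexing", "/blog/old-post"]

print(click_depths(site_links, "/"))
print(find_orphans(site_links, sitemap_pages, "/"))  # ['/blog/old-post']
```

Pages with a high click depth, or no inbound links at all, are good candidates for new links from related, well-linked pages.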

5. Publish High-Quality, Unique Content

- Avoid duplicate content issues.

- Provide valuable and well-structured information.

6. Monitor Crawl Errors and Fix Issues

- Use Google Search Console to check for crawl errors.

- Fix broken links and improve page loading speed.
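Google Search Console reports the crawl errors Google encounters, but links can also be spot-checked directly. This sketch assumes a simple policy (one HEAD request per URL; any 4xx or 5xx status, or a network failure, counts as broken), and the function names and sample statuses are hypothetical. Some servers reject HEAD requests, so a fuller checker would retry those with GET.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the final HTTP status for url (HEAD request), or None if unreachable."""
    request = urllib.request.Request(
        url, method="HEAD", headers={"User-Agent": "link-checker-sketch"}
    )
    try:
        # urlopen follows redirects, so this is the status of the final URL.
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:  # 4xx/5xx responses raise HTTPError
        return err.code
    except OSError:  # DNS failures, refused connections, timeouts
        return None


def find_broken_links(urls, get_status=fetch_status):
    """Map each broken URL to its status code (None means no response at all)."""
    broken = {}
    for url in urls:
        status = get_status(url)
        if status is None or status >= 400:
            broken[url] = status
    return broken


# Offline usage with made-up statuses instead of real requests.
sample_statuses = {
    "https://example.com/": 200,
    "https://example.com/old-page": 404,
    "https://example.com/api/report": 500,
}
print(find_broken_links(sample_statuses, get_status=sample_statuses.get))
```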

Conclusion

Search engines combine web crawlers, AI and machine learning, structured data, and indexing algorithms to scan and analyze web content efficiently. Understanding what technology search engines use to 'crawl' websites helps website owners optimize their sites for better rankings and visibility. By following best practices such as submitting sitemaps, improving page speed, and publishing high-quality content, businesses can improve their search engine performance and attract more organic traffic.

Tags:

#SearchEngines #SEO #WebCrawling #Googlebot #DigitalMarketing #AI #Indexing #WebsiteOptimization #GoogleSEO #OnlineVisibility #TechnicalSEO
