When search engine crawlers land on a website, they do not experience it the way people do. They do not respond to layout, typography, or visual hierarchy. They do not care whether a page feels elegant or cluttered. Their job is far simpler and far more mechanical. They follow links.

Every navigation item, contextual reference, and internal pathway acts as a signal that tells crawlers where content lives and how it relates to everything else on the site. Links are not just navigation aids. They are the primary system search engines use to discover pages, evaluate importance, and decide how often something deserves attention.

Most conversations about links in SEO stop at familiar advice. Get quality backlinks. Use descriptive anchor text. Avoid spam. While none of that is wrong, it barely scratches the surface. The way links actually influence visibility is rooted in crawler behavior, probability models, crawl resource allocation, and internal authority flow. Once those mechanics are understood, site structure and content strategy stop being guesswork and start becoming deliberate systems.

How Crawlers Really Move Through a Website

Search engines operate under resource constraints. Even Google does not crawl everything endlessly. Each site is given a crawl budget, meaning a limited number of pages and requests that can be processed within a given time window. Internal links largely determine how that budget is spent.

Server log data shows something that page audits often miss. It reveals which pages are visited, how frequently crawlers return, and which links they actually follow. A page can be technically perfect, fast, and well optimized, yet still be crawled rarely if internal links do not point to it consistently. At the same time, pages that receive frequent internal links often attract repeated crawler visits, signaling importance even if their content is older.
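As a rough illustration of the kind of log review described above, the sketch below counts crawler requests per URL. It assumes access logs in the common "combined" format and identifies the crawler by a user agent substring alone, which can be spoofed; the sample lines, the `crawler_hits` name, and the `agent_token` parameter are made up for this example.

```python
import re
from collections import Counter

# Pulls the request path and user agent out of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

def crawler_hits(log_lines, agent_token="Googlebot"):
    """Count requests per path whose user agent contains agent_token."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match and agent_token in match.group("agent"):
            hits[match.group("path")] += 1
    return hits

# Hypothetical sample data, not taken from a real server.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER = "Mozilla/5.0 (X11; Linux x86_64) Firefox/120.0"
sample_log = [
    f'66.249.66.1 - - [10/Jan/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Jan/2025:14:30:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'203.0.113.7 - - [10/Jan/2025:15:00:00 +0000] "GET /blog/post HTTP/1.1" 200 8000 "-" "{BROWSER}"',
    f'66.249.66.1 - - [11/Jan/2025:09:00:00 +0000] "GET /blog/post HTTP/1.1" 200 8000 "-" "{GOOGLEBOT}"',
]

hits = crawler_hits(sample_log)
for path, count in hits.most_common():
    print(path, count)
```

Comparing these counts against the list of pages you consider important is usually where the gaps show up: a key page with few or no crawler hits is a candidate for stronger internal linking.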

This leads to a practical reality. Internal links are not just about usability. They control discovery and indexation speed. When new content is published, the volume and quality of internal links pointing to it determine how quickly it is found and processed. Pages that sit several clicks away from the homepage and receive minimal internal attention can take weeks to appear in search results, even when nothing blocks access technically.
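Click depth is simple to measure once you have a map of internal links. The sketch below runs a breadth-first search from the homepage over a small, hypothetical link graph; the `site_links` dictionary and the `click_depths` function are illustrative names, and in practice the graph would come from a crawl export.

```python
from collections import deque

def click_depths(links, start="/"):
    """Return the minimum number of clicks from start to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical internal link graph: page -> pages it links to.
site_links = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/blog/post-a"],
    "/blog/post-a": ["/blog/post-b"],
    "/blog/post-b": ["/pricing"],
}

depths = click_depths(site_links)
for page, depth in sorted(depths.items(), key=lambda item: item[1]):
    print(depth, page)
```

Pages that are missing from the result cannot be reached by following links from the homepage at all, which is a stronger warning sign than a high depth number.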

How Search Engines Decide Which Links Matter More

Early PageRank models treated links as relatively equal signals. That assumption no longer holds. Google refined its approach years ago with the reasonable surfer model, described in patent US 8,117,209 B1. The idea is straightforward. Not every link has the same likelihood of being clicked by a real person, so not every link should pass the same ranking weight.

A contextual link embedded in the main body of content carries more value than an identical link placed in a footer. A prominent navigation element is more influential than a small sitewide reference repeated across hundreds of pages. Anchor text that clearly describes the destination sends stronger signals than generic phrases.

This model accounts for placement, visibility, surrounding context, and visual prominence. A large call to action near the top of a page communicates importance in a way that a muted link at the bottom never will, even if both point to the same URL.
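To make the idea concrete, here is a toy version of a position-weighted rank calculation. It is not Google's implementation: the `POSITION_WEIGHT` values are invented for illustration, and the real model draws on many more features than a single position label. The sketch only shows how unequal link weights change the way rank is divided among a page's outbound links.

```python
# Assumed, illustrative weights. Real systems do not publish such values.
POSITION_WEIGHT = {"body": 1.0, "nav": 0.5, "footer": 0.1}

def weighted_rank(links, damping=0.85, iterations=50):
    """links is a list of (source, target, position) tuples."""
    pages = sorted({page for s, t, _ in links for page in (s, t)})
    n = len(pages)
    out_weight = {page: 0.0 for page in pages}
    for source, _, position in links:
        out_weight[source] += POSITION_WEIGHT[position]

    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Rank held by pages without outbound links is spread evenly.
        dangling = sum(rank[p] for p in pages if out_weight[p] == 0)
        new_rank = {p: (1 - damping) / n + damping * dangling / n for p in pages}
        for source, target, position in links:
            share = POSITION_WEIGHT[position] / out_weight[source]
            new_rank[target] += damping * rank[source] * share
        rank = new_rank
    return rank

# Hypothetical site: the homepage links to a guide in the body text
# and to a legal page in the footer. Both link back to the homepage.
example_links = [
    ("/", "/guide", "body"),
    ("/", "/legal", "footer"),
    ("/guide", "/", "body"),
    ("/legal", "/", "body"),
]

ranks = weighted_rank(example_links)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

With equal weights the guide and the legal page would score the same. Under the assumed weights the body link sends most of the homepage's rank to the guide.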

From a site management perspective, this changes how internal architecture should be evaluated. Repeated template links do not accumulate strength in the same way contextual links do. A page with dozens of footer references is not necessarily stronger than one with a smaller number of relevant in-content links.

Effective internal linking is not about volume. It is about intentional placement. Important pages should be supported by prominent, relevant links from content that already carries authority. This is where detailed link analysis becomes useful, not just counting links but understanding where and how they appear.

How Internal Links Shape User Journeys and Outcomes

Internal links influence more than rankings. They shape how people move through a site and whether they reach meaningful outcomes. Every internal link is both a signal to crawlers and a decision point for users.

High traffic pages pass attention as well as authority. If informational content attracts visitors but never links to products, services, or next steps, traffic remains disconnected from business outcomes. When pricing pages or signup paths are buried deep within navigation, friction increases even if rankings remain strong.

User session data often reveals unexpected behavior. Visitors may consistently follow certain paths that are not clearly emphasized in navigation. Pages that receive attention organically but rarely contribute to conversions often lack clear internal guidance.

Some organizations have improved conversion rates without any ranking changes simply by adjusting internal links. They identified pages with steady traffic and weak outcomes, then added contextual pathways to relevant offers. Traffic stayed the same. Results improved.

This is why internal linking should not be owned by SEO alone. It intersects with product strategy, user experience, and conversion design. When those disciplines align, links stop being technical artifacts and start becoming intentional pathways.

Link Quality in the Context of Recent Core Updates

Recent Google core algorithm updates reinforced a pattern that has been developing for years. Low quality content cannot rely on aggressive linking to compensate. At the same time, sites with strong content foundations see their link structures work more effectively.

Manipulative patterns now carry greater risk. Excessive exact match anchors, sudden unnatural link spikes, and backlinks from known low trust sources are more likely to trigger filtering or penalties. Large scale directory submissions and paid link packages no longer provide durable benefits.
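One simple check during an audit is the share of backlinks that use a single exact match phrase as anchor text. The sketch below computes that share from a list of anchors. The sample anchors and the `exact_match_share` function are hypothetical, and no threshold is implied, since what counts as excessive depends on the site and its niche.

```python
from collections import Counter

def exact_match_share(anchors, target_phrase):
    """Fraction of anchors that equal target_phrase, ignoring case and padding."""
    normalized = [anchor.strip().lower() for anchor in anchors]
    if not normalized:
        return 0.0
    counts = Counter(normalized)
    return counts[target_phrase.strip().lower()] / len(normalized)

# Hypothetical anchor texts from a backlink export.
sample_anchors = ["Blue Widgets", "blue widgets ", "Acme Corp", "click here"]

share = exact_match_share(sample_anchors, "blue widgets")
print(f"Exact match share: {share:.0%}")
```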

Modern link audits need to look beyond surface metrics. A backlink from a site that maintained visibility through recent updates carries different weight than one from a site that lost most of its traffic. Link neighborhoods matter. Patterns across referring sites become signals themselves.

Internally, quality matters as well. Links from thin or autogenerated pages do not transfer value the same way links from substantive content do. Pages weakened by quality evaluations pass less influence through their outbound links, regardless of whether those links point internally or externally.

JavaScript and Link Discovery

Many modern sites rely heavily on client side rendering. This can introduce delays in link discovery. Links that appear in raw HTML are seen immediately. Links injected through JavaScript may not be discovered until a rendering phase occurs.

Google has improved its ability to process JavaScript, but timing differences remain. Discovery can be delayed by hours or days, which matters for large or frequently updated sites.

This is easy to test. Viewing the page source reveals whether critical links are present in server delivered HTML. Search Console tools show how Google renders the page and whether those links appear after processing.
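The view source check can also be scripted. The sketch below parses a raw HTML string with Python's standard `html.parser` module and reports which critical links are absent before any JavaScript runs. The sample markup, the `LinkCollector` class, and the list of critical paths are assumptions for illustration; in practice the HTML would be the unrendered server response for a page.

```python
from html.parser import HTMLParser

class LinkCollector(HTMLParser):
    """Collects href values from anchor tags in raw HTML."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)

def missing_from_raw_html(raw_html, critical_paths):
    """Return the critical paths with no matching link in the raw HTML."""
    collector = LinkCollector()
    collector.feed(raw_html)
    found = set(collector.hrefs)
    return [path for path in critical_paths if path not in found]

# Hypothetical server response: one link in the markup, the rest of the
# navigation is built later by a client-side script.
raw_html = """
<html><body>
  <nav><a href="/pricing">Pricing</a></nav>
  <div id="app"></div>
  <script src="/app.js"></script>
</body></html>
"""

missing = missing_from_raw_html(raw_html, ["/pricing", "/features"])
print("Not in raw HTML:", missing)
```

Any path reported as missing depends on rendering to be discovered, so it is a candidate for server-side output.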

When issues arise, solutions exist. Core navigation can be rendered server side while interactive components remain client driven. Frameworks like Next.js support server rendering. Prerendering services can generate static versions for crawlers.

The goal is not to avoid modern development practices. It is to ensure that essential crawl paths do not depend entirely on delayed execution.

Cleaning Up Links to Preserve Crawl Efficiency

Over time, sites accumulate unnecessary complexity. Redirect chains lengthen. Broken links persist. Sitewide references point to pages that no longer matter. This clutter consumes crawl resources and distorts priority signals.

Periodic audits identify opportunities to simplify. Broken paths can be fixed. Chains can be shortened. Low value sitewide links can be reduced. This is not about minimizing link counts. It is about aligning structure with current intent.
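Shortening chains starts with knowing where each redirect finally lands. The sketch below follows a redirect map to its end and flags loops. The `redirects` dictionary is a hypothetical export of source and destination pairs, and `resolve_redirect` is an illustrative helper, not part of any particular tool.

```python
def resolve_redirect(redirects, url, max_hops=10):
    """Follow redirects from url.

    Returns (chain, final), where chain lists every URL visited and
    final is the last destination, or None for a loop or overly long chain.
    """
    chain = [url]
    while url in redirects:
        url = redirects[url]
        if url in chain or len(chain) > max_hops:
            return chain + [url], None
        chain.append(url)
    return chain, url

# Hypothetical redirect map: old URL -> where it currently redirects.
redirects = {
    "/old": "/older",
    "/older": "/new",
    "/a": "/b",
    "/b": "/a",
}

for start in ["/old", "/a", "/new"]:
    chain, final = resolve_redirect(redirects, start)
    hops = len(chain) - 1
    print(start, "->", final, f"({hops} hops)")
```

Internal links that point at the start of a multi-hop chain can then be updated to point directly at the final destination.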

Cleanup projects often show measurable benefits. Crawlers cover more useful pages. Important content receives more consistent attention. Index bloat decreases as outdated signals fade.

Links Are Infrastructure

Links are infrastructure. When they are designed with intent, they support discovery, relevance, and conversion at the same time. When they are ignored, they create friction for crawlers and confusion for users, even on sites with strong content.

The work starts with clarity. You need to see how links are actually distributed across your site, which pages receive authority, and which ones are disconnected. That means looking beyond surface metrics and combining structural analysis with crawl behavior and real user paths.
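A first pass at that visibility can be as simple as counting inbound internal links per page and listing the pages that receive none. The sketch below assumes you already have a list of known URLs, for example from a sitemap, and a crawl-derived link graph. Both data structures and the `inlink_report` function are hypothetical.

```python
def inlink_report(known_pages, links):
    """Count distinct linking pages per known page and list orphans."""
    inlinks = {page: 0 for page in known_pages}
    for source, targets in links.items():
        for target in set(targets):
            # Ignore self links and links to pages outside the known set.
            if target in inlinks and target != source:
                inlinks[target] += 1
    orphans = sorted(page for page, count in inlinks.items() if count == 0)
    return inlinks, orphans

# Hypothetical inputs: sitemap URLs and a page -> outbound links map.
known_pages = ["/", "/blog", "/pricing", "/old-landing"]
site_links = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/", "/pricing", "/pricing"],
    "/pricing": ["/"],
}

inlinks, orphans = inlink_report(known_pages, site_links)
print("Inlinks:", inlinks)
print("Orphans:", orphans)
```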

From there, priorities become clearer. Access issues matter more than fine tuning. Strengthening links to important pages delivers more value than polishing low impact areas. Editorial context and relevance consistently outperform volume driven tactics.

Link strategy also changes as a site grows. New content needs to be woven into existing structures. Old pages need to be reevaluated as priorities shift. Regular audits prevent outdated signals from shaping how search engines interpret the site.

Websites that perform well over time tend to treat links as systems, not shortcuts. They understand how crawlers move, how link weight flows, and how internal structure supports both visibility and decision making. That level of understanding is not limited to large teams or enterprise budgets. It becomes accessible once link data is visible and actionable.

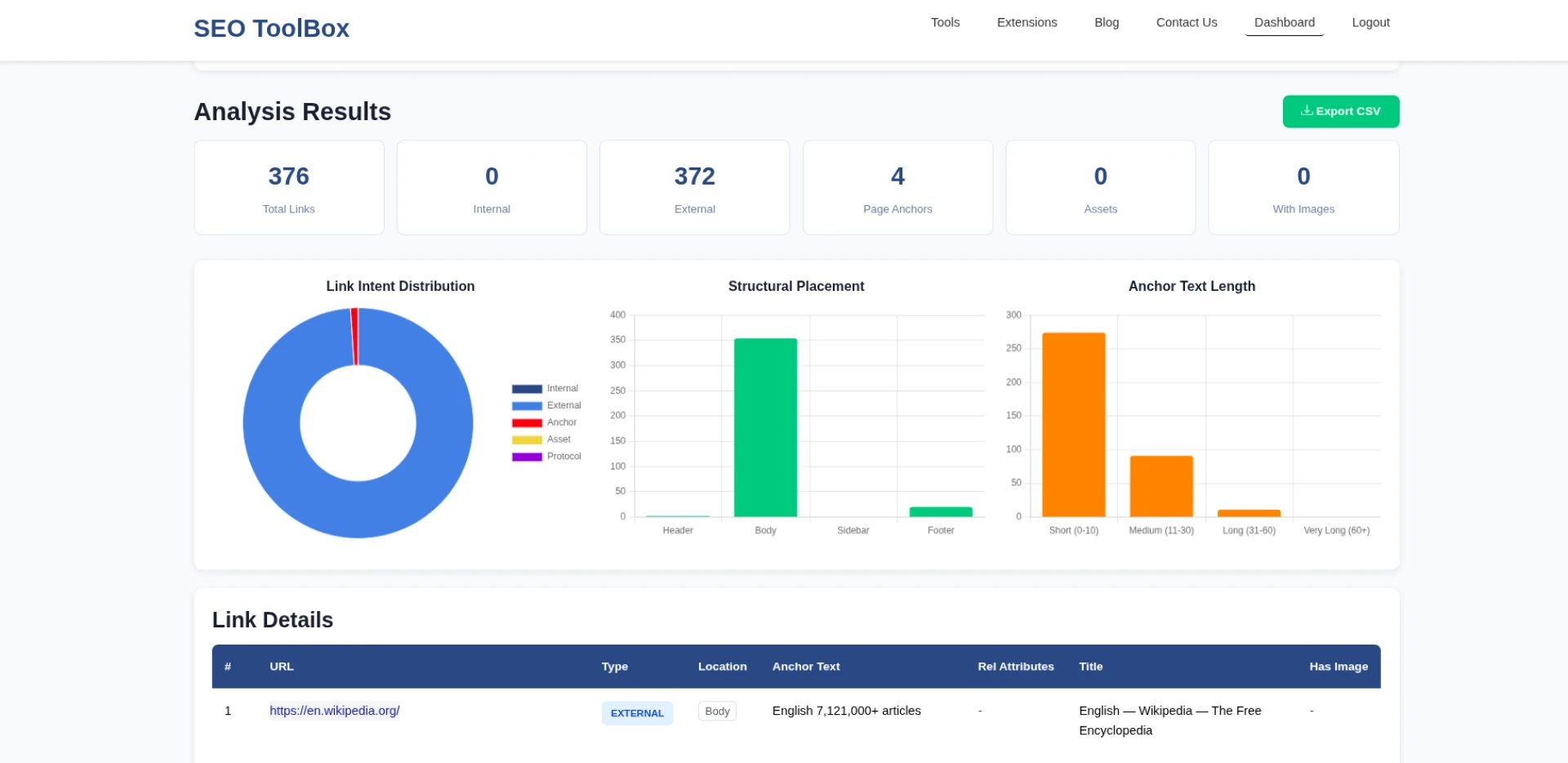

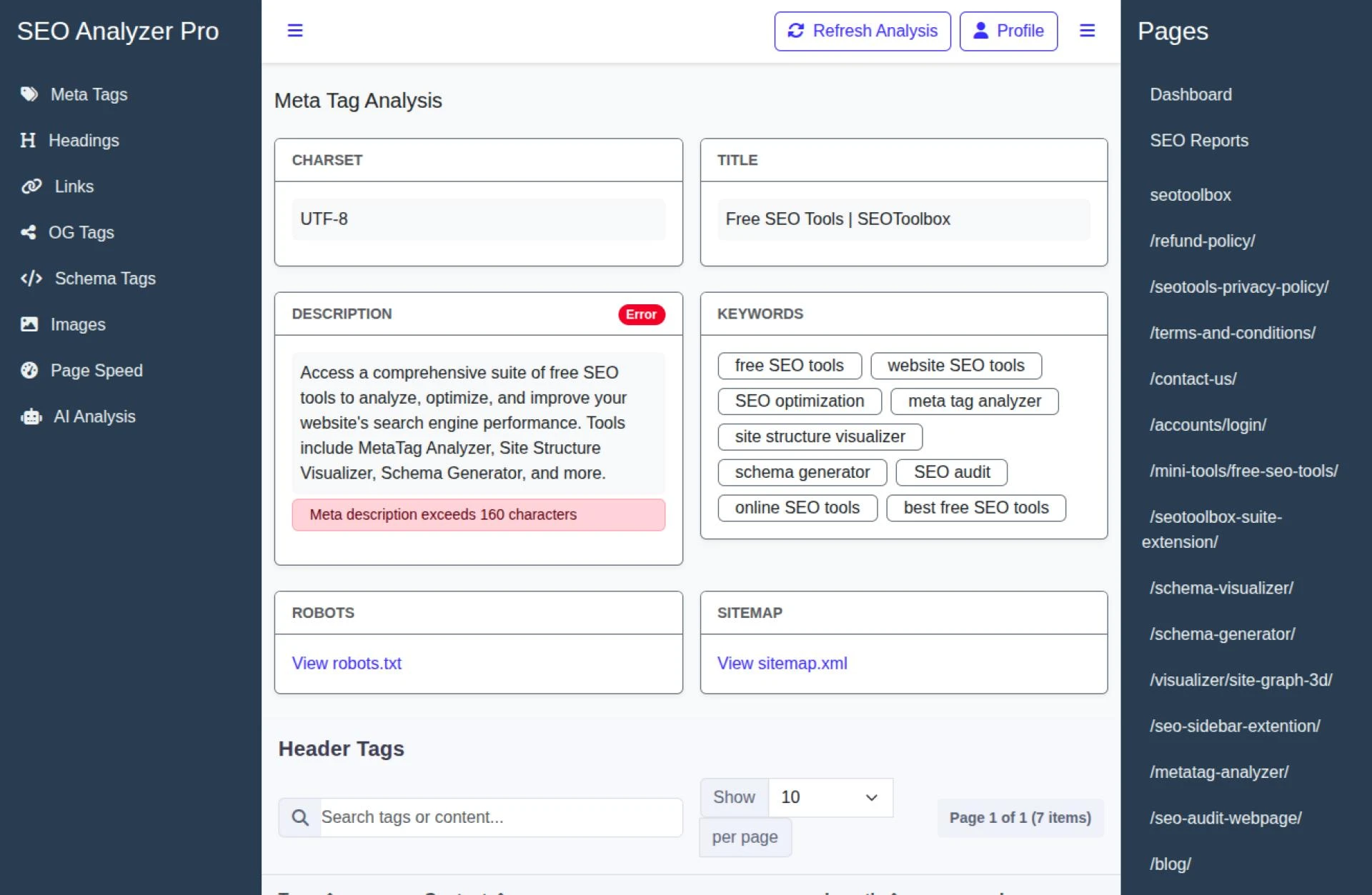

You can use a tool like Website Link Analyzer. It breaks down every link on a page and shows how they are structured, connected, and interpreted. You can inspect internal and external links, anchor text usage, rel attributes, and link intent to uncover structural gaps and optimization opportunities that are easy to miss.

Understanding links at this level changes how SEO decisions are made. Instead of guessing what search engines might value, you start working with the same signals they rely on.
