Introduction

A career in data analytics requires skills in query optimization, distributed data processing, and data visualization. Modern platforms like Databricks combine Apache Spark with a cloud-native architecture. SQL enables precise manipulation of structured data, and Power BI turns processed datasets into interactive dashboards. With this stack, professionals can build a complete data pipeline in which ingestion, transformation, modelling, and visualization all run in a scalable environment.

Understanding Databricks Architecture

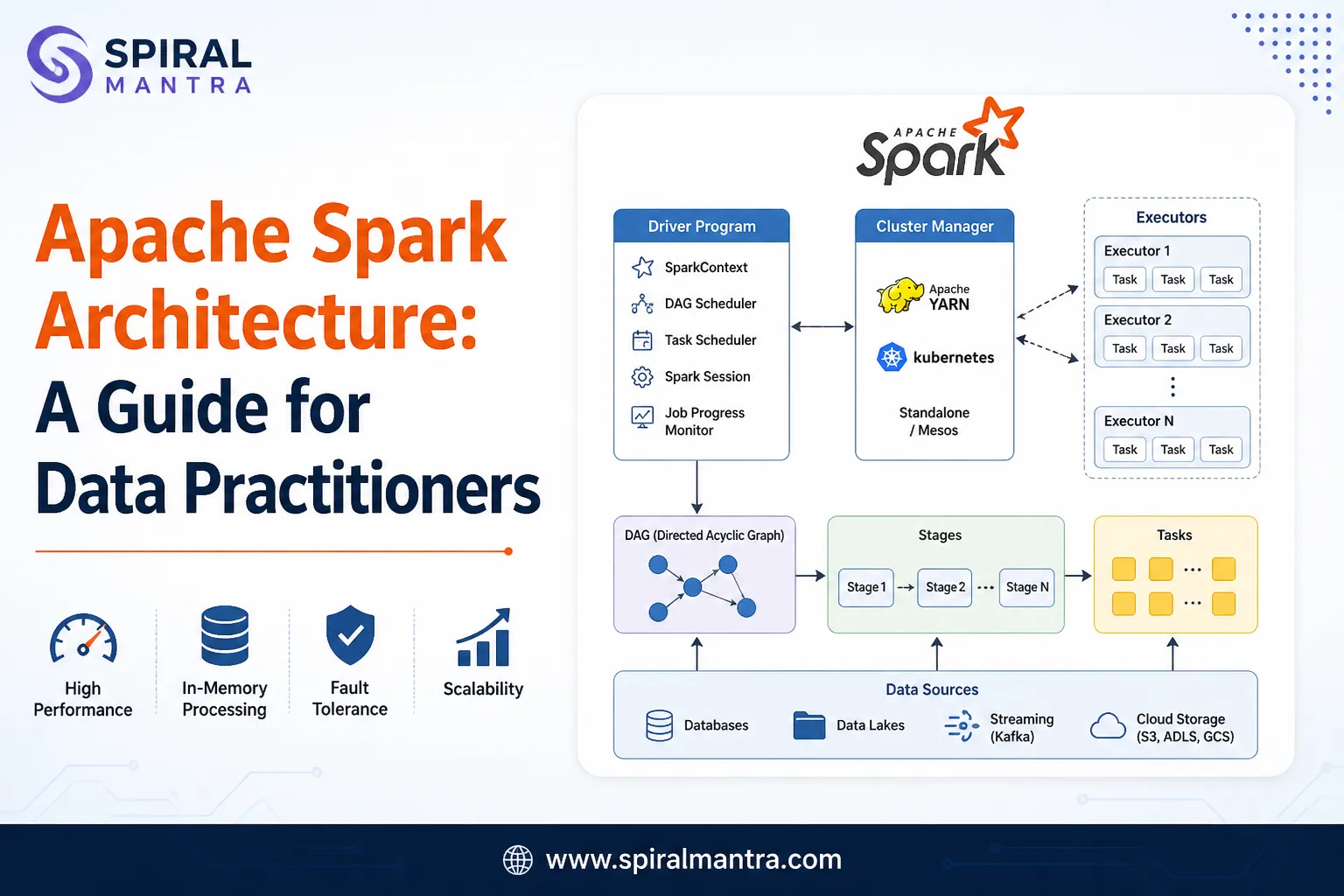

Databricks runs on top of Apache Spark and uses a cluster-based model. Each cluster has one driver node and multiple worker nodes. The driver builds the execution plan and schedules tasks, and the workers process partitions of the data in parallel. This design supports large-scale data processing. One can join a Databricks Course for hands-on learning with state-of-the-art technologies.
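This plain-Python sketch makes the driver/worker split concrete. It is an analogy, not Spark code, and all function names are hypothetical. A thread pool stands in for the worker nodes. Real Spark executors run in separate processes across machines, and CPython threads do not run CPU-bound code truly in parallel. The driver splits the data into partitions, each worker computes a partial result, and the driver combines the partials.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_partitions(rows, num_partitions):
    """Driver step: divide the dataset into roughly equal partitions."""
    return [rows[i::num_partitions] for i in range(num_partitions)]


def partial_sum(partition):
    """Worker step: each worker aggregates only its own partition."""
    return sum(partition), len(partition)


def distributed_average(rows, num_workers=4):
    """Hypothetical driver program computing an average the way a cluster would."""
    partitions = split_into_partitions(rows, num_workers)
    # The pool stands in for worker nodes; tasks are submitted concurrently.
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        partials = list(pool.map(partial_sum, partitions))
    # Driver step: combine the partial results into the final answer.
    total = sum(s for s, _ in partials)
    count = sum(c for _, c in partials)
    return total / count


if __name__ == "__main__":
    print(distributed_average(list(range(1, 101))))  # 50.5
```

An average is combined from (sum, count) pairs rather than from per-partition averages. Partitions can differ in size, so averaging their averages would give a wrong result.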

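Delta Lake is a core feature. It brings ACID transactions to data lakes by recording every change to a table in a transaction log. This keeps data consistent even when several jobs read and write at the same time. The same log also lets Delta Lake enforce the table schema and keep a history of table versions.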
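The model below is hypothetical and much simplified. It shows how a transaction log can provide atomic commits and versioning. Real Delta Lake writes numbered JSON commit files to a `_delta_log` directory next to the data; this toy keeps the entries in a Python list. The class and method names are illustrative only, not the Delta Lake API.

```python
import json


class ToyTransactionLog:
    """Hypothetical, simplified model of a Delta-style transaction log.

    Each commit is one JSON entry listing data files added and removed.
    An entry is fully built before it is appended, so readers see either
    the whole commit or none of it.
    """

    def __init__(self):
        self.commits = []  # position in this list == table version

    def commit(self, add=(), remove=()):
        entry = json.dumps({"add": list(add), "remove": list(remove)})
        self.commits.append(entry)  # a single append publishes the commit
        return len(self.commits) - 1  # the new version number

    def files_at(self, version=None):
        """Replay the log to find the live data files at a given version."""
        if version is None:
            version = len(self.commits) - 1
        live = set()
        for entry in self.commits[: version + 1]:
            action = json.loads(entry)
            live -= set(action["remove"])
            live |= set(action["add"])
        return sorted(live)


if __name__ == "__main__":
    log = ToyTransactionLog()
    log.commit(add=["part-0.parquet"])  # version 0
    log.commit(add=["part-1.parquet"])  # version 1
    # Version 2 rewrites part-0 as part-2 in one atomic commit.
    log.commit(add=["part-2.parquet"], remove=["part-0.parquet"])
    print(log.files_at())   # ['part-1.parquet', 'part-2.parquet']
    print(log.files_at(0))  # ['part-0.parquet'] (reading an old version)
```

Old versions stay readable because a rewrite removes files from the log, not from history. This is the idea behind versioning and time travel.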

Key Databricks Components

- Workspace organizes notebooks and jobs

- Clusters run distributed workloads

- DBFS (Databricks File System) stores files in a distributed file system

- Delta Lake makes data storage more reliable

- Jobs automate batch pipelines

SQL for Analytical Processing

SQL is a core tool for analytics. It queries relational data easily, and with extensions for formats such as JSON it handles semi-structured data as well. Databricks SQL extends standard SQL and runs queries in a distributed fashion, so they scale to large datasets.

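Query optimization is important. Partition large tables so that queries filtering on the partition column read only the relevant partitions. In Delta tables, use Z-ordering. This is a data-layout technique, not an index: it co-locates related values in the same files so that data skipping can ignore files that cannot match a filter. Avoid full table scans by writing selective filters, ideally on partition or Z-ordered columns.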
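Data skipping is what makes Z-ordering pay off. This hypothetical sketch assumes that each data file records the minimum and maximum value of a column, which Delta Lake can collect as file statistics. A query filter then rules out every file whose range cannot match. The sketch does not implement Z-ordering itself, which clusters several columns at once. It only shows why clustering values into files lets a selective filter skip most of them.

```python
from dataclasses import dataclass


@dataclass
class DataFile:
    """Hypothetical file entry with min/max statistics for one column."""
    name: str
    min_date: str  # ISO-8601 strings compare correctly as text
    max_date: str


def files_to_scan(files, start_date):
    """Keep only files that may contain rows with date >= start_date.

    Files whose max_date is earlier are skipped without being read.
    """
    return [f.name for f in files if f.max_date >= start_date]


if __name__ == "__main__":
    # Clustered layout: each file covers a narrow, distinct date range.
    clustered = [
        DataFile("f1", "2022-01-01", "2022-06-30"),
        DataFile("f2", "2022-07-01", "2022-12-31"),
        DataFile("f3", "2023-01-01", "2023-06-30"),
        DataFile("f4", "2023-07-01", "2023-12-31"),
    ]
    # Like WHERE join_date >= '2023-01-01'
    print(files_to_scan(clustered, "2023-01-01"))  # ['f3', 'f4']

    # Unclustered layout: every file spans the whole range, so none can be skipped.
    unclustered = [DataFile(f"u{i}", "2022-01-01", "2023-12-31") for i in range(1, 5)]
    print(files_to_scan(unclustered, "2023-01-01"))  # ['u1', 'u2', 'u3', 'u4']
```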

Important SQL Operations

- Joins combine multiple datasets

- Window functions perform advanced analytics such as rankings and running totals

- Aggregations summarize large datasets

- CTEs (common table expressions) improve query readability

- Subqueries express nested logic
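This small sketch combines three of these operations in one query: a join, a CTE, and a window function. It runs the SQL through Python's built-in `sqlite3` module, so it works without Databricks. The tables and rows are hypothetical, and window functions need SQLite 3.25 or later. The same query shape should also work in Databricks SQL.

```python
import sqlite3


def top_earner_per_department(conn):
    """Find the highest-paid employee in each department."""
    query = """
    WITH ranked AS (                    -- CTE keeps the query readable
        SELECT d.dept_name,
               e.name,
               e.salary,
               RANK() OVER (            -- window function: rank within each dept
                   PARTITION BY e.dept_id
                   ORDER BY e.salary DESC
               ) AS salary_rank
        FROM employees AS e
        JOIN departments AS d ON d.dept_id = e.dept_id   -- join two datasets
    )
    SELECT dept_name, name, salary
    FROM ranked
    WHERE salary_rank = 1
    ORDER BY dept_name;
    """
    return conn.execute(query).fetchall()


def build_sample_db():
    """Hypothetical sample data, made up for illustration."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE departments (dept_id INTEGER PRIMARY KEY, dept_name TEXT);
        CREATE TABLE employees (name TEXT, dept_id INTEGER, salary REAL);
        INSERT INTO departments VALUES (1, 'Engineering'), (2, 'Sales');
        INSERT INTO employees VALUES
            ('Ana', 1, 120000), ('Ben', 1, 105000),
            ('Cy', 2, 90000), ('Dee', 2, 95000);
    """)
    return conn


if __name__ == "__main__":
    print(top_earner_per_department(build_sample_db()))
    # [('Engineering', 'Ana', 120000.0), ('Sales', 'Dee', 95000.0)]
```

`RANK()` gives tied salaries the same rank, so a tie would return several rows for one department. `ROW_NUMBER()` would keep exactly one row per department.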

Sample SQL Syntax

```sql
-- Average salary per department for employees who joined on or after 1 Jan 2023
SELECT department, AVG(salary) AS avg_salary
FROM employees
WHERE join_date >= '2023-01-01'
GROUP BY department
ORDER BY avg_salary DESC;
```
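One way to check the sample query without a cluster is to run it unchanged against an in-memory SQLite database. This is a sketch under assumptions. The rows are made up, and `join_date` is stored as ISO-8601 text, which compares correctly as a string. Databricks SQL would typically use a DATE column instead.

```python
import sqlite3

# The sample query, unchanged.
SAMPLE_QUERY = """
SELECT department, AVG(salary) AS avg_salary
FROM employees
WHERE join_date >= '2023-01-01'
GROUP BY department
ORDER BY avg_salary DESC;
"""


def run_sample_query():
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE employees (name TEXT, department TEXT, salary REAL, join_date TEXT)"
    )
    # Hypothetical rows for illustration.
    conn.executemany(
        "INSERT INTO employees VALUES (?, ?, ?, ?)",
        [
            ("Ana", "Engineering", 120000, "2023-03-15"),
            ("Ben", "Engineering", 100000, "2024-01-10"),
            ("Cy", "Sales", 80000, "2023-06-01"),
            ("Dee", "Sales", 95000, "2022-11-20"),  # excluded by the WHERE filter
        ],
    )
    return conn.execute(SAMPLE_QUERY).fetchall()


if __name__ == "__main__":
    print(run_sample_query())  # [('Engineering', 110000.0), ('Sales', 80000.0)]
```

The WHERE clause filters rows before GROUP BY runs. Dee's salary therefore never enters the Sales average.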

The SQL Online Training program is designed for beginners and offers the best guidance.

Data Modelling with Delta Lake

Delta Lake supports schema evolution, which allows adding new columns without breaking existing pipelines. It stores table metadata in its transaction log. The same log enables time travel queries, which read a table as it existed at an earlier version or timestamp.

| Feature | Benefit |
|---|---|
| ACID Transactions | Data stays consistent, even under concurrent reads and writes |
| Schema Enforcement | Writes of invalid data are rejected |
| Time Travel | Historical data can be queried and analyzed |
| Data Versioning | Rolling back to an earlier version is straightforward |
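This hypothetical in-memory table illustrates the difference between schema enforcement and schema evolution. It is not the Delta Lake API. In Delta Lake, evolution on write is typically enabled with the `mergeSchema` option, which the `merge_schema` flag here loosely imitates. Writes with unexpected columns are rejected unless evolution is requested. Rows written before a column existed read it as `None`.

```python
class ToyTable:
    """Hypothetical table illustrating schema enforcement and evolution."""

    def __init__(self, schema):
        self.schema = dict(schema)  # column name -> expected Python type
        self.rows = []

    def append(self, row, merge_schema=False):
        """Append one row (a dict), enforcing or evolving the schema."""
        extra = set(row) - set(self.schema)
        if extra and not merge_schema:
            # Schema enforcement: reject rows with columns the table lacks.
            raise ValueError(f"unknown columns: {sorted(extra)}")
        # Build the evolved schema first so that a failed write changes nothing.
        new_schema = dict(self.schema)
        for col in sorted(extra):
            # Schema evolution: adopt the new column with the value's type.
            new_schema[col] = type(row[col])
        for col, value in row.items():
            if value is not None and not isinstance(value, new_schema[col]):
                raise TypeError(f"column {col!r} expects {new_schema[col].__name__}")
        self.schema = new_schema
        self.rows.append(dict(row))

    def read(self):
        """Return all rows; columns a row was written without read as None."""
        return [{col: r.get(col) for col in self.schema} for r in self.rows]


if __name__ == "__main__":
    table = ToyTable({"id": int, "amount": float})
    table.append({"id": 1, "amount": 9.5})
    try:
        table.append({"id": 2, "amount": 3.0, "region": "EU"})
    except ValueError as err:
        print("rejected:", err)  # rejected: unknown columns: ['region']
    table.append({"id": 2, "amount": 3.0, "region": "EU"}, merge_schema=True)
    print(table.read())
    # [{'id': 1, 'amount': 9.5, 'region': None}, {'id': 2, 'amount': 3.0, 'region': 'EU'}]
```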

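Delta tables simplify incremental loads for data engineers. The MERGE operation performs upserts: it updates rows that already exist and inserts new ones in a single statement. This makes pipelines more efficient than rewriting the whole table on every load.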
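This minimal sketch shows an upsert for an incremental load. It swaps in SQLite's `INSERT ... ON CONFLICT DO UPDATE` (SQLite 3.24 or later) for Delta Lake's `MERGE INTO`, so it runs with only the Python standard library. The table and function names are hypothetical. The equivalent Delta statement would look roughly like `MERGE INTO customers t USING updates s ON t.id = s.id WHEN MATCHED THEN UPDATE SET * WHEN NOT MATCHED THEN INSERT *`, where `updates` is an assumed staging table.

```python
import sqlite3


def upsert_customers(conn, batch):
    """Apply an incremental batch: update existing ids, insert new ones.

    SQLite's INSERT ... ON CONFLICT stands in for Delta Lake's MERGE INTO.
    """
    conn.executemany(
        """
        INSERT INTO customers (id, name, city) VALUES (?, ?, ?)
        ON CONFLICT(id) DO UPDATE SET
            name = excluded.name,
            city = excluded.city
        """,
        batch,
    )
    conn.commit()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, city TEXT)")
    upsert_customers(conn, [(1, "Ana", "Lisbon"), (2, "Ben", "Oslo")])  # initial load
    upsert_customers(conn, [(2, "Ben", "Bergen"), (3, "Cy", "Rome")])   # incremental load
    print(conn.execute("SELECT * FROM customers ORDER BY id").fetchall())
    # [(1, 'Ana', 'Lisbon'), (2, 'Ben', 'Bergen'), (3, 'Cy', 'Rome')]
```

Only the rows in the incoming batch are touched. Row 1 is left unchanged, row 2 is updated, and row 3 is inserted.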

Power BI for Data Visualization

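Power BI connects directly to Databricks and supports both DirectQuery and Import modes. DirectQuery sends queries to Databricks when a report is viewed, so visuals reflect current data. Import mode loads a copy of the data into the Power BI model, which makes reports faster for datasets that change infrequently; refreshes keep that copy up to date. DAX (Data Analysis Expressions) is the formula language for calculations in Power BI. It evaluates expressions in row context and filter context, which lets measures aggregate dynamically as users filter and slice reports. Aspiring professionals can join a Power BI Course for guidance and hands-on learning aligned with the latest industry trends.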
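This sketch is a rough analogy only: it is Python, not DAX. It separates the two contexts. One function is a per-row calculation, like a calculated column. The other is an aggregate that respects whatever filters are active, like a measure. The data and names are hypothetical. In DAX the measure might be written as `Total Sales = SUMX(Sales, Sales[Price] * Sales[Qty])`, with the table and column names again assumed.

```python
# Hypothetical sales rows, made up for illustration.
SALES = [
    {"region": "North", "product": "A", "price": 10.0, "qty": 3},
    {"region": "North", "product": "B", "price": 5.0, "qty": 4},
    {"region": "South", "product": "A", "price": 10.0, "qty": 1},
]


def line_total(row):
    """Row context: like a calculated column, evaluated once per row."""
    return row["price"] * row["qty"]


def total_sales(rows, **filters):
    """Filter context: like a measure, aggregate only the rows that match
    the filters currently applied by a visual or slicer."""
    selected = [r for r in rows if all(r[k] == v for k, v in filters.items())]
    return sum(line_total(r) for r in selected)


if __name__ == "__main__":
    print(total_sales(SALES))                               # 60.0 (no filters)
    print(total_sales(SALES, region="North"))               # 50.0
    print(total_sales(SALES, region="North", product="A"))  # 30.0
```

The same measure returns a different value in each cell of a visual because each cell supplies a different filter context.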

Power BI Features

- Interactive dashboards support better decision-making

- Data modeling defines relationships between tables from different sources

- DAX simplifies complex calculations

- Visual filters refine data views

- Scheduled refresh automates data updates

Integration Workflow

Data analysts combine tools such as Databricks, SQL, and Power BI to build pipelines. Data is ingested from cloud storage or APIs. Databricks processes the raw data with Spark, SQL transforms it into structured tables, and Power BI visualizes the resulting insights.

| Stage | Tool Used | Function |
|---|---|---|
| Ingestion | Databricks | Raw data loading |
| Processing | Spark | Transforming large datasets |
| Querying | SQL | Structured data analysis |
| Visualization | Power BI | Generating dashboards |
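This small, self-contained sketch traces the four stages end to end. Everything in it is a stand-in. CSV text plays the role of a raw extract, and a plain Python function replaces the Spark processing step. SQLite replaces Databricks SQL, and a text bar chart replaces a Power BI dashboard. The data and function names are hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical raw extract, as it might arrive from cloud storage or an API.
RAW_CSV = """order_id,region,amount
1,North,120.50
2,South,80.00
3,North,
4,South,45.25
"""


def ingest(text):
    """Ingestion: parse raw CSV text into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def process(rows):
    """Processing: drop incomplete records and cast types (Spark's role)."""
    clean = []
    for r in rows:
        if r["amount"]:  # skip rows with a missing amount
            clean.append((int(r["order_id"]), r["region"], float(r["amount"])))
    return clean


def query(clean_rows):
    """Querying: load structured rows and summarize them with SQL."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (order_id INTEGER, region TEXT, amount REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", clean_rows)
    return conn.execute(
        "SELECT region, SUM(amount) AS total FROM orders GROUP BY region ORDER BY region"
    ).fetchall()


def visualize(summary):
    """Visualization stand-in: a text bar chart instead of a dashboard."""
    for region, total in summary:
        print(f"{region:<6} {'#' * int(total // 10)} {total:.2f}")


if __name__ == "__main__":
    visualize(query(process(ingest(RAW_CSV))))
```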

Performance Optimization Techniques

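Efficient pipelines require tuning. Filter on partition columns so that Spark can prune partitions it does not need to read. Cache data that several queries reuse. Use broadcast joins when one side of a join is small: Spark copies the small table to every worker, so the large table does not have to be shuffled. Use Delta caching for faster repeated reads.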
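This minimal pure-Python sketch shows a broadcast hash join. It assumes the small table has unique keys, as a dimension table usually does. The function and data are hypothetical. In PySpark the equivalent hint is `pyspark.sql.functions.broadcast`. Spark also broadcasts automatically when a table is below the `spark.sql.autoBroadcastJoinThreshold` size.

```python
def broadcast_hash_join(large_partitions, small_table, key):
    """Inner-join each partition of a large dataset with a small lookup table.

    The small side becomes a hash map once and is shared ("broadcast") with
    every partition, so the large side is never reshuffled by key.
    Assumes keys in small_table are unique.
    """
    lookup = {row[key]: row for row in small_table}  # built once, sent to all workers
    joined = []
    for partition in large_partitions:  # Spark would process these in parallel
        for row in partition:
            match = lookup.get(row[key])
            if match is not None:  # inner join: drop rows without a match
                joined.append({**row, **match})
    return joined


if __name__ == "__main__":
    orders = [  # the large fact table, already split into partitions
        [{"order_id": 1, "country": "PT"}, {"order_id": 2, "country": "NO"}],
        [{"order_id": 3, "country": "PT"}, {"order_id": 4, "country": "XX"}],
    ]
    countries = [{"country": "PT", "name": "Portugal"}, {"country": "NO", "name": "Norway"}]
    for row in broadcast_hash_join(orders, countries, "country"):
        print(row)
```

Each partition is joined locally against its own copy of the lookup table. This is why the approach only pays off when the broadcast side fits comfortably in every worker's memory.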

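Avoid data skew, where a few keys hold most of the rows and overload individual tasks. Balance partitions evenly, for example by salting hot keys. Monitor jobs, stages, and tasks in the Spark UI, and scale clusters based on workload.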
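Salting is a common way to rebalance a skewed key. The hot key is split into several sub-keys, each sub-key is aggregated, and the partial results are combined. This hypothetical pure-Python sketch illustrates that two-stage aggregation. It measures skew as the size of the largest key group, since a key-based shuffle sends every row of one key to the same task.

```python
from collections import Counter, defaultdict


def salt_keys(rows, num_salts):
    """Append a salt suffix so rows for one hot key split into smaller groups.

    The salt cycles with the row index here; random salts are also common.
    """
    return [(f"{key}#{i % num_salts}", value) for i, (key, value) in enumerate(rows)]


def sum_by_key(rows):
    """Group-and-sum, as one shuffle stage would do per key."""
    totals = defaultdict(int)
    for key, value in rows:
        totals[key] += value
    return dict(totals)


def salted_sum(rows, num_salts):
    """Stage 1: partial sums per salted key. Stage 2: strip the salt and combine."""
    partial = sum_by_key(salt_keys(rows, num_salts))
    return sum_by_key((key.rsplit("#", 1)[0], total) for key, total in partial.items())


def largest_group(keys):
    """Rows in the biggest key group: a rough proxy for the slowest task."""
    return max(Counter(keys).values())


if __name__ == "__main__":
    rows = [("hot", 1)] * 1000 + [("a", 1), ("b", 1), ("c", 1)] * 10
    print(largest_group(k for k, _ in rows))                # 1000
    print(largest_group(k for k, _ in salt_keys(rows, 8)))  # 125
    print(salted_sum(rows, 8) == sum_by_key(rows))          # True
```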

Conclusion

A data analyst must master distributed processing, query design, and visualization. Databricks provides scalable computation, SQL enables precise querying, and Power BI turns data into insights. Together, these technologies let professionals build a strong analytics pipeline that supports both real-time and batch workloads and improves decision-making with accurate data. Consistent practice with these tools builds strong technical skills for modern data analytics roles.
