Data pipelines underpin most analytics and AI initiatives. For many businesses, the hard part is no longer collecting data but trusting it. From executive dashboards to machine learning models, every insight depends on how accurately information flows through systems.

Modern enterprises rely on complex architectures that connect CRMs, cloud applications, APIs, IoT devices, and financial systems. These interconnected data pipelines move information into data lakes and warehouses where it powers reporting and forecasting. But movement alone isn’t enough. Reliability is what truly matters.

That’s where FirstEigen brings a smarter approach.

The Real Challenge Behind Data Movement

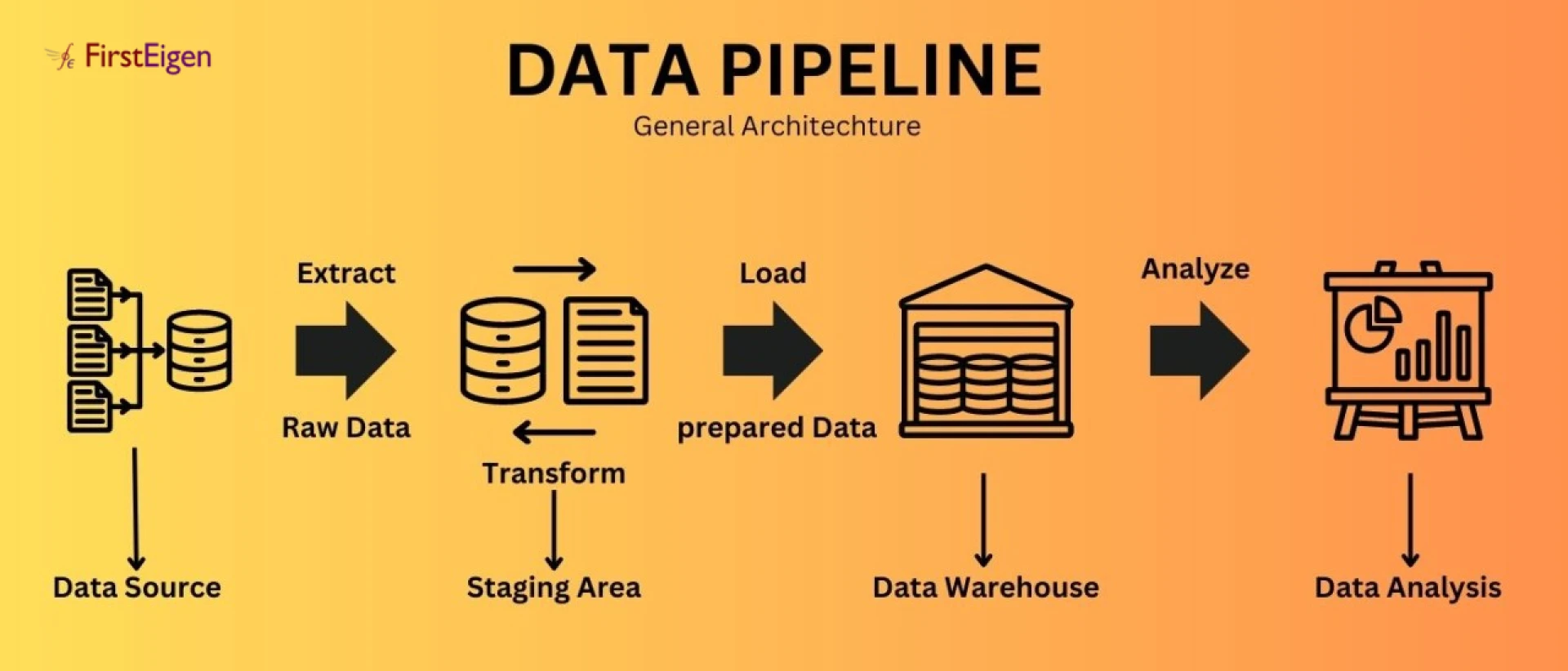

On the surface, building pipelines seems straightforward: extract, transform, load. In reality, things break quietly:

- Schema changes go unnoticed

- Null values creep into reports

- Duplicate records inflate KPIs

- Delays impact time-sensitive decisions

Without the right data pipeline tools, these issues often surface only after business users question a dashboard.
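To make the four failure modes above concrete, here is a minimal sketch of what batch-level checks for them might look like. The `orders` feed, its column names, and the one-hour freshness limit are all hypothetical, chosen only for illustration. This is not how any particular product implements these checks.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical expected schema for an illustrative "orders" feed.
EXPECTED_COLUMNS = {"order_id", "amount", "updated_at"}


def check_batch(rows, now, max_age=timedelta(hours=1)):
    """Return a list of human-readable issues found in one batch of rows."""
    issues = []

    # Schema change: report the first row whose columns differ from the expected set.
    for row in rows:
        if set(row) != EXPECTED_COLUMNS:
            issues.append(f"schema drift: got columns {sorted(row)}")
            break

    # Null values in any expected column (a missing column also counts here).
    null_count = sum(
        1 for row in rows for col in EXPECTED_COLUMNS if row.get(col) is None
    )
    if null_count:
        issues.append(f"nulls: {null_count} missing values")

    # Duplicate records, judged by the (assumed) business key order_id.
    ids = [row["order_id"] for row in rows if row.get("order_id") is not None]
    duplicate_count = len(ids) - len(set(ids))
    if duplicate_count:
        issues.append(f"duplicates: {duplicate_count} repeated order_id values")

    # Delay: the newest record is older than the allowed freshness window.
    timestamps = [row["updated_at"] for row in rows if row.get("updated_at")]
    if not timestamps or now - max(timestamps) > max_age:
        issues.append("stale: newest record is older than the freshness limit")

    return issues
```

Calling `check_batch(rows, datetime.now(timezone.utc))` on each incoming batch returns an empty list when nothing is wrong, so the result can be logged or used to block a load before a dashboard ever sees the data.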

Why Traditional Monitoring Falls Short

Many teams rely on job-level monitoring. It tells you whether a workflow ran, not whether the output is correct.

Modern data pipeline tools must go beyond status checks. They need to validate accuracy, completeness, consistency, and business logic. Otherwise, organizations operate on flawed assumptions.
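The gap between "the job ran" and "the output is right" can be illustrated with a simple reconciliation between a source and what was actually loaded. The sketch below assumes rows are plain dictionaries with hypothetical `id` and `amount` fields. It covers only completeness and a basic consistency check, not full business-logic validation.

```python
def reconcile(source_rows, loaded_rows, key="id", amount="amount"):
    """Compare loaded rows against the source and report discrepancies."""
    source_keys = {row[key] for row in source_rows}
    loaded_keys = {row[key] for row in loaded_rows}

    source_total = sum(row[amount] for row in source_rows)
    loaded_total = sum(row[amount] for row in loaded_rows)

    return {
        # Completeness: records present in the source but absent after loading.
        "missing_keys": sorted(source_keys - loaded_keys),
        # Records that appeared in the target without a matching source record.
        "unexpected_keys": sorted(loaded_keys - source_keys),
        # Consistency: the totals should agree if nothing was lost or altered.
        "total_difference": loaded_total - source_total,
    }
```

A job that silently drops one record still exits successfully, yet `reconcile` would report the missing key and a non-zero `total_difference`.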

The FirstEigen Perspective

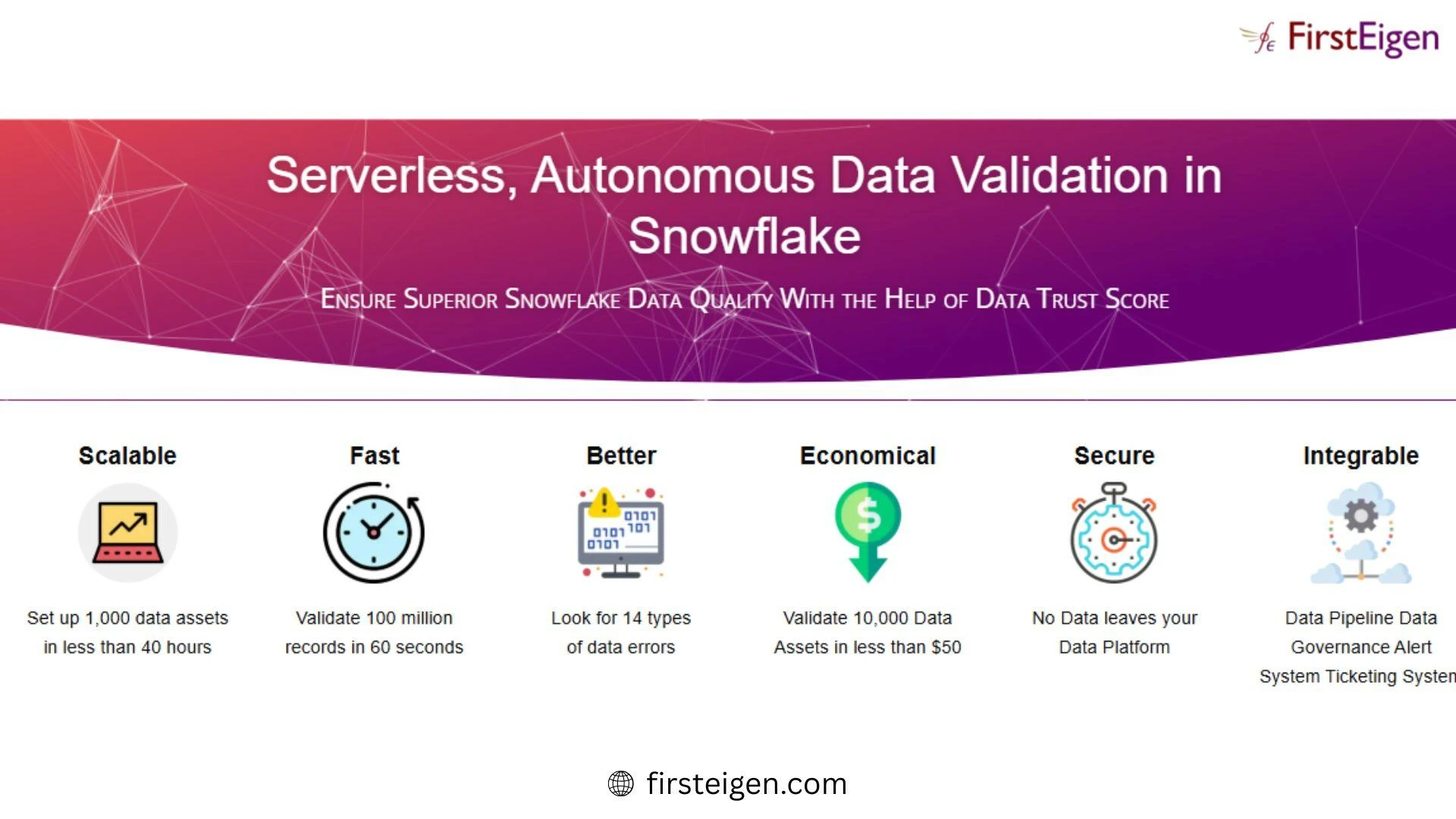

FirstEigen strengthens enterprise data pipelines by embedding intelligent validation directly into the flow of data. Its platform, DataBuck, uses context-aware AI and machine learning to understand how data behaves over time.

Instead of static, manually written rules, it continuously learns patterns and detects anomalies automatically. This approach helps teams:

- Catch subtle data quality issues early

- Reduce alert fatigue

- Maintain trust in analytics systems

- Support AI initiatives with reliable inputs

By enhancing modern data pipeline tools with intelligent validation, FirstEigen ensures that accuracy travels alongside the data itself.
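To show the general idea of learning a baseline from history rather than hard-coding a threshold, here is a deliberately simple sketch that flags a metric (for example, a daily row count) when it strays too far from its recent mean. It uses a plain z-score as a stand-in technique. The article does not describe DataBuck's actual models, and this is not a representation of them. The function name and the default threshold of three standard deviations are assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history, value, threshold=3.0):
    """Return True if value deviates strongly from the pattern in history.

    history   -- past observations of the same metric (e.g. daily row counts)
    value     -- the new observation to test
    threshold -- how many standard deviations count as anomalous (assumed default)
    """
    if len(history) < 2:
        return False  # not enough history to estimate spread

    baseline = mean(history)
    spread = stdev(history)

    if spread == 0:
        # History was perfectly constant, so any change is a deviation.
        return value != baseline

    return abs(value - baseline) / spread > threshold
```

Because the baseline is recomputed from whatever history is passed in, the same function adapts as volumes grow, which is the property a static rule such as "row count must exceed 900" lacks.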

Building for Scale and Confidence

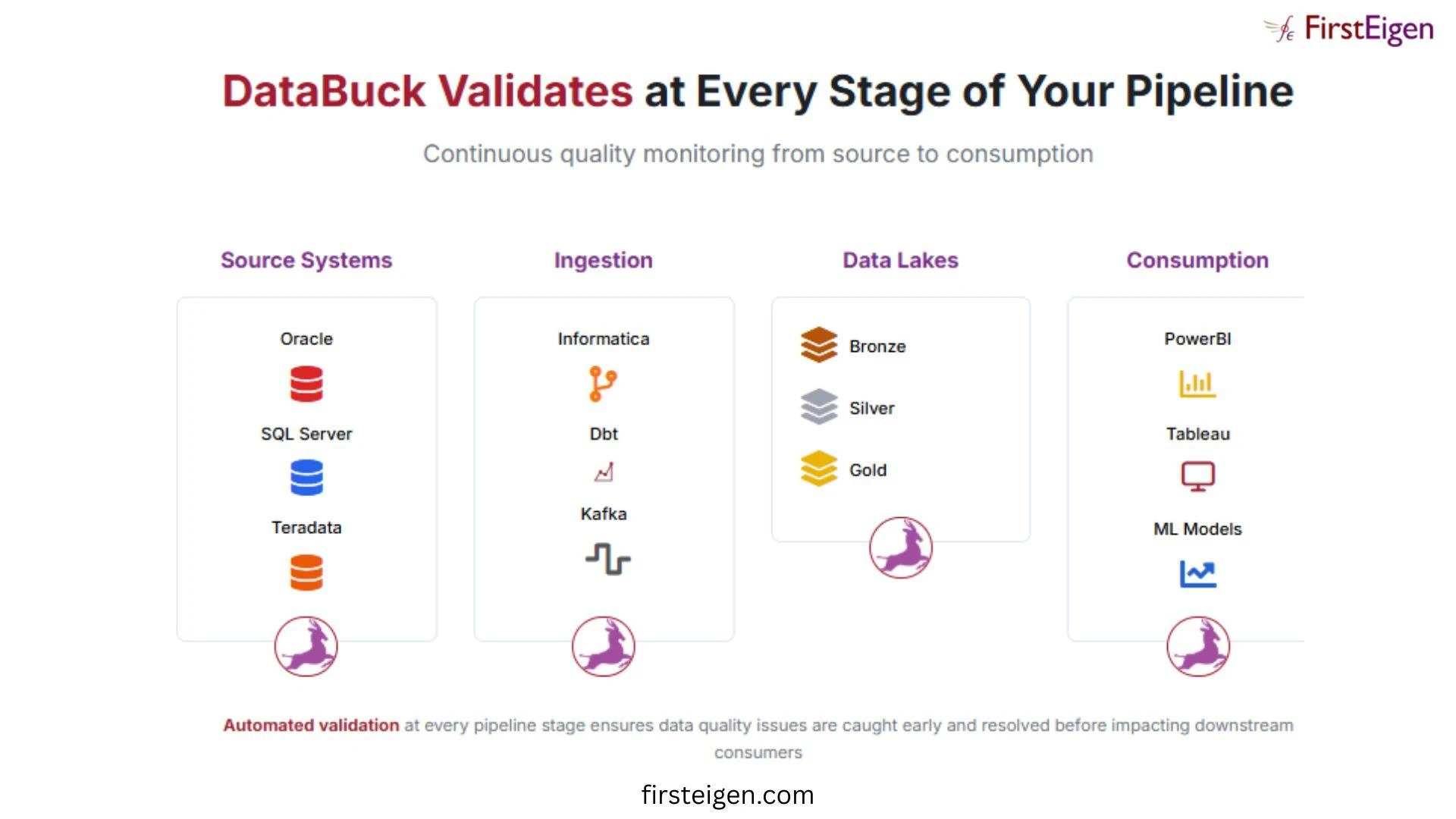

As organizations scale, so does architectural complexity. More sources. More transformations. More stakeholders relying on insights.

Strong data pipelines are not just about speed; they are about resilience. When data flows through lakes, warehouses, and transformation layers, validation must exist at every stage.
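One way to picture validation at every stage is a pipeline runner that refuses to pass data onward until each stage's output has been checked. The sketch below is a minimal illustration. The stage names, the `ValidationError` class, and the example checks are all hypothetical.

```python
class ValidationError(Exception):
    """Raised when a stage's output fails its validation check."""


def run_pipeline(data, stages):
    """Run each (name, transform, validate) stage, checking output before moving on."""
    for name, transform, validate in stages:
        data = transform(data)
        problems = validate(data)  # each validator returns a list of problem strings
        if problems:
            raise ValidationError(f"{name}: {'; '.join(problems)}")
    return data


def not_empty(rows):
    return [] if rows else ["no rows received"]


def amounts_non_negative(rows):
    bad = [row for row in rows if row["amount"] < 0]
    return [f"{len(bad)} negative amounts"] if bad else []


# Hypothetical two-stage pipeline: ingest raw rows, then cast amounts to numbers.
STAGES = [
    ("ingest", lambda rows: list(rows), not_empty),
    (
        "transform",
        lambda rows: [{**row, "amount": float(row["amount"])} for row in rows],
        amounts_non_negative,
    ),
]
```

With this shape, `run_pipeline(raw_rows, STAGES)` either returns fully validated data or stops at the first failing stage and names it, so a bad record is caught where it was introduced rather than downstream.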

With the right data pipeline tools, businesses can:

- Monitor data health in real time

- Detect structural and behavioral anomalies

- Ensure compliance and governance

- Enable faster, more confident decision-making

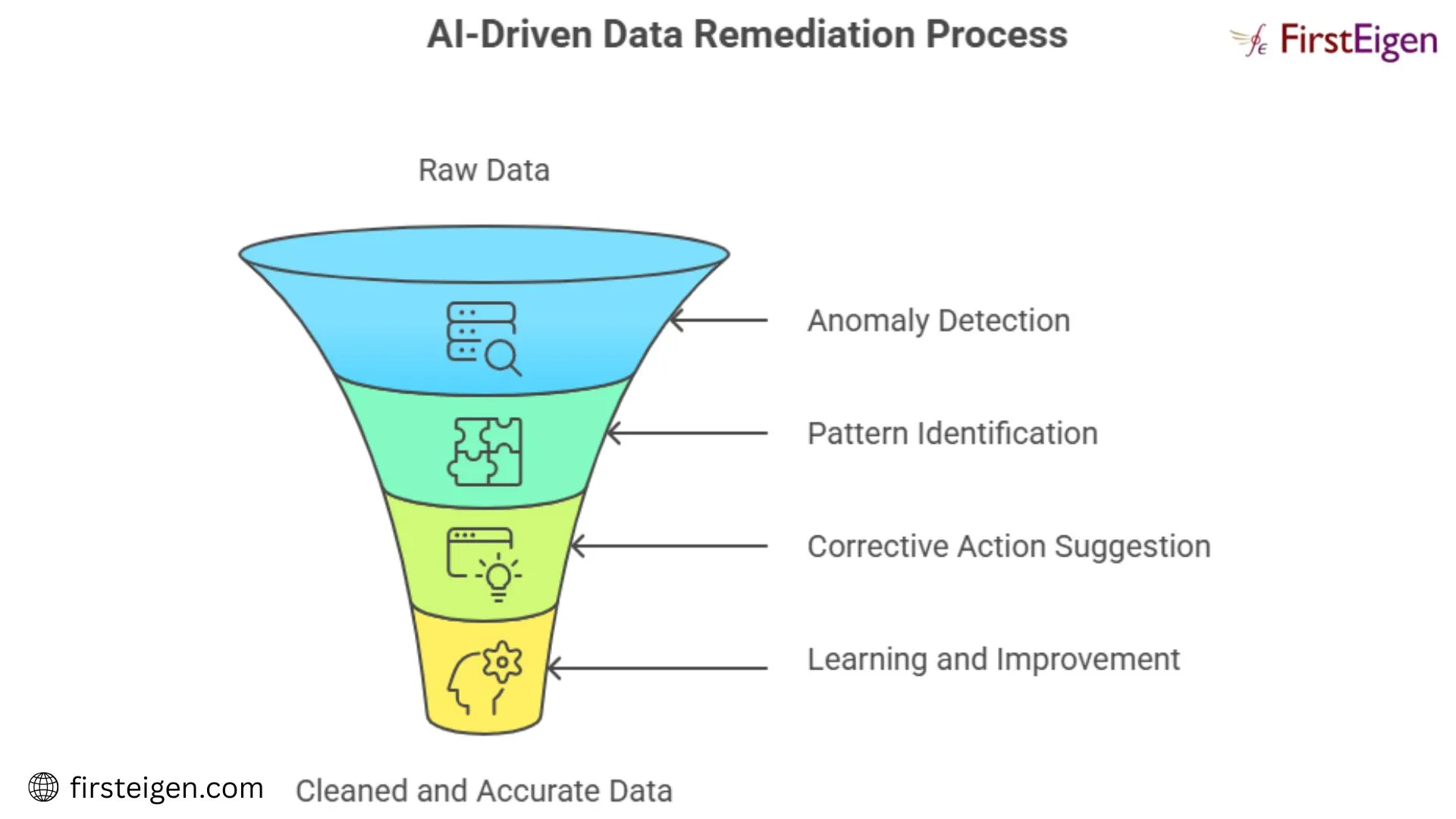

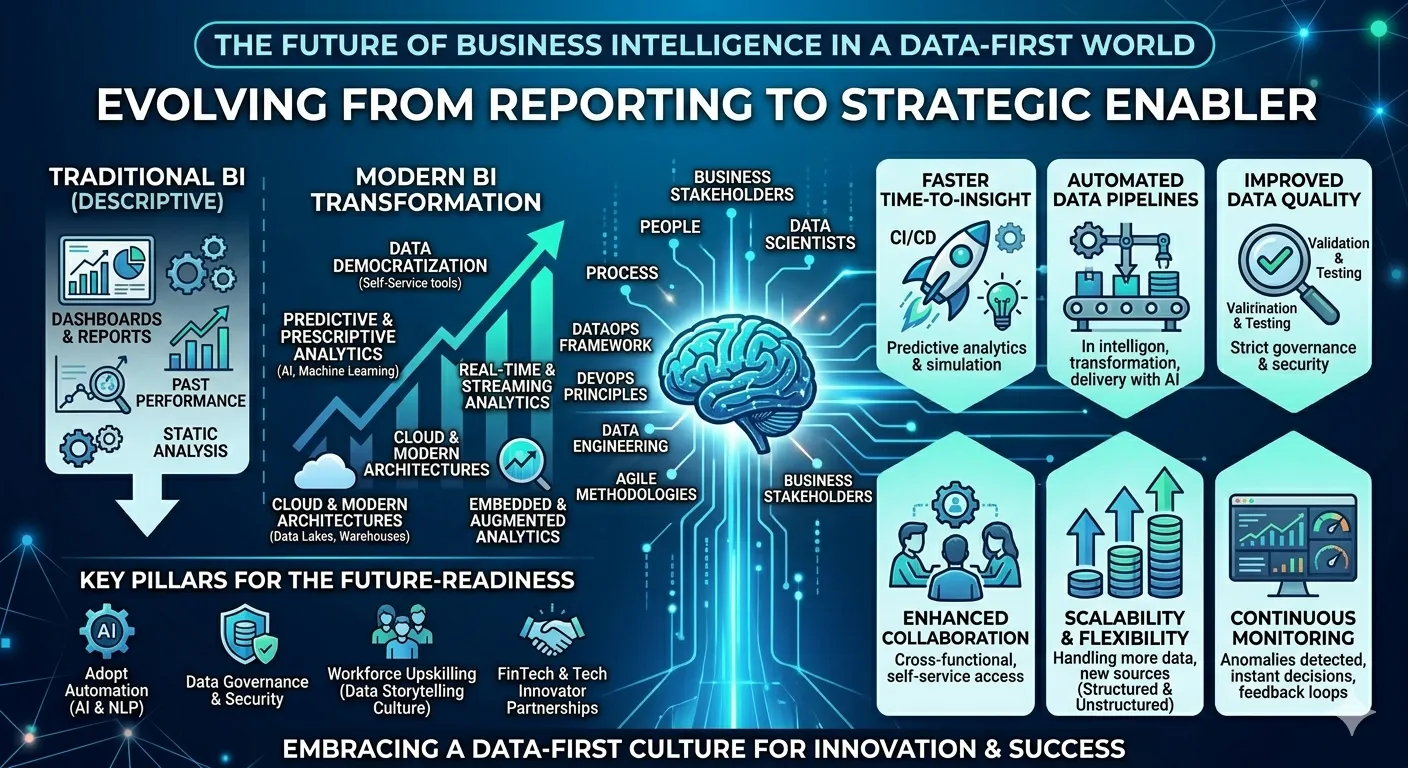

The Shift from Automation to Intelligence

Automation made modern analytics possible. Intelligence makes it dependable.

Companies investing in advanced data pipelines understand that observability and validation are no longer optional. They are foundational. Without them, AI models underperform, dashboards mislead, and strategy suffers.

FirstEigen’s approach is simple: strengthen your architecture by strengthening trust. When data pipeline tools combine automation with AI-driven validation, organizations can move faster without sacrificing accuracy.

Because in the end, reliable data isn’t just a technical goal. It’s a business advantage.
