In today’s data-driven world, businesses rely heavily on analytics, AI models, and reporting systems to make critical decisions. But there’s one problem that continues to derail even the most advanced strategies—poor data quality. This is where data remediation becomes essential.

Simply put, data remediation is the process of identifying, correcting, and preventing errors in datasets to ensure accuracy, consistency, and reliability. Without it, even the best data quality software can fall short, leading to flawed insights and costly mistakes.

If your organization is struggling with inconsistent data, duplicate records, or broken pipelines, understanding how data remediation works—and how to implement it effectively—can make all the difference.

What Is Data Remediation and Why Does It Matter?

Data remediation goes beyond simple data cleansing. It’s a structured, continuous approach to fixing data issues at their root and keeping them from recurring.

Modern enterprises deal with massive volumes of data flowing from multiple sources—CRMs, ERPs, cloud platforms, APIs, and more. As data complexity increases, so does the likelihood of errors such as:

- Missing or incomplete values

- Duplicate records

- Incorrect formats

- Data drift that degrades machine learning models

- Inconsistent business rules

Without proper remediation, these issues can silently impact reporting accuracy, compliance, and AI performance.

To explore how automated solutions tackle these challenges, you can check out this detailed guide on data remediation solutions.

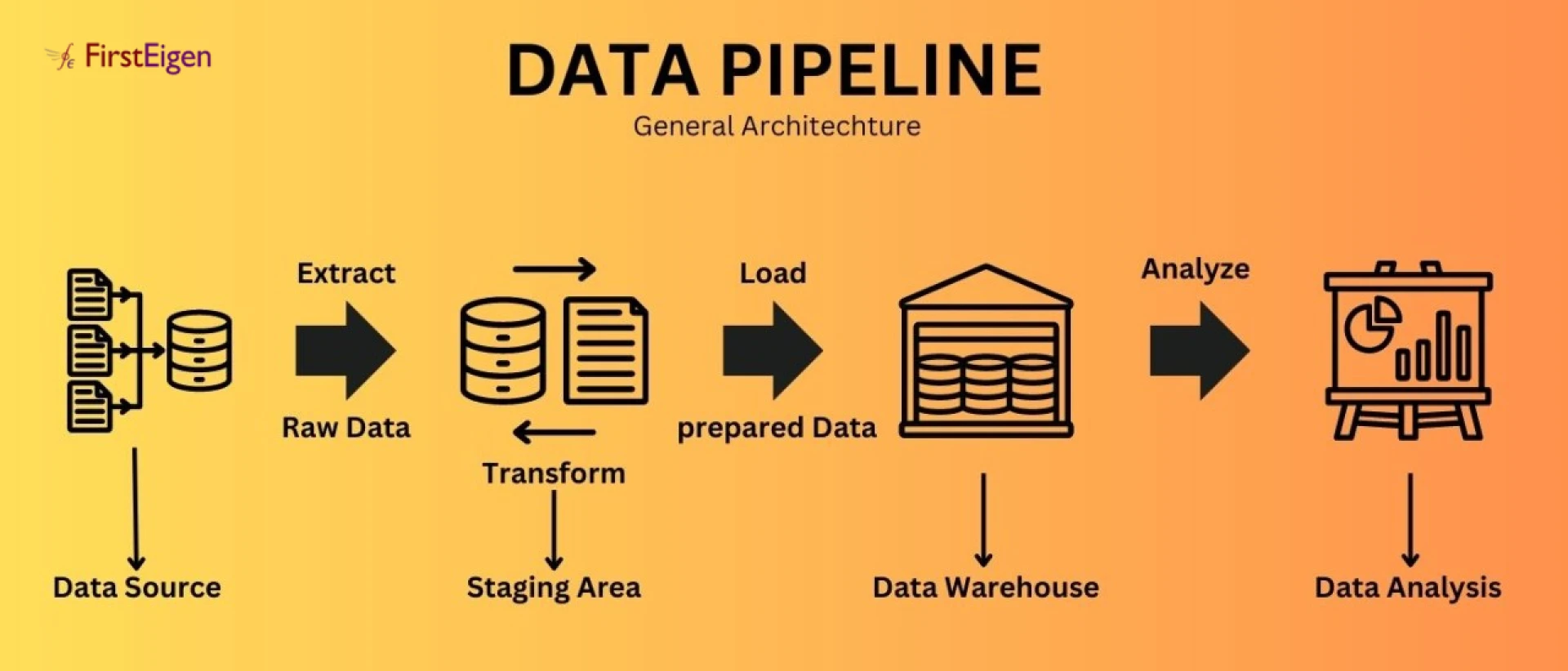

Understanding the Data Remediation Process

A well-defined data remediation process ensures that data issues are not just fixed temporarily but systematically eliminated. Here’s how it typically works:

1. Data Profiling and Discovery - The first step is identifying anomalies and inconsistencies within datasets. This involves scanning data for patterns, outliers, and unexpected values.

2. Root Cause Analysis - Instead of just fixing surface-level errors, organizations need to understand why the issue occurred. Was it a faulty data pipeline? A schema mismatch? Human error?

3. Data Correction and Standardization - Once the root cause is identified, the data is corrected. This could include deduplication, normalization, or filling missing values using predefined rules or AI models.

4. Validation and Monitoring - After remediation, data is validated to ensure accuracy. Continuous monitoring helps detect future issues in real time.

5. Prevention and Automation - The final step involves implementing rules and automation so the same issues don’t recur.

This structured approach ensures that businesses build long-term data trust instead of constantly firefighting issues.
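
As a rough illustration of the profiling and discovery step, the sketch below counts missing required values and duplicate keys in a small set of records. The sample records, the field names (`id`, `email`, `signup_date`), and the `profile` helper are all hypothetical and stand in for whatever a real profiling tool would report.

```python
from collections import Counter

def profile(records, key_field, required_fields):
    """Summarize missing required values and duplicate keys in a list of dicts."""
    missing = Counter()
    for rec in records:
        for field in required_fields:
            value = rec.get(field)
            # Treat None and blank strings as missing.
            if value is None or (isinstance(value, str) and not value.strip()):
                missing[field] += 1
    key_counts = Counter(rec.get(key_field) for rec in records)
    duplicates = sorted(k for k, n in key_counts.items() if k is not None and n > 1)
    return {"rows": len(records), "missing": dict(missing), "duplicate_keys": duplicates}

# Hypothetical sample data for illustration only.
records = [
    {"id": 1, "email": "a@example.com", "signup_date": "2024-01-05"},
    {"id": 2, "email": "", "signup_date": "2024-01-06"},
    {"id": 2, "email": "b@example.com", "signup_date": None},
    {"id": 3, "email": "c@example.com", "signup_date": "2024-01-08"},
]

report = profile(records, key_field="id", required_fields=["email", "signup_date"])
print(report)
```

A report like this only tells you where the problems are. Root cause analysis still has to explain why `id` 2 appears twice before any correction is applied.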

Common Data Remediation Techniques Used Today

Organizations use a variety of data remediation techniques depending on their data maturity and complexity. Some of the most effective ones include:

Rule-Based Validation - Predefined rules are applied to detect inconsistencies—for example, ensuring that dates follow a specific format, or that mandatory fields are not empty.

Data Deduplication - Duplicate records are identified and merged to maintain a single source of truth.

Data Standardization - Data is transformed into a consistent format across systems, such as standardizing address formats or currency values.

Anomaly Detection - Advanced systems use statistical models to detect unusual patterns in data that may indicate errors.

Data Enrichment - Missing or incomplete data is supplemented using external or internal data sources.

While these techniques are powerful, they often require manual intervention unless paired with modern automation tools.
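
To make the first three techniques more concrete, here is a minimal sketch of standardization, deduplication, and rule-based validation chained together. The record layout (`name`, `email`, `joined`), the choice of `email` as the matching key, and the merge policy (the first record wins, later records only fill gaps) are assumptions made for this example. Real systems usually need fuzzier matching and explicit survivorship rules.

```python
from datetime import datetime

def validate(record, required_fields, date_field, date_format="%Y-%m-%d"):
    """Return a list of rule violations for one record (empty list means valid)."""
    errors = []
    for field in required_fields:
        if not str(record.get(field) or "").strip():
            errors.append(f"{field}: missing")
    raw_date = record.get(date_field)
    if raw_date:
        try:
            datetime.strptime(raw_date, date_format)
        except ValueError:
            errors.append(f"{date_field}: expected {date_format}")
    return errors

def standardize(record):
    """Normalize casing and whitespace so equivalent values compare equal."""
    cleaned = dict(record)
    cleaned["email"] = (record.get("email") or "").strip().lower()
    cleaned["name"] = " ".join((record.get("name") or "").split()).title()
    return cleaned

def deduplicate(records, key_field):
    """Merge records sharing a key; later non-empty values only fill earlier gaps."""
    merged = {}
    for rec in records:
        key = rec[key_field]
        if key not in merged:
            merged[key] = dict(rec)
        else:
            for field, value in rec.items():
                if not merged[key].get(field) and value:
                    merged[key][field] = value
    return list(merged.values())

# Hypothetical sample data for illustration only.
raw = [
    {"name": "  ada   lovelace", "email": "Ada@Example.com ", "joined": "2024-01-05"},
    {"name": "Ada Lovelace", "email": "ada@example.com", "joined": ""},
    {"name": "Alan Turing", "email": "alan@example.com", "joined": "05/01/2024"},
]

# Standardize first so that duplicates actually match on the key.
clean = deduplicate([standardize(r) for r in raw], key_field="email")
issues = {r["email"]: validate(r, ["name", "email"], "joined") for r in clean}
print(clean)
print(issues)
```

The order matters here: the two Ada Lovelace rows only collapse into one because their emails were standardized before deduplication ran. The remaining date-format violation is reported rather than silently fixed, since `05/01/2024` is ambiguous without knowing the source system's convention.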

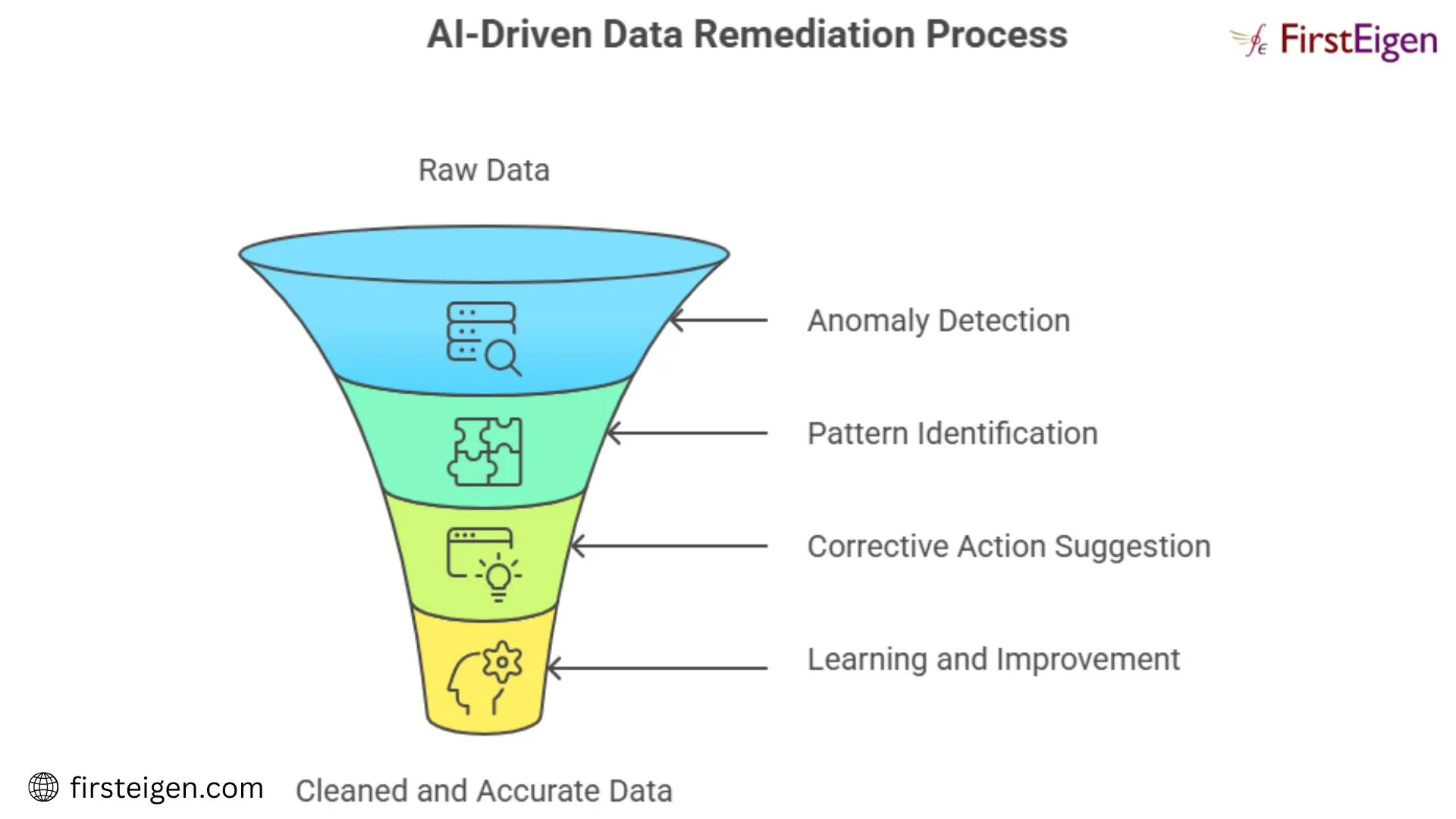

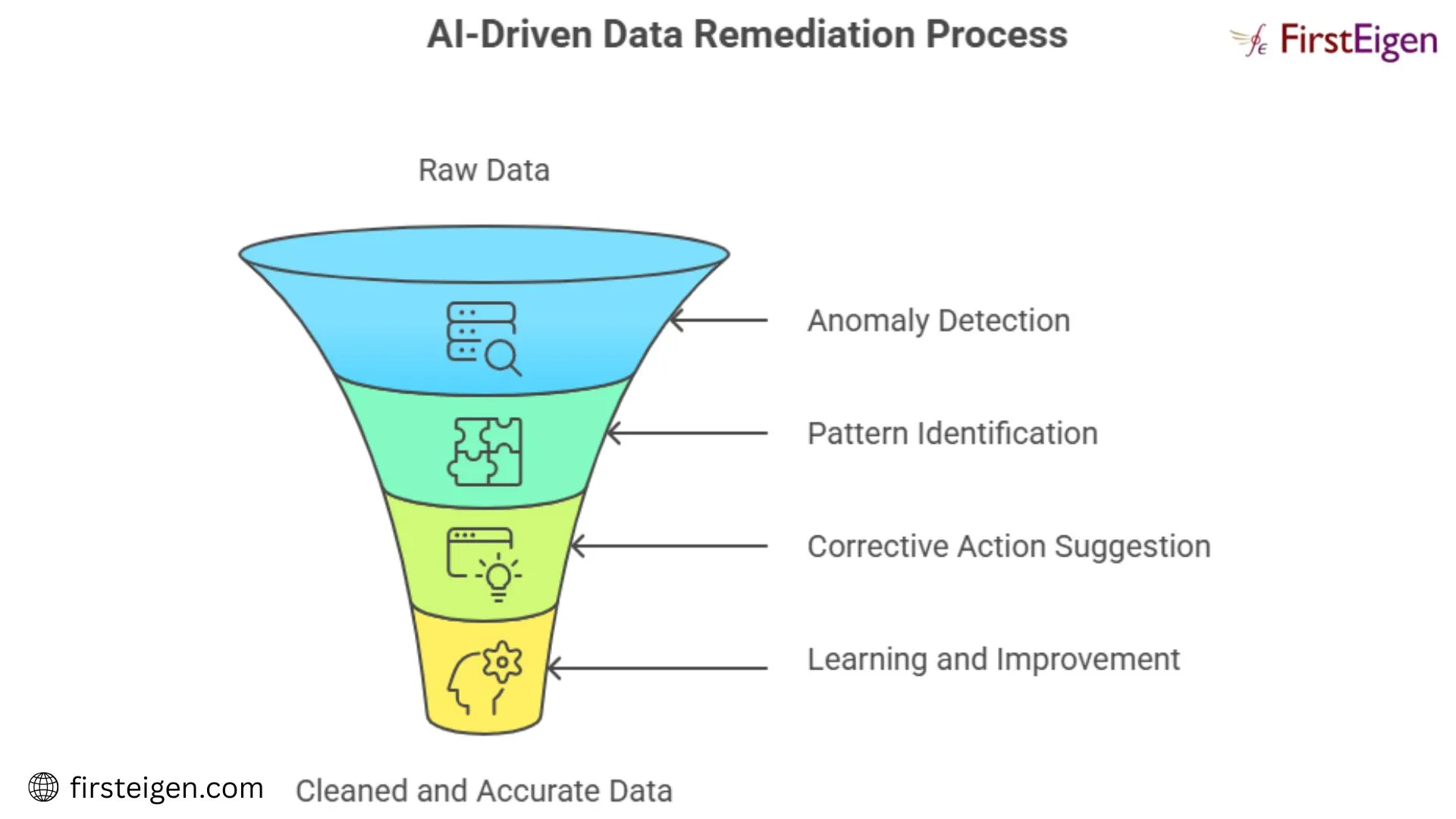

The Rise of AI-Powered Remediation

As data volumes grow, traditional methods struggle to keep up. This is where AI-powered remediation is transforming the landscape.

AI-driven systems can:

- Automatically detect anomalies across massive datasets

- Learn from historical data patterns

- Predict potential data issues before they occur

- Recommend or apply fixes without manual intervention

This shift from reactive to proactive data management is critical for organizations aiming to scale their data operations.
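
Production AI-driven systems are far more sophisticated than anything that fits in a few lines, but the basic idea of automatic anomaly detection can be illustrated with a simple statistical check. The sketch below uses a modified z-score based on the median and the median absolute deviation (MAD), which is less distorted by the outliers it is trying to find than a mean-based score. The 3.5 threshold is a commonly used convention rather than a universal rule, and the metric name and sample values are hypothetical.

```python
from statistics import median

def modified_z_scores(values):
    """Score each value by its distance from the median, scaled by the MAD."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # At least half the values equal the median; this simple sketch flags nothing.
        return [0.0 for _ in values]
    return [0.6745 * (v - med) / mad for v in values]

def find_anomalies(values, threshold=3.5):
    """Return (index, value) pairs whose absolute modified z-score exceeds the threshold."""
    scores = modified_z_scores(values)
    return [(i, v) for i, (v, s) in enumerate(zip(values, scores)) if abs(s) > threshold]

# Hypothetical daily metric with one suspicious spike.
daily_order_counts = [102, 98, 105, 101, 99, 97, 104, 100, 480, 103]
print(find_anomalies(daily_order_counts))
```

A flagged value is a candidate for investigation, not proof of an error. The spike could be a pipeline bug that double-loaded a day, or a genuine sales event.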

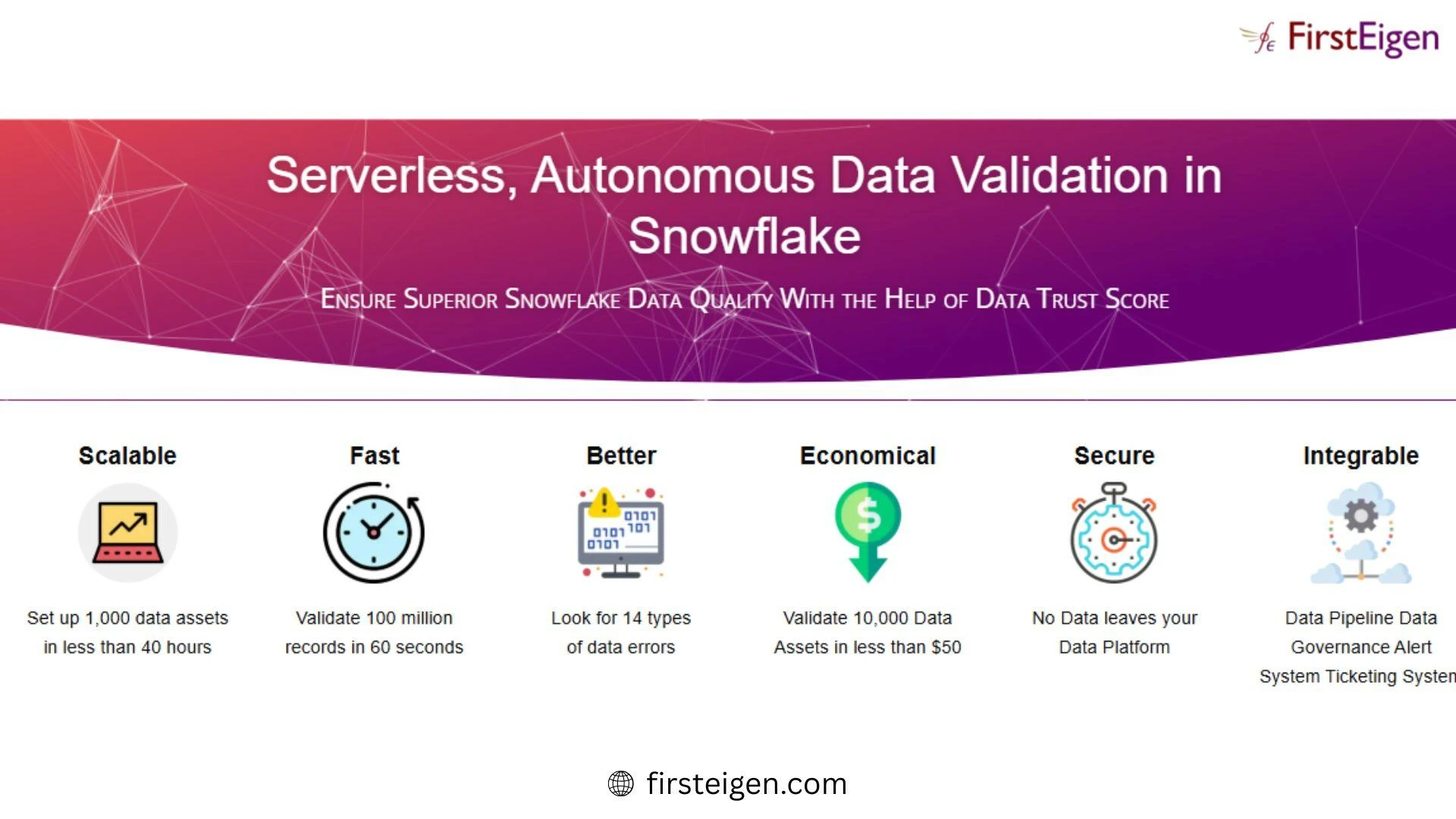

Advanced data quality platforms now integrate AI to enable autonomous monitoring and remediation, reducing the need for constant manual oversight.

Data Quality and Remediation: Why They Must Work Together

There’s often confusion between data quality and remediation, but they are two sides of the same coin.

- Data quality focuses on measuring and maintaining the accuracy, completeness, and consistency of data.

- Data remediation focuses on fixing and preventing issues that degrade data quality.

Without remediation, data quality efforts become reactive and unsustainable. On the other hand, without quality monitoring, remediation lacks direction.

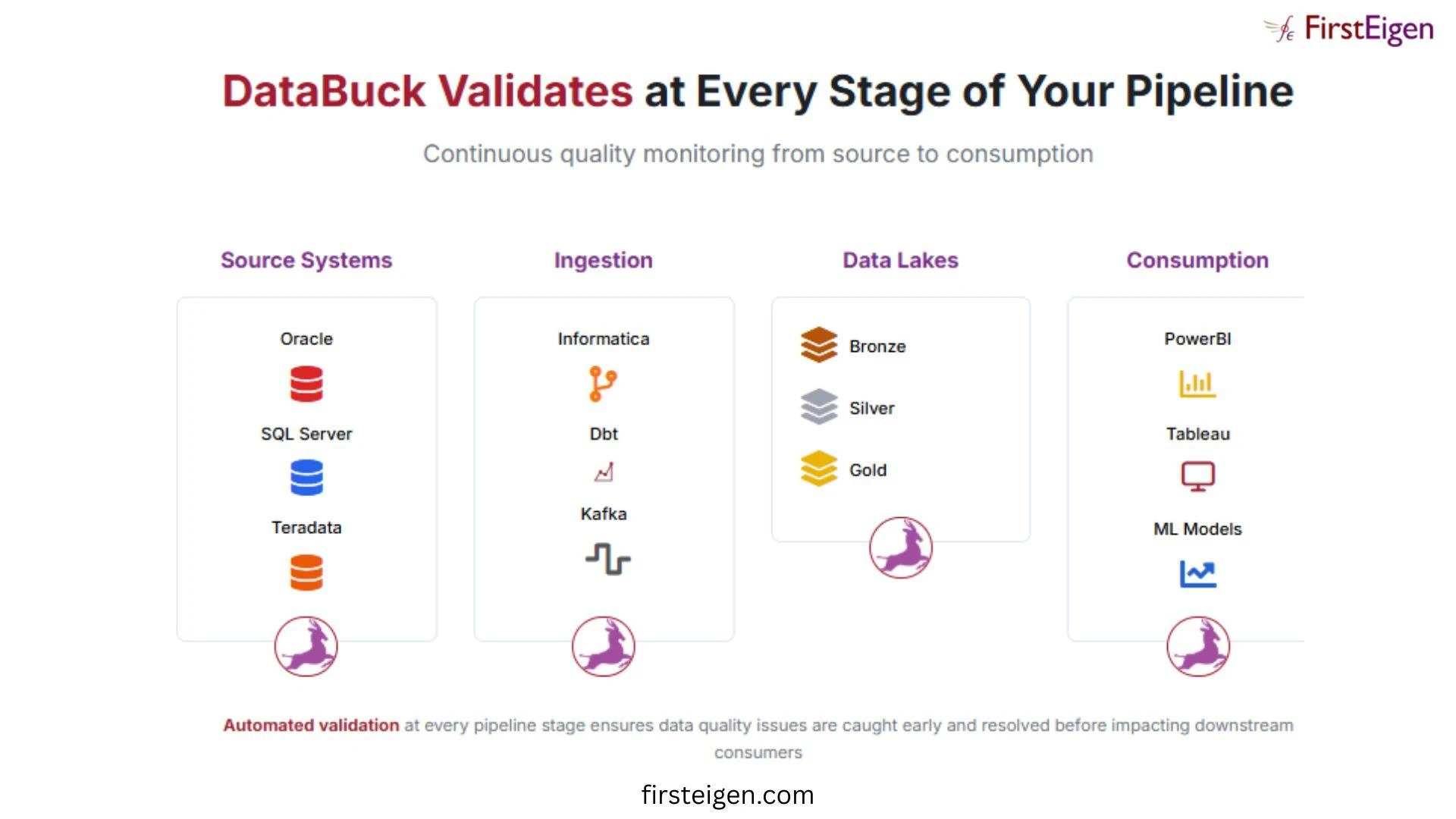

Together, they create a closed-loop system where:

- Data quality tools detect issues

- Remediation processes fix them

- Continuous monitoring prevents recurrence

This integrated approach ensures reliable data for analytics, compliance, and AI initiatives.
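
The loop of detecting, fixing, and re-checking can be sketched in a few lines. Everything here is a simplified assumption: the single quality rule (a non-empty `country` field), the fix (filling a placeholder default), and the function names are hypothetical and not taken from any particular tool.

```python
def detect_issues(records):
    """Quality check: return indexes of records with a blank or missing country."""
    return [i for i, r in enumerate(records) if not (r.get("country") or "").strip()]

def remediate(records, issue_indexes, default_country):
    """Fix: fill flagged gaps with a default and log each change for audit."""
    fixed = [dict(r) for r in records]  # copy so the original data is untouched
    audit_log = []
    for i in issue_indexes:
        fixed[i]["country"] = default_country
        audit_log.append({"row": i, "field": "country", "new_value": default_country})
    return fixed, audit_log

# Hypothetical sample data for illustration only.
records = [
    {"customer": "A", "country": "DE"},
    {"customer": "B", "country": ""},
    {"customer": "C", "country": None},
]

issues_before = detect_issues(records)                 # quality tooling detects
fixed, audit_log = remediate(records, issues_before, default_country="UNKNOWN")
issues_after = detect_issues(fixed)                    # monitoring re-checks the result

print(issues_before, issues_after, len(audit_log))
```

Running the same check before and after the fix is what closes the loop, and the audit log keeps a record of what was changed and why.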

Challenges in Implementing Data Remediation

Despite its importance, many organizations struggle with implementing effective remediation strategies. Common challenges include:

- Data Silos: Disconnected systems make it difficult to maintain consistency

- Manual Processes: Heavy reliance on SQL scripts and manual checks slows down operations

- Lack of Scalability: Traditional tools often struggle to handle large-scale data environments

- Delayed Detection: Issues are often discovered too late, after impacting business outcomes

To overcome these challenges, businesses are increasingly adopting automated and AI-driven solutions that can scale with their data needs.

For a deeper understanding of how modern platforms address these issues, explore this resource on automated data remediation.

How Data Remediation Impacts Business Outcomes

Investing in data remediation isn’t just a technical decision; it’s a business imperative. Here’s how it delivers value:

Improved Decision-Making

Accurate data leads to reliable insights, enabling better strategic decisions.

Enhanced AI Performance

Clean data helps machine learning models produce more accurate and less biased results.

Regulatory Compliance

In industries like banking and healthcare, accurate data is essential for meeting compliance requirements.

Operational Efficiency

Automation reduces manual effort and frees up data teams to focus on innovation.

Increased Trust in Data

Perhaps most importantly, it builds confidence among stakeholders that the data they rely on is trustworthy.

Final Thoughts: Why Data Remediation Should Be a Priority

As organizations continue to scale up their data ecosystems, the importance of data remediation cannot be overstated. It’s not just about fixing errors—it’s about building a resilient data foundation that supports analytics, AI, and business growth.

By combining a structured data remediation process with modern AI-powered remediation tools and robust data quality software, businesses can move from reactive problem-solving to proactive data management.

In a world where data drives decisions, ensuring its accuracy isn’t optional—it’s essential.
