Introduction

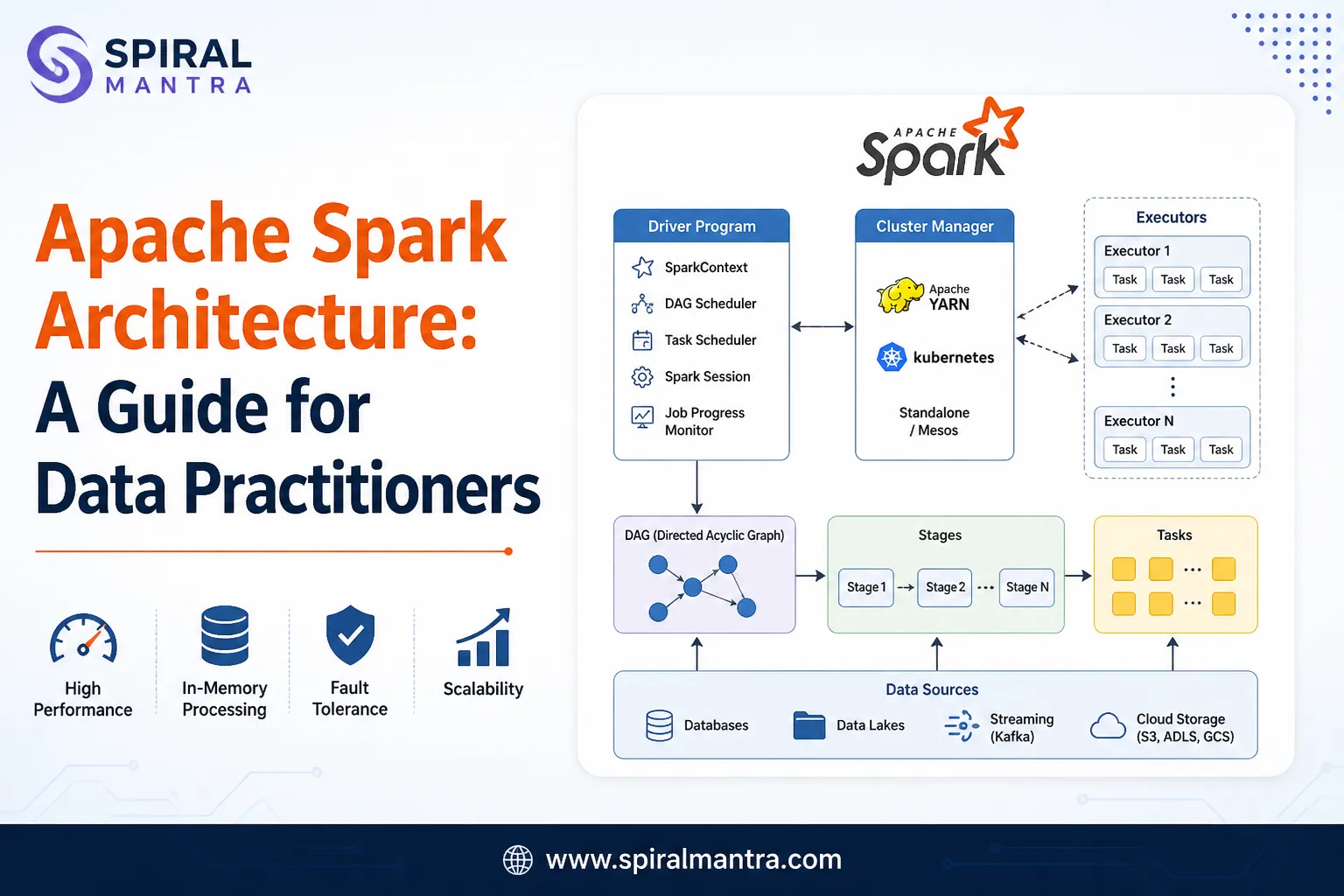

Performance, governance, and cost control are the pillars of a modern Databricks deployment. Teams run unified analytics on the Lakehouse platform, engineers design pipelines for high reliability, and compute and storage layers are tuned for efficiency. Data governance rules must be followed, and real-time processing and AI integration play a major role in Databricks today. To use the platform to its full potential, professionals should follow industry-relevant best practices. One can join Databricks Training for hands-on practice with the platform.

1. Use Delta Lake for Reliable Data Engineering

- Use Delta tables for all critical datasets.

- ACID transactions keep data consistent under concurrent reads and writes.

- Use schema enforcement to prevent ingestion of malformed data.

- Enable schema evolution only when required.

- Maintain data versioning for rollback and audit.

Delta Lake improves reliability, reduces the risk of data corruption, and supports time travel queries for debugging.

2. Optimize Cluster Configuration

- Autoscaling clusters help professionals manage variable workloads.

- Select the instance type to match the type of workload.

- Spot instances reduce cost for workloads that can tolerate interruption.

- The Photon engine speeds up SQL execution.

- Avoid over-provisioning nodes.

The right cluster tuning enables Databricks professionals to work more efficiently and reduce costs.
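The points above can be expressed as a cluster specification. The following is a minimal sketch that builds one as a plain Python dictionary, assuming the field names of the Databricks Clusters REST API (`autoscale`, `runtime_engine`, `aws_attributes`, `autotermination_minutes`). The helper `build_cluster_spec` is hypothetical, the `aws_attributes` block applies only to AWS workspaces, and the runtime version, node type, and worker counts are placeholder values, not recommendations.

```python
import json


def build_cluster_spec(min_workers: int, max_workers: int,
                       node_type_id: str, spark_version: str,
                       idle_minutes: int = 30) -> dict:
    """Build a cluster spec dict (hypothetical helper, not part of any Databricks SDK)."""
    if not 1 <= min_workers <= max_workers:
        raise ValueError("need 1 <= min_workers <= max_workers")
    return {
        "spark_version": spark_version,
        "node_type_id": node_type_id,
        # Autoscaling bounds: a tight upper bound guards against over-provisioning.
        "autoscale": {"min_workers": min_workers, "max_workers": max_workers},
        # Request the Photon engine for faster SQL execution.
        "runtime_engine": "PHOTON",
        # AWS only: keep the first node on-demand, use spot for the rest,
        # and fall back to on-demand if spot capacity is unavailable.
        "aws_attributes": {"first_on_demand": 1,
                           "availability": "SPOT_WITH_FALLBACK"},
        # Shut the cluster down after this many idle minutes.
        "autotermination_minutes": idle_minutes,
    }


# Placeholder values for illustration only.
spec = build_cluster_spec(2, 8, "i3.xlarge", "15.4.x-scala2.12")
print(json.dumps(spec, indent=2))
```

A dictionary like this could be sent as the JSON body of a cluster-creation request or embedded in a job definition; the exact fields accepted should be checked against the API reference for the workspace's cloud provider.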

3. Efficient Data Partitioning and File Management

- Analyse query patterns before choosing how to partition large tables.

- Avoid high-cardinality partition columns.

- The OPTIMIZE command compacts small files into larger ones.

- Z-ordering (ZORDER BY) co-locates related data so that filters can skip more files.

Too many small files reduce performance. Compaction improves query speed.

Example Syntax

OPTIMIZE sales_data ZORDER BY (customer_id);
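To see why high-cardinality partition columns lead to small files, it helps to estimate how much data each partition would hold. The sketch below is simple arithmetic in plain Python; the function names, the 2 TB table size, and the 1 GB-per-partition threshold are assumptions chosen for illustration, and the calculation assumes data is spread evenly across partition values.

```python
def avg_partition_size_gb(table_size_gb: float, distinct_values: int) -> float:
    """Average data per partition when partitioning on a column with
    `distinct_values` distinct values (assumes an even spread)."""
    if distinct_values < 1:
        raise ValueError("distinct_values must be >= 1")
    return table_size_gb / distinct_values


def is_reasonable_partition_column(table_size_gb: float, distinct_values: int,
                                   min_partition_gb: float = 1.0) -> bool:
    """True if each partition would hold at least `min_partition_gb` on average.
    The 1 GB default is an assumed rule of thumb, not a hard limit."""
    return avg_partition_size_gb(table_size_gb, distinct_values) >= min_partition_gb


table_gb = 2048  # hypothetical 2 TB sales table

# A date column with one year of daily values: about 5.6 GB per partition.
print(is_reasonable_partition_column(table_gb, 365))        # True

# A customer_id column with five million values: well under 1 MB per partition.
print(is_reasonable_partition_column(table_gb, 5_000_000))  # False
```

Under these assumptions a column such as `customer_id` is a poor partition key but a natural candidate for Z-ordering, which is how the example statement above uses it.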

4. Implement Data Governance with Unity Catalog

- A centralized catalog simplifies metadata management.

- Role-based access control improves system security.

- Tracking data lineage supports regulatory compliance.

- Enforce fine-grained permissions at the table level.

Governance ensures security. It supports regulatory compliance.
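Table-level, role-based permissions are usually applied with SQL `GRANT` statements. As a rough sketch, the Python helper below generates such statements from a mapping of groups to privileges so that access rules can live in reviewable code. The function `grant_statements`, the group names, and the table `main.sales.orders` are hypothetical, and only two Unity Catalog privileges (`SELECT`, `MODIFY`) are allowed here to keep the example small.

```python
# Subset of table privileges accepted by this sketch (assumption: extend as needed).
ALLOWED_PRIVILEGES = {"SELECT", "MODIFY"}


def grant_statements(table: str, privileges_by_group: dict[str, list[str]]) -> list[str]:
    """Return one GRANT statement per (group, privilege) pair, sorted by group name."""
    statements = []
    for group, privileges in sorted(privileges_by_group.items()):
        for privilege in privileges:
            if privilege not in ALLOWED_PRIVILEGES:
                raise ValueError(f"unsupported privilege: {privilege!r}")
            # Principal names are wrapped in backticks.
            statements.append(f"GRANT {privilege} ON TABLE {table} TO `{group}`;")
    return statements


# Hypothetical groups and table, for illustration only.
for statement in grant_statements(
    "main.sales.orders",
    {"data_engineers": ["SELECT", "MODIFY"], "analysts": ["SELECT"]},
):
    print(statement)
```

The generated statements would still need to be executed by a principal with sufficient rights, and readers typically also need usage privileges on the parent catalog and schema before a table grant takes effect.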

5. Use Structured Streaming for Real-Time Pipelines

- Incremental processing simplifies pipeline design.

- Checkpointing provides fault tolerance by letting a restarted query resume from its last recorded progress.

- Handle late-arriving data with watermarking.

- Monitor streaming jobs continuously.

Streaming reduces latency and enables real-time analytics.
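The idea behind watermarking can be shown without a streaming engine. The following plain-Python sketch tracks the latest event time seen and rejects events that lag behind it by more than an allowed delay. The `Watermark` class is hypothetical and deliberately simplified: it updates the watermark on every event, whereas Spark Structured Streaming (where the corresponding call is `withWatermark`) advances it between micro-batches.

```python
from datetime import datetime, timedelta


class Watermark:
    """Tracks the highest event time seen and rejects events too far behind it."""

    def __init__(self, allowed_lateness: timedelta):
        self.allowed_lateness = allowed_lateness
        self.max_event_time = None

    def accept(self, event_time: datetime) -> bool:
        """Return True if the event is within the allowed lateness, else False."""
        if self.max_event_time is not None:
            watermark = self.max_event_time - self.allowed_lateness
            if event_time < watermark:
                return False  # older than the watermark: treated as too late
        if self.max_event_time is None or event_time > self.max_event_time:
            self.max_event_time = event_time
        return True


wm = Watermark(timedelta(minutes=10))
t0 = datetime(2024, 1, 1, 12, 0)
events = [
    t0,                          # 12:00, first event
    t0 + timedelta(minutes=15),  # 12:15, moves the watermark to 12:05
    t0 + timedelta(minutes=8),   # 12:08, late but within 10 minutes
    t0 + timedelta(minutes=2),   # 12:02, older than the 12:05 watermark
]
results = [wm.accept(t) for t in events]
print(results)  # [True, True, True, False]
```

The allowed delay is a trade-off: a longer delay accepts more late data but keeps aggregation state around for longer.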

6. Monitor and Optimize Query Performance

- Use query history to analyse performance.

- Enable adaptive query execution.

- Cache frequently accessed data.

The right performance tuning practices speed up execution and improve the user experience. Consider checking the Data Analyst Course and joining a training program for the best guidance.

Performance Optimization Table

| Technique | Benefit | Use Case |
|---|---|---|
| Caching | Faster queries | Repeated queries |
| Z-ORDER | More effective filtering | Large datasets |
| Partitioning | Reduced scan time | Time-based queries |

7. CI/CD Integration for Data Pipelines

- Keep notebooks and code under version control.

- Automate deployments with CI/CD pipelines.

- Test pipelines thoroughly before release.

- Keep dev and prod environments separate.

Automation reduces manual errors and makes the system more reliable.
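One way to make pipelines testable in CI is to keep transformation logic in plain functions and resolve environment-specific names in a single place. The sketch below illustrates both ideas in plain Python; the catalog names, the `target_table` and `add_net_amount` helpers, and the column names are all hypothetical.

```python
# Hypothetical per-environment settings; the catalog names are illustrative only.
ENVIRONMENTS = {
    "dev": {"catalog": "dev_catalog"},
    "prod": {"catalog": "prod_catalog"},
}


def target_table(env: str, schema: str, table: str) -> str:
    """Resolve a fully qualified table name for the given environment."""
    if env not in ENVIRONMENTS:
        raise ValueError(f"unknown environment: {env!r}")
    return f"{ENVIRONMENTS[env]['catalog']}.{schema}.{table}"


def add_net_amount(row: dict) -> dict:
    """Pure transformation: net amount is gross amount minus discount."""
    return {**row, "net_amount": row["gross_amount"] - row["discount"]}


# Test functions that a CI job could collect and run before deployment.
def test_net_amount():
    assert add_net_amount({"gross_amount": 100, "discount": 15})["net_amount"] == 85


def test_environments_are_isolated():
    assert target_table("dev", "sales", "orders") != target_table("prod", "sales", "orders")
```

Because the functions have no dependency on a running cluster, tests like these can run on every commit, with slower integration tests against a dev workspace reserved for a later stage.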

8. Cost Optimization Strategies

- Use job clusters instead of all-purpose clusters for scheduled workloads.

- Configure auto-termination so that inactive clusters shut down.

- Use cost dashboards to monitor usage.

- Apply storage lifecycle policies to control storage costs.

The right cost control methods make systems more sustainable.

9. Data Quality and Testing

- Validate data at the ingestion stage.

- Define data quality rules as expectations.

- Automate pipeline testing.

- Log all failures to support debugging.

High-quality data builds user trust and makes analytics more accurate.
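The validation steps above can be sketched as named rules applied to each incoming record, with failures logged rather than silently dropped. This is a plain-Python illustration, not the Databricks expectations API; the rule names, the `validate` function, and the sample records are hypothetical.

```python
import logging
from typing import Callable

logger = logging.getLogger("ingestion")

Expectation = Callable[[dict], bool]

# Each expectation is a named rule that a record must satisfy.
EXPECTATIONS: dict[str, Expectation] = {
    "order_id_present": lambda r: r.get("order_id") is not None,
    "amount_non_negative": lambda r: isinstance(r.get("amount"), (int, float))
    and r["amount"] >= 0,
}


def validate(records: list[dict]) -> tuple[list[dict], list[tuple[dict, list[str]]]]:
    """Split records into valid ones and (record, failed rule names) pairs."""
    valid, rejected = [], []
    for record in records:
        failed = [name for name, check in EXPECTATIONS.items() if not check(record)]
        if failed:
            # Record every failure so it can be debugged later.
            logger.warning("rejected %r: failed %s", record, failed)
            rejected.append((record, failed))
        else:
            valid.append(record)
    return valid, rejected


valid, rejected = validate([
    {"order_id": 1, "amount": 25.0},
    {"order_id": None, "amount": 10.0},
    {"order_id": 3, "amount": -5},
])
print(len(valid), len(rejected))  # 1 2
```

Keeping rejected records together with the names of the rules they failed makes it possible to quarantine them and to track which rules fail most often.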

Conclusion

System optimization, proper governance, and scalable design play a major role in modern Databricks deployments. Teams must design efficient pipelines and manage compute resources carefully. A Data Science Course focuses on using Databricks for machine learning workflows, model training, and large-scale data processing with distributed computing. The right security rules keep data safe, and performance tuning keeps operations fast and cost-effective. Databricks professionals should follow the best practices above to get the best results from the platform. Together, these practices support advanced analytics and AI workloads effectively.
