The U.S. financial services sector is undergoing one of the most consequential technology shifts in its history. Autonomous AI agents — software systems that perceive their environment, reason through options, and take action without constant human direction — are rapidly displacing traditional rule-based automation across banking, capital markets, insurance, and fintech. At the center of this shift is a deceptively simple demand from enterprise leaders: real-time risk awareness and faster, smarter decisions at scale.

This article unpacks the core development models that power enterprise Financial AI agents, explores how U.S. institutions are architecting them for risk and decision intelligence, and answers the most pressing questions practitioners are raising in 2026.

Why U.S. Enterprises Are Prioritizing Financial AI Agents Now

The urgency is data-driven. According to Deloitte's State of AI in the Enterprise 2026 report, based on a survey of 3,235 senior leaders, worker access to AI rose by 50% in 2025, and twice as many leaders as in the prior year now report transformative business impact from AI deployments. Within financial services specifically, 70% of commercial banks have adopted AI in at least one core banking function, and 78% of banks investing in AI have seen a positive ROI within 18 months.

Meanwhile, the U.S. regulatory environment is maturing. In February 2026, the U.S. Department of the Treasury released two AI risk management tools designed specifically to help financial institutions adopt AI safely, giving institutions a matrix of 230 control objectives for managing risk across the AI lifecycle.

This signals that governance and compliance can no longer be afterthoughts; they need to be built into the architecture from day one.

The market opportunity reflects this momentum. The AI agent market is growing at an extraordinary pace, with a projected CAGR of 46.3%, expanding from $7.84 billion in 2025 to $52.62 billion by 2030. For enterprise financial teams, that growth is not abstract — it translates directly into decisions about which development models to adopt, which vendors to partner with, and which architectural patterns will survive regulatory scrutiny.
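As a quick arithmetic check, the quoted growth rate is consistent with the two endpoints. Compounding over the five years from 2025 to 2030, the implied compound annual growth rate is:

```latex
\mathrm{CAGR} = \left(\frac{V_{2030}}{V_{2025}}\right)^{1/5} - 1
             = \left(\frac{52.62}{7.84}\right)^{1/5} - 1
             \approx 6.712^{0.2} - 1
             \approx 0.463
```

which matches the stated 46.3%.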

What Is a Financial AI Agent? A Working Definition

A financial AI agent is an autonomous software system that continuously ingests financial data streams, applies reasoning models to interpret risk signals, and executes or recommends actions — such as flagging a suspicious transaction, adjusting a credit limit, triggering a trade, or escalating a compliance alert — without waiting for explicit human commands at each step.

Unlike traditional rule-based systems or even early machine learning models, modern financial AI agents combine several capabilities in one stack:

- Perception: Ingesting structured and unstructured data from market feeds, transaction systems, customer records, and external signals in real time.

- Reasoning: Applying chain-of-thought, multi-step, or hybrid reasoning models to evaluate options and predict outcomes.

- Action: Executing decisions through integrations with core banking systems, trading platforms, or CRM tools — or surfacing recommendations for human review.

- Learning: Adapting behavior over time based on feedback loops and new data.
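The four capabilities above can be illustrated with a deliberately tiny loop. The sketch below is hypothetical: `ThresholdAgent`, its single amount threshold, and the assumption that a ground-truth fraud label arrives right after each decision are simplifications for illustration, not a production design.

```python
from dataclasses import dataclass, field
from typing import Iterable


@dataclass
class Transaction:
    account_id: str
    amount: float


@dataclass
class ThresholdAgent:
    """Hypothetical toy agent illustrating the perceive -> reason -> act -> learn cycle."""
    threshold: float = 10_000.0
    learning_rate: float = 0.1
    actions: list = field(default_factory=list)

    def perceive(self, event: Transaction) -> float:
        # Perception: extract the signal of interest from a raw event.
        return event.amount

    def reason(self, signal: float) -> str:
        # Reasoning: a trivial rule standing in for a real model.
        return "flag" if signal > self.threshold else "allow"

    def act(self, event: Transaction, decision: str) -> None:
        # Action: only recorded here; a real agent would call downstream systems.
        self.actions.append((event.account_id, decision))

    def learn(self, signal: float, decision: str, was_fraud: bool) -> None:
        # Learning (assumes an immediate label): move the threshold a fraction of the
        # way toward a missed fraud's amount, or toward a false alarm's amount.
        if decision == "allow" and was_fraud:
            self.threshold -= self.learning_rate * (self.threshold - signal)
        elif decision == "flag" and not was_fraud:
            self.threshold += self.learning_rate * (signal - self.threshold)

    def run(self, labelled_events: Iterable[tuple[Transaction, bool]]) -> None:
        for event, was_fraud in labelled_events:
            signal = self.perceive(event)
            decision = self.reason(signal)
            self.act(event, decision)
            self.learn(signal, decision, was_fraud)
```

A false alarm on a 12,000 transaction, for example, nudges the threshold up from 10,000 to 10,200, so the agent becomes slightly less trigger-happy without being reprogrammed.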

Many financial institutions rely on utility-based agents, which score each candidate action with a utility function that trades expected reward against risk, to analyze markets, balance risk-reward trade-offs, flag fraud, and execute trades in real time. The most advanced deployments increasingly rely on multi-agent systems, in which specialized agents collaborate across functions.
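To make "utility-based" concrete, here is a minimal sketch that assumes a mean-variance utility, one common choice among many: each candidate action is scored as expected return minus a penalty proportional to variance, and the agent picks the highest score. The action names, return figures, and `risk_aversion` values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    expected_return: float  # annualized, as a decimal (0.05 = 5%)
    volatility: float       # standard deviation of return, as a decimal


def utility(action: Action, risk_aversion: float) -> float:
    # Mean-variance utility: reward expected return, penalize variance.
    return action.expected_return - 0.5 * risk_aversion * action.volatility ** 2


def choose(actions: list[Action], risk_aversion: float) -> Action:
    # A utility-based agent selects the action with the highest utility.
    return max(actions, key=lambda a: utility(a, risk_aversion))


candidates = [
    Action("hold_cash", 0.04, 0.00),
    Action("equities", 0.09, 0.18),
    Action("bonds", 0.05, 0.06),
]
print(choose(candidates, risk_aversion=2).name)  # equities
print(choose(candidates, risk_aversion=6).name)  # hold_cash
```

A single parameter, `risk_aversion`, is enough to flip the decision, which is how this style of agent encodes an institution's risk appetite.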

The Four Core Development Models for Enterprise Financial AI Agents

Choosing the right architectural model is the single most consequential engineering decision in enterprise financial AI agent development. Each model balances autonomy, explainability, latency, and regulatory risk differently.

1. Reactive Agent Model (Event-Driven, Low-Latency)

The reactive model is the simplest and fastest. These agents operate on a stimulus-response loop: when a specific financial event occurs (a transaction exceeding a threshold, a market price moving beyond a band, a document arriving in a workflow queue), the agent triggers a predefined action immediately.

Best suited for: Fraud detection triggers, payment anomaly alerts, real-time AML screening, market circuit breakers.

Architecture pattern: Kafka or Apache Pulsar event streams → rule engine or lightweight ML classifier → action dispatcher → audit log.
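The event-driven pattern above can be sketched in a few lines. In this illustration a plain Python iterable stands in for the Kafka or Pulsar topic, two hypothetical lambda rules stand in for the rule engine or classifier, and `dispatch` is an empty placeholder; none of these names come from a real product API.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Callable, Iterable


@dataclass
class PaymentEvent:
    event_id: str
    account_id: str
    amount: float


# Hypothetical rules standing in for a rule engine or lightweight ML classifier.
RULES: dict[str, Callable[[PaymentEvent], bool]] = {
    "large_amount": lambda e: e.amount >= 10_000,
    "negative_amount": lambda e: e.amount < 0,
}


def dispatch(action: str, event: PaymentEvent) -> None:
    """Placeholder for the action dispatcher (hold a payment, open an alert, ...)."""


def handle(event: PaymentEvent, audit_log: list[str]) -> list[str]:
    """Stimulus-response step: evaluate rules, dispatch, then write an audit record."""
    hits = [name for name, rule in RULES.items() if rule(event)]
    action = "hold" if hits else "release"
    dispatch(action, event)
    audit_log.append(json.dumps({"ts": time.time(), "event": asdict(event),
                                 "rules_hit": hits, "action": action}))
    return hits


def consume(stream: Iterable[PaymentEvent], audit_log: list[str]) -> None:
    # In production this loop would poll a Kafka/Pulsar consumer instead of an iterable.
    for event in stream:
        handle(event, audit_log)
```

There is no state carried between events, which is exactly what makes the model fast and also what limits it.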

U.S. context: The Treasury's 2026 AI risk framework explicitly recommends that financial institutions design system shutdown and deactivation mechanisms — "automated circuit breakers" — for the rapid, controlled disengagement of AI agents when their outputs deviate from defined ethical or financial logic boundaries. Reactive models with hard-coded circuit breakers are the natural implementation of this requirement.
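One way to implement such a circuit breaker, sketched here under our own assumptions, is to watch the share of recent agent outputs that fall outside an allowed band and disengage the agent once that share crosses a tolerance. `AgentCircuitBreaker`, its bounds, window size, and tolerance are hypothetical parameters, not values taken from the Treasury framework.

```python
from collections import deque


class AgentCircuitBreaker:
    """Disengages an agent when too many recent outputs fall outside allowed bounds."""

    def __init__(self, lower: float, upper: float, window: int = 100,
                 max_violation_rate: float = 0.05):
        self.lower, self.upper = lower, upper
        self.recent = deque(maxlen=window)   # True = that output violated the bounds
        self.max_violation_rate = max_violation_rate
        self.tripped = False

    def record(self, output: float) -> bool:
        """Record one agent output; return True if the agent may keep acting."""
        if self.tripped:
            return False
        self.recent.append(not (self.lower <= output <= self.upper))
        window_full = len(self.recent) == self.recent.maxlen
        if window_full and sum(self.recent) / len(self.recent) > self.max_violation_rate:
            self.tripped = True   # controlled disengagement: stop acting, alert humans
        return not self.tripped

    def reset(self) -> None:
        # Re-engagement is a deliberate human action, never automatic.
        self.recent.clear()
        self.tripped = False
```

The breaker stays open until `reset()` is called, so putting the agent back into service is an explicit human decision rather than something the agent does on its own.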

Limitations: Reactive agents lack contextual memory. They cannot reason across time horizons or adjust to evolving market conditions without explicit reprogramming.

2. Deliberative Agent Model (Goal-Directed, Plan-Based)

Deliberative agents maintain an internal model of their environment and plan sequences of actions to achieve a defined financial goal. In current enterprise deployments they are typically built on large language models (LLMs) augmented with structured financial data, which enables multi-step reasoning before the agent commits to a course of action.

Best suited for: Credit underwriting, loan origination, investment portfolio rebalancing, M&A due diligence, regulatory reporting automation.

Architecture pattern: LLM backbone (GPT-4, Claude, or a fine-tuned financial model) + retrieval-augmented generation (RAG) over enterprise data + planning module + human-in-the-loop checkpoints.
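The pattern can be sketched as a retrieve, reason, checkpoint sequence. In this hypothetical example, `llm` is any function that takes a prompt string and returns text (a stub below, a hosted model in practice), retrieval is naive keyword overlap rather than a vector search, and `review_threshold` is an illustrative policy limit.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    recommendation: str
    rationale: str
    cited_policies: list[str]
    status: str  # "auto_executed" or "pending_human_review"


def retrieve(query: str, knowledge_base: dict[str, str], k: int = 2) -> list[str]:
    # Naive keyword-overlap retrieval; a production system would query a vector index.
    terms = set(query.lower().split())

    def overlap(doc_id: str) -> int:
        return len(terms & set(knowledge_base[doc_id].lower().split()))

    return sorted(knowledge_base, key=overlap, reverse=True)[:k]


def deliberate(application: dict, knowledge_base: dict[str, str],
               llm: Callable[[str], str], review_threshold: float) -> Decision:
    # 1. Retrieve: ground the model in current policy text (RAG).
    policy_ids = retrieve(application["purpose"], knowledge_base)
    context = "\n".join(f"[{pid}] {knowledge_base[pid]}" for pid in policy_ids)
    # 2. Reason: ask the model for a recommendation plus a rationale.
    prompt = (f"Policies:\n{context}\n\nApplication: {application}\n"
              "Reply APPROVE or DECLINE on the first line, then a rationale citing policy IDs.")
    first_line, _, rationale = llm(prompt).partition("\n")
    # 3. Human-in-the-loop checkpoint: large exposures are never executed automatically.
    needs_review = application["amount"] > review_threshold
    return Decision(first_line.strip().upper(), rationale.strip(), policy_ids,
                    "pending_human_review" if needs_review else "auto_executed")


kb = {
    "POL-1": "equipment loan policy requires two years of cash flow",
    "POL-2": "mortgage policy requires an independent appraisal",
    "POL-3": "equipment leases need collateral",
}
stub_llm = lambda prompt: "APPROVE\nCash flow covers debt service [POL-1]."
decision = deliberate({"purpose": "equipment loan", "amount": 250_000}, kb, stub_llm,
                      review_threshold=100_000)
print(decision.status)  # pending_human_review
```

The model may recommend, but the checkpoint decides whether that recommendation executes, which keeps the explainability and approval trail outside the LLM.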

U.S. context: Oracle's Banking 4.0 framework recommends establishing a human-in-the-loop operating model and risk framework aligned with regulatory expectations from day one, embedded alongside deliberative AI agents rather than bolted on afterward.

Limitations: Higher latency than reactive models. Requires high-quality, governed training data. Explainability must be engineered deliberately — LLMs are not naturally interpretable to regulators.

3. Hybrid Reasoning Model (Reactive + Deliberative, Layered)

This is the architecture gaining the most traction across U.S. Tier-1 banks and large fintechs in 2026. A hybrid model pairs a fast reactive layer (for immediate risk signals) with a slower deliberative layer (for contextual analysis and policy recommendations). The two layers communicate through a shared state store and an orchestration controller.

Best suited for: Integrated risk management dashboards, real-time credit decisioning with explainability, Know Your Customer (KYC) workflows, fraud investigation pipelines.

U.S. case example: At a leading bank, Accenture helped transform the KYC process with agentic AI, replacing a slow, costly manual process running on legacy systems with a fully agentic end-to-end workflow. KYC analysts no longer spend their time on highly manual activities; instead, they focus on high-value, judgment-based investigation work.

Architecture pattern: Event bus (Kafka/Confluent) → reactive screening agent → shared state store → deliberative reasoning agent (RAG + LLM) → orchestration controller → action dispatcher + audit trail.

One senior data scientist at a global consulting firm described this approach: "By orchestrating these sorts of agents, we can automate the ingestion and transformation, and that can boost accuracy and enable end-to-end insights. With the volume and variety as part of the IoT, the modular design of this is going to scale more gracefully."
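A compact sketch of the hybrid pattern follows, assuming a single process: a dictionary stands in for the shared state store, `reactive_screen` is a toy scoring rule, and the deliberative layer is passed in as a function. In a real deployment the slow path would typically run asynchronously so it never blocks the fast path; it is shown synchronously here for readability.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SharedState:
    # Stand-in for a shared state store (e.g. a key-value cache) read by both layers.
    cases: dict[str, dict] = field(default_factory=dict)


def reactive_screen(txn: dict) -> float:
    # Fast layer: a cheap risk score in [0, 1]; an illustrative rule, not a real model.
    return min(txn["amount"] / 50_000, 1.0)


class Orchestrator:
    def __init__(self, deliberate: Callable[[dict], str], escalate_above: float = 0.5):
        self.state = SharedState()
        self.deliberate = deliberate          # slow layer, e.g. RAG + LLM analysis
        self.escalate_above = escalate_above
        self.audit: list[tuple[str, str]] = []

    def on_event(self, txn: dict) -> str:
        case = {"txn": txn, "score": reactive_screen(txn)}
        self.state.cases[txn["id"]] = case
        if case["score"] <= self.escalate_above:
            action = "allow"                  # fast path: no deliberation needed
        else:
            action = self.deliberate(case)    # slow path reasons over the shared case record
        case["action"] = action
        self.audit.append((txn["id"], action))
        return action
```

Only events the reactive layer considers risky pay the latency cost of deliberation, which is the main reason this layering scales.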

4. Multi-Agent Orchestration Model (Specialized, Collaborative)

The most sophisticated model deploys fleets of specialized agents — each trained for a specific financial domain (fraud, credit risk, compliance, market risk, customer intelligence) — that collaborate through a shared orchestration layer. No single agent controls the full workflow; instead, agents negotiate, delegate, and escalate tasks based on their confidence and authority levels.

Best suited for: Enterprise-wide risk intelligence platforms, cross-functional financial operations centers, autonomous treasury management, systemic risk monitoring.

U.S. context: Nearly 50% of U.S. banks and insurers are creating dedicated roles to supervise AI agents, and most CIOs expect AI agents to operate under a central governance model that enables real-time monitoring and telemetry tracking of AI agent activity and system interactions.

Architecture pattern: Agent registry → task router → specialized agent pool (fraud agent, compliance agent, credit agent, market risk agent) → multi-agent validation layer → shared audit ledger → human escalation gateway.
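The registry, router, validation, and escalation steps can be sketched as follows. The agents are hypothetical stand-ins that return a verdict and a confidence, and the validation rule (unanimous verdicts with every confidence at or above `min_confidence`, otherwise escalate) is one illustrative policy, not a standard.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentResult:
    agent: str
    verdict: str
    confidence: float  # 0..1


Agent = Callable[[dict], AgentResult]


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: dict[str, list[Agent]] = {}

    def register(self, task_type: str, agent: Agent) -> None:
        self._agents.setdefault(task_type, []).append(agent)

    def agents_for(self, task_type: str) -> list[Agent]:
        return self._agents.get(task_type, [])


def route(task: dict, registry: AgentRegistry, ledger: list[AgentResult],
          min_confidence: float = 0.8) -> str:
    results = [agent(task) for agent in registry.agents_for(task["type"])]
    ledger.extend(results)                    # shared audit ledger keeps every opinion
    if not results:
        return "escalate_to_human"            # no agent has authority for this task type
    verdicts = {r.verdict for r in results}
    # Validation layer: require agreement and sufficient confidence, else escalate.
    if len(verdicts) == 1 and min(r.confidence for r in results) >= min_confidence:
        return verdicts.pop()
    return "escalate_to_human"
```

Disagreement between specialized agents is treated as information: rather than letting one agent win, the case goes to the human escalation gateway.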

A forward-looking multi-agent banking design adds two further elements: a centralized agent command center that maps all deployments with real-time performance, compliance, and risk metrics, and cross-functional agent tuning that adjusts agent behavior as a collective group rather than within siloed business units.

Key Technical Components in Enterprise Financial AI Agent Architecture

Regardless of which development model an organization chooses, several technical components appear consistently across enterprise-grade deployments in the U.S.

Real-Time Data Pipelines

No financial AI agent can deliver decision intelligence without a robust data foundation. Leading banks are consolidating fragmented data into a unified, real-time foundation to power trusted decisioning and dynamic personalization while adhering to data stewardship requirements. Technologies such as Apache Kafka, AWS Kinesis, Databricks Delta Live Tables, and Snowflake Streaming are common components of this layer.

Retrieval-Augmented Generation (RAG)

RAG architectures allow LLM-based agents to query enterprise knowledge bases — regulatory documents, internal policies, credit histories, market research — in real time, without retraining the underlying model. This is critical for compliance-heavy environments where policies change frequently.

This in turn requires data pipelines that feed the RAG layer from multiple enterprise systems, so that agents work from real-time context rather than stale snapshots.
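The "no retraining" property is easiest to see in a toy retriever. The sketch below builds a small TF-IDF index with cosine similarity using only the standard library; adding or replacing a policy document changes what the agent retrieves immediately, while the model itself is untouched. `PolicyIndex` and the sample documents are hypothetical, and production systems generally use embedding models and a vector store instead.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


class PolicyIndex:
    """Minimal TF-IDF index: adding a document updates retrieval with no model retraining."""

    def __init__(self) -> None:
        self.docs: dict[str, Counter] = {}

    def add(self, doc_id: str, text: str) -> None:
        self.docs[doc_id] = Counter(tokenize(text))

    def _idf(self, term: str) -> float:
        df = sum(1 for tf in self.docs.values() if term in tf)
        return math.log((1 + len(self.docs)) / (1 + df)) + 1   # smoothed IDF

    def _vector(self, tf: Counter) -> dict[str, float]:
        return {t: c * self._idf(t) for t, c in tf.items()}

    def search(self, query: str, k: int = 3) -> list[tuple[str, float]]:
        q = self._vector(Counter(tokenize(query)))
        q_norm = math.sqrt(sum(v * v for v in q.values())) or 1.0
        scores = []
        for doc_id, tf in self.docs.items():
            d = self._vector(tf)
            d_norm = math.sqrt(sum(v * v for v in d.values())) or 1.0
            dot = sum(w * d.get(t, 0.0) for t, w in q.items())
            scores.append((doc_id, dot / (q_norm * d_norm)))   # cosine similarity
        return sorted(scores, key=lambda s: s[1], reverse=True)[:k]
```

The top-ranked passages would then be inserted into the agent's prompt, so a policy update published this morning can shape this afternoon's decisions.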

MLOps and Continuous Model Governance

Enterprise financial AI agents are not fire-and-forget deployments. They require ongoing monitoring for model drift, bias, and performance degradation. U.S. regulators — including the OCC, Federal Reserve, and now the Treasury — expect financial institutions to maintain model validation frameworks aligned with SR 11-7 guidance. Leading AI development companies deliver full lifecycle services — from AI strategy and data engineering to deployment and optimization — including MLOps, monitoring, and continuous improvement at scale.
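A common drift check in credit risk model monitoring is the Population Stability Index (PSI), which compares the distribution of a model input or score in production against its development baseline. The sketch below assumes bins cut at the baseline's deciles; the 0.1 and 0.25 cut-offs in `drift_status` are widely used rules of thumb rather than regulatory thresholds.

```python
import math
from bisect import bisect_right


def psi(expected: list[float], actual: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index: sum over bins of (a_i - e_i) * ln(a_i / e_i)."""
    ordered = sorted(expected)
    # Interior bin edges at the baseline's quantiles (deciles when bins=10).
    edges = [ordered[len(ordered) * i // bins] for i in range(1, bins)]

    def shares(sample: list[float]) -> list[float]:
        counts = [0] * bins
        for x in sample:
            counts[bisect_right(edges, x)] += 1
        # Floor empty bins at eps so the logarithm stays finite.
        return [max(c / len(sample), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def drift_status(value: float) -> str:
    # Common rules of thumb, not regulatory thresholds.
    if value < 0.1:
        return "stable"
    return "monitor" if value < 0.25 else "investigate"
```

Running a check like this on a schedule, and recording the result, gives validators the documented ongoing monitoring that model risk guidance expects.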

Compliance-by-Design Architecture

The strategy is to embed governance into agent design from day one. A disciplined AI strategy adopts "compliance by design": controls are architected alongside agents rather than retrofitted after deployment, or after something breaks.
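In code, compliance by design can be as simple as making it impossible to call an agent action without passing through policy checks and an audit write. The decorator below is a hypothetical sketch: `governed`, `limit_check`, the 5,000 authority limit, and the in-memory `AUDIT_TRAIL` list (standing in for an append-only audit store) are all illustrative.

```python
import functools
import json
import time
from typing import Callable, Optional

AUDIT_TRAIL: list[str] = []   # stand-in for an append-only audit store


class PolicyViolation(Exception):
    pass


def governed(policy_checks: list[Callable[[dict], Optional[str]]]):
    """Wrap an agent action so every call runs policy checks and writes an audit record."""
    def decorator(action: Callable[[dict], str]) -> Callable[[dict], str]:
        @functools.wraps(action)
        def wrapper(request: dict) -> str:
            findings = [check(request) for check in policy_checks]
            violations = [f for f in findings if f]
            AUDIT_TRAIL.append(json.dumps({"ts": time.time(), "action": action.__name__,
                                           "request": request, "violations": violations}))
            if violations:
                raise PolicyViolation("; ".join(violations))   # blocked, but still audited
            return action(request)
        return wrapper
    return decorator


def limit_check(request: dict) -> Optional[str]:
    # Hypothetical authority limit for autonomous credit-limit changes.
    return "amount exceeds agent authority" if request["amount"] > 5_000 else None


@governed([limit_check])
def adjust_credit_limit(request: dict) -> str:
    return f"limit for {request['account']} set to {request['amount']}"
```

Because the checks live in the wrapper rather than inside each action, a new agent capability inherits the controls the moment it is declared.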

Real-Time Risk Use Cases Driving Enterprise Adoption in the U.S.

Fraud Detection and AML

Real-time fraud detection was among the first use cases to benefit from AI agents. Modern deployments go well beyond static rule engines — they apply behavioral graph analysis, device fingerprinting, and anomaly detection in milliseconds across billions of transactions. Multi-agent orchestration models are particularly effective here, with specialized agents handling transaction screening, account history analysis, and network link analysis in parallel.
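As an illustration of the anomaly-detection piece, the sketch below keeps a running mean and standard deviation of transaction amounts per account (Welford's online algorithm) and flags amounts far from that account's norm. It represents a single hypothetical screening agent, not a full fraud stack; the z-score threshold and minimum history are assumed values.

```python
import math
from collections import defaultdict


class RunningStats:
    """Welford's online algorithm for mean and sample variance."""

    def __init__(self) -> None:
        self.n, self.mean, self.m2 = 0, 0.0, 0.0

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def std(self) -> float:
        return math.sqrt(self.m2 / (self.n - 1)) if self.n > 1 else 0.0


class AmountAnomalyDetector:
    def __init__(self, z_threshold: float = 4.0, min_history: int = 20):
        self.stats = defaultdict(RunningStats)   # one running profile per account
        self.z_threshold, self.min_history = z_threshold, min_history

    def score(self, account_id: str, amount: float) -> bool:
        """Return True if the amount is anomalous for this account."""
        s = self.stats[account_id]
        anomalous = (s.n >= self.min_history and s.std > 0
                     and abs(amount - s.mean) / s.std > self.z_threshold)
        if not anomalous:
            s.update(amount)   # flagged amounts are kept out of the baseline
        return anomalous
```

Each update is constant time and memory per account, which is what makes per-event scoring feasible at high transaction volumes.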

Credit Risk and Underwriting

Commercial banks are increasingly deploying AI-centric risk, credit, and portfolio-management frameworks, including predictive analytics for real-time scoring and continuous monitoring. AI agents can now assess creditworthiness by ingesting not just traditional credit bureau data but also cash flow patterns, payroll feeds, and alternative data sources — enabling more inclusive and accurate underwriting decisions, particularly for SMBs that have historically been underserved.
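The sketch below shows how cash-flow data might be turned into underwriting features. The feature set is hypothetical, and `dscr` here is a simplified coverage ratio (average monthly net cash flow divided by the proposed payment) rather than a lender's formal debt service coverage definition.

```python
from statistics import mean, pstdev


def cash_flow_features(inflows: list[float], outflows: list[float],
                       proposed_payment: float) -> dict[str, float]:
    """Derive illustrative underwriting features from aligned monthly cash-flow history."""
    net = [i - o for i, o in zip(inflows, outflows)]
    avg_in, avg_net = mean(inflows), mean(net)
    return {
        "avg_net_cash_flow": avg_net,
        # Coefficient of variation of inflows: higher means less predictable revenue.
        "inflow_volatility": pstdev(inflows) / avg_in if avg_in else float("inf"),
        "negative_months": float(sum(1 for n in net if n < 0)),
        # Simplified coverage: times average net cash flow covers the new payment.
        "dscr": avg_net / proposed_payment,
    }
```

Features like these can be computed for a business with a thin bureau file, which is the mechanism behind the more inclusive SMB underwriting described above.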

Market Risk and Portfolio Management

Deliberative agents are well-suited to market risk management, where the ability to reason across time horizons and model multiple scenarios is essential. These agents can continuously monitor portfolio exposures, stress-test against simulated market shocks, and recommend hedging strategies with full audit trails.
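Two building blocks such an agent would call repeatedly are scenario stress tests and value-at-risk. The sketch below applies fractional price shocks to position exposures and computes historical-simulation VaR from a series of daily P&L values; the positions and scenarios are hypothetical, and conventions for choosing the VaR quantile vary between institutions.

```python
def stress_test(exposures: dict[str, float],
                scenarios: dict[str, dict[str, float]]) -> dict[str, float]:
    """P&L of a portfolio under each scenario; shocks are fractional price moves per asset."""
    return {name: sum(exposures[a] * shocks.get(a, 0.0) for a in exposures)
            for name, shocks in scenarios.items()}


def historical_var(pnl_history: list[float], confidence: float = 0.99) -> float:
    """Historical-simulation VaR: a loss level exceeded on roughly (1 - confidence) of days."""
    losses = sorted(-p for p in pnl_history)   # losses as positive numbers, ascending
    index = min(int(confidence * len(losses)), len(losses) - 1)
    return losses[index]


portfolio = {"equities": 1_000_000.0, "rates": 500_000.0}
scenarios = {
    "equity_crash": {"equities": -0.20},
    "rate_spike": {"rates": -0.05, "equities": -0.05},
}
results = stress_test(portfolio, scenarios)
```

Because both functions are deterministic and cheap, an agent can rerun them on every exposure change and attach the inputs and outputs to its hedging recommendation as the audit trail.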

KYC and Regulatory Compliance

KYC compliance is one of the most resource-intensive processes in U.S. banking. Agentic AI is now automating document classification, data extraction, cross-reference validation, and exception flagging — dramatically reducing the manual burden while improving detection rates and reducing false positives.
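Cross-reference validation can be illustrated with a small check that compares fields extracted from an identity document against the customer record. The sketch assumes fuzzy name matching with Python's `difflib.SequenceMatcher` after accent and punctuation normalization, an exact date-of-birth match, and a hypothetical 0.9 similarity threshold; real KYC matching typically uses more specialized name-matching logic.

```python
import unicodedata
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    # Strip accents, punctuation, and case so "José O'Neil" compares equal to "Jose ONeil".
    ascii_name = unicodedata.normalize("NFKD", name).encode("ascii", "ignore").decode()
    kept = "".join(ch for ch in ascii_name.lower() if ch.isalnum() or ch == " ")
    return " ".join(kept.split())


def cross_reference(extracted: dict[str, str], record: dict[str, str],
                    name_threshold: float = 0.9) -> list[str]:
    """Compare document-extracted fields with the customer record; return exceptions."""
    exceptions = []
    similarity = SequenceMatcher(None, normalize(extracted["name"]),
                                 normalize(record["name"])).ratio()
    if similarity < name_threshold:
        exceptions.append(f"name mismatch (similarity {similarity:.2f})")
    if extracted["date_of_birth"] != record["date_of_birth"]:
        exceptions.append("date of birth mismatch")
    return exceptions
```

Only records that return a non-empty exception list would be routed to an analyst, which is where the reduction in manual review comes from.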

Treasury and Liquidity Management

Banks and businesses are exploring API-led treasury and balance-sheet orchestration, with real-time APIs, modular treasury services, and AI forecasting enabling joint optimization of liquidity, payments, FX risk, and working capital.

The Role of Proven Technology Partners in Financial AI Agent Development

Building enterprise-grade financial AI agents is not a solo endeavor. The development complexity, regulatory requirements, and integration challenges demand partners with deep domain expertise in both financial services and AI engineering.

When evaluating a Financial AI Agent Development partner, U.S. enterprises should assess several dimensions:

- Domain expertise: A qualified partner must understand credit, AML, fraud, compliance, payments, treasury, and audit workflows, not merely AI engineering. Generic LLM patterns are insufficient in regulated financial environments.

- Production evidence: The strongest companies have live, scaled deployments across banks, insurers, credit unions, and fintechs, not just prototypes. Proven production systems signal reliability, scalability, and regulatory acceptance, and they reduce adoption risk for new buyers in banking, financial services, and insurance (BFSI).

- Integration depth: Agents must connect cleanly with core banking systems, CRMs, fraud platforms, and compliance tools. Vendors with prebuilt connectors reduce engineering overhead and accelerate go-live timelines significantly.

Firms like Azilen Technologies bring a combination of enterprise software engineering depth and AI agent specialization that is particularly well-suited for U.S. financial services organizations navigating this transition. For organizations also evaluating the broader technology landscape, resources like the guide to Financial AI Agent Development companies offer structured frameworks for vendor selection and capability benchmarking.

Governance, Regulatory Alignment, and Risk Management

The U.S. Regulatory Landscape in 2026

The U.S. approach to AI regulation in financial services is evolving rapidly. The Treasury's February 2026 framework provides financial institutions with a structured matrix of control objectives, covering the full AI lifecycle from data governance and model development to deployment, monitoring, and decommissioning. Simultaneously, bank regulators including the OCC and Federal Reserve have signaled increasing expectations around model explainability, fairness testing, and audit trails for AI-driven decisions.

The United States has moved toward a more innovation-focused approach to AI regulation, with a new federal Executive Order setting out a "minimally burdensome" AI policy aimed at speeding deployment and strengthening national competitiveness. This creates a window of opportunity for U.S. financial institutions to move aggressively — but not recklessly.

Human-in-the-Loop Requirements

Banks must embed ethical oversight, explainability, and policy controls directly into agent workflows. Human supervision and interaction in high-impact decisions support regulatory compliance, risk alignment, and operational resilience.

This is not just a regulatory nicety. It is an architectural necessity. High-consequence decisions — large credit approvals, suspicious activity reports, trade execution above defined thresholds — must have defined human review checkpoints with documented audit trails. Agent systems that lack these checkpoints will face regulatory pushback regardless of their technical sophistication.
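Escalation thresholds like these can be expressed as explicit, reviewable configuration rather than logic buried inside an agent. In the hypothetical sketch below, the dollar limits, decision-type names, and the choice to fail closed on unknown decision types are illustrative; each returned `Checkpoint` is the kind of record that would be written to the audit trail.

```python
from dataclasses import dataclass

# Hypothetical limits; real values come from the institution's risk appetite.
REVIEW_THRESHOLDS = {
    "credit_approval": 250_000.0,    # approvals above this amount need a human
    "trade_execution": 1_000_000.0,  # notional above this needs a human
}
ALWAYS_REVIEW = {"suspicious_activity_report"}  # never filed without human sign-off


@dataclass
class Checkpoint:
    decision_type: str
    amount: float
    requires_human: bool
    reason: str


def checkpoint(decision_type: str, amount: float = 0.0) -> Checkpoint:
    if decision_type in ALWAYS_REVIEW:
        return Checkpoint(decision_type, amount, True, "decision type always requires review")
    limit = REVIEW_THRESHOLDS.get(decision_type)
    if limit is None:
        return Checkpoint(decision_type, amount, True, "unknown decision type: fail closed")
    if amount > limit:
        return Checkpoint(decision_type, amount, True, f"amount exceeds {limit:,.0f} threshold")
    return Checkpoint(decision_type, amount, False, "within autonomous authority")
```

Keeping the thresholds in data means risk and compliance teams can review and change them without touching agent code.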

Data Governance and Privacy

Financial AI agents process extraordinarily sensitive data. Governance frameworks must address data lineage (knowing where each data point in a model came from), data quality monitoring (detecting degradation in input feeds before it propagates to model outputs), and privacy compliance (ensuring agents do not inadvertently surface PII in ways that violate Gramm-Leach-Bliley Act requirements).
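On the privacy point, one simple safeguard is to mask recognizable identifiers in agent output before it is displayed or logged. The regular expressions below are illustrative assumptions and far from exhaustive; they show the shape of the control, not a complete PII detector.

```python
import re

# Illustrative patterns only; production PII detection needs broader, tested coverage.
PII_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "ACCOUNT": re.compile(r"\b\d{10,17}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Mask PII in agent output before it is shown or logged; return text and hit counts."""
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label} REDACTED]", text)
        counts[label] = n
    return text, counts
```

The hit counts are worth logging on their own: a sudden rise is an early signal that an upstream prompt or data feed has started leaking identifiers.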

Building vs. Buying: Strategic Considerations for U.S. Enterprises

One of the most frequently debated questions in enterprise financial AI agent development is whether to build custom agents, purchase packaged solutions, or pursue a hybrid approach.

Custom build offers maximum control over model behavior, training data, and integration architecture. It is appropriate for organizations with proprietary data assets that create genuine competitive differentiation (e.g., a bank with decades of proprietary credit performance data). The downside is cost, time-to-market, and the ongoing talent burden.

Packaged solutions from major cloud providers (Amazon Bedrock, Azure AI Foundry, Google Vertex AI) and specialized vendors accelerate deployment but may lack the domain-specific customization that regulated financial use cases demand. Even so, banks are expected to turn to platforms from major cloud providers to build AI agents that align with their brands, compliance requirements, and service standards.

Hybrid approaches — partnering with specialized AI development firms to build custom agents on top of enterprise cloud platforms — are emerging as the dominant model for mid-to-large U.S. financial institutions. This approach combines the speed and reliability of proven infrastructure with the flexibility of purpose-built financial logic.

For organizations requiring technology vendor evaluation frameworks beyond AI agents, resources on Financial AI Agent Development across adjacent software categories provide useful benchmarks for assessing partner capabilities.

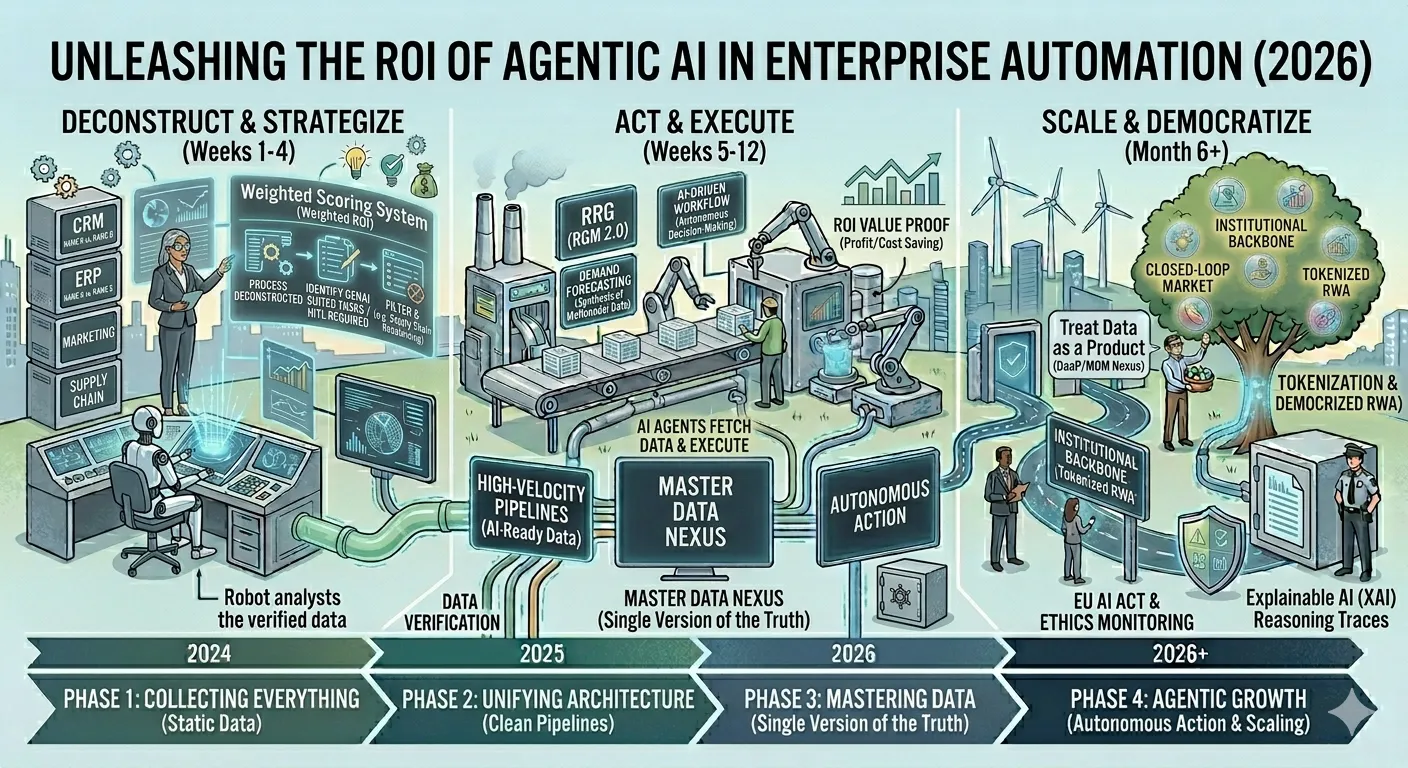

Implementation Roadmap: From Pilot to Production

Phase 1: Discovery and Use Case Prioritization (Weeks 1–6)

Map high-value, well-defined use cases against data readiness and regulatory risk. Fraud detection and AML screening are typically the lowest-risk starting points because they have clear success metrics, existing labeled training data, and established regulatory frameworks for model validation.

Phase 2: Architecture Design and Data Foundation (Weeks 6–14)

Design the agent architecture — reactive, deliberative, hybrid, or multi-agent — based on latency requirements, explainability needs, and integration complexity. Build or validate real-time data pipelines. Establish baseline MLOps infrastructure. Define governance checkpoints and human escalation thresholds.

Phase 3: Proof of Concept (Weeks 14–22)

Deploy a limited agent in a sandboxed environment with historical data. Validate performance against defined KPIs. Assess model bias, edge case behavior, and failure modes. Document findings for internal risk and compliance review.

Phase 4: Production Deployment and Monitoring (Weeks 22–36)

Move to controlled production deployment. Implement real-time telemetry and alerting. Establish model performance dashboards. Begin stakeholder training and change management. Plan for iterative model improvement.

Finance teams that deploy AI agents to manage accounts payable, expense reporting, and financial analysis can cut processing time by 50%, improve accuracy, and strengthen regulatory compliance — freeing up resources to focus on business growth rather than transaction management.

Frequently Asked Questions (FAQs)

1. What distinguishes a financial AI agent from a traditional financial AI model?

A traditional AI model is a static analytical tool: you feed it data, it produces a prediction or classification, and a human decides what to do with the output. A financial AI agent is a dynamic, autonomous system. It perceives its environment continuously, reasons through options using its own planning capability, executes actions through integrated systems, and learns from outcomes over time — all without requiring a human to manage each decision cycle.

The distinction matters in practice: an AI model might flag a potentially fraudulent transaction for human review, while an AI agent might automatically freeze the account, notify the customer, draft a suspicious activity report (SAR) for analyst sign-off, and initiate an investigation workflow, all within seconds.

2. How does real-time risk decisioning differ from batch-based risk processing in AI agent systems?

Batch-based risk processing analyzes historical data on scheduled cycles — nightly, hourly, or at defined intervals. It is adequate for retrospective reporting but structurally incapable of preventing fast-moving financial risks like fraud, flash crashes, or liquidity cascades. Real-time risk decisioning, by contrast, processes each financial event as it occurs — in milliseconds — using streaming data architectures and low-latency AI inference engines.

In practice, this means a financial AI agent can detect a pattern of micro-transactions consistent with layering (an AML technique) across dozens of accounts within seconds of the transactions occurring, rather than discovering the pattern 24 hours later during a batch run.
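A simplified version of that detection is sketched below: for each source account, keep a sliding time window of small outbound transfers and flag the account once they reach enough distinct counterparties. The window length, the amount ceiling for a "micro" transfer, and the counterparty count are hypothetical, and the sketch assumes events for each account arrive in timestamp order.

```python
from collections import defaultdict, deque


class FanOutDetector:
    """Flags a source account that sends many small transfers to distinct accounts quickly."""

    def __init__(self, window_seconds: float = 60.0, max_amount: float = 1_000.0,
                 min_counterparties: int = 10):
        self.window = window_seconds
        self.max_amount = max_amount
        self.min_counterparties = min_counterparties
        self.recent = defaultdict(deque)   # source -> deque of (timestamp, destination)

    def observe(self, ts: float, source: str, destination: str, amount: float) -> bool:
        if amount > self.max_amount:
            return False                   # only small transfers count toward the pattern
        q = self.recent[source]
        q.append((ts, destination))
        while q and ts - q[0][0] > self.window:
            q.popleft()                    # evict transfers that fell out of the window
        return len({dest for _, dest in q}) >= self.min_counterparties
```

The same ten transfers spread over several hours would never trip the detector, which is why a streaming window catches what a nightly batch job would only reconstruct after the fact.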

3. Which AI agent development model is most suitable for U.S. community banks and mid-sized financial institutions?

Community banks and mid-sized institutions typically lack the data science bench strength and engineering infrastructure to build and operate multi-agent orchestration systems. The hybrid reasoning model — pairing a reactive event-driven layer with a deliberative LLM-backed analysis layer — is generally the most practical starting point. It can be deployed incrementally, integrates with existing core banking systems, and provides the explainability regulators expect.

Many community banks are partnering with specialized AI development firms who bring prebuilt financial domain models and regulatory compliance scaffolding, significantly reducing time-to-production. The key is selecting use cases with clear ROI metrics (fraud savings, underwriting efficiency, KYC cost reduction) to build internal credibility before expanding scope.

4. What regulatory frameworks do U.S. financial institutions need to follow when deploying AI agents for risk decisioning?

Several regulatory frameworks apply to U.S. financial institutions deploying AI agents for risk and credit decisioning. The Federal Reserve's SR 11-7 guidance on model risk management (issued jointly with the OCC as OCC Bulletin 2011-12) establishes baseline expectations for model validation, documentation, and ongoing monitoring, and regulators have been clear that AI models are subject to these requirements.

The Treasury's February 2026 AI risk management framework introduces 230 control objectives specifically designed for financial AI systems. For consumer-facing credit decisioning, the Equal Credit Opportunity Act (ECOA) and Fair Housing Act require that AI models do not produce disparate impact on protected classes — which demands rigorous fairness testing at the model level. Institutions also need to address BSA/AML requirements for AI-driven suspicious activity reporting, and GLBA requirements for data privacy in AI training pipelines. Engaging with legal and compliance counsel early in the architecture phase is essential.

5. How should enterprises measure the ROI of financial AI agent development investments?

ROI measurement for financial AI agents is most credible when it tracks a combination of hard and soft metrics. Hard metrics include: fraud loss reduction (typically measured as basis points of transaction volume); underwriting efficiency gains (cycle time reduction and cost per decision); compliance cost reduction (hours saved in manual KYC review, false positive rate improvement); and operational cost savings (headcount efficiency in back-office functions). Soft metrics include: speed-to-decision improvements, customer experience impacts (faster loan approvals, fewer service interruptions due to fraud flags), and risk quality improvements (credit loss rate changes attributable to model improvements).

A structured approach is to define baseline metrics before deployment, run parallel operations (AI agent alongside the existing process) during the transition period, and measure delta performance over a 90-day post-deployment window. Enterprises that require upfront financial validation and defined ROI timelines tend to achieve more disciplined outcomes.
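The delta measurement from a parallel run reduces to simple arithmetic once both processes are measured over the same window. The metric set below is a hypothetical subset of the hard metrics listed above, with fraud losses expressed in basis points of transaction volume.

```python
from dataclasses import dataclass


@dataclass
class PeriodMetrics:
    fraud_losses: float
    transaction_volume: float
    alerts: int
    false_positives: int
    review_hours: float


def loss_bps(m: PeriodMetrics) -> float:
    # Fraud losses in basis points (1 bp = 0.01%) of transaction volume.
    return 10_000 * m.fraud_losses / m.transaction_volume


def compare(baseline: PeriodMetrics, agent: PeriodMetrics) -> dict[str, float]:
    """Agent-minus-baseline deltas measured over the same parallel-run window."""
    return {
        "fraud_loss_bps_delta": loss_bps(agent) - loss_bps(baseline),
        "false_positive_rate_delta": (agent.false_positives / agent.alerts
                                      - baseline.false_positives / baseline.alerts),
        "review_hours_saved": baseline.review_hours - agent.review_hours,
    }
```

Negative loss and false-positive deltas indicate improvement; measuring both processes on the same transactions removes seasonal effects that a before-and-after comparison would pick up.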

6. What are the most common failure modes in enterprise financial AI agent deployments, and how can they be prevented?

The most common failure modes in enterprise financial AI agent deployments in the U.S. fall into several categories. Data quality failures occur when agents are trained or operating on incomplete, stale, or biased data — producing systematically wrong outputs that are difficult to detect until losses accumulate. Integration failures happen when agents cannot reliably read from or write to core banking systems due to legacy system constraints or inadequate API design.

Governance gaps emerge when agents are deployed without human review checkpoints for high-consequence decisions, creating regulatory exposure. Model drift is the gradual degradation of agent performance as the financial environment changes faster than the model is retrained. Scope creep occurs when agent mandates are expanded beyond their validated domains without corresponding validation and testing. Prevention requires: establishing a robust MLOps monitoring stack before deployment, defining explicit human escalation thresholds in the agent design, validating agents on out-of-distribution scenarios, and maintaining a model registry with documented performance baselines.

7. How is agentic AI reshaping the financial services workforce in the United States, and what roles are emerging?

The impact of agentic AI on the U.S. financial services workforce is significant but more nuanced than simple replacement narratives suggest. The roles being most immediately transformed are those involving repetitive, rules-based judgment: manual KYC review, transaction monitoring queue management, basic underwriting document processing, and routine regulatory reporting.

These roles are contracting. Simultaneously, new roles are emerging and growing rapidly: AI agent supervisors (professionals who monitor agent performance, tune behavior, and manage escalations), model risk managers with AI specialization, AI governance officers, and financial data engineers who build and maintain the real-time data pipelines that feed agent systems.

JPMorganChase's framing is instructive: as reported by Accenture, the firm has encouraged employees to "let AI eat your job" — with the understanding that people who master working alongside AI will take on higher-value, more complex responsibilities. Institutions that invest in upskilling alongside deployment will retain talent and outperform those that treat workforce transition as an afterthought.

Conclusion

Enterprise financial AI agent development is no longer a speculative investment — it is a competitive and regulatory imperative for U.S. financial institutions in 2026. The four core development models (reactive, deliberative, hybrid reasoning, and multi-agent orchestration) each serve distinct risk and decision intelligence use cases, and the most sophisticated enterprises are combining them into layered architectures that balance speed, explainability, and regulatory robustness.

The institutions that will lead are not necessarily those deploying agents fastest, but those embedding governance, compliance, and human oversight into agent design from the outset — treating the entire enterprise as an agent-native operating environment rather than retrofitting automation onto legacy workflows.

For organizations exploring where to begin or how to accelerate an existing program, partnering with a specialized Financial AI Agent Development firm with proven financial domain expertise and U.S. regulatory knowledge is the single highest-leverage decision available.
