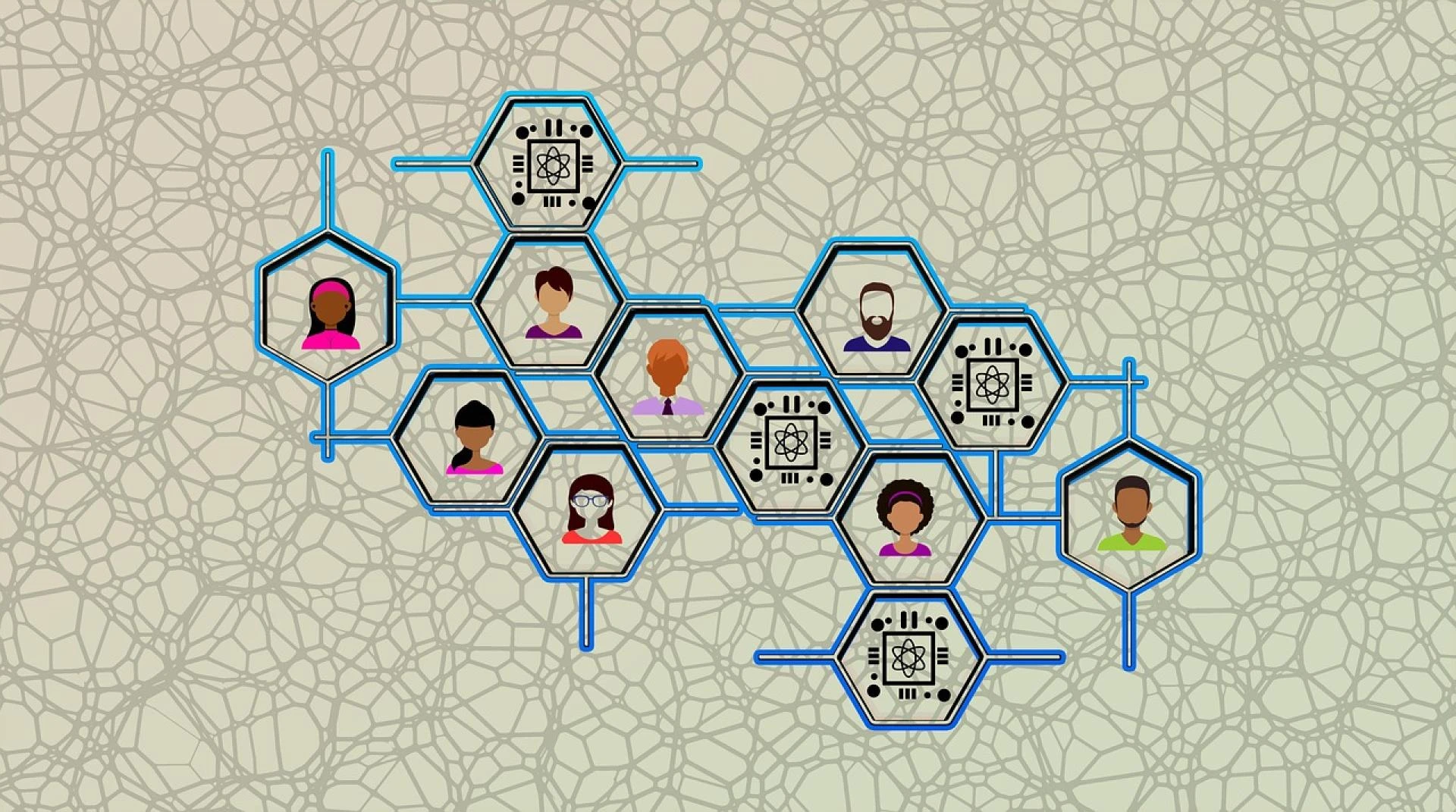

Artificial intelligence (AI) is improving efficiency, accuracy and decision-making in industries worldwide. However, as AI systems grow more complex, it becomes harder to understand how they reach their decisions. Explainable AI (XAI) has emerged as an essential branch of AI focused on making machine learning models transparent and interpretable to the people who use them and are affected by them. This blog looks at XAI techniques, their applications in data science, and how a data science course in Chennai can help you learn them.

Understanding Explainable AI (XAI)

Explainable AI (XAI) refers to methods that help people understand how AI models produce their outputs. Complex machine learning models, such as deep neural networks, often function like "black boxes": users find it hard to see how they reach a particular outcome. XAI bridges this gap by showing which inputs and reasoning drove a given decision.

Sectors such as healthcare, finance and autonomous systems need XAI because they must maintain trust and accountability. In medical diagnostics, doctors need to understand the basis for an AI system's treatment recommendations. In finance, investors need transparency about decisions driven by AI systems.

Key Techniques in Explainable AI

Several techniques have been developed to make AI models more interpretable. SHAP (SHapley Additive exPlanations) is a popular game-theoretic approach that uses Shapley values to measure how much each feature contributes to a prediction. LIME (Local Interpretable Model-Agnostic Explanations) explains an individual prediction by approximating the black-box model around that prediction with a simpler, interpretable model. Feature importance analysis reveals which features a model's predictions depend on most. Counterfactual explanations describe how the input would have to change for the model to reach a different decision. Model-agnostic methods treat the model as a black box, so they can be applied to a wide range of model types. Together, these methods let data scientists examine model behaviour, detect bias and build fairer AI-driven solutions that are easier to interpret.
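To make the Shapley value idea behind SHAP more concrete, here is a minimal sketch in plain Python that computes exact Shapley values for a single prediction by enumerating every coalition of features. It is an illustration only, not the SHAP library's implementation: the `toy_model` scoring function, its three features and the all-zero baseline are assumptions made up for this example.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one prediction, by enumerating every coalition.

    Features outside a coalition are replaced by their baseline value.
    """
    n = len(instance)
    features = range(n)

    def value(coalition):
        # Model output when only the coalition's features take their real values.
        x = [instance[i] if i in coalition else baseline[i] for i in features]
        return predict(x)

    phi = [0.0] * n
    for i in features:
        others = [j for j in features if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size: |S|! (n - |S| - 1)! / n!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i when added to coalition s.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical scoring model (an assumption): linear terms plus one interaction.
def toy_model(x):
    income, debt, years_employed = x
    return 2.0 * income - 3.0 * debt + 0.5 * income * years_employed


instance = [4.0, 1.0, 2.0]  # the applicant being explained
baseline = [0.0, 0.0, 0.0]  # reference point the prediction is compared against

phi = shapley_values(toy_model, instance, baseline)
print(phi)
```

For this toy model the contributions come to roughly 10 for income, -3 for debt and 2 for years employed. They add up to 9, the difference between the model's output for the applicant and for the baseline, and the interaction term is split evenly between the two features involved.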

The Role of XAI in Data Science

The modern data science industry needs XAI because it supports the ethical use of AI and regulatory compliance. As AI becomes more widely used in business operations, interpretability has become an essential requirement rather than an optional extra. Data scientists need XAI techniques to build trust and transparency into the applications they deliver.

Professionals can learn XAI techniques through data science courses in Chennai. These courses cover explainable AI frameworks and give students hands-on experience with model interpretability.

Regulatory and Ethical Considerations

Governments and regulators around the world are paying close attention to AI explainability. The European Union's GDPR, for example, gives people rights over solely automated decisions that significantly affect them, including access to meaningful information about the logic involved.

Organisations now invest in XAI to meet both legal obligations and ethical guidelines. Ethical AI principles call for models that do not discriminate, for example in hiring, lending and law enforcement.
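For a lending decision, a counterfactual explanation is one way to give an affected person an explanation they can act on. The sketch below searches for the smallest change to a single feature that flips a decision. Everything in it is an assumption for illustration: `approve_loan` is a made-up scoring rule standing in for a trained model, and practical counterfactual methods usually search over several features at once under plausibility constraints.

```python
def approve_loan(applicant):
    """Hypothetical scoring rule standing in for a trained model (an assumption)."""
    score = 0.5 * applicant["income"] - 0.8 * applicant["debt"]
    return score >= 10.0


def find_counterfactual(model, applicant, feature, step, max_steps=1000):
    """Change one feature in increments of `step` until the decision flips.

    Returns the modified applicant, or None if no flip occurs within max_steps.
    """
    original_decision = model(applicant)
    candidate = dict(applicant)  # copy, so the original applicant is untouched
    for _ in range(max_steps):
        candidate[feature] += step
        if model(candidate) != original_decision:
            return candidate
    return None


applicant = {"income": 30, "debt": 10}
print(approve_loan(applicant))  # False: this applicant is rejected

by_income = find_counterfactual(approve_loan, applicant, "income", step=1)
by_debt = find_counterfactual(approve_loan, applicant, "debt", step=-1)
print(by_income)  # {'income': 36, 'debt': 10}
print(by_debt)    # {'income': 30, 'debt': 6}
```

Read as an explanation, the result says: "the loan would have been approved with an income of 36 instead of 30, or with debt of 6 instead of 10". The same kind of search can also be used as a fairness probe, by checking whether changing only a protected attribute would flip the decision.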

Challenges in Explainable AI

Despite these advances, XAI faces several challenges. Many XAI methods are computationally expensive, which makes them hard to use in real-time applications. The field has no agreed standard framework for explainability, so different industries take inconsistent approaches. And some explanations remain hard for non-technical stakeholders to interpret correctly.

Researchers and data scientists continue to work on XAI methods that are more efficient and easier to use.
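As a rough illustration of the processing cost, take Shapley values as an example: computing them exactly by exhaustive enumeration means evaluating the model on every subset of features, and the number of subsets doubles with each feature added. The helper name below is hypothetical, and practical tools typically rely on sampling or model-specific shortcuts rather than full enumeration.

```python
def coalition_count(n_features):
    """Number of feature subsets visited by an exhaustive Shapley enumeration."""
    return 2 ** n_features


for n in (10, 20, 30):
    print(n, coalition_count(n))
# 10 1024
# 20 1048576
# 30 1073741824
```

At 30 features, a single explanation would already require on the order of a billion model evaluations, which is why exact methods are hard to fit into real-time systems.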

The Future of Explainable AI

The outlook for XAI is positive. Researchers continue to look for ways to make AI models easier to understand without sacrificing accuracy. Companies are developing AI governance frameworks to bring transparency and accountability to their operations. In deep learning explainability, researchers are creating new tools for interpreting complex neural networks. User-friendly XAI tools built on no-code and low-code platforms are making AI-assisted decisions understandable to professionals with only basic IT skills. XAI is also being built into more teaching programmes, preparing future data scientists to develop trustworthy AI solutions.

Learning Explainable AI through a Data Science Course in Chennai

As XAI matures, it is likely to become a core skill for data scientists, which makes it worth mastering now. A data science course in Chennai gives students practical experience with model interpretability and prepares them for industry demands.

A data science certification in Chennai demonstrates skills in both explainable AI and core data science, which can improve career opportunities. Many courses build their core content around implementing XAI techniques, so students learn to develop transparent and responsible AI models.

Conclusion

Explainable AI is reshaping data science by giving professionals the means to build transparent, ethical models and trustworthy AI systems. As more organisations adopt AI-driven solutions, demand for professionals with XAI expertise continues to grow. Whatever your current level of data science skill, enrolling in an XAI-focused data science course in Chennai can benefit your career.

A data science certification in Chennai can boost your credibility and keep you at the forefront of this developing field. As AI technology advances, explainability will remain essential to designing AI systems that are ethical and transparent.
