A developer's perspective on how trust is actually built behind online donations

Introduction: What You See vs. What's Actually Happening

Click, fill in your card number, confirm. Done. Most people donate to a nonprofit online and never think twice about it. The whole flow is designed to get out of your way, and when it works well, it genuinely does. You just assume the organization you picked is real, registered, and legitimate — and for the most part, that assumption holds up.

But it holds up because someone built systems to make it hold up. None of that trust is automatic.

I got a real sense of how much work sits underneath a simple donation button when I started digging into how platforms handle nonprofit onboarding. From the outside it looks trivial. From the inside, it's a surprisingly involved process with data quality problems, regulatory requirements, and edge cases that keep surfacing no matter how clean you think your system is.

When Manual Review Stops Being Enough

Small platforms can check nonprofits by hand. Someone looks at an application, verifies a few things, approves or rejects it. That works when you're dealing with ten or twenty organizations. It stops working when you're dealing with hundreds, and it completely falls apart at thousands.

The pressure you feel at that point goes in two bad directions at once. If you slow down approvals to keep reviews thorough, legitimate organizations get frustrated waiting. If you speed up reviews to clear the queue, you get sloppy, and sloppy is how fraudulent or ineligible organizations get through. Neither outcome is something you want to explain to anyone.

Automation is the obvious answer, but automation requires you to define the rules. And defining what makes a nonprofit "legitimate" — precisely enough that a system can evaluate it without human judgment — is harder than it sounds. The data is messy. Eligibility isn't always clear-cut. You end up building something more nuanced than a simple pass/fail check.

Starting with the EIN

Every verification process I've looked at starts with the Employer Identification Number. It makes sense — the EIN is how nonprofits show up in tax records and federal datasets, so it's the most reliable identifier you have to work with.

When an organization registers on a platform, you collect the EIN along with the basic details: legal name, address, that kind of thing. Then you use the EIN to go look them up. That part is straightforward enough.

What catches people off guard is what comes next. Finding a valid EIN in a dataset doesn't mean the organization is currently eligible to receive donations. Tax-exempt status can be revoked. An organization can exist on paper, appear in records, and still be completely ineligible. So the EIN lookup is really just the starting point — a way into a broader set of checks rather than a check itself.

The Data Problem Nobody Warns You About

Here's something that surprised me when I first got into this: the authoritative nonprofit data from federal sources isn't served through a nice clean API. It comes as bulk file downloads. Big ones, with field encodings that aren't immediately obvious, updated on a schedule that doesn't match the pace you'd want for a real-time verification system.

To actually use this data in an application, you have to build a pipeline — download the files, parse them, normalize the fields, store them somewhere queryable, and then keep the whole thing current as updates come out. That's not a one-time effort. It's ongoing maintenance. The format can change. The update schedule can shift. You're committed to the whole thing for as long as the system runs.

Most teams eventually build an abstraction layer on top of all of this — something that takes the raw, complicated source data and presents it as a simple interface to the rest of the application. It doesn't solve the underlying complexity, but it at least keeps it contained in one place rather than scattered throughout the codebase.

What Actually Gets Checked

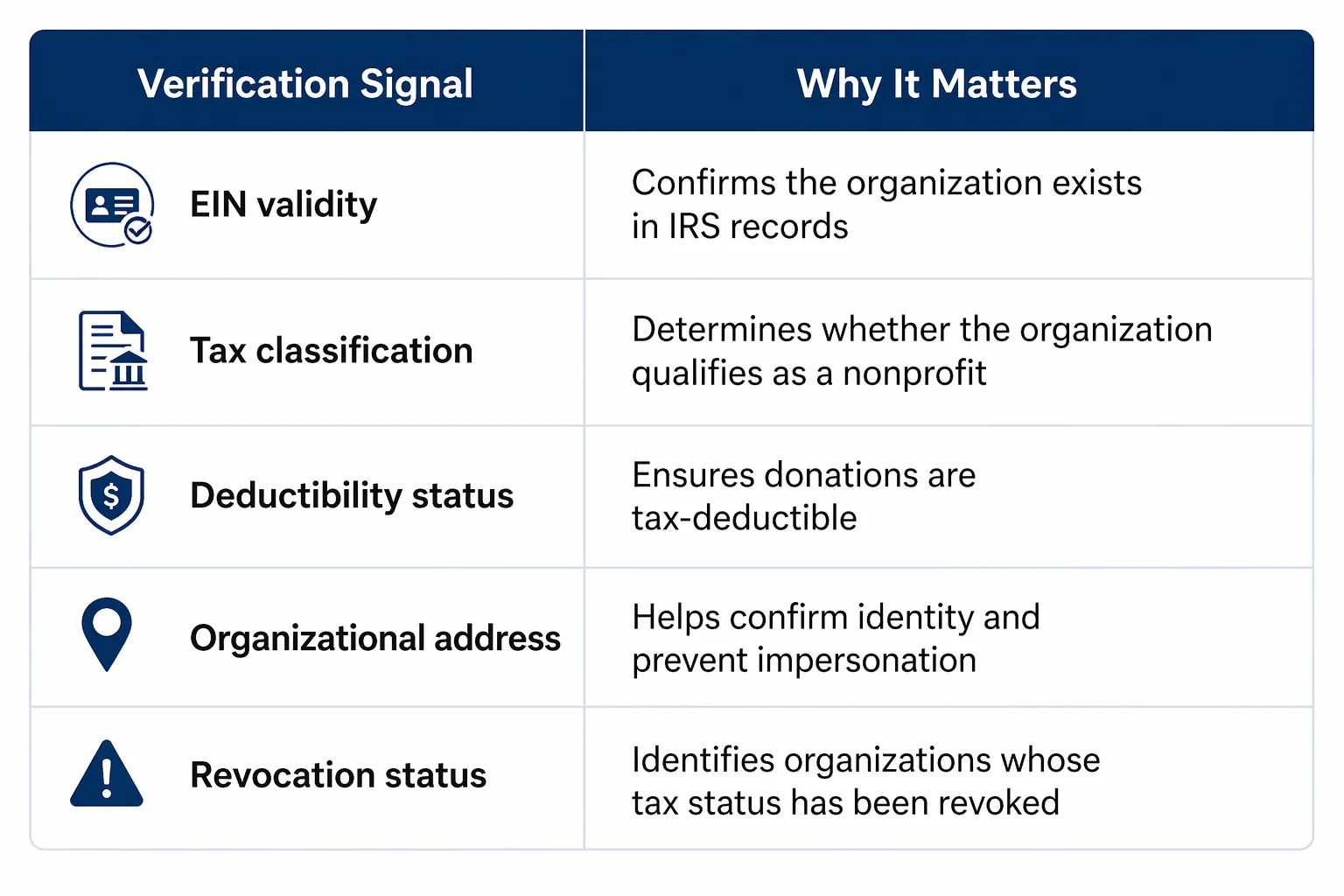

Verification isn't one question, it's several, each one catching things the others miss.

You need all of them because each one has blind spots. EIN validity doesn't tell you about revoked status. Deductibility information doesn't catch address mismatches. The picture you actually trust is the one that comes from reading all the signals together.

What a Real Response Looks Like

Here's a response from an actual nonprofit lookup against a live API:

{

"status": 200,

"message": "OK",

"data": {

"legal_name": "ABORJAILY BANNISH FOUNDATION",

"ein": "996589560",

"irs_status": "501(c)(3) - Public Charity",

"foundation_type": "Private non-operating foundation (section 509(a))",

"deductibility": {

"cash": "60% of AGI",

"non_cash": "30% of AGI",

"standard": "50% of AGI"

},

"address": {

"street": "50 LOWELL AVE",

"city": "WESTFIELD",

"state": "MA",

"zip": "01085-2643"

},

"last_updated": "2025-05-02T01:01:39Z"

}

}

A few things worth pointing out here. The deductibility breakdown isn't a simple yes or no — it gives you cash, non-cash, and standard limits separately, because they're different and donors actually need to know the distinction. The last_updated timestamp tells you how stale this data might be, which matters because a record that hasn't been touched in months might not reflect a recent status change. The address gives you something to cross-reference if the name or EIN doesn't quite match what the organization submitted during registration.

Every field is doing something. You don't just read the IRS status and move on.

Wiring It Into Onboarding

The verification call typically happens the moment a nonprofit submits their registration — not as a separate step, not after a delay, but right there in the onboarding flow. Here's the basic shape of that Non Profit API request:

import requests

EIN = "996589560"

API_URL = f"https://entities.pactman.org/api/entities/nonprofitcheck/v1/us/ein/{EIN}"

response = requests.get(API_URL)

if response.status_code == 200:

raw = response.json()

data = raw["data"]

print("Organization:", data["legal_name"])

print("IRS Status:", data["irs_status"])

else:

print("Verification failed")

In production this is one node in a larger pipeline that also handles consistency validation, risk scoring, and routing decisions — figuring out whether a case can be auto-approved, auto-rejected, or needs a human to look at it. But the core of it is just this: you get the EIN, you call the API, you read the response, you make a call.

The Compliance Layer

Verification tells you whether an organization is legitimate. There's a separate question that runs alongside it: are you legally allowed to process funds on their behalf?

Platforms have to screen against lists of sanctioned and restricted entities. The realistic probability of a legitimate nonprofit appearing on one of those lists is very low, but the check still has to happen every time. It's a regulatory requirement, not a heuristic — you can't skip it because you think the organization looks fine. Running these checks in parallel with verification keeps the onboarding pipeline moving without skipping anything legally required.

Problems That Don't Go Away

I want to be honest about the stuff that remains annoying even after you've built a solid system. Data freshness is the big one. Because the underlying datasets update on a schedule rather than in real time, there's always some lag between what's true about an organization and what your system knows. Most of the time the gap is small and harmless. Occasionally it causes a legitimate org to fail verification because their status update hasn't propagated yet.

Name matching is another persistent headache. The same organization might appear as "St. Matthew's Community Foundation," "St Matthews Community Foundation," and "Saint Matthew's Comm. Foundation" across three different data sources. None of those are wrong, exactly. You need fuzzy matching logic, and you're going to tune it forever.

And perhaps the trickiest issue: organizations that were legitimate when they registered can lose their eligibility later. Status gets revoked. Foundations restructure. Catching this requires going back and re-verifying organizations that already passed — not just new applicants. It's unglamorous work and easy to deprioritize, but ignoring it leaves a genuine gap in your coverage.

Why You Can't Fully Automate This

The best-built platforms I've seen all use the same basic approach: automate the straightforward cases, keep humans in the loop for everything else. That's not a design failure — it's the correct design for a problem with this much inherent ambiguity.

Rigid automation makes confident decisions on cases that deserve scrutiny. Pure manual review doesn't scale. The combination — automation handling volume, humans handling edge cases — is what actually works in practice. You build the automated layer to be as accurate as possible, and you build the escalation path to catch what it misses.

Conclusion: The Invisible Work

Nobody donates online and thinks about EIN lookups, deductibility classifications, or sanctions screening. They just click confirm and move on. That's the whole point — the experience should feel that simple.

What makes that possible is a lot of unsexy infrastructure running quietly in the background, catching problems the donor will never know almost happened. Building that infrastructure well isn't glamorous work. It doesn't show up in a feature announcement. But it's what the whole thing actually rests on, and when it breaks, you feel it everywhere.

The donation button is the easy part. Everything behind it is the job.

Sign in to leave a comment.