In the era of big data, data scientists are responsible for more than building predictive models. A successful data-driven project starts with strong data engineering, above all a dependable ETL (extract, transform, load) process. Even the best machine learning models are held back when the data pipelines feeding them are disorganized. This is where Apache Airflow comes in.

Today, Apache Airflow is often considered an essential skill for anyone taking a data science course in Chennai. It orchestrates the pipelines that turn raw data into insights businesses can use.

Why ETL is Important in Data Science

Data science depends heavily on ETL: extract, transform, and load. The process involves extracting data from several sources, such as databases, APIs, and flat files; transforming it for analysis by cleaning, enriching, normalizing, and aggregating; and then loading the processed data into a data warehouse or a data lake.
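As a rough illustration of the three stages, here is a minimal sketch that uses only the Python standard library. The sample CSV text, the `sales` table, and the function names are assumptions invented for this example, and an in-memory SQLite database stands in for a real warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw data standing in for a flat-file source.
RAW_CSV = """order_id,customer,amount
1, Alice ,120.50
2,bob,
3,Carol,80
"""


def extract(text):
    """Extract: read rows from a CSV source into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop rows with no amount and normalize the rest."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip incomplete records
        cleaned.append(
            (int(row["order_id"]), row["customer"].strip().title(), float(amount))
        )
    return cleaned


def load(records, conn):
    """Load: write the cleaned records into a warehouse table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (order_id INTEGER, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", records)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stand-in for a data warehouse
load(transform(extract(RAW_CSV)), conn)
total = conn.execute("SELECT SUM(amount) FROM sales").fetchone()[0]
print(total)
```

Keeping each stage in its own function makes it easy to test the stages separately and, later, to hand each one to an orchestrator as a separate task.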

A dependable ETL process lets data scientists focus on model building instead of data wrangling. Managing ETL by hand is error-prone and inefficient, which is why Apache Airflow and similar tools matter so much.

What is Apache Airflow?

Originally developed at Airbnb, Apache Airflow was later released as open source. It helps users schedule, organize, and monitor workflows. Tasks and their dependencies are defined in Python scripts, which gives data engineers and data scientists a great deal of flexibility.

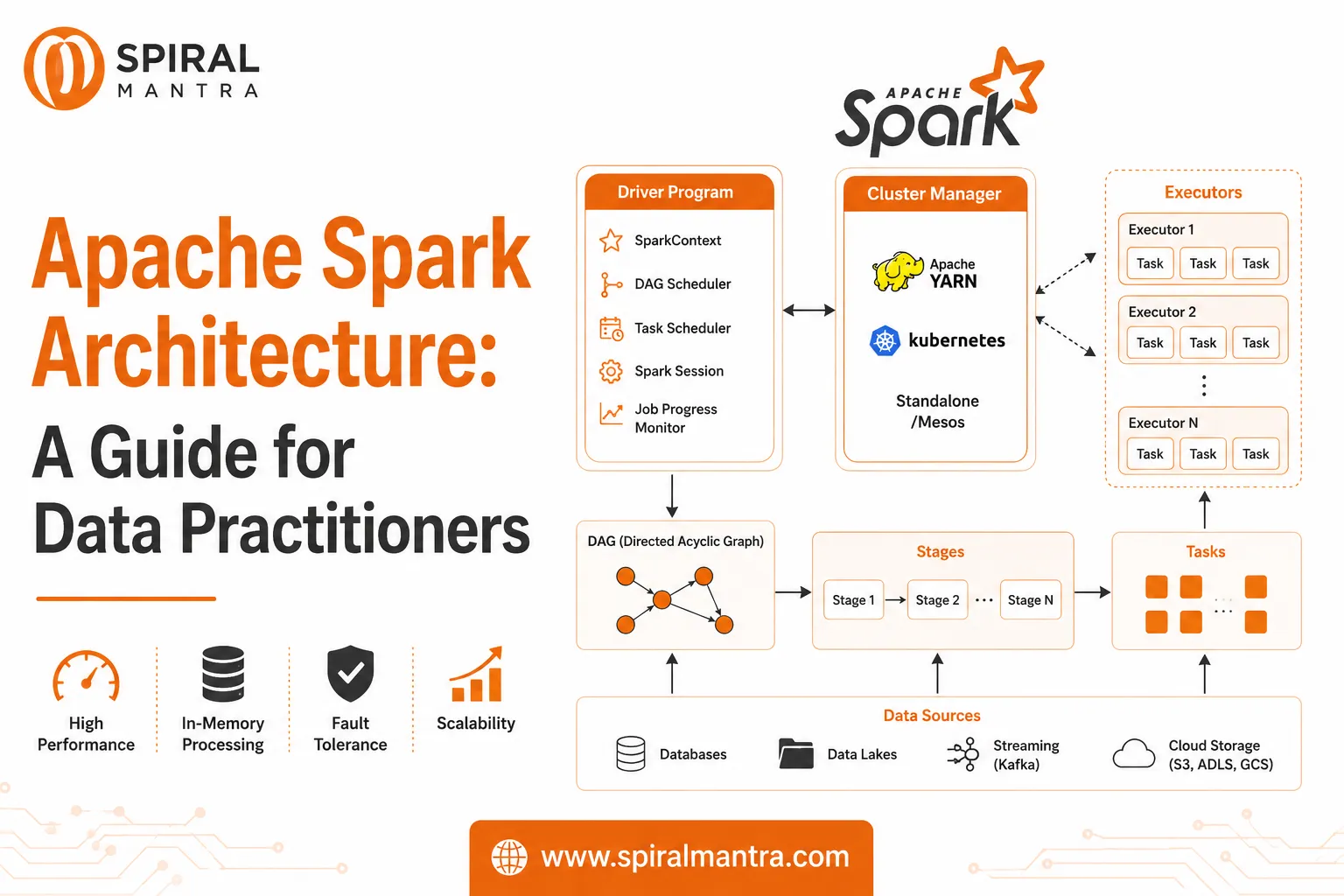

Through directed acyclic graphs (DAGs), Airflow users can organize and control complicated data pipelines. For example, a single DAG could extract data from a MySQL database, clean it with Pandas, load it into a PostgreSQL data warehouse, and finally trigger a machine learning training pipeline.

Every part of the process can be set up and tracked by using Airflow.
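To make the idea of a directed acyclic graph concrete, the sketch below models the example pipeline with the standard library's `graphlib` module (Python 3.9 or later) rather than with Airflow itself. The task names are hypothetical labels for the four steps described above, and this is only an illustration of dependency ordering, not Airflow's scheduler.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks that must finish before it starts.
pipeline = {
    "extract_mysql": set(),
    "clean_with_pandas": {"extract_mysql"},
    "load_postgres": {"clean_with_pandas"},
    "train_model": {"load_postgres"},
}

# static_order() yields tasks so that every dependency comes first.
run_order = list(TopologicalSorter(pipeline).static_order())
print(run_order)
```

Because the graph must be acyclic, a circular dependency (for example, making `extract_mysql` depend on `train_model`) would cause `graphlib` to raise a `CycleError` instead of producing an order.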

One key feature of Airflow is that it makes repetitive tasks easy to automate. Data scientists often have to clean data and load datasets by hand, which is tedious. Airflow takes over this work by running it at scheduled intervals, whether hourly, daily, or weekly, so data scientists can concentrate on the analysis itself.
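Airflow expresses these cadences with cron expressions or presets such as `@hourly`, `@daily`, and `@weekly`. The sketch below does not use Airflow; it is a plain-Python illustration of what a fixed cadence means, and the `INTERVALS` table and `next_runs` helper are hypothetical names chosen for this example.

```python
from datetime import datetime, timedelta

# Plain-Python stand-ins for the hourly, daily, and weekly cadences.
INTERVALS = {
    "hourly": timedelta(hours=1),
    "daily": timedelta(days=1),
    "weekly": timedelta(weeks=1),
}


def next_runs(start, cadence, count):
    """Return the next `count` run times after `start` for a cadence."""
    step = INTERVALS[cadence]
    return [start + step * i for i in range(1, count + 1)]


runs = next_runs(datetime(2024, 1, 1), "daily", 3)
print(runs)
```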

Airflow is also good at managing dependencies between tasks. If your model training needs fresh sales data every day, Airflow ensures that training does not run until the data has been correctly extracted and cleaned. Enforcing this order keeps incomplete or uncleaned data out of downstream steps and keeps data quality high.
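The gating described above can be sketched in plain Python. This is a simplified model, not Airflow's scheduler: the task functions and the `run_pipeline` helper are hypothetical, and the state name `upstream_failed` is borrowed from the label Airflow gives to tasks that are blocked by a failed upstream task.

```python
def run_pipeline(tasks):
    """Run tasks in order; once one fails, block everything downstream."""
    states = {}
    upstream_ok = True
    for name, func in tasks:
        if not upstream_ok:
            states[name] = "upstream_failed"
            continue
        try:
            func()
            states[name] = "success"
        except Exception:
            states[name] = "failed"
            upstream_ok = False
    return states


def extract_sales():
    pass  # pretend the daily sales extract worked


def clean_sales():
    raise ValueError("unexpected null in amount column")


def train_model():
    pass  # must not run on uncleaned data


states = run_pipeline(
    [
        ("extract_sales", extract_sales),
        ("clean_sales", clean_sales),
        ("train_model", train_model),
    ]
)
print(states)
```

Here the cleaning step fails, so the training step never runs on bad data.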

Airflow also has built-in monitoring and alerting. Task failures can trigger notifications by email or Slack, so it is simple to track how a workflow is doing. This is crucial for machine learning systems running in production, where data accuracy matters a great deal.
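One common way to wire up alerts is a failure callback. The sketch below assumes Airflow's `on_failure_callback` hook, which is called with a context dictionary. The `build_failure_message` function and the stand-in context are hypothetical, and actually delivering the message by email or Slack is left out.

```python
from types import SimpleNamespace


def build_failure_message(context):
    """Format an alert from the context passed to a failure callback."""
    ti = context["task_instance"]
    error = context.get("exception")
    return f"Task {ti.dag_id}.{ti.task_id} failed: {error}"


# Stand-in context so the function can be tried without Airflow running.
fake_context = {
    "task_instance": SimpleNamespace(dag_id="daily_sales_etl", task_id="clean_sales"),
    "exception": ValueError("unexpected null in amount column"),
}
message = build_failure_message(fake_context)
print(message)
```

In a DAG, a callback built around a function like this would typically be supplied through `on_failure_callback`, for example in `default_args`, and would pass the message to a mail or Slack client.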

Airflow supports version control and reproducibility because the Python workflows can be stored in Git repositories. In turn, teams have an easier time collaborating and reproducing experiments.

If you are choosing a data science course in Chennai, look for one that includes hands-on work with workflow orchestration. That experience adds value to your resume and prepares you for the practical data problems you will meet on the job.

Setting Up an ETL Pipeline in Airflow for the First Time

Here is a quick overview of how to start using Apache Airflow.

First, install Apache Airflow with the pip package manager. For more complex deployments, you can run it with Docker or deploy it with Helm.

Next, define a Directed Acyclic Graph (DAG) by writing a Python script and placing it in the Airflow dags/ folder. The script describes the order of the ETL steps using Python functions and operators.
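A DAG file along these lines might look like the sketch below. It assumes Airflow 2.4 or a later 2.x release, where `DAG` accepts a `schedule` argument and `PythonOperator` lives in `airflow.operators.python`. The `simple_etl` name and the three functions are hypothetical, and passing data between tasks is omitted for brevity. The `try`/`except` around the imports is only there so the functions can be exercised on a machine without Airflow; a real DAG file would import Airflow directly.

```python
from datetime import datetime

try:
    from airflow import DAG
    from airflow.operators.python import PythonOperator
except ImportError:
    DAG = None  # Airflow not installed; only the plain functions are usable


def extract():
    """Pretend to pull rows from a source system."""
    return [{"customer": " alice ", "amount": "120.5"}]


def transform():
    """Clean the extracted rows (re-extracted here to keep the sketch simple)."""
    return [
        {"customer": r["customer"].strip().title(), "amount": float(r["amount"])}
        for r in extract()
    ]


def load():
    """Pretend to write the cleaned rows and report how many were written."""
    return len(transform())


if DAG is not None:
    with DAG(
        dag_id="simple_etl",  # hypothetical name
        start_date=datetime(2024, 1, 1),
        schedule="@daily",  # assumes Airflow 2.4+; older releases use schedule_interval
        catchup=False,
    ) as dag:
        extract_task = PythonOperator(task_id="extract", python_callable=extract)
        transform_task = PythonOperator(task_id="transform", python_callable=transform)
        load_task = PythonOperator(task_id="load", python_callable=load)

        # Run extract, then transform, then load.
        extract_task >> transform_task >> load_task
```

The `>>` operator is how the file declares that one task must finish before the next begins.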

Once your DAG is defined, run and monitor it from Airflow's web UI. The interface lets you trigger DAGs on demand, follow scheduled task runs, inspect task logs, and see dependencies clearly, all of which makes workflow management easier.

Real-World Use Cases

Organizations across many industries use Apache Airflow. E-commerce companies often use Airflow to segment customers based on recent activity data. Medical organizations may use it to build ETL pipelines that load patient records for use in diagnostics. In finance, Airflow can combine data from various APIs and refresh live dashboards.

Students working toward a data science certification in Chennai typically tackle problems like these in their final projects. Such projects give them practical knowledge that the industry values.

How to Effectively Use Apache Airflow

Task groups let you organize tasks by category, which makes DAGs easier to understand and manage. Writing each ETL step as its own Python function makes the steps easy to reuse and debug. Because Airflow is not built for heavy computation, it makes sense to hand such work off to Apache Spark or Dask.

Log thoroughly in every task so that errors are easy to detect and operations stay transparent. Also, take advantage of Airflow's connections and variables to manage credentials and configuration. This keeps pipelines both secure and flexible across environments.
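The sketch below shows both habits in a task-sized function, using only the standard library. The `clean_sales` function and the `ETL_MIN_AMOUNT` environment variable are hypothetical; the environment variable is a stand-in for a setting that would normally be kept in an Airflow variable or connection rather than hard-coded.

```python
import logging
import os

logger = logging.getLogger("etl.clean_sales")


def clean_sales(rows):
    """Drop rows without a usable amount, logging what happened along the way."""
    # Read a setting from the environment instead of hard-coding it.
    min_amount = float(os.environ.get("ETL_MIN_AMOUNT", "0"))
    logger.info("Received %d rows (min_amount=%s)", len(rows), min_amount)
    kept = [
        r for r in rows
        if r.get("amount") is not None and r["amount"] >= min_amount
    ]
    dropped = len(rows) - len(kept)
    if dropped:
        logger.warning("Dropped %d rows with missing or too-small amounts", dropped)
    return kept


logging.basicConfig(level=logging.INFO)
result = clean_sales([{"amount": 10.0}, {"amount": None}, {"amount": 5.0}])
print(len(result))
```

Logging the row counts before and after each step gives a quick trail to follow in the task logs when something goes wrong.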

Why Learning Airflow is a Must for Aspiring Data Scientists

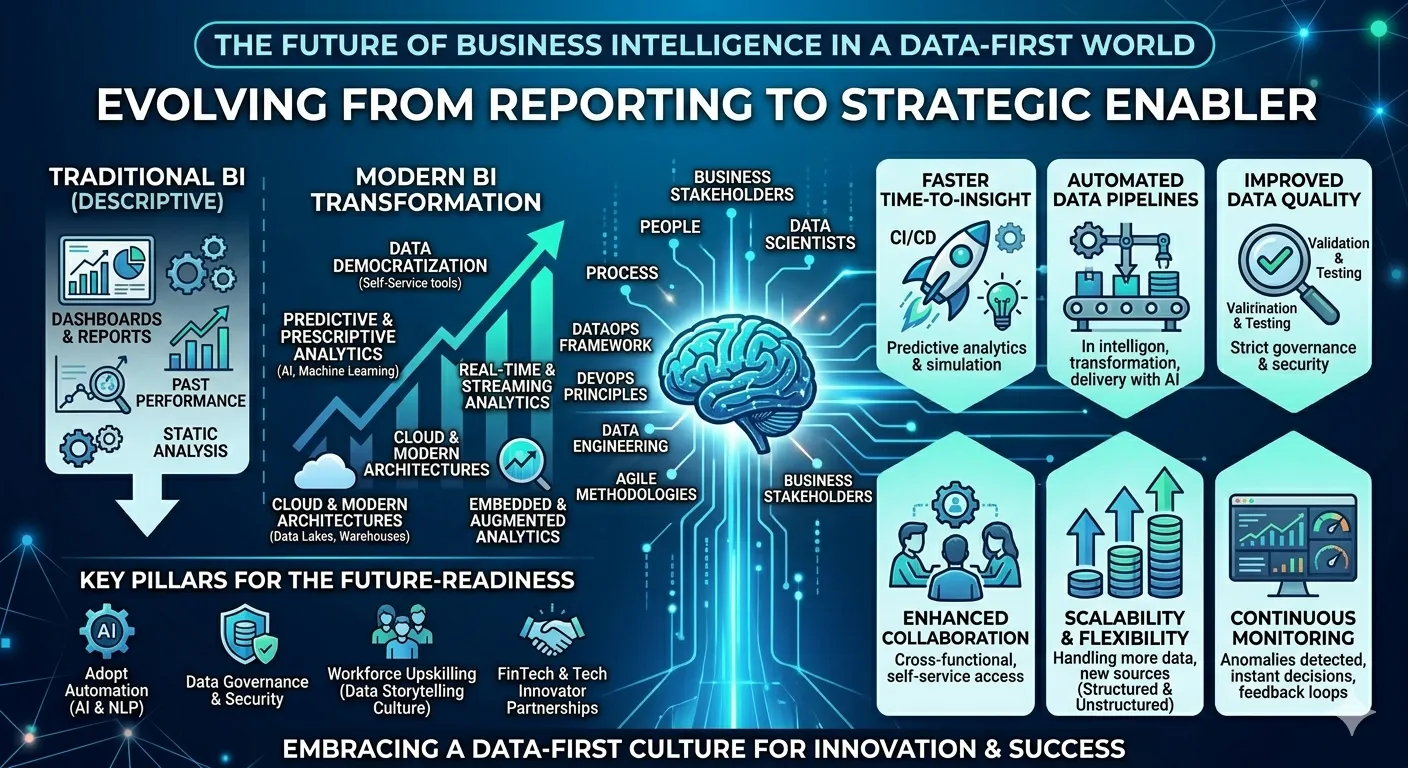

Learning machine learning alone is no longer enough in today's data field. Employers increasingly expect data scientists to understand every step of the data pipeline, from ingesting data to feeding it into models. Apache Airflow lets you build reliable, efficient, automated workflows.

The tech field is growing in Chennai, making it easier to obtain these skills. Many institutes that provide data science courses in Chennai now teach Apache Airflow and data engineering in their programs. As a result, students are equipped with knowledge that is both useful in real-world situations and up-to-date.

Additionally, pursuing a data science certification in Chennai generally includes working on projects and studying real-world scenarios. Learners can practice what they have learned using Apache Airflow in real-life settings, improving their comprehension and job skills.

Conclusion

If data scientists want to make their data pipelines production-ready, Apache Airflow gives them the tools to do so. It makes routine tasks easier and more reliable, leaving more time for the work that matters most: exploring data and building useful models.

Whether you are a beginner or an expert, a data science course in Chennai that covers data engineering topics will help you stand out in the job market. A data science certification from Chennai will also help you adapt to current data science careers.
