The most capable AI systems now present a seamless interface to the user. 88% of organizations report regular AI use in at least one function, and experiments with AI agents are increasing. But, behind every reliable output lies a complex, engineered control layer that governs reasoning and ensures compliance. The growing problems of inconsistency, subtle drift, and regulatory risk in production all stem from the neglect of this foundational discipline of prompt engineering.

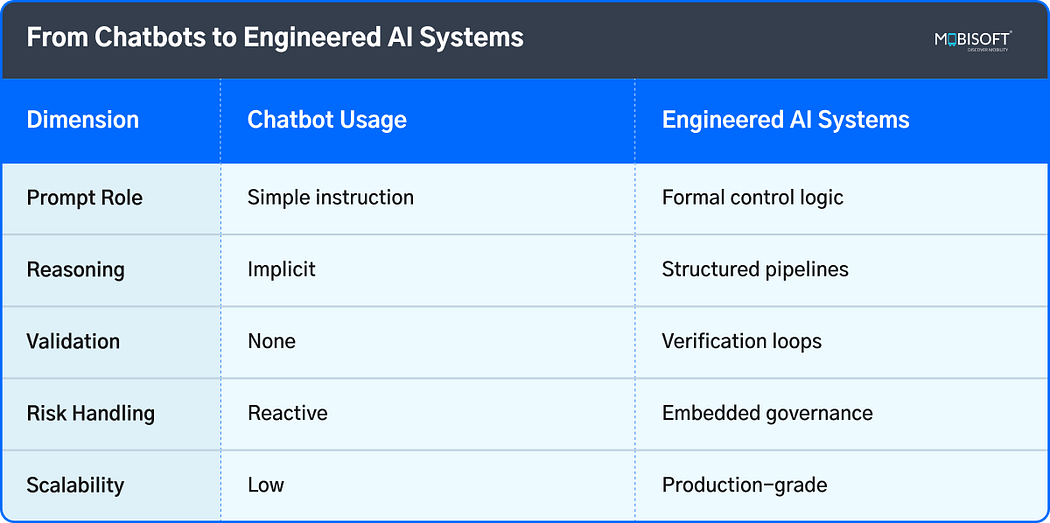

What is often referred to as prompt engineering is not a writing skill. It has evolved into the essential structural framework for deterministic behavior in non-deterministic systems. It is how logic, safety, and workflow integrity are codified within AI systems built on Large Language Models (LLMs). This architectural approach represents the core differentiator for enterprises that succeed with AI at scale. Looking toward 2026, this layer is no longer optional. It is the critical substrate for any system that must be trustworthy, auditable, and robust under real operational pressure.

To explore how governed systems are built in production, see our approach to enterprise AI development services.

Why Casual Prompting Already Fails in Production?

The conversational prompting that enables engaging demo experiences is fundamentally mismatched for production environments. Its failure is rooted in a core technical conflict: the probabilistic nature of model outputs clashes with the deterministic requirements of business workflows. Under-scaled traffic, this variance manifests not as random errors but as systematic, compounding failures.

Output inconsistency introduces logical drift. A minor ambiguity in an instruction can redirect an entire reasoning chain, leading to cascading errors in multi-step processes. These are often silent failures. The model produces a plausible but incorrect or ungrounded output that bypasses superficial monitoring.

This gap represents a direct operational risk. An unverified financial analysis or a non-compliant document clause is not a conversational mishap. They are substantive failures where the cost is measured in broken processes, regulatory exposure, and eroded institutional trust. Casual prompting lacks the structural rigor to prevent these outcomes, treating a system-level engineering challenge as a simple interface problem.

Organizations reduce these risks by aligning architecture with AI strategy consulting frameworks.

What Does Prompt Engineering Mean in 2026?

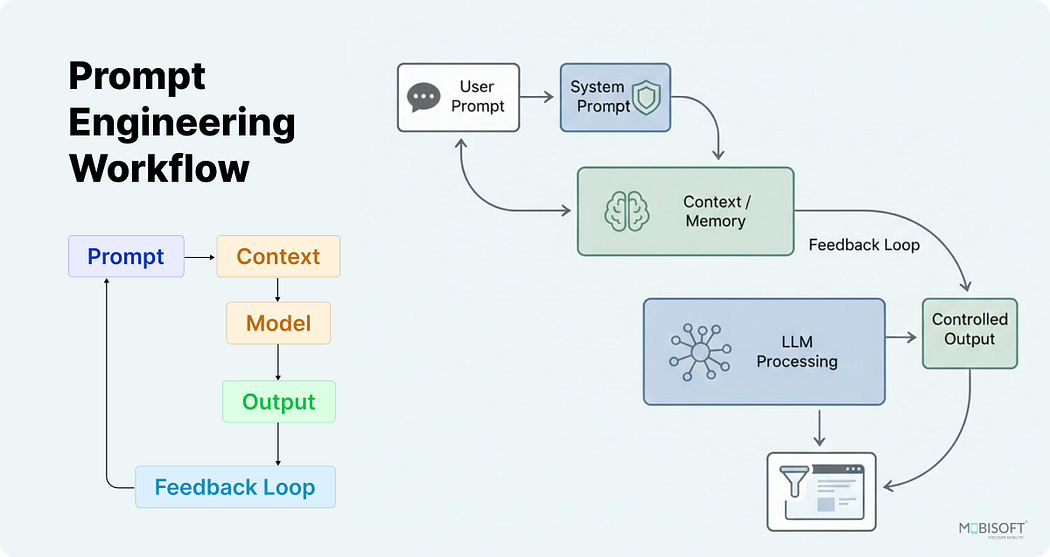

Prompt engineer role demand grew by 135.8% in 2025 as companies invest in structured AI expertise. By 2026, it will have fully transitioned from a linguistic skill to a core discipline of systems engineering. It is the practice of designing the formal control logic that governs AI behavior, reliability, and compliance. This evolution moves beyond tuning syntax to architecting layered prompt stacks, embedding constraint-based guardrails, and defining orchestration protocols for multi-agent workflows using advanced prompt engineering.

This discipline constructs the deterministic boundaries within a non-deterministic system. It is comparable to writing application logic, but for cognitive processes. The prompt becomes a structured specification for reasoning, verification, and tool use. This change establishes prompt engineering as a fundamental software layer, responsible for translating business rules and risk parameters into enforceable machine operations. It is the indispensable bridge between high-level intent and deterministic, auditable outcomes in enterprise environments.

Prompts as Control Systems, Not Instructions

In modern Prompt Engineering, an advanced interpretation of a prompt is not a command buta configuration file for a reasoning engine. It defines operational parameters, search spaces, and failure modes. This control-system mindset prioritizes constraints over open-ended instruction, establishing the guardrails within which generative flexibility can safely operate.

Constraint-First Design

This principle involves deliberately limiting the model’s probabilistic search space. By defining strict output formats, prohibited reasoning paths, and mandatory validation steps upfront, the system’s potential for variance is directly reduced. Reliability is engineered through exclusion, ensuring the model operates within a bounded, verifiable domain of correct behaviors.

Role Anchoring & Context

Here, the prompt statically defines the agent’s operational persona and factual grounding. It locks in specific knowledge sources, tool permissions, and interaction protocols before any task begins. This prevents context drift or role confusion during extended workflows, enforcing a consistent operational frame that is resistant to user prompt injections or ambiguous queries.

Guardrail Logic Integration

Modern prompts encode active governance logic. They specify conditional rules for self-verification, automatic fallback procedures, and predefined halting conditions when confidence metrics are low. This active regulatory layer monitors and governs its own execution process in real time, making failure a managed state rather than a surprise outcome.

At scale, orchestration depends on multi-LLM AI platforms to manage reasoning across models efficiently.

How Reasoning Is Now Engineered

Modern AI systems no longer rely on monolithic, opaque reasoning. Instead, they implement engineered cognitive architectures where the thought process itself is systematically designed, segmented, and validated. This transformation reflects how prompt engineering works by treating reasoning as a modular software component, making it auditable, reliable, and repeatable across countless production instances.

Structured Cognition Layers

Reasoning is decomposed into discrete, functional stages. A single query might trigger sequential layers for intent classification, information retrieval, analytical synthesis, and response formulation. Each layer has a defined input, process, and output specification, isolating failure points and allowing for targeted optimization and monitoring.

Multi-Step Reasoning Pipelines

Complex tasks are executed through explicit, sequential pipelines. These are predefined workflows that chain together specialized reasoning steps, often with conditional branching. This moves beyond a single “thought” to a documented decision tree, ensuring that multi-faceted problems like financial analysis or contract review follow a consistent, logical pathway every time.

Self-Verification Loops

Critical outputs are not taken at face value. Engineered systems incorporate automated verification steps, where a subsequent reasoning module critiques, cross-checks, or grounds the initial conclusion. This creates internal quality control, significantly reducing hallucination and error by forcing the system to contest and corroborate its own work before finalizing an answer.

This evolution reflects best practices in context engineering for LLMs for enterprise-grade agents.

The Rise of Agent-Based AI Workflows

The architecture is moving from monolithic chatbots to specialized agent ensembles, representing the next maturity phase for production AI. Linear, conversational interfaces collapse under the weight of complex real-world tasks. Modern systems decompose these tasks into parallel, coordinated workflows executed by purpose-built agents, each with defined roles, tools, and governance rules supported by structured prompt engineering.

Role-Specialized Agents

Workflows are now built using discrete agents functioning as specialized components. One agent may handle semantic retrieval, another structured analysis, and a third compliance verification. This specialization increases accuracy and allows for independent scaling and optimization of each cognitive function within the pipeline.

Tool Execution & Abstraction

Agents interact with external systems through a formalized tool execution layer. This layer abstracts APIs, databases, and computational resources into defined functions with strict input-output schemas. It transforms an agent’s intent into precise, auditable operations, grounding its behavior in real-world data and actions.

Multi-Model Orchestration

No single model is optimal for every subtask. Engineered workflows dynamically route requests to specialized models. It can be a cost-efficient model for summarization, a highly logical model for code generation, or a large-context model for synthesis. An orchestration controller manages this routing based on latency, cost, and required capability, treating the model layer as a heterogeneous compute resource.

Many of these controlled workflows power modern enterprise chatbot solutions across business operations.

Why Enterprises Now Engineer AI Governance Layers?

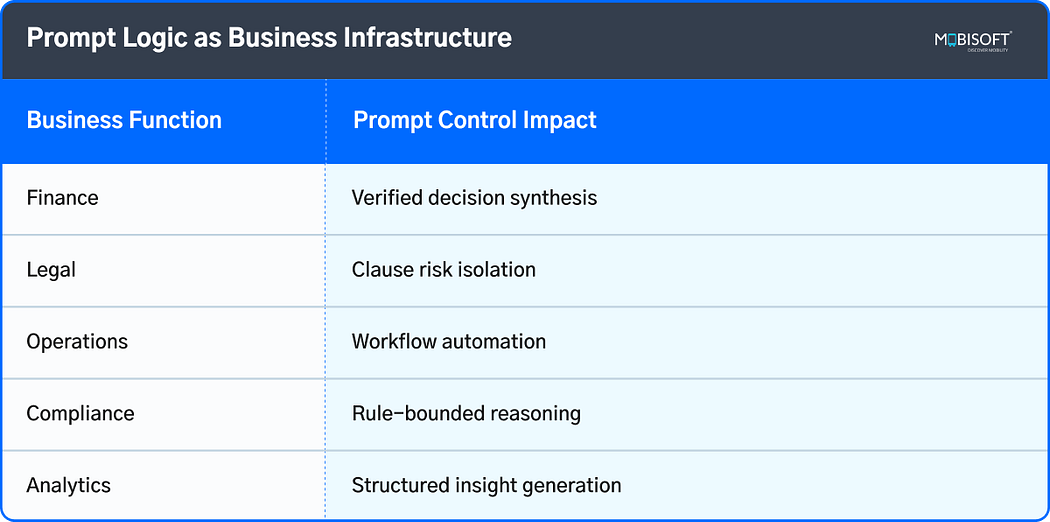

Integrating AI into core business processes exposes organizations to novel forms of operational and regulatory risk. In mature prompt engineering environments, these systems provide the audit trails, control mechanisms, and compliance guarantees that transform AI from a promising tool into a responsible corporate asset.

Auditability and Traceability

Complete decision logs capture the precise chain of reasoning, linking final outputs to the source data and the prompt logic defined through AI prompt ethat generated them.

This creates an immutable record for regulatory scrutiny, allowing auditors to verify the provenance of every automated decision.

Teams gain the ability to perform root-cause analysis on the system’s cognitive process, not just its final answers.

Citation Grounding and Failure Protocols

Architectural rules force the system to mechanically associate any factual claim with a retrievable source, anchoring outputs in verifiable data.

Predefined failure conditions, like low confidence scores or missing information, trigger automated halts instead of speculative responses.

This protocol ensures errors are contained gracefully, defaulting to a safe state that requires human intervention.

Prompt Logic as Compliance Control

Regulatory requirements and internal policies are embedded directly into the prompt’s constraint logic, a core principle of advanced prompt engineering, acting as a first-order filter.

Compliance becomes a structural feature of the system’s operation, consistently applied without depending on the model’s own interpretation.

This method provides a uniform standard of adherence across all interactions, closing a critical gap in operational risk.

Legal and Financial Risk Reduction

Engineering deterministic boundaries around AI behavior converts ambiguous liability into a defined and measurable technical parameter through deliberate AI behavior control.

A clear governance framework establishes formal accountability for system outputs, which is essential for regulatory licensing and insurance.

The resulting documentation satisfies audit requirements and provides tangible evidence of due diligence in AI operations.

Many customer-facing pipelines now apply proven AI chatbot strategies to balance automation and experience.

Prompt Engineering as a Business Capability

When treated as a formal discipline, prompt engineering transitions from a technical task to a core business capability. It functions as the translation layer between strategic objectives and reliable machine execution. This is where abstract AI potential is converted into measurable organizational value, directly impacting efficiency, accuracy, and speed.

Knowledge Automation & Synthesis

This capability enables the systematic conversion of unstructured data into actionable intelligence using proven prompt engineering techniques. It automates the synthesis of reports, research, and procedural guidance, allowing human experts to focus on high-judgment activities. The return on investment materializes through scalable expertise and consistent information delivery.

Decision Quality & Standardization

Prompt architectures encode and enforce critical thinking frameworks, demonstrating how prompt engineering works in real operational settings. They ensure analytical rigor, reduce individual bias, and standardize the quality of outputs across all users. This elevates the consistency and reliability of automated decisions in areas like risk assessment or financial analysis.

Operational Velocity & Orchestration

The true value lies in orchestrating complex workflows. By designing prompt logic that coordinates multiple agents, tools, and verification steps, businesses can automate entire multi-stage processes through AI prompt engineering. This significantly accelerates operational timelines, reducing the cycle time from question to resolved outcome.

Product teams increasingly follow AI-enhanced application design principles to embed cognition into apps.

What Teams Must Rethink for 2026 AI Adoption

A notable 74% of workers use AI regularly at work, but only 33% had formal training, showing a serious skills gap around proper usage. For sustainable adoption in 2026, teams must fundamentally reconceive their approach, moving from leveraging AI tools towards engineering institutional cognition through modern Prompt Engineering. This transition demands new organizational roles, development methodologies, and a primary focus on governed reliability.

New Internal AI Design Layers

Teams must institute formal layers for cognitive architecture, separating the design of reasoning workflows from both model infrastructure and user-facing applications.

This involves building and maintaining modular prompt stacks, verification loops, and agent orchestration logic as version-controlled, core intellectual property aligned with enterprise AI prompt engineering practices.

Investment priorities must pivot from merely consuming model APIs to engineering the proprietary control systems that ensure deterministic behavior within specific business contexts.

Prompt Architects vs. Casual Users

A specialized role for the prompt architect emerges, focused on defining structured reasoning pathways and system-level constraints rather than crafting conversational instructions.

This role requires a hybrid skillset of systems engineering, deep domain expertise, and model mechanics to translate business logic into enforceable cognitive processes.

The general population of casual users then operates within these engineered systems, benefiting from their reliability without needing to command the underlying control logic.

Governance-First Adoption Strategy

Deployment must begin with integrating audit trails, compliance boundaries, and validation gates; these are foundational, not final, features embedded through deliberate AI behavior control.

Every pilot should be evaluated on its governance maturity and demonstrable traceability, moving beyond assessments of output quality in isolated demos.

This strategy inverts the traditional adoption path, making verifiable reliability and accountability the primary, non-negotiable features around which all functionality is constructed.

Redesigning Workflows Around Cognition

Existing business processes should be re-engineered into hybrid cognitive assemblies, incorporating discrete, AI-powered reasoning steps based on how prompt engineering works in production systems.

The goal is to design cohesive systems where AI handles scalable, pattern-driven cognition and humans provide strategic oversight, exception judgment, and ethical calibration.

The static workflows are becoming dynamic, intelligent circuits, fundamentally enhancing both operational throughput and decision quality.

Engineering teams also benefit from AI-enabled software development workflows to govern delivery and change management.

From Prompting to AI System Design

The practice of prompt engineering has fundamentally evolved. It is no longer a method for phrasing requests to a chat interface. It has become the essential control language for building reliable, scalable AI systems through advanced prompt engineering. This discipline engineers the cognitive layer itself, defining how reasoning is structured, validated, and governed within production environments.

The future of enterprise AI depends on this architectural perspective. Forward-looking organizations are already building with this understanding, constructing systems where prompts function as executable logic for constraint, orchestration, and compliance. This is what separates a fragile demo from a dependable operational asset.

Ultimately, treating prompt engineering as a core system design function is the strategic differentiator. The AI platform thus offers measurable, auditable, and repeatable business outcomes.

Key Takeaways

Modern prompt engineering is the design of control logic for AI systems. It has evolved from phrasing instructions into architecting the cognitive layer that governs reasoning, safety, and compliance in production.

Reliability is engineered through constraint-first design. By deliberately limiting the AI’s operational space and defining failure protocols, you create deterministic outcomes from non-deterministic models.

Enterprises now require formal AI governance layers. This infrastructure provides mandatory audit trails, enforces citation grounding, and embeds compliance rules directly into the prompt logic to mitigate legal and financial risk.

The core unit of design has shifted from chatbots to agent-based workflows. Complex tasks are decomposed into coordinated sequences of specialized agents, tools, and verification steps, orchestrated by prompt logic.

Adoption must follow a governance-first strategy. Success is measured by the presence of traceability and control mechanisms, making verifiable reliability the primary feature of any deployed system.

The true value lies in automating multi-stage cognitive workflows with predictable quality, reducing errors, and accelerating complex processes.

Building a proprietary control layer creates abstraction from underlying models, allowing the core business logic to remain portable and adaptable across different technologies.

FAQs

How is this new prompt engineering different from fine-tuning?

Fine-tuning adjusts the model’s foundational knowledge. Modern prompt engineering constructs the real-time control logic that governs how that knowledge is applied. It’s the difference between teaching an engineer facts and giving them a precise, fail-safe operating procedure for a specific mission. Both are necessary, but serve distinct purposes.

Does this approach lock us into a specific AI model provider?

A well-architected prompt logic layer creates abstraction by defining the cognitive processes. While some tuning is needed, the core control systems can often be ported or adapted across different models, future-proofing your investment and reducing vendor dependency.

What’s the first concrete step to implement this control-layer mindset?

Begin by instrumenting full traceability for a single high-value workflow. Capture every reasoning step, tool call, and data source. This audit trail doesn’t just monitor; it reveals the specific decision points where control logic is missing. You cannot engineer governance for what you cannot see in detail.

We have skilled, prompt writers. Do we need to hire different profiles now?

Likely, yes. You will need individuals who can design systems, not just craft instructions. Look for people who blend software architecture thinking with an understanding of model behavior. Their skill is translating business risk parameters into deterministic cognitive pathways, a discipline closer to systems engineering than linguistics.

How do you measure the ROI of investing in such a structured approach?

Measure the reduction in cost from errors and reversals, not just time saved. Calculate the savings from containing incidents before they affect customers or compliance. The true ROI is in risk mitigation and the enabled velocity of deploying new, complex AI use cases with predictable reliability.

Can you use open-source models with this engineered approach?

Absolutely. In many ways, this approach is even more critical for open-source models. It allows you to inject the missing enterprise-grade reliability, compliance, and structured reasoning as a separate layer on top of the raw model capability, effectively customizing a general tool for specific, high-stakes work.

Where does retrieval-augmented generation (RAG) fit into this architecture?

RAG becomes a governed tool within the cognitive pipeline, not the whole system. The prompt control layer determines when and how to retrieve information, how to validate its relevance, and what to do if retrieval fails. This prevents RAG systems from becoming confident sources of outdated or off-topic data.

Sign in to leave a comment.