Pick the wrong shared storage and your apps will tell you fast: latency climbs, file locks pile up, and backup windows slip. The good news is that the SAN vs NAS choice becomes straightforward once you map it to how your software reads and writes data.

This guide cuts through buzzwords and focuses on the practical signals you can measure: access pattern, concurrency, and tolerance for delay. Along the way, we will cover modern protocols like NVMe over Fabrics, NFSv4.1 with pNFS, and SMB 3.1.1 features that shape performance in 2025.

By the end, you will have a clear decision path, a quick checklist, and examples that match real workloads so you can choose once and avoid surprises later.

SAN vs NAS in plain English

SAN presents raw blocks to a host. The OS formats those blocks with a filesystem and mounts them as local disks. NAS shares files over the network, and the server handles the filesystem for you. SAN storage delivers volumes that look like fast local drives, while NAS exposes folders that many clients can open at once. Think of SAN for consistent low latency and tight control at the block level, and NAS for shared file access, easy growth, and collaboration.
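
To make the difference concrete, here is a toy model in Python. It assumes a 4 KiB block size and uses hypothetical classes (`BlockVolume`, `FileShare`) that stand in for a LUN and a share; no real storage protocol is involved. The point is who keeps the map from file names to bytes: with SAN it is the host's own filesystem, with NAS it is the server.

```python
from dataclasses import dataclass, field

BLOCK_SIZE = 4096  # assumed block size in bytes


@dataclass
class BlockVolume:
    """Toy SAN-style LUN: the host sees only numbered, fixed-size blocks."""
    num_blocks: int
    blocks: dict = field(default_factory=dict)

    def _check(self, lba: int) -> None:
        if not 0 <= lba < self.num_blocks:
            raise IndexError(f"LBA {lba} out of range")

    def write_block(self, lba: int, data: bytes) -> None:
        self._check(lba)
        if len(data) != BLOCK_SIZE:
            raise ValueError("writes must be exactly one block")
        self.blocks[lba] = data

    def read_block(self, lba: int) -> bytes:
        self._check(lba)
        return self.blocks.get(lba, bytes(BLOCK_SIZE))  # unwritten blocks read as zeros


@dataclass
class FileShare:
    """Toy NAS-style share: clients name files by path; the server owns the layout."""
    files: dict = field(default_factory=dict)

    def write_file(self, path: str, data: bytes) -> None:
        self.files[path] = data

    def read_file(self, path: str) -> bytes:
        return self.files[path]


# SAN: the host's own filesystem must remember that "report.txt" lives in LBA 3.
host_fs_map = {"report.txt": [3]}
vol = BlockVolume(num_blocks=8)
vol.write_block(host_fs_map["report.txt"][0], b"hello".ljust(BLOCK_SIZE, b"\0"))

# NAS: the client just names the file; the server decides where the bytes go.
share = FileShare()
share.write_file("/projects/report.txt", b"hello")
```

Because the block volume knows nothing about files, two hosts writing the same LUN without a cluster-aware filesystem would corrupt each other's maps, which is one reason shared access gravitates to NAS.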

How they deliver data

SAN

- Access mode: block level

- Common transports: Fibre Channel, iSCSI, and NVMe over Fabrics, including NVMe/FC, NVMe/TCP, and NVMe/RDMA

- Host software: multipath I/O, volume manager, filesystem created by the OS

- Typical strengths: predictable latency, high IOPS with small random reads and writes, per-host isolation
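
The multipath I/O mentioned above can be sketched as a path selector that rotates requests across healthy paths and skips failed ones. `MultipathDevice` and the path names are hypothetical; real stacks such as Linux dm-multipath offer several path-selection policies and also handle path checking, queuing, and retries, which this toy omits.

```python
class MultipathDevice:
    """Toy multipath policy: rotate I/O across healthy paths, skip failed ones."""

    def __init__(self, paths: list[str]) -> None:
        if not paths:
            raise ValueError("need at least one path")
        self.paths = list(paths)
        self.healthy = set(self.paths)
        self._next = 0  # index of the next path to try

    def fail_path(self, path: str) -> None:
        self.healthy.discard(path)  # e.g. a link or target port went down

    def restore_path(self, path: str) -> None:
        if path in self.paths:
            self.healthy.add(path)

    def pick_path(self) -> str:
        # Try each configured path at most once per call, in round-robin order
        for _ in range(len(self.paths)):
            path = self.paths[self._next]
            self._next = (self._next + 1) % len(self.paths)
            if path in self.healthy:
                return path
        raise RuntimeError("all paths down")


dev = MultipathDevice(["fabricA:port1", "fabricB:port1"])
print([dev.pick_path() for _ in range(4)])  # alternates A, B, A, B
dev.fail_path("fabricA:port1")
print([dev.pick_path() for _ in range(2)])  # only B remains
```

Testing failover in production means doing the equivalent of `fail_path` for real (pulling a cable or disabling a switch port) and confirming I/O keeps flowing on the surviving fabric.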

NAS

- Access mode: file level

- Common protocols: NFSv3 or NFSv4.1 (optionally with pNFS), SMB 3.x with Multichannel and SMB Direct (RDMA)

- Server role: handles the filesystem and file locking, clients mount exports or shares

- Typical strengths: many clients read and write the same tree, simple permissions, fast scale-out with modern NAS clusters
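
Parallel NAS data paths such as pNFS let a client read different parts of one file from several data servers at once. The sketch below assumes a simple round-robin striping scheme with a fixed stripe unit and dense packing on each server; `locate` and `split_read` are hypothetical helpers, and in real pNFS the stripe parameters and server list arrive in a layout the client obtains from the metadata server.

```python
def locate(offset: int, stripe_unit: int, servers: list[str]) -> tuple[str, int]:
    """Map a file byte offset to (data server, offset within that server's stripe file)."""
    stripe_index = offset // stripe_unit
    server = servers[stripe_index % len(servers)]
    # Dense packing: each server stores only its own stripe units, back to back
    local = (stripe_index // len(servers)) * stripe_unit + offset % stripe_unit
    return server, local


def split_read(offset: int, length: int, stripe_unit: int,
               servers: list[str]) -> list[tuple[str, int, int]]:
    """Split one client read into (server, local offset, length) pieces that can run in parallel."""
    pieces = []
    end = offset + length
    while offset < end:
        # Never cross a stripe-unit boundary within one piece
        chunk = min(stripe_unit - offset % stripe_unit, end - offset)
        server, local = locate(offset, stripe_unit, servers)
        pieces.append((server, local, chunk))
        offset += chunk
    return pieces


for piece in split_read(1000, 2100, 1024, ["ds1", "ds2", "ds3"]):
    print(piece)
```

A read that spans several stripe units touches several servers, so aggregate bandwidth grows with the number of data servers rather than being capped by one NAS head.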

Performance, latency, and concurrency

- Latency-sensitive and random I/O: Databases, virtualization hosts, and transactional systems usually favor SAN. Block protocols skip the file-server layer and its metadata and locking round trips, and multipathing spreads I/O across paths so queues stay deep and latency stays steady.

- High throughput with shared files: Media pipelines, home directories, build farms, and analytics stages often favor NAS. NFSv4.1 with pNFS, or SMB with Multichannel and RDMA, can approach line-rate bandwidth while the server coordinates file locks across many clients.

- Mixed profiles: Some environments run both. For example, a hypervisor keeps VM datastores on SAN, then guest VMs share project files from NAS.
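
The access-pattern and concurrency signals above can be measured from an I/O trace. This sketch assumes you can export each request as (client, offset, size); `IO`, `profile`, and `lean` are hypothetical, and the cutoffs in `lean` are placeholders to tune during a pilot, not established thresholds.

```python
from typing import NamedTuple


class IO(NamedTuple):
    client: str  # host or pod issuing the request
    offset: int  # byte offset within the file or volume
    size: int    # request size in bytes


def profile(trace: list[IO]) -> dict:
    """Summarize a trace: share of sequential requests, distinct clients, mean size."""
    last_end: dict[str, int] = {}
    sequential = 0
    for io in trace:
        # Sequential if the request starts where this client's previous one ended
        if last_end.get(io.client) == io.offset:
            sequential += 1
        last_end[io.client] = io.offset + io.size
    n = len(trace)
    return {
        "sequential_ratio": sequential / n if n else 0.0,
        "clients": len(last_end),
        "avg_size": sum(io.size for io in trace) / n if n else 0.0,
    }


def lean(stats: dict, random_cutoff: float = 0.5, shared_cutoff: int = 2) -> str:
    """Hypothetical rule of thumb; both cutoffs are placeholders."""
    if stats["clients"] >= shared_cutoff:
        return "NAS (shared file access)"
    if stats["sequential_ratio"] < random_cutoff:
        return "SAN (latency-sensitive random I/O)"
    return "either; decide on throughput and operations"


db_trace = [IO("db1", 0, 8192), IO("db1", 1_048_576, 8192), IO("db1", 65_536, 8192)]
print(profile(db_trace), lean(profile(db_trace)))
```

Pair these numbers with measured latency percentiles from the same pilot; the ratio alone says nothing about how much delay the application tolerates.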

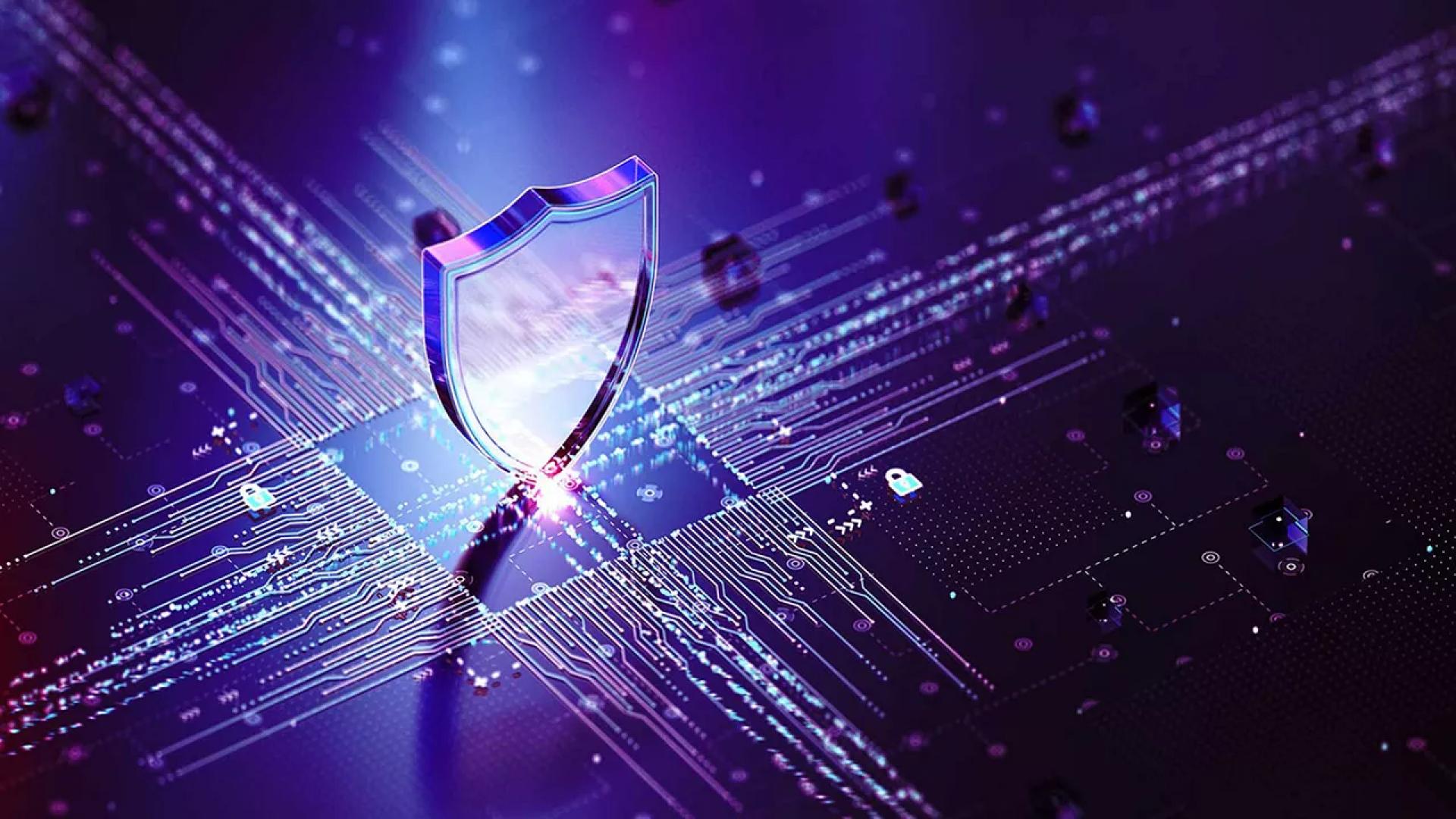

Security and zero trust basics

- SAN security: Use Fibre Channel zoning or network segmentation to limit which initiators can reach which targets, apply LUN masking, and require authentication. iSCSI supports CHAP, IP networks can add IPsec, and current NVMe/TCP specifications define TLS support. Keep storage traffic on dedicated VLANs or fabrics and restrict management planes.

- NAS security: Use NFSv4.1 with Kerberos where possible, choosing sec=krb5i for integrity or sec=krb5p for integrity plus privacy. On SMB, require the 3.1.1 dialect (its pre-authentication integrity is always on), enable encryption with modern AES-GCM ciphers, and bind access to your directory service. Harden exports and shares, including root_squash for NFS where appropriate.
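
Export hardening is easy to check mechanically. This sketch assumes Linux exports(5)-style lines; `audit_export` is a hypothetical helper, not an existing tool, and its parser is deliberately simplified (no quoted paths, line continuations, or default-option blocks). Because root_squash is the Linux default, it looks for the opposite, `no_root_squash`, and treats a missing `sec=` as the default `sec=sys`.

```python
import re

# Kerberos with integrity (krb5i) or integrity plus privacy (krb5p)
STRONG_SEC = {"krb5i", "krb5p"}
CLIENT_RE = re.compile(r"([^()]*)\(([^)]*)\)")  # host(opt1,opt2,...)


def audit_export(line: str) -> list[str]:
    """Flag risky options on one exports(5)-style line (simplified parser)."""
    findings: list[str] = []
    line = line.split("#", 1)[0].strip()  # drop comments
    if not line:
        return findings
    path, *clients = line.split()
    for client in clients:
        m = CLIENT_RE.fullmatch(client)
        host, opts = (m.group(1), m.group(2).split(",")) if m else (client, [])
        options = dict(opt.partition("=")[::2] for opt in opts)  # "sec=krb5p" -> {"sec": "krb5p"}
        if "no_root_squash" in options:
            findings.append(f"{path} {host}: no_root_squash maps client root to server root")
        # With no sec= option, the export falls back to sec=sys (no Kerberos)
        flavors = set(options.get("sec", "sys").split(":"))
        weak = sorted(flavors - STRONG_SEC)
        if weak:
            findings.append(f"{path} {host}: allows sec={':'.join(weak)}; prefer krb5i or krb5p")
    return findings


for finding in audit_export("/srv/projects 10.0.0.0/24(rw,sync,sec=krb5p) build01(rw,no_root_squash)"):
    print(finding)
```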

Resilience and operations

- SAN: Design for dual fabrics or redundant IP paths, enable multipath on every host, and test path failover. Snapshots, replication, and consistency groups protect block volumes that back critical apps.

- NAS: Scale-out NAS spreads files across nodes and can survive node loss while keeping a single namespace online. Use directory-based quotas, snapshots, and replication for quick recovery and protection against deletions or ransomware.
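
Why a scale-out cluster can lose a node without losing files comes down to placing each file on more than one node. Real products use their own placement and protection schemes (replication or erasure coding); this sketch swaps in rendezvous (highest-random-weight) hashing with two full copies purely to illustrate the idea. `place`, `readable_after_loss`, and the node names are hypothetical.

```python
import hashlib


def score(node: str, path: str) -> int:
    # Stable pseudo-random weight for a (node, path) pair
    digest = hashlib.sha256(f"{node}|{path}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def place(path: str, nodes: list[str], copies: int = 2) -> list[str]:
    """Rendezvous hashing: the `copies` highest-scoring nodes hold this file."""
    return sorted(nodes, key=lambda n: score(n, path), reverse=True)[:copies]


def readable_after_loss(paths: list[str], nodes: list[str], lost: str, copies: int = 2) -> bool:
    """True if every file still has at least one replica on a surviving node."""
    return all(any(n != lost for n in place(p, nodes, copies)) for p in paths)


nodes = ["n1", "n2", "n3", "n4"]
paths = [f"/projects/file{i}" for i in range(50)]
print(place("/projects/file0", nodes))
print(all(readable_after_loss(paths, nodes, lost) for lost in nodes))
```

A useful side effect of rendezvous hashing is that removing a node only moves the files that had a copy on it; every other placement stays put, which keeps rebalancing traffic small.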

Containers and modern platforms

- Block for single-writer pods: In container platforms, ReadWriteOnce volumes (read-write on one node) and ReadWriteOncePod volumes (read-write for one pod) map cleanly to block or block-backed filesystems. This fits databases and stateful services that want exclusive write access.

- File for shared access: ReadWriteMany volumes align with NAS. That suits web tiers, model serving that shares checkpoints, and build systems that many pods touch at the same time.
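
In Kubernetes terms, the choice shows up as the `accessModes` and storage class on a PersistentVolumeClaim. This sketch builds claims as plain dicts that can be dumped to JSON for `kubectl apply -f`; the `pvc` helper and the storage class names `san-block` and `nas-nfs` are hypothetical and must match classes your cluster actually defines.

```python
import json


def pvc(name: str, size: str, shared: bool, storage_class: str) -> dict:
    """Build a PersistentVolumeClaim manifest as a plain dict."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # ReadWriteMany for many writers across nodes; ReadWriteOnce for one node
            "accessModes": ["ReadWriteMany" if shared else "ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


db = pvc("orders-db", "200Gi", shared=False, storage_class="san-block")  # hypothetical class
web = pvc("site-assets", "1Ti", shared=True, storage_class="nas-nfs")    # hypothetical class
print(json.dumps(db, indent=2))
```

Whether a given class can actually satisfy ReadWriteMany depends on its provisioner, so check the driver's documented access modes before relying on it.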

Cost, skills, and growth curve

- SAN: More moving parts to operate. You manage host multipath, zoning or target access lists, and volume layouts. The payoff is low jitter and strong isolation for your hottest workloads.

- NAS: Quicker to deploy and grow. Most teams can add capacity, expand shares, and delegate folder permissions without touching clients. Performance scales well when the platform supports parallel data paths such as pNFS or SMB Multichannel.

Conclusion

There is no universal winner, only the best fit for the work your systems do every minute. If your application hammers small random writes and punishes any jitter, block access over a well-engineered SAN will feel like local storage while giving you centralized control.

If your users and services need to open the same tree from many places and grow capacity without touching clients, modern NAS brings parallel reads and writes with strong security options.

Many teams run both and let each dataset live where it performs and restores best. Start with the measurements, run a real pilot, and keep the design simple. That combination rarely disappoints in production.
