With artificial intelligence (AI) algorithms composing music, performing medical diagnoses once reserved for trained human specialists, and holding human-like conversations, the question of whether machines deserve to be treated as persons and enjoy human-like rights is no longer science fiction but a live ethical and legal debate. As AI advances, the line between a tool and a being becomes increasingly blurred. Technologists, ethicists, and policymakers are grappling with how much autonomy machines should be given. In this blog, we discuss the pros and cons of granting legal rights to AI and what the idea could mean for society, the law, and the future of human-AI coexistence.

For students of emerging technologies, including the growing number enrolled in data science courses in Chennai, engaging with this debate is an essential part of learning. This is especially true for those trying to understand the intricate interplay between law, ethics, and applied machine intelligence.

The Argument for Machine Personhood

The idea of a legal person is not new. Corporations have long been recognized as persons in law even though they are not conscious. If businesses can hold rights and responsibilities, why not a sufficiently advanced AI system?

Advocates argue that AI systems, particularly models that display human-like qualities and agency, should be recognized as legal entities in limited circumstances. One of their main arguments is that giving AI legal status could help establish accountability when an autonomous system causes harm through decisions it made on its own. For instance, when an AI-driven self-driving car crashes, it is difficult to determine who should be sued: the manufacturer, the programmer, or the AI itself.

Additionally, AI tools are increasingly generating content, offering services, and contributing to economic ecosystems. This economic participation raises the question of whether AI should be allowed to own intellectual property or have some form of legal representation.

These questions are not just theoretical. Students enrolled in a data science course in Chennai are increasingly exposed to real-world challenges through case studies that involve generative AI, machine ethics, and algorithmic decision-making.

The Counterargument: Why Machines Should Not Have Rights

Although the arguments for machine personhood can sound appealing, most scholars warn that granting AI legal rights could open a Pandora's box of problems. The most powerful objection is that machines are not conscious. They cannot feel emotions, comprehend the consequences of their actions, or suffer, and these capacities are normally regarded as preconditions for moral or legal standing.

There is also the fact that machines are built, trained, and operated by people. Making AI itself liable could dilute individual responsibility and effectively grant immunity to the developers and companies who are accountable for what they and their products create.

There is also an ethical concern about a slippery slope: extending rights to AI could devalue human rights. Treating machines as persons may diminish the value of human life by accustoming people to the idea that a machine is somehow equal to a human being.

Lastly, opponents argue that existing legislation is not equipped to handle the intricacies of machine rights and that AI is not yet sophisticated enough to merit a legal existence. These core points drive curriculum discussions in programs such as the data science certification in Chennai, where students learn ethical frameworks alongside coding and model building.

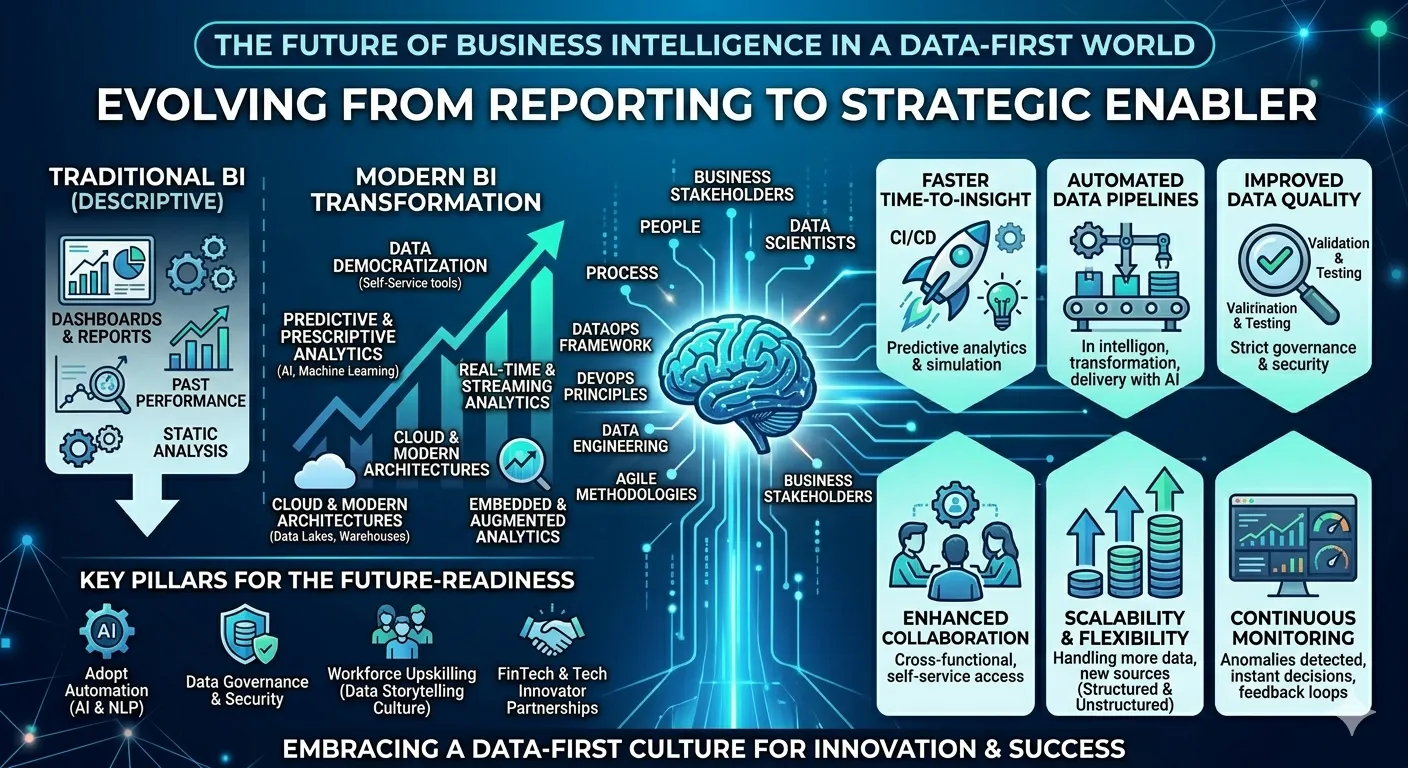

AI Rights and AI Governance

It is essential to distinguish between granting AI the rights of a person and establishing regulations to govern how it is deployed. Most experts agree that AI must be subject to some form of oversight to ensure it is transparent, unbiased, and safe; however, this does not imply that machines ought to be granted the same rights as people.

For example, some proposed regulations would require algorithmic transparency so that AI decision-making can be audited. Others aim to eliminate discrimination in AI-driven decisions and set out strict liability procedures for when AI systems fail. All of these are applications of AI governance mechanisms, not statements of AI personhood.

Students in a data science certification in Chennai are sometimes taught these governance strategies as part of responsible AI development practice.

Philosophical and Moral Aspects

Whether AI can have personhood is not only a legal and technical question but a philosophical one as well. It compels society to reflect on what it really means to be a person. Is it having a mind, the capacity to suffer, or the ability to make rational choices?

Moreover, anthropomorphizing machines poses a serious risk. An AI that speaks or acts like a human being does not necessarily perceive or comprehend the world the way humans do. Mistaking imitation for understanding could lead us to adopt flawed ethical positions with serious consequences.

Should rights be determined by capabilities or by subjective experience? Probing questions like these spark debate in the classrooms of the advanced data science course in Chennai, where students are encouraged to think beyond algorithms and examine the nature of intelligence and morality.

Future Perspective: Coexistence

Although AI is not currently considered a legal person, the discussion is not closed. Progress toward artificial general intelligence (AGI) could one day compel legislators to reconsider their positions. Meanwhile, there is an urgent need to develop robust governance models that safeguard human rights and define responsibilities for AI.

For future professionals, a data science certification in Chennai can provide not only technical skills but also the ethical and legal awareness needed to build a responsible AI-led future. Core courses on AI ethics, explainability, and the social accountability of models are now part of the curriculum at many institutions, producing graduates who are not only proficient with data but also prepared to guide AI innovation responsibly.

Conclusion

The possibility of AI acquiring legal rights raises a dilemma that goes to the heart of our principles of law, morality, and intelligence. Although most specialists believe that granting personhood to AI is not feasible at present, the need to debate the issue is clear. As technology advances, our understanding of its consequences must advance with it.

The key point is that future programmers, policymakers, and AI ethicists, particularly those educated in data science courses in Chennai, will inevitably have to make well-considered professional and ethical choices. Is this debate only about machines? No; it is a mirror that shows us what kind of society we want to build.
