As artificial intelligence accelerates toward safer superintelligence, organizations face a new imperative: building trust, transparency, and governance into every AI system. While innovation drives competitive advantage, failing to integrate humanist AI principles can lead to unintended risks, regulatory backlash, and erosion of public confidence. In this rapidly evolving landscape, AI ethics in business and proactive responsible AI development are no longer optional. They are strategic differentiators that shape long-term success.

What Role Does Global Policy Play in Responsible AI Development?

Global policymakers increasingly recognize that unchecked AI could reshape society in profound ways. Countries, international bodies, and industry consortia are developing regulations to ensure ethical AI deployment, emphasizing accountability, fairness, and safety.

Key functions of global AI policy include:

- Setting minimum ethical standards for AI developers worldwide.

- Encouraging transparency and explainability in complex systems.

- Fostering cross-border cooperation for shared safety protocols.

- Supporting innovation while mitigating systemic risks.

For example, integrating AI business solutions within compliance frameworks can help companies meet international standards while maintaining operational efficiency. Forward-looking organizations that align with emerging policies before they become mandatory can secure an early AI competitive advantage.

What Will Trustworthy AI Governance Look Like in 2030?

The next decade will demand AI systems that are not only capable but also accountable, explainable, and human-centered. Governance frameworks will likely evolve to include:

1. Layered Oversight Structures

- Multi-tier ethics boards for strategic and operational AI decisions.

- Real-time audits embedded into AI workflows.

- Risk escalation mechanisms for high-impact systems.

2. Transparency as Default

- Full visibility into AI decision-making for stakeholders.

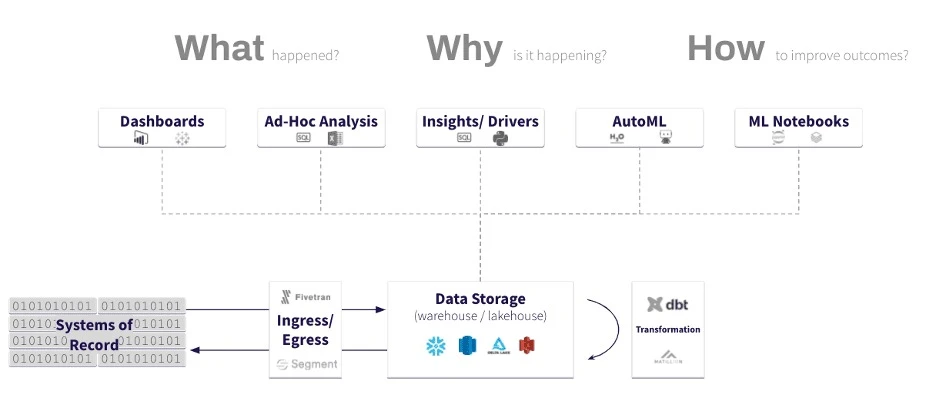

- Use of predictive analytics technologies to anticipate anomalies and prevent bias.

- Public dashboards to build trust and demonstrate compliance.

3. Continuous Human Oversight

- Humans as the final arbiters for critical decisions.

- Humanist AI principles ensure that empathy, fairness, and ethical reasoning remain central.

- Integration of HITL (human-in-the-loop) frameworks across enterprise systems.

By 2030, companies that implement these practices will not only meet regulatory expectations but also cultivate consumer trust, enhance brand reputation, and secure lasting AI competitive advantage.
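The risk escalation and human-in-the-loop items above can be made concrete with a small routing rule. The following is a minimal sketch, not a reference implementation: the `Decision` fields, the three oversight tiers, and the threshold values are all hypothetical and chosen only for illustration. A real deployment would derive them from its own risk assessment.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"   # low impact, high confidence
    HUMAN_REVIEW = "human_review"   # a person makes the final call
    ETHICS_BOARD = "ethics_board"   # escalated to the top oversight tier


@dataclass(frozen=True)
class Decision:
    description: str
    impact: float      # hypothetical 0..1 score of potential harm
    confidence: float  # model's self-reported confidence, 0..1


# Illustrative thresholds only; not taken from any regulation or standard.
HIGH_IMPACT = 0.8
REVIEW_IMPACT = 0.4
MIN_CONFIDENCE = 0.9


def route_decision(d: Decision) -> Route:
    """Pick the oversight tier for a single AI decision."""
    if d.impact >= HIGH_IMPACT:
        return Route.ETHICS_BOARD
    if d.impact >= REVIEW_IMPACT or d.confidence < MIN_CONFIDENCE:
        return Route.HUMAN_REVIEW
    return Route.AUTO_APPROVE


if __name__ == "__main__":
    samples = [
        Decision("product recommendation", impact=0.1, confidence=0.97),
        Decision("loan pre-screening", impact=0.6, confidence=0.95),
        Decision("medical triage suggestion", impact=0.9, confidence=0.99),
    ]
    for s in samples:
        print(f"{s.description} -> {route_decision(s).value}")
```

The design choice worth noting is that high impact always escalates, regardless of model confidence, so a confident model cannot bypass human review on critical decisions.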

Ethics Boards, Audits, and Algorithms: Rethinking Oversight for Superintelligence

Oversight in the era of safer superintelligence requires a fusion of human judgment and automated monitoring:

- Ethics Boards: Cross-functional teams including developers, ethicists, legal experts, and business leaders.

- Automated Audits: Continuous AI behavior assessment using machine learning services to detect anomalies and potential ethical violations.

- Algorithmic Transparency: Designing AI models with explainability to ensure stakeholders understand how decisions are made.

This multi-layered approach ensures AI remains responsible and aligned with human values, even as it becomes more autonomous and complex.
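As one illustration of what an automated audit might check, the sketch below compares approval rates across groups and flags the system for human review when the gap is too wide. This is only one simple fairness measure (a demographic parity gap), swapped in here as an example; the record format, function names, and the `MAX_GAP` threshold are assumptions made for this sketch rather than part of any particular auditing product.

```python
from collections import defaultdict

# Illustrative threshold: the largest tolerated difference in approval rates.
MAX_GAP = 0.2


def approval_rates(records):
    """Map each group to its approval rate.

    `records` is an iterable of (group, approved) pairs, where
    `approved` is a bool. The format is hypothetical.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def audit(records, max_gap=MAX_GAP):
    """Return per-group rates, the parity gap, and whether to escalate."""
    rates = approval_rates(records)
    gap = max(rates.values()) - min(rates.values()) if rates else 0.0
    return {"rates": rates, "gap": gap, "flagged": gap > max_gap}


if __name__ == "__main__":
    decisions = [
        ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
        ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
    ]
    report = audit(decisions)
    print(report)
```

A flagged report would not prove bias on its own. In the layered model described above, it would be the trigger that hands the case to an ethics board for human judgment.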

How Humanist Principles Can Guide Future AI Regulation

Lawmakers can draw on humanist AI concepts to shape forward-looking regulations.

These principles emphasize:

- Empathy: AI systems should anticipate and mitigate potential harm.

- Accountability: Clear lines of responsibility for AI-driven decisions.

- Transparency: Open disclosure of AI capabilities, limitations, and outcomes.

Integrating these principles can make AI governance frameworks more adaptable and enforceable, creating a global standard for ethical AI that balances innovation with public safety.

Why Businesses Must Lead in AI Ethics Standards

Companies can no longer wait for regulations to dictate standards. Early adopters of responsible AI development gain:

- Market Differentiation: Ethical AI fosters trust with customers, investors, and partners.

- Risk Mitigation: Proactive governance reduces liability from misuse or algorithmic bias.

- Innovation Freedom: Clear ethical guardrails enable safe experimentation with new AI technologies.

- Policy Influence: Businesses that set standards help shape national and international regulations.

Examples of practical implementation include:

- Incorporating IoT deployment technologies to ensure safe, monitored AI interactions in physical environments.

- Leveraging mobile app development to deliver transparent, human-centered AI experiences to end users.

- Integrating AI-ML solutions for continuous monitoring and improvement of AI systems.

Businesses that proactively embrace these strategies secure both ethical integrity and a tangible AI competitive advantage.

Conclusion

The trajectory toward safer superintelligence demands that trust, governance, and ethical oversight be central to AI strategy. Organizations that embrace humanist AI principles today, through ethics boards, continuous audits, transparent reporting, and proactive policy engagement, will be the leaders of tomorrow.

In practice:

- Trustworthy AI governance safeguards both people and business.

- Responsible AI development accelerates innovation while reducing risk.

- Humanist AI ensures that technology serves human values, not just efficiency metrics.

By leading in AI ethics in business, companies not only future-proof themselves against regulatory changes but also build sustainable innovation models, creating the ultimate AI competitive advantage.

FAQs

Q1: What makes AI “humanist”?

AI is considered humanist when it prioritizes human values, empathy, transparency, and accountability throughout design, deployment, and governance.

Q2: Can businesses benefit from proactive AI governance?

Yes. Ethical oversight enhances trust, reduces risk, enables safe innovation, and creates a measurable AI competitive advantage.

Q3: How do ethics boards and audits work in AI governance?

Ethics boards provide cross-functional oversight, while audits, often powered by machine learning services, continuously check AI decisions for bias, fairness, and compliance.

Q4: Why should companies shape AI standards instead of waiting for law?

Leading in ethics positions a company as a trusted innovator, reduces regulatory risk, and influences policy frameworks to align with both business and societal interests.
