Setting up Intel servers seems simple at first glance, yet small choices can ripple into big headaches.

A careful plan prevents most issues, and it also saves money over the server’s full life. Your checklist should include hardware fit, firmware, power, storage, security, and monitoring from day one.

Each area can hide traps that appear only under load or during routine maintenance. You do not need exotic tools to avoid them, just consistent habits and clear standards. Write the plan, verify each step, and document what you changed and why.

That way, your future self, or the next engineer, can support the system with confidence. The goal is a stable platform that stays boring, performs well, and scales smoothly as business needs grow and change.

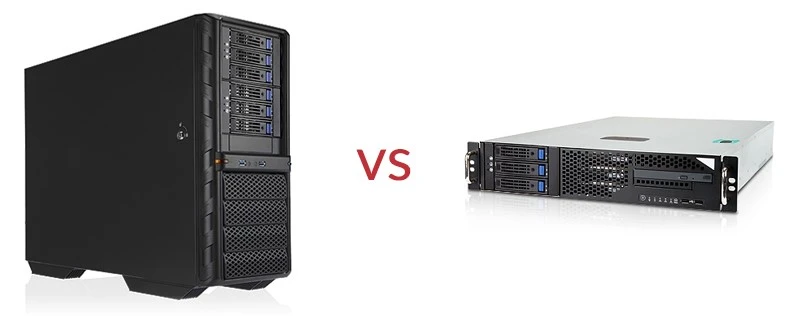

1. Skipping Compatibility And Sizing Checks

Many problems start when teams assume any part will work fine with any other part. Intel server platforms are flexible, but firmware levels, memory types, and network cards still require careful matching.

If you mix mismatched ECC modules or choose unsupported DIMM population layouts, performance and stability can suffer. The same holds for PCIe cards, NVMe backplanes, and even rack rails in tight spaces.

Right-sizing is not only about CPU cores; it also covers memory bandwidth, IOPS, and network throughput. Spend time with vendor compatibility lists and validated bill-of-materials guides before purchasing parts.

Model realistic workloads rather than peak marketing numbers, and add headroom for bursts and failures. Doing this work early is dull, yet it prevents frantic scrambles later when downtime is expensive and public.
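As a rough illustration of sizing on more than CPU cores, the sketch below computes a host count per resource and reports the one that drives the total. The function names, the 30% headroom, the single spare host, and all figures in the usage note are assumptions for the example, not vendor guidance.

```python
import math


def hosts_needed(peak_demand, per_host_capacity, headroom=0.3, spare_hosts=1):
    """Hosts required so peak demand fits below (1 - headroom) utilization, plus spares."""
    if per_host_capacity <= 0 or not 0 <= headroom < 1:
        raise ValueError("capacity must be positive and headroom in [0, 1)")
    usable_per_host = per_host_capacity * (1 - headroom)
    return math.ceil(peak_demand / usable_per_host) + spare_hosts


def size_cluster(demand, per_host, headroom=0.3, spare_hosts=1):
    """Return (host_count, bottleneck_resource) for the most constrained resource."""
    counts = {
        resource: hosts_needed(demand[resource], per_host[resource], headroom, spare_hosts)
        for resource in demand
    }
    bottleneck = max(counts, key=counts.get)
    return counts[bottleneck], bottleneck
```

With hypothetical inputs such as `size_cluster({"cores": 200, "mem_bw_gbs": 900}, {"cores": 64, "mem_bw_gbs": 200})`, memory bandwidth rather than core count sets the number of hosts, which is the kind of surprise this exercise is meant to surface before purchase.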

2. Designing Weak Airflow And Cooling

Heat is the silent killer of server reliability, and airflow mistakes often hide until the room gets busy. Intel servers can throttle under high inlet temperatures, cutting performance right when users need it most.

Poor rack placement, blocked intakes, and mismatched fan profiles make these issues worse than they should be. Start by mapping hot and cold aisles, then confirm the plan with simple temperature logging during a dry run.

Finally, track inlet and exhaust temperatures across seasons, since summer conditions expose marginal designs quickly. A few hours of setup now can prevent many painful nights spent chasing random throttling and reboots.

- Use blanking panels to stop recirculation in empty rack spaces.

- Keep front intakes clear; route and tie cables along sides and rear.

- Validate fan curves in BIOS and out-of-band controllers under load.
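The temperature logging from a dry run can be summarized with a few lines of code. This is a minimal sketch that assumes readings arrive as `(timestamp, inlet_celsius)` pairs from whatever sensor source you use; the 27 °C limit is a placeholder, so substitute the inlet limit your hardware vendor documents.

```python
from statistics import mean


def summarize_inlet(samples, limit_c=27.0):
    """Summarize inlet temperature samples and list readings above the limit.

    samples: iterable of (timestamp, inlet_celsius) pairs.
    limit_c: assumed threshold for this sketch, not a vendor specification.
    """
    samples = list(samples)
    temps = [temp for _, temp in samples]
    over_limit = [(ts, temp) for ts, temp in samples if temp > limit_c]
    return {
        "max_c": max(temps),
        "mean_c": round(mean(temps), 1),
        "over_limit": over_limit,
    }
```

Running this per rack position and per season makes marginal spots visible as a growing `over_limit` list rather than as unexplained throttling.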

3. Underestimating Power And Redundancy

Power planning is more than adding wattage numbers and hoping the breakers hold steady. Nameplate ratings rarely match real workloads, which vary by CPU features, memory density, and storage choices.

Without margin, a small spike can trip a circuit and bring down an entire rack unexpectedly. Redundant power supplies help, but only when each feed can carry the full live load during an outage.

That means testing failover, not just ticking the checkbox after plugging cords into separate PDUs. Track inrush behavior as well, since many servers draw more current during boot storms.

When power is predictable and redundant, uptime improves, and routine maintenance becomes far less stressful for everyone.
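The redundancy rule above reduces to simple arithmetic: with one feed lost, the surviving feed must carry every server on its own. The sketch below assumes measured per-server draw in watts and an 80% continuous-load derating; both the function name and the derating factor are assumptions, so check your local electrical code and PDU ratings.

```python
def feed_survives_failover(server_watts, feed_capacity_watts, derate=0.8):
    """Check whether a single feed can carry the whole rack if the other feed fails.

    server_watts: measured draw per server (not nameplate), in watts.
    derate: fraction of feed capacity treated as usable (assumed 0.8 here).
    Returns (ok, margin_watts); a negative margin means the feed would be overloaded.
    """
    total_load = sum(server_watts)
    usable = feed_capacity_watts * derate
    margin = round(usable - total_load, 1)
    return margin >= 0, margin
```

A calculation like this only tells you where to look; pulling one feed during a maintenance window is still the real test.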

4. Rushing Firmware, BIOS, And Driver Updates

Updates sound routine, but mismatched versions can cause odd reboots, missing devices, and unstable performance. Intel platforms rely on a stack: BIOS, BMC, microcode, RAID firmware, NIC firmware, and operating system drivers.

Treat that stack as one unit, and validate it in a lab before touching production racks. Keep a golden image and a rollback path so you can recover quickly if something misbehaves.

Most of all, test under real workload patterns, not only synthetic tools, because edge cases show up under business traffic. A calm, standard process keeps updates boring and safe, which is exactly what you need for stability.

- Maintain a “known-good” firmware bundle with BIOS, BMC, NIC, RAID, and microcode.

- Test upgrades and downgrades; confirm you can roll back fast if needed.

- Pin operating system driver versions to match validated firmware stacks.

- Automate inventory and version reporting across all hosts.

- Update out-of-band controllers first to avoid losing remote access mid-upgrade.
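Version reporting against a known-good bundle can start as a plain dictionary comparison. In the sketch below, the component names and version strings are hypothetical placeholders, and how you collect `host_versions` (inventory tool, out-of-band API, or scripts) is left open.

```python
# Hypothetical known-good bundle; replace with the versions you validated in the lab.
KNOWN_GOOD = {
    "bios": "1.4.2",
    "bmc": "2.10.0",
    "nic": "9.20",
    "raid": "52.16.1",
    "microcode": "0x2b000461",
}


def find_drift(host_versions, baseline=KNOWN_GOOD):
    """Return {component: (found, expected)} for every component off the baseline.

    A component missing from host_versions is reported with found=None.
    """
    return {
        component: (host_versions.get(component), expected)
        for component, expected in baseline.items()
        if host_versions.get(component) != expected
    }
```

An empty result means the host matches the bundle; anything else is a candidate for the next maintenance window or a rollback.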

5. Sloppy Storage Layout And RAID Choices

Storage mistakes often begin with haste, then linger for years as performance complaints and recovery risks. NVMe drives offer great speed, yet placement, queue depth settings, and firmware versions still matter a lot.

Mixing consumer and data center media invites uneven wear, sudden throttling, and surprise failures under heavy writes. RAID levels should match workloads: mirrors for latency and rebuild speed, parity for capacity and sequential reads.

Document spare policies and validate SMART alerts so replacement happens before performance craters. Reliable storage comes from deliberate layouts, not quick wizards clicked during a long night.
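To make the mirror-versus-parity trade-off concrete, the sketch below compares usable capacity and guaranteed drive-failure tolerance for a few common RAID levels. It assumes equal-sized drives and ignores hot spares, controller overhead, and filesystem formatting; the function name is illustrative.

```python
def raid_summary(drive_count, drive_tb, level):
    """Return (usable_tb, failures_tolerated) for equal-sized drives.

    failures_tolerated is the guaranteed minimum; RAID 10 may survive more
    failures if they land in different mirror pairs.
    """
    if level == "raid10":
        if drive_count < 4 or drive_count % 2:
            raise ValueError("RAID 10 needs an even number of drives, at least 4")
        return (drive_count // 2) * drive_tb, 1
    if level == "raid5":
        if drive_count < 3:
            raise ValueError("RAID 5 needs at least 3 drives")
        return (drive_count - 1) * drive_tb, 1
    if level == "raid6":
        if drive_count < 4:
            raise ValueError("RAID 6 needs at least 4 drives")
        return (drive_count - 2) * drive_tb, 2
    raise ValueError(f"unsupported level: {level}")
```

For eight 4 TB drives, this gives 16 TB for RAID 10, 28 TB for RAID 5, and 24 TB for RAID 6, which frames the capacity cost of choosing mirrors for latency and rebuild speed.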

6. Ignoring Security Hardening From Day One

Security is easiest when it is built in early, before habits form and exceptions pile up. Intel server platforms expose powerful management features that attackers love, especially when default credentials remain.

Treat out-of-band networks as sensitive, and isolate them with strict rules, monitored logs, and dedicated VLANs. Patch cycles should include firmware for BMCs and NICs, since those components run code with deep privileges.

Least privilege and simple role names help new teammates avoid risky shortcuts during on-call shifts. When security is routine and well documented, teams move faster because they trust the platform underneath their work.

- Change all defaults; enforce MFA for console and BMC access.

- Isolate management networks; deny internet egress by default.

- Enable Secure Boot and measured boot where supported.

- Rotate credentials and certificates on a schedule, with audit logs.

- Scan and patch firmware, not only the operating system.
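Scheduled rotation is easier to enforce when something reports what is due. The sketch below assumes you already have a mapping of credential or certificate names to expiry dates from your own inventory; the names and the 30-day window are hypothetical.

```python
from datetime import date, timedelta


def due_for_rotation(expiries, today, window_days=30):
    """Return names whose expiry falls on or before today + window_days.

    expiries: mapping of item name to datetime.date expiry.
    Already-expired items are included, since they are overdue.
    """
    cutoff = today + timedelta(days=window_days)
    return sorted(name for name, expiry in expiries.items() if expiry <= cutoff)
```

Feeding the result into a ticket queue or a daily report keeps rotation from depending on someone remembering a calendar entry.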

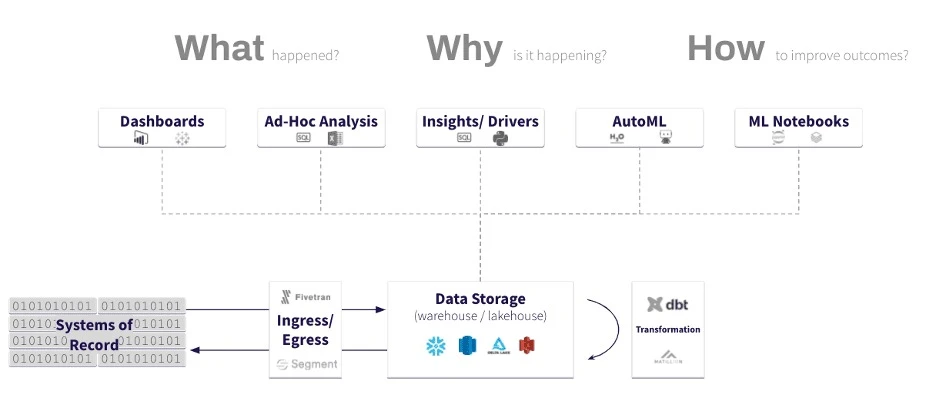

7. Skipping Monitoring, Burn-In, And Disaster Drills

Many deployments go live after a quick ping test, then reveal issues only under Monday morning traffic.

Burn-in testing should push CPU, memory, storage, and network at once to expose weak parts early. Combine synthetic tools with a captured trace of real traffic so patterns match daily usage. Monitoring must track golden signals such as latency, traffic, errors, and saturation with clear, simple dashboards.

Alerts should be few and focused, with runbooks that guide the first responder through checks and safe actions. When teams rehearse bad days, real incidents feel smaller and end faster, protecting both uptime and sleep.
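A burn-in run needs an explicit pass or fail rule, or it becomes a formality. The sketch below is one way to express such a rule using a nearest-rank percentile for latency and a simple error rate; the 50 ms and 0.1% limits are assumptions to replace with your own service targets.

```python
def percentile(values, pct):
    """Nearest-rank percentile for an integer pct in 1..100."""
    if not values or not 1 <= pct <= 100:
        raise ValueError("need at least one value and pct in 1..100")
    ordered = sorted(values)
    # Integer ceiling of pct * n / 100 avoids floating-point rounding surprises.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[rank - 1]


def burn_in_passed(latencies_ms, errors, requests, p99_limit_ms=50, max_error_rate=0.001):
    """Pass only if p99 latency and error rate both stay within the assumed limits."""
    if requests <= 0:
        raise ValueError("requests must be positive")
    p99 = percentile(latencies_ms, 99)
    error_rate = errors / requests
    return p99 <= p99_limit_ms and error_rate <= max_error_rate
```

The same two checks translate directly into a small, focused alert set once the host is in production.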

Conclusion

Avoiding these common mistakes is not about heroics; it is about steady, thoughtful engineering habits.

Start with compatibility and sizing, then ensure cooling, power, and firmware are all aligned and tested. Design storage deliberately, secure everything from the first boot, and build strong monitoring with real burn-in tests.

Each step seems modest alone, yet together they create a server platform that is calm and predictable. Intel servers shine when the surrounding choices support them, allowing cores and memory to serve users reliably.

In the end, boring servers are the best servers, because they let your business move forward with confidence.
