Understanding how deep learning models make decisions can feel like trying to decipher a foreign language—at least at first. Terms like weights, biases, and gradients often steal the spotlight, but there’s another unsung hero shaping the entire learning process: activation functions.

Think of activation functions as gatekeepers inside a neural network. They decide what information should pass through and what shouldn’t. Without them, even the most complex architecture becomes nothing more than a fancy calculator performing linear math.

Whether you're a beginner trying to understand neural networks or a tech-savvy reader looking to deepen your knowledge, this article will walk you through everything you need to know—minus the jargon overload.

Why Activation Functions Matter

Before diving into types, let’s answer a simple question: Why do we even need activation functions?

Neural networks are loosely inspired by the human brain. In your brain, neurons "fire" when triggered by certain signals. Activation functions play a similar role: they determine whether a neuron should fire and how strongly it contributes to the next layer.

Here’s what activation functions help neural networks do:

- Introduce non-linearity, allowing models to learn complex patterns.

- Control how signals flow through the network.

- Enable deeper architectures to extract richer features.

- Affect how quickly a network converges during training.

- Reduce the risk of exploding or vanishing gradients (depending on the function chosen).

Without activation functions, no matter how many layers you stack, the entire deep network collapses into a single linear equation—useless for image recognition, speech processing, or pretty much anything interesting.
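
To see the collapse concretely, here is a minimal sketch in plain Python. The weights, biases, and input are arbitrary values chosen for illustration, and the helper names `linear` and `matmul` are made up for this example. It passes an input through two stacked linear layers, then through one combined layer, and the results match.

```python
# Two stacked linear layers with no activation in between.
# All weights, biases, and inputs are arbitrary illustrative values.

def linear(W, b, x):
    """Compute W @ x + b for a small matrix W and vectors b, x."""
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]

def matmul(A, B):
    """Multiply two small matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

W1, b1 = [[1.0, 2.0], [0.0, -1.0]], [0.5, 1.0]
W2, b2 = [[2.0, 0.0], [1.0, 3.0]], [-1.0, 0.0]
x = [3.0, -2.0]

# Pass x through both layers in sequence.
stacked = linear(W2, b2, linear(W1, b1, x))

# Build the single equivalent layer: W = W2 @ W1, b = W2 @ b1 + b2.
W = matmul(W2, W1)
b = linear(W2, b2, b1)
collapsed = linear(W, b, x)

print(stacked, collapsed)  # both lists hold the same values
```

Putting a non-linear function between the two layers is what breaks this equivalence.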

Linear vs. Non-Linear Activation Functions

To appreciate activation functions, it helps to understand the difference between linear and non-linear behavior.

Linear Functions

A linear activation function outputs something like:

f(x) = ax + b

It’s simple but limited: composing linear functions only ever yields another linear function.

Non-Linear Functions

Non-linear functions allow the network to learn patterns that aren't just straight lines. These functions are what make deep learning deep.

Most modern activation functions fall into the non-linear category because real-world data is rarely linear.

The Most Common Activation Functions in Deep Learning

Below, we break down the most widely used activation functions, how they work, when to use them, and where they shine.

1. Sigmoid Activation Function

The Sigmoid function is one of the earliest activation functions used in neural networks. It looks like an S-shaped curve.

Formula:

f(x) = 1 / (1 + e^-x)

Characteristics

- Squashes values between 0 and 1.

- Useful when the output represents probability.

- Smooth gradient, which helps in optimization.

Where It’s Used

- Binary classification (often in the output layer)

Downsides

- Prone to vanishing gradients.

- Slow learning when inputs are large in magnitude (strongly positive or negative), because the curve flattens and the gradient approaches zero.

Real-world analogy:

It’s like a dimmer switch that gradually transitions between off and on.
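
The sigmoid formula and its gradient can be sketched in a few lines of plain Python. The function names are chosen for this example, and the code is not hardened against overflow for extremely negative inputs.

```python
import math

def sigmoid(x):
    """Logistic sigmoid: 1 / (1 + e^-x)."""
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_grad(x):
    """Derivative of the sigmoid: s(x) * (1 - s(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)

# The gradient peaks at x = 0 and shrinks toward zero at both extremes.
for x in (-10.0, 0.0, 10.0):
    print(f"x={x:6.1f}  sigmoid={sigmoid(x):.5f}  gradient={sigmoid_grad(x):.5f}")
```

The gradient never exceeds 0.25, and it is nearly zero at the extremes, which is where the vanishing gradient problem comes from.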

2. Tanh (Hyperbolic Tangent) Function

Tanh looks similar to sigmoid but ranges from -1 to 1, making it zero-centered.

Formula:

f(x) = (e^x - e^-x) / (e^x + e^-x)

Benefits

- Often faster learning than sigmoid.

- Values centered around zero, improving gradient flow.

Limitations

- Can still suffer from vanishing gradients.

Common Applications

- Hidden layers of feedforward neural networks.
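
As a rough illustration, Python's standard library already provides `math.tanh`, so only the gradient needs writing out. The helper name `tanh_grad` is chosen for this sketch.

```python
import math

def tanh_grad(x):
    """Derivative of tanh: 1 - tanh(x)^2."""
    return 1.0 - math.tanh(x) ** 2

# Outputs are centered on zero; the gradient peaks at 1.0 when x = 0.
for x in (-5.0, 0.0, 5.0):
    print(f"x={x:5.1f}  tanh={math.tanh(x):+.5f}  gradient={tanh_grad(x):.5f}")
```

The peak gradient of 1.0 is four times sigmoid's peak of 0.25, which is one reason tanh often trains faster, yet the gradient still fades for large inputs.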

3. ReLU (Rectified Linear Unit): The Most Popular Choice

If deep learning had a mascot, it would probably be ReLU.

Formula:

f(x) = max(0, x)

Why ReLU Became a Favorite

- Simple and computationally efficient.

- Helps mitigate the vanishing gradient problem, since its gradient is exactly 1 for positive inputs.

- Works extremely well for deep architectures.

Downsides

- “Dying ReLU” problem: Once a neuron outputs zero consistently, it may never recover.

Used In

- CNNs, GANs, and most feedforward architectures. Recurrent networks such as LSTMs and GRUs more often rely on tanh and sigmoid.

Think of ReLU as a filter that blocks negative information but allows positive signals to flow freely.
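
A minimal sketch of ReLU and its gradient in plain Python follows. The function names are chosen for this example, and treating the gradient at exactly zero as 0 is a common convention rather than a mathematical necessity.

```python
def relu(x):
    """Rectified linear unit: max(0, x)."""
    return max(0.0, x)

def relu_grad(x):
    """Gradient of ReLU: 1 for positive inputs, 0 otherwise (by convention at 0)."""
    return 1.0 if x > 0 else 0.0

# Negative inputs are blocked; positive inputs pass through unchanged.
for x in (-2.0, 0.0, 3.5):
    print(f"x={x:5.1f}  relu={relu(x):.1f}  gradient={relu_grad(x):.1f}")
```

The zero gradient for negative inputs is what lies behind the dying ReLU problem: a neuron that only ever sees negative inputs receives no updates.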

4. Leaky ReLU

To address the dying ReLU problem, Leaky ReLU allows a small, non-zero gradient when inputs are negative, set by a small fixed slope α (0.01 is a common choice).

Formula:

f(x) = x if x > 0, else αx

Benefits

- Prevents neurons from getting stuck at zero.

- Faster convergence than ReLU in some cases.
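
Here is a small sketch of Leaky ReLU in plain Python. The default `alpha=0.01` is an assumed value for illustration; other small slopes are used in practice.

```python
def leaky_relu(x, alpha=0.01):
    """Leaky ReLU: x for positive inputs, alpha * x otherwise."""
    return x if x > 0 else alpha * x

def leaky_relu_grad(x, alpha=0.01):
    """Gradient of Leaky ReLU: 1 for positive inputs, alpha otherwise."""
    return 1.0 if x > 0 else alpha

# Negative inputs are scaled down rather than zeroed, so the gradient never vanishes.
for x in (-3.0, 3.0):
    print(f"x={x:5.1f}  leaky_relu={leaky_relu(x):+.3f}  gradient={leaky_relu_grad(x):.2f}")
```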

5. Parametric ReLU (PReLU)

A more flexible version of Leaky ReLU where α is learned during training.

Advantages

- Learns optimal negative slope automatically.

- Can outperform ReLU in specific architectures.
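
To make "learned during training" concrete, the toy sketch below updates α by gradient descent on a single made-up example. The starting slope, learning rate, input, and target are all arbitrary assumptions; a real network would learn α alongside its weights over many examples.

```python
def prelu(x, alpha):
    """PReLU: x for positive inputs, alpha * x otherwise."""
    return x if x > 0 else alpha * x

def prelu_grad_alpha(x):
    """Derivative of PReLU with respect to alpha: x for non-positive inputs, else 0."""
    return x if x <= 0 else 0.0

alpha = 0.25            # arbitrary starting slope
learning_rate = 0.1     # arbitrary step size
x, target = -2.0, -1.0  # a slope of 0.5 maps this input exactly onto the target

for _ in range(50):
    error = prelu(x, alpha) - target
    # Gradient of the squared error (error ** 2) with respect to alpha.
    alpha -= learning_rate * 2 * error * prelu_grad_alpha(x)

print(round(alpha, 3))
```

The slope moves from its starting value toward 0.5, the value that fits this one example.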

6. ELU (Exponential Linear Unit)

ELU smooths the negative side instead of using a straight line.

Why Use ELU?

- Saturates for large negative inputs, which can make it more robust to noise.

- Can produce faster and more accurate learning than ReLU on some tasks.

Drawback

- Slightly more computational cost than ReLU.
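
The standard ELU definition is f(x) = x if x > 0, else α(e^x - 1). A minimal sketch in plain Python, assuming α = 1.0 for illustration:

```python
import math

def elu(x, alpha=1.0):
    """ELU: x for positive inputs, alpha * (e^x - 1) otherwise."""
    # math.expm1(x) computes e^x - 1 accurately for small x.
    return x if x > 0 else alpha * math.expm1(x)

# Negative inputs curve smoothly toward -alpha instead of following a straight line.
for x in (-100.0, -1.0, 0.0, 2.0):
    print(f"x={x:7.1f}  elu={elu(x):+.5f}")
```

The exponential on the negative side is the source of the extra computational cost compared with ReLU.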

7. Softmax Activation Function

Softmax is widely used in multi-class classification problems.

It converts raw output values (logits) into probabilities that add up to 1.

Key Benefits

- Helps models pick the most likely class.

- Easy to interpret output as probability distribution.

Best Used In

- Output layer of classification models with more than two classes.
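
As a sketch of how logits become probabilities, here is softmax in plain Python. The example logits are made up, and subtracting the maximum logit before exponentiating is a common numerical-stability trick that does not change the result.

```python
import math

def softmax(logits):
    """Convert a list of raw scores into probabilities that sum to 1."""
    m = max(logits)  # shift by the max so exp() cannot overflow
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

probs = softmax([2.0, 1.0, 0.1])  # made-up logits for three classes
predicted_class = probs.index(max(probs))

print([round(p, 3) for p in probs], "predicted class:", predicted_class)
```

The largest logit always receives the largest probability, so picking the most likely class is just a matter of taking the index of the maximum.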

8. Swish (Developed by Google)

A newer activation function defined as:

f(x) = x * sigmoid(x)

Why Experts Love Swish

- Smooth, non-monotonic.

- Reported to match or outperform ReLU on some very deep networks.

- Helps models generalize better.
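
The formula translates directly into plain Python. The sketch below also shows what "non-monotonic" means in practice: for negative inputs the output dips below zero and then climbs back toward it.

```python
import math

def sigmoid(x):
    """Logistic sigmoid: 1 / (1 + e^-x)."""
    return 1.0 / (1.0 + math.exp(-x))

def swish(x):
    """Swish: x * sigmoid(x)."""
    return x * sigmoid(x)

# Moving left from 0, the output first falls (x = -1) and then rises again (x = -5).
for x in (-5.0, -1.0, 0.0, 1.0, 10.0):
    print(f"x={x:5.1f}  swish={swish(x):+.5f}")
```

For large positive inputs, Swish behaves almost exactly like ReLU.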

How Activation Functions Impact Training

Choosing the wrong activation function can slow down training or completely block learning. Here are key areas affected:

1. Gradient Flow

Activation functions influence how easily gradients pass through layers.

- Sigmoid/tanh → prone to vanishing gradients

- ReLU/Leaky ReLU → maintain stronger gradients

- ELU/Swish → smooth and stable gradients
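
A rough way to picture gradient flow: during backpropagation, the gradient is multiplied by one activation derivative per layer. The sketch below is a deliberate simplification that ignores the weights entirely and assumes a made-up depth of 20 layers. It compares sigmoid in its best case with ReLU on a positive input.

```python
import math

def sigmoid_grad(x):
    """Derivative of the sigmoid: s(x) * (1 - s(x))."""
    s = 1.0 / (1.0 + math.exp(-x))
    return s * (1.0 - s)

def relu_grad(x):
    """Gradient of ReLU: 1 for positive inputs, 0 otherwise."""
    return 1.0 if x > 0 else 0.0

depth = 20  # hypothetical number of layers

# Best case for sigmoid: every layer sits at x = 0, where its gradient peaks at 0.25.
sigmoid_chain = sigmoid_grad(0.0) ** depth

# ReLU with a positive input at every layer passes the gradient through unchanged.
relu_chain = relu_grad(1.0) ** depth

print(f"sigmoid: {sigmoid_chain:.2e}  relu: {relu_chain:.1f}")
```

Even in its best case, the sigmoid chain shrinks to a tiny fraction of the original signal, while the ReLU chain keeps it intact.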

2. Learning Speed

Functions like ReLU and Swish often help models learn faster than saturating functions such as sigmoid.

3. Model Accuracy

Some functions allow deeper feature extraction, leading to higher accuracy.

4. Stability

Poor choices may cause exploding gradients or dead neurons.

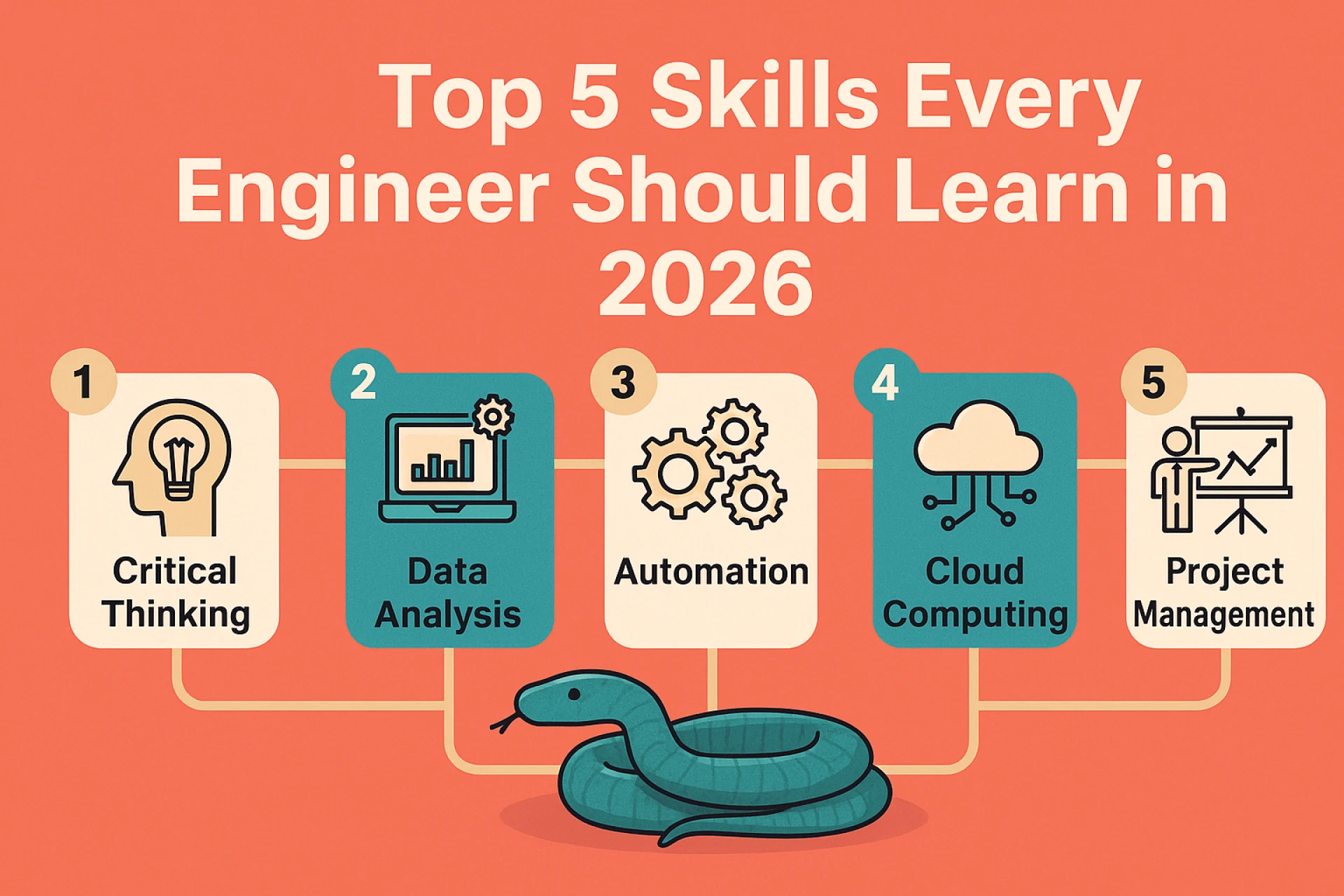

Comparing Activation Functions: When Should You Use What?

Here’s a simple guide to help you make better choices:

Use ReLU When:

- You want fast, efficient training.

- You’re working with deep neural networks.

Use Sigmoid When:

- Your output represents probability (binary classification).

Use Softmax When:

- You’re predicting multiple classes.

Use Tanh When:

- You need zero-centered outputs in hidden layers.

Use Leaky ReLU or PReLU When:

- You want to avoid dead neurons.

Use ELU or Swish When:

- You’re training very deep models that need smoother gradients.

Practical Example: Choosing an Activation Function

Imagine you’re building a neural network for recognizing handwritten digits (0–9). Here's a simple guideline:

- Hidden layers: ReLU or Swish

- Output layer: Softmax (10 classes)

If you're building a model to classify emails as spam or not spam:

- Hidden layers: ReLU

- Output layer: Sigmoid

Picking the right activation function is like choosing the right tool for the job—each one has strengths depending on the task.
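
To tie the spam example together, here is a minimal forward pass in plain Python with a ReLU hidden layer and a sigmoid output. Everything specific in it is hypothetical: the three input features, the layer sizes, and the hand-picked weights stand in for values a real model would learn.

```python
import math

def relu(x):
    return max(0.0, x)

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def dense(W, b, x):
    """Compute W @ x + b for a small matrix W and vectors b, x."""
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]

# Hypothetical email features and hand-picked (not trained) parameters.
features = [1.0, 0.0, 2.0]
W_hidden = [[0.5, -1.0, 0.25], [-1.0, 0.5, -0.5]]
b_hidden = [0.0, 0.5]
W_out = [[2.0, -1.0]]
b_out = [-1.0]

# Hidden layer: linear step followed by ReLU.
hidden = [relu(z) for z in dense(W_hidden, b_hidden, features)]

# Output layer: a single logit squashed by sigmoid into a spam probability.
logit = dense(W_out, b_out, hidden)[0]
spam_probability = sigmoid(logit)

print(hidden, round(spam_probability, 3))
```

For the digit example, the same structure applies with ten output logits passed through softmax instead of a single logit passed through sigmoid.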

How Activation Functions Shape Real-World Deep Learning

Activation functions may seem like small mathematical components, but they influence some of the most powerful AI systems today.

In Self-Driving Cars

Deep networks with ReLU/ELU help identify objects—pedestrians, traffic signs, lanes—within milliseconds.

In Voice Assistants

Activation functions help speech models understand tone, emotion, and intent.

In Healthcare

Softmax output layers let medical image classifiers report a probability for each candidate diagnosis.

In Recommendation Systems

Functions like Swish help models analyze user behavior and make predictions seamlessly.

In short, activation functions are everywhere—even if they’re working behind the scenes.

Future Trends: Are Activation Functions Evolving?

Yes, rapidly.

Recent research explores advanced functions like Mish or innovations based on learnable parameters. As networks get deeper and more complex, activation functions continue evolving to keep up with growing demands.

Future deep learning models may use adaptive activations that adjust themselves based on the data—making AI systems more accurate and efficient than ever.

Conclusion: Activation Functions Are the Heart of Deep Learning

Activation functions may look like small mathematical formulas, but they determine how neural networks learn, how fast they converge, and how accurately they perform. Without them, advanced AI applications—from facial recognition to medical diagnosis—simply wouldn’t exist.

Whether you're building models or simply expanding your understanding of deep learning, knowing how activation functions work gives you a powerful advantage. They influence everything from model performance to training stability, and mastering them opens the door to designing smarter and more efficient AI systems.

So next time you hear terms like ReLU, Softmax, or Swish, you’ll know you’re looking at the very mechanisms that bring neural networks to life.
