Modern enterprises generate data at unprecedented scales, creating both opportunities and challenges for IT infrastructure teams. While Big Data promises transformative insights, organizations struggle to effectively store, manage, and analyze massive datasets that often exceed traditional storage capabilities. Data lakes have emerged as a popular solution for handling diverse, unstructured data at scale, yet many enterprises find themselves grappling with performance bottlenecks and integration complexities when connecting these repositories to their existing storage infrastructure.

Storage Area Network (SAN) solutions are evolving to address these challenges, offering enterprise-grade performance, reliability, and scalability that data lakes require. By bridging Big Data architectures with proven storage technologies, organizations can unlock the full potential of their data assets while maintaining the security, governance, and performance standards that mission-critical operations demand.

This convergence represents a fundamental shift in how enterprises approach data storage strategy, moving beyond siloed solutions toward integrated architectures that support both operational workloads and analytical processing at scale.

Understanding Data Lakes: Architecture and Implementation Challenges

Data lakes serve as centralized repositories that store raw data in its native format, supporting structured, semi-structured, and unstructured data types without requiring predefined schemas. Unlike traditional data warehouses, which impose rigid structures during data ingestion, data lakes enable organizations to store vast quantities of information and apply structure only when analysis is required.
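To make "apply structure only when analysis is required" concrete, here is a minimal schema-on-read sketch. The record shapes and the `read_temperatures` helper are hypothetical, chosen only for illustration: raw JSON lines are stored untouched, and a schema is imposed by the reader rather than at ingestion.

```python
import json

# Raw events land in the lake as-is; the records do not share one shape.
# (Hypothetical sample data, for illustration only.)
RAW_EVENTS = [
    '{"device": "pump-7", "temp_c": 71.5, "ts": "2024-01-01T00:00:00Z"}',
    '{"device": "pump-9", "temp_c": "68.0"}',
    '{"user": "alice", "action": "login"}',
]

def read_temperatures(raw_lines):
    """Apply a schema at read time: keep records that have device and temp_c."""
    rows = []
    for line in raw_lines:
        record = json.loads(line)
        if "device" in record and "temp_c" in record:
            # Type coercion happens here, at analysis time, not at ingestion.
            rows.append((record["device"], float(record["temp_c"])))
    return rows

print(read_temperatures(RAW_EVENTS))
```

A different analysis could read the same raw lines with a different schema, for example keeping only the login events, without any change to what is stored.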

The architecture typically consists of three primary layers: ingestion, storage, and processing. The ingestion layer handles data collection from various sources including IoT sensors, application logs, transactional systems, and external APIs. The storage layer maintains raw data files using distributed file systems such as Hadoop Distributed File System (HDFS) or cloud-based object storage. The processing layer enables data transformation, analytics, and machine learning workloads through frameworks like Apache Spark, Flink, or cloud-native services.

However, data lakes present significant operational challenges. Storage performance can degrade as datasets grow, particularly when handling high-velocity data ingestion or complex analytical queries. Data governance becomes increasingly difficult without proper metadata management and lineage tracking. Security concerns arise from storing sensitive information alongside less critical data, requiring granular access controls and encryption capabilities.

Many organizations also encounter the "data swamp" problem, where poorly managed data lakes become repositories of low-quality, ungovernable data that provides limited analytical value. These challenges underscore the need for enterprise-grade storage infrastructure that can support data lake architectures while maintaining performance, security, and management capabilities.

The Convergence: SAN Solutions Meet Big Data Demands

Traditional SAN architectures are evolving to support Big Data workloads through enhanced scalability, performance optimization, and integration capabilities. Modern SAN solutions incorporate distributed storage technologies, high-speed networking protocols, and advanced data management features specifically designed for analytics-heavy environments.

High-performance SANs now support parallel processing architectures in which multiple nodes access shared storage simultaneously, reducing the bottlenecks associated with sequential data access. Advanced caching mechanisms and tiered storage strategies optimize the placement of frequently accessed data while keeping long-term retention cost-effective.

Network fabric improvements, including 32 Gb/s and 128 Gb/s Fibre Channel (32GFC and 128GFC), provide the bandwidth necessary for high-throughput data analytics workloads. NVMe over Fabrics (NVMe-oF) protocols further reduce latency and increase achievable IOPS, enabling real-time analytics processing that was previously constrained by storage performance limitations.
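A rough back-of-the-envelope calculation shows what these link speeds mean for a full dataset scan. The sketch below assumes the commonly quoted nominal per-direction throughput for 32GFC and 128GFC (3,200 and 12,800 MB/s) and a hypothetical 80% link efficiency. Real throughput depends on the array, the host bus adapters, and the workload, so treat the output as an upper-bound estimate rather than a benchmark.

```python
# Nominal per-direction throughput in MB/s, as commonly quoted for
# Gen 6 Fibre Channel (assumed figures; measured throughput will be lower).
NOMINAL_MB_PER_S = {"32GFC": 3200, "128GFC": 12800}

def scan_seconds(dataset_tb, link, efficiency=0.8):
    """Estimate seconds to read dataset_tb terabytes over a single link.

    efficiency is a hypothetical fudge factor for protocol and array overhead.
    """
    dataset_mb = dataset_tb * 1_000_000  # decimal units: 1 TB = 1,000,000 MB
    return dataset_mb / (NOMINAL_MB_PER_S[link] * efficiency)

for link in NOMINAL_MB_PER_S:
    minutes = scan_seconds(10, link) / 60
    print(f"{link}: about {minutes:.1f} minutes to scan 10 TB")
```

Under these assumptions, a 10 TB scan takes roughly an hour over a single 32GFC link and roughly a quarter of that over 128GFC, which is why analytics clusters usually spread reads across several paths in parallel.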

SAN vendors are also integrating cloud-native features, including REST APIs, container orchestration support, and hybrid cloud connectivity. These capabilities enable seamless integration with modern data lake platforms while maintaining the reliability and performance characteristics that enterprise applications require.

Software-defined storage layers abstract physical hardware complexities, providing policy-driven automation for data placement, replication, and lifecycle management. This abstraction enables IT teams to optimize storage resources dynamically based on workload requirements and business priorities.

Real-World Use Cases: SAN-Enabled Data Lake Success Stories

Financial services organizations leverage SAN-backed data lakes for regulatory reporting and risk analytics. High-frequency trading firms require microsecond-level latency for market data analysis and must also retain historical data for compliance purposes. SAN solutions provide the performance necessary for real-time processing while ensuring the data durability and availability that audits require.

Healthcare institutions use integrated SAN and data lake architectures for medical imaging analysis and patient outcome research. Large-scale genomics projects generate terabytes of sequence data that require both high-performance access for computational analysis and long-term retention for longitudinal studies. SAN solutions enable rapid data processing while supporting the security controls that HIPAA compliance requires.

Manufacturing companies implement predictive maintenance programs using IoT sensor data stored in SAN-backed data lakes. Production line sensors generate continuous data streams that require immediate analysis for anomaly detection while historical data supports machine learning model training. The combination of high-performance SAN storage with distributed analytics frameworks enables both real-time monitoring and batch processing workloads.

Telecommunications providers analyze network performance data and customer usage patterns using integrated storage architectures. Call detail records, network logs, and subscriber behavior data require different access patterns and retention policies. SAN solutions provide the flexibility to optimize storage tiers based on data age and access frequency while maintaining consistent performance for analytics workloads.

Best Practices: Implementing SAN Solutions in Big Data Environments

Performance optimization requires careful consideration of data placement strategies and workload characteristics. Implement tiered storage policies that automatically migrate data based on access patterns and age. Place frequently accessed datasets on high-performance storage tiers while archiving historical data to cost-effective capacity storage. Configure parallel access paths so that storage does not become the bottleneck during peak analytical workloads.
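A tiering policy of this kind can be expressed as a small decision function. The sketch below is illustrative only: the tier names, the `Dataset` fields, and the thresholds (`hot_reads`, `archive_after_days`) are assumptions, and a real SAN would apply such rules through its own policy engine.

```python
from dataclasses import dataclass

@dataclass
class Dataset:
    name: str
    days_since_last_access: int
    reads_last_30_days: int

def choose_tier(ds, hot_reads=100, archive_after_days=180):
    """Pick a storage tier from access recency and frequency.

    Thresholds are hypothetical defaults; tune them per workload.
    """
    if ds.days_since_last_access >= archive_after_days:
        return "archive"        # untouched for a long time: cheapest tier
    if ds.reads_last_30_days >= hot_reads:
        return "performance"    # frequently read: fastest tier
    return "capacity"           # everything else: mid-cost tier

datasets = [
    Dataset("sensor-stream-current", 0, 5000),
    Dataset("quarterly-reports", 12, 8),
    Dataset("logs-2019", 400, 0),
]
for ds in datasets:
    print(ds.name, "->", choose_tier(ds))
```

Checking age before access frequency means a dataset that has not been touched for a long time is archived first; the frequency threshold only matters for data that is still in use.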

Security implementation must address both data-at-rest and data-in-transit protection requirements. Deploy encryption at the storage layer using hardware-accelerated encryption engines to minimize performance impact. Implement granular access controls that support role-based permissions aligned with organizational hierarchies. Establish network segmentation to isolate analytical workloads from production systems while maintaining necessary connectivity for data ingestion.
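Role-based access control reduces to a lookup from roles to permitted actions. The following sketch uses hypothetical role names, zone names (`raw`, `curated`), and a `zone:action` permission format. It shows the shape of the check, not any particular product's access model.

```python
# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "data_engineer": {"raw:read", "raw:write", "curated:write"},
    "analyst": {"curated:read"},
    "auditor": {"raw:read", "curated:read"},
}

def is_allowed(roles, zone, action):
    """Return True if any of the user's roles grants zone:action."""
    needed = f"{zone}:{action}"
    # Unknown roles map to an empty permission set, so access is denied by default.
    return any(needed in ROLE_PERMISSIONS.get(role, set()) for role in roles)

print(is_allowed(["analyst"], "curated", "read"))  # analysts may read curated data
print(is_allowed(["analyst"], "raw", "read"))      # but not the raw zone
```

Denying by default keeps sensitive raw data closed to any role that has not been granted access explicitly.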

Data governance frameworks require integration between SAN management tools and data catalog systems. Implement automated metadata capture during data ingestion processes. Establish data lineage tracking that spans from source systems through storage layers to analytical outputs. Deploy policy engines that enforce retention schedules and compliance requirements automatically.
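As one illustration of automated retention enforcement, the sketch below checks catalog entries against per-class retention periods. The data classes, retention periods, and catalog layout are all hypothetical. A production policy engine would read them from the data catalog, and would typically log or quarantine objects before deleting them.

```python
from datetime import date, timedelta

# Hypothetical retention schedule, in days, keyed by data class.
RETENTION_DAYS = {"network_log": 90, "call_detail_record": 365}

def objects_to_expire(catalog, today):
    """Return names of objects older than their class's retention period.

    catalog is an iterable of (name, data_class, created_date) tuples.
    Objects with no matching policy are never expired automatically.
    """
    expired = []
    for name, data_class, created in catalog:
        keep_days = RETENTION_DAYS.get(data_class)
        if keep_days is None:
            continue  # unclassified data needs a human decision
        if today - created > timedelta(days=keep_days):
            expired.append(name)
    return expired

CATALOG = [
    ("logs/2024-01-01.gz", "network_log", date(2024, 1, 1)),
    ("cdr/2024-01-01.parquet", "call_detail_record", date(2024, 1, 1)),
    ("misc/notes.txt", "unclassified", date(2020, 1, 1)),
]
print(objects_to_expire(CATALOG, date(2024, 6, 1)))
```

The check only works if every object carries a data class and a creation date, which is one practical reason to capture metadata automatically at ingestion.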

Capacity planning should account for both current storage requirements and future growth projections. Implement storage analytics tools that monitor utilization patterns and predict capacity needs. Design storage architectures with horizontal scaling capabilities that support seamless expansion without performance degradation.
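A simple way to predict capacity needs is to fit a straight line to recent utilization and extrapolate. The sketch below assumes evenly spaced monthly samples and linear growth, which is a simplification because real growth is often bursty or compounding. The function name and sample figures are hypothetical.

```python
def months_until_full(used_tb_by_month, capacity_tb):
    """Estimate months until capacity is reached, using a least-squares line.

    Returns None if there are too few samples or usage is not growing.
    """
    n = len(used_tb_by_month)
    if n < 2:
        return None
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(used_tb_by_month) / n
    # Ordinary least-squares slope: growth in TB per month.
    slope = sum((x - mean_x) * (y - mean_y)
                for x, y in zip(xs, used_tb_by_month))
    slope /= sum((x - mean_x) ** 2 for x in xs)
    if slope <= 0:
        return None
    remaining_tb = capacity_tb - used_tb_by_month[-1]
    return max(remaining_tb, 0) / slope

# Hypothetical history: 100 TB growing by 10 TB per month, 200 TB array.
print(months_until_full([100, 110, 120, 130], 200))
```

With the sample history above, the estimate is seven months of headroom. Comparing that figure against procurement lead times gives a simple trigger for starting an expansion.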

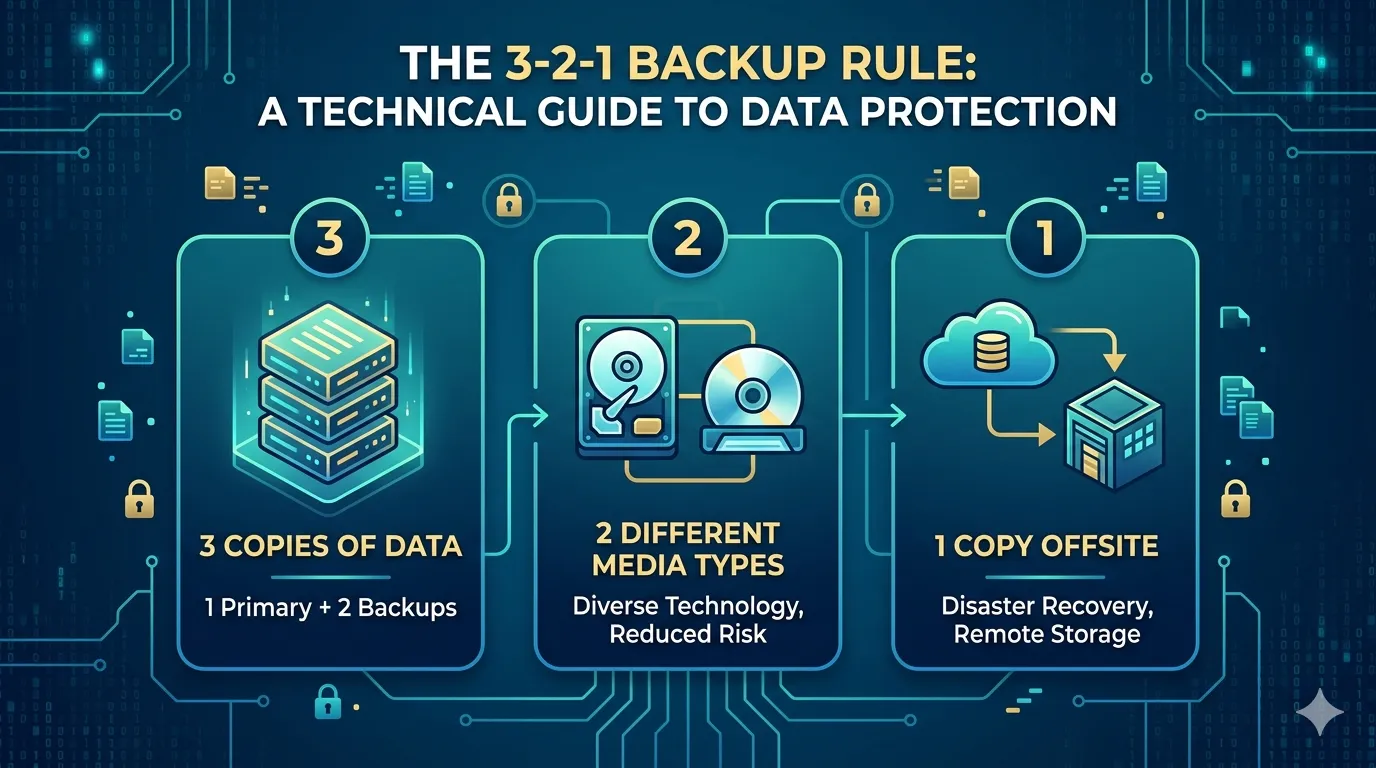

Backup and disaster recovery strategies must address both operational data and analytical datasets. Implement snapshot-based backup systems that provide rapid recovery capabilities for critical analytics workloads. Establish cross-site replication for business-critical data lakes while optimizing bandwidth utilization through deduplication and compression technologies.
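To show why deduplication and compression reduce replication bandwidth, here is a minimal fixed-size-chunk sketch using Python's standard `hashlib` and `zlib` modules. The chunk size and function names are assumptions. Production systems generally use more sophisticated schemes, such as variable-size chunking, so this only illustrates the principle.

```python
import hashlib
import zlib

def dedup_and_compress(data, chunk_size=4096):
    """Split data into fixed-size chunks, store each unique chunk once (compressed).

    Returns (store, recipe): store maps SHA-256 digest -> compressed chunk,
    recipe is the ordered list of digests needed to rebuild the data.
    """
    store = {}
    recipe = []
    for i in range(0, len(data), chunk_size):
        chunk = data[i:i + chunk_size]
        digest = hashlib.sha256(chunk).hexdigest()
        if digest not in store:
            store[digest] = zlib.compress(chunk)  # only new chunks cost space
        recipe.append(digest)
    return store, recipe

def restore(store, recipe):
    """Rebuild the original bytes from the chunk store and recipe."""
    return b"".join(zlib.decompress(store[d]) for d in recipe)

data = b"A" * 4096 * 3 + b"B" * 4096   # three identical chunks plus one other
store, recipe = dedup_and_compress(data)
stored_bytes = sum(len(c) for c in store.values())
print(len(data), "bytes in,", len(store), "unique chunks,", stored_bytes, "bytes stored")
assert restore(store, recipe) == data
```

Only the unique chunks and the recipe need to cross the replication link, so a remote site that already holds a chunk never has to receive it again.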

Maximizing Data Value Through Integrated Storage Architectures

The convergence of data lakes with enterprise SAN solutions represents a strategic approach to Big Data infrastructure that combines the flexibility of modern analytics platforms with the reliability of proven storage technologies. Organizations that successfully bridge these architectures gain competitive advantages through improved data accessibility, enhanced analytical capabilities, and reduced infrastructure complexity.

This integration enables enterprises to extract maximum value from their data assets while maintaining the security, performance, and governance standards that business operations require. As data volumes continue growing and analytical requirements become more sophisticated, the combination of SAN solutions with data lake architectures provides a scalable foundation for data-driven decision making.

Success depends on careful planning, appropriate technology selection, and implementation of best practices that address both current requirements and future scalability needs. Organizations that invest in integrated storage strategies position themselves to capitalize on emerging analytics technologies while protecting their data infrastructure investments.
