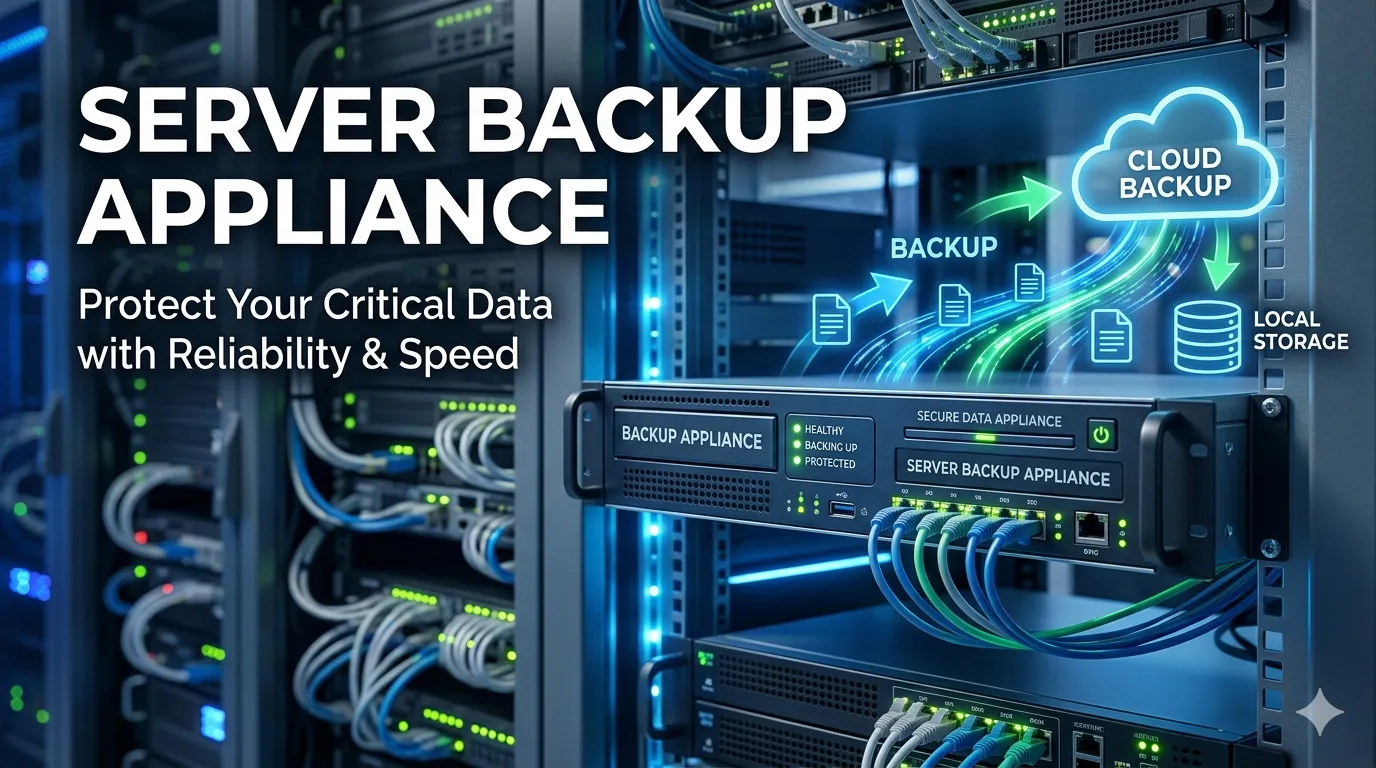

Modern data backup appliances have evolved far beyond simple storage targets. They now function as intelligent, integrated nodes within a broader data protection fabric, responsible for ensuring data integrity, minimizing storage footprints, and orchestrating disaster recovery (DR). For IT architects and storage administrators, understanding the nuanced capabilities of these appliances is critical for reducing Recovery Time Objectives (RTO) and Recovery Point Objectives (RPO).

This guide bypasses the basics to explore the architectural mechanisms that drive high-performance data backup appliances, focusing on storage efficiency, replication logic, and security integration.

Deep Dive into Deduplication Techniques

Efficiency in backup appliances is largely dictated by the sophistication of the deduplication engine. While block-level deduplication is standard, the implementation method significantly impacts performance and reduction ratios.

Variable-Length vs. Fixed-Length

Advanced appliances use variable-length block deduplication rather than fixed-length. In a fixed-length scheme, inserting even a single byte into a file (like adding a sentence to the beginning of a document) shifts the contents of every subsequent block, so each of those blocks produces a new hash and the deduplication benefit is lost. Variable-length segmentation solves this by using algorithms (such as Rabin fingerprints) to place block boundaries based on the data content itself, so the boundaries after the insertion point realign with segments that are already stored. This results in significantly higher storage efficiency for modified datasets.
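The difference is easy to see in a small experiment. The sketch below is a simplified illustration, not a production Rabin fingerprint implementation: it uses a basic polynomial rolling hash, and the window size, chunk limits, and boundary mask are assumptions chosen for readability. It chunks a random buffer both ways, inserts one byte at the front, and counts how many chunk fingerprints survive.

```python
import hashlib
import random

# All constants below are illustrative assumptions, not vendor defaults.
WINDOW = 16           # bytes covered by the rolling hash
MIN_CHUNK = 16        # never cut before the window is full
MAX_CHUNK = 256       # force a cut so chunks cannot grow without bound
MASK = (1 << 6) - 1   # cut when the low 6 bits of the hash are zero
BASE = 257
MOD = (1 << 31) - 1


def fixed_chunks(data, size=64):
    """Split at fixed offsets, regardless of content."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def content_defined_chunks(data):
    """Split where a rolling hash of the last WINDOW bytes matches a pattern."""
    chunks, start, h = [], 0, 0
    top = pow(BASE, WINDOW - 1, MOD)
    for i, byte in enumerate(data):
        if i - start >= WINDOW:
            # Remove the contribution of the byte that just left the window.
            h = (h - data[i - WINDOW] * top) % MOD
        h = (h * BASE + byte) % MOD
        length = i - start + 1
        if (length >= MIN_CHUNK and (h & MASK) == 0) or length >= MAX_CHUNK:
            chunks.append(data[start:i + 1])
            start, h = i + 1, 0
    if start < len(data):
        chunks.append(data[start:])
    return chunks


def shared_chunks(chunker, a, b):
    """Count chunk fingerprints that appear in both inputs."""
    def fingerprints(d):
        return {hashlib.sha256(c).digest() for c in chunker(d)}
    return len(fingerprints(a) & fingerprints(b))


rng = random.Random(0)
original = bytes(rng.getrandbits(8) for _ in range(8192))
modified = b"!" + original  # one byte inserted at the front

fixed_shared = shared_chunks(fixed_chunks, original, modified)
cdc_shared = shared_chunks(content_defined_chunks, original, modified)
print("fixed-length chunks reused:", fixed_shared)
print("content-defined chunks reused:", cdc_shared)
```

Because the boundary decision depends only on the last `WINDOW` bytes, the content-defined chunker falls back into step with the original boundaries shortly after the inserted byte, while the fixed-length chunker never does.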

Inline vs. Post-Process

The choice between inline and post-process deduplication represents a trade-off between ingest speed and storage efficiency.

- Inline Deduplication: Data is analyzed and deduplicated in memory before being written to disk. This minimizes disk I/O and storage consumption immediately but demands substantial CPU and memory resources during the backup window.

- Post-Process Deduplication: Data is written raw to a landing zone and deduplicated later. This allows for faster backups (limited only by disk write speeds) but requires a larger initial storage capacity to hold the unreduced data.
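The capacity side of this trade-off can be sketched in a few lines. The example below is a toy model under stated assumptions: chunks are already cut, the "store" is an in-memory dictionary keyed by SHA-256, and the function names are hypothetical. It tracks how many bytes each approach has to hold at its peak.

```python
import hashlib


def inline_ingest(chunks):
    """Hash each chunk before writing; only unseen chunks reach the store."""
    store, stored_bytes = {}, 0
    for chunk in chunks:
        digest = hashlib.sha256(chunk).digest()  # CPU cost paid during ingest
        if digest not in store:
            store[digest] = chunk
            stored_bytes += len(chunk)
    # Peak usage equals the deduplicated size.
    return store, stored_bytes


def post_process_ingest(chunks):
    """Land everything raw first, then deduplicate in a later pass."""
    landing_zone = list(chunks)                     # fast, no hashing yet
    peak_bytes = sum(len(c) for c in landing_zone)  # raw data must fit here
    store = {}
    for chunk in landing_zone:                      # deferred dedup pass
        store.setdefault(hashlib.sha256(chunk).digest(), chunk)
    return store, peak_bytes


backup = [b"A" * 1024, b"B" * 1024, b"A" * 1024, b"A" * 1024]
inline_store, inline_peak = inline_ingest(backup)
post_store, post_peak = post_process_ingest(backup)
print(inline_peak, post_peak)
```

Both paths end with the same two unique chunks, but the post-process path needs room for all four raw chunks before its reduction pass runs.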

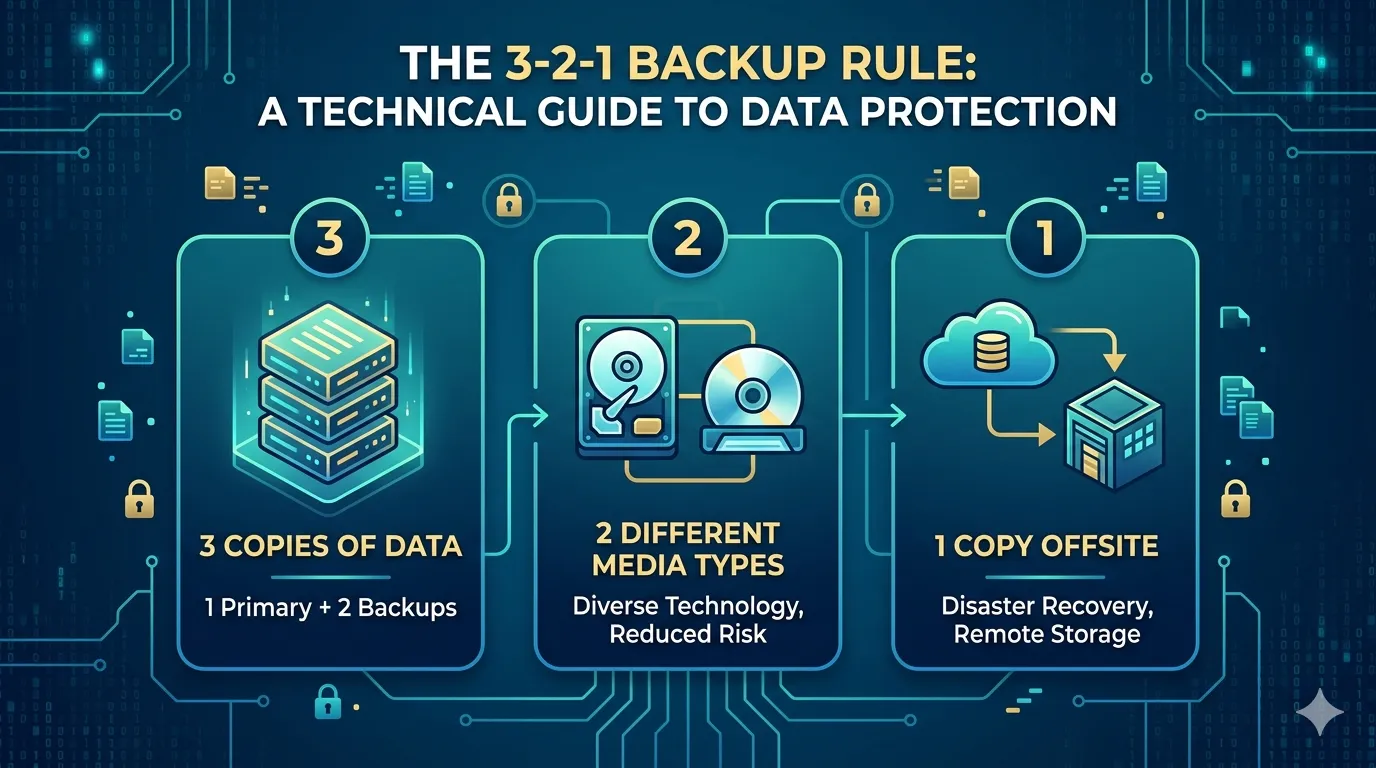

Replication Strategies for Disaster Recovery

Replication is the backbone of disaster recovery, but the synchronization method must align with the network topology and latency constraints.

Synchronous Replication

Synchronous replication writes data to the primary and secondary sites simultaneously. The write is not acknowledged until it is confirmed at both locations. This yields an RPO of zero: no acknowledged write is lost if the primary site fails. However, every write has to wait for a network round trip to the secondary site, so application latency grows with the physical distance between data centers, which typically confines synchronous replication to sites that are relatively close together.
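A back-of-the-envelope calculation shows why distance matters. The sketch below assumes light travels roughly 200 km per millisecond in optical fiber and ignores switching, queuing, and protocol overhead, so it gives a lower bound only. The function names and the sample write times are hypothetical.

```python
FIBER_KM_PER_MS = 200.0  # approximate propagation speed in optical fiber


def min_round_trip_ms(distance_km):
    """Lower bound on network round-trip time over a fiber path."""
    return 2 * distance_km / FIBER_KM_PER_MS


def sync_ack_latency_ms(local_write_ms, remote_write_ms, distance_km):
    """Both writes proceed in parallel; the ack waits for the slower one."""
    remote_path = min_round_trip_ms(distance_km) + remote_write_ms
    return max(local_write_ms, remote_path)


for km in (10, 100, 1000):
    print(km, "km ->", sync_ack_latency_ms(0.5, 0.5, km), "ms per write")
```

Real paths are rarely straight lines and add equipment delay, so measured latency will be higher than these figures.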

Asynchronous Replication

For geographically dispersed DR sites, asynchronous replication is the standard. The write is acknowledged immediately at the primary site, and data is replicated to the secondary site afterward. While this introduces a non-zero RPO (seconds or minutes), it prevents network latency from impacting production performance and tolerates higher packet loss and jitter.
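One way to reason about the resulting RPO is to treat it as the age of the oldest write that has not yet reached the secondary site. The class below is a minimal in-memory sketch of that idea; `AsyncReplicator` and its methods are hypothetical names, and timestamps are plain numbers in seconds.

```python
from collections import deque


class AsyncReplicator:
    """Toy model: acknowledge locally, ship to the replica later."""

    def __init__(self):
        self.pending = deque()  # (timestamp, payload) not yet replicated
        self.replica = []       # payloads confirmed at the secondary site

    def write(self, now, payload):
        self.pending.append((now, payload))
        return "ack"            # acknowledged without waiting for the WAN

    def drain(self, max_items):
        """Ship up to max_items queued writes, oldest first."""
        for _ in range(min(max_items, len(self.pending))):
            _, payload = self.pending.popleft()
            self.replica.append(payload)

    def rpo_exposure(self, now):
        """Seconds of data that would be lost if the primary failed now."""
        if not self.pending:
            return 0.0
        return now - self.pending[0][0]


rep = AsyncReplicator()
for t in (0.0, 1.0, 2.0):
    rep.write(t, f"block-{t}")
rep.drain(1)
print(rep.rpo_exposure(5.0))
```

If the link cannot drain the queue as fast as writes arrive, the exposure keeps growing, which is why replication bandwidth has to be sized against the change rate.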

Snapshot Technology Architectures

Snapshots provide near-instantaneous recovery points, but the underlying mechanics, specifically Copy-on-Write (CoW) versus Redirect-on-Write (RoW), affect system performance.

Copy-on-Write (CoW)

In CoW snapshots, when a block of data is modified, the original block is read and written to a snapshot location before the new data overwrites the original address. This incurs a "read-modify-write" penalty: the first write to each block after a snapshot becomes three I/O operations (one read and two writes), which can degrade performance on write-heavy workloads.

Redirect-on-Write (RoW)

RoW optimizes this by redirecting new writes to a new location on the disk, leaving the original block as the snapshot. This eliminates the read-modify-write penalty, making RoW superior for performance-intensive environments. However, it can lead to fragmentation over time, requiring intelligent background defragmentation processes.
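The I/O accounting for the two approaches can be written down directly. This is a simplified counting model, assuming one snapshot, ignoring metadata updates, and using hypothetical function names. It only counts data-block reads and writes.

```python
def cow_io_count(written_blocks):
    """Copy-on-Write: first write to a block costs read + copy + write."""
    preserved = set()
    io = 0
    for block in written_blocks:
        if block not in preserved:
            io += 2          # read the original, write it to the snapshot area
            preserved.add(block)
        io += 1              # overwrite the original address with new data
    return io


def row_io_count(written_blocks):
    """Redirect-on-Write: each write lands in a new location, one I/O each."""
    return len(written_blocks)


workload = [1, 2, 1, 3]      # block 1 is written twice after the snapshot
print("CoW I/Os:", cow_io_count(workload))
print("RoW I/Os:", row_io_count(workload))
```

The second write to block 1 costs CoW only a single I/O, since the original was already preserved, so the penalty is concentrated on blocks touched for the first time after each snapshot.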

Integration with Cloud Services

The modern appliance effectively acts as a gateway to cloud storage, enabling hybrid cloud architectures. Advanced integration goes beyond simple file dumping; it involves tiering and VTL (Virtual Tape Library) interfaces.

Appliances can automate the movement of aged data to colder cloud tiers (such as Amazon S3 Glacier or the Azure Blob Storage archive tier) based on retention policies. This reduces the on-premises footprint while the appliance keeps a catalog of the offsite data. Furthermore, "cloud-native" appliances allow for disaster recovery within the cloud itself, spinning up virtual instances of the backup appliance to restore data directly to cloud compute resources.
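An age-based tiering policy of this kind might look like the sketch below. The tier names and the 30-day and 365-day thresholds are assumptions made for illustration; actual appliances expose their own policy settings.

```python
from datetime import date


def select_tier(backup_date, today, warm_days=30, archive_days=365):
    """Pick a storage tier from the age of a backup (hypothetical policy)."""
    age_days = (today - backup_date).days
    if age_days < warm_days:
        return "on-premises"     # recent restores stay local and fast
    if age_days < archive_days:
        return "cloud-cool"      # infrequent access, moderate retrieval time
    return "cloud-archive"       # long-term retention, slow retrieval


today = date(2024, 6, 1)
for taken in (date(2024, 5, 20), date(2024, 1, 1), date(2022, 1, 1)):
    print(taken, "->", select_tier(taken, today))
```

Archive tiers usually trade lower storage cost for slower and more expensive retrieval, so the thresholds should follow how far back restores are realistically requested.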

Performance Optimization

Optimizing appliance throughput requires tuning both the network and the storage subsystems.

- Network Configuration: Implement LACP (Link Aggregation Control Protocol, IEEE 802.3ad) or, where the switch cannot participate, an adaptive load-balancing bonding mode (such as balance-alb in Linux bonding) to aggregate bandwidth across multiple interfaces. Furthermore, enabling Jumbo Frames (MTU 9000) reduces CPU overhead by decreasing the number of frames the system must process for large data transfers, provided every device in the path is configured for the same MTU.

- Storage Tiering: Ensure the appliance utilizes flash storage for metadata databases and ingest landing zones. Separating the metadata (deduplication hash tables) from the data store ensures that lookups do not contend with write operations for IOPS.
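The effect of Jumbo Frames on frame count is simple arithmetic. The sketch below assumes IPv4 and TCP headers of 20 bytes each with no options, so each frame carries MTU minus 40 bytes of payload; the function name is hypothetical.

```python
import math

IP_TCP_HEADER_BYTES = 40  # 20-byte IPv4 header + 20-byte TCP header, no options


def frames_needed(transfer_bytes, mtu):
    """Number of frames required to carry a transfer at a given MTU."""
    payload_per_frame = mtu - IP_TCP_HEADER_BYTES
    return math.ceil(transfer_bytes / payload_per_frame)


one_gib = 1 << 30
standard = frames_needed(one_gib, 1500)
jumbo = frames_needed(one_gib, 9000)
print("MTU 1500:", standard, "frames")
print("MTU 9000:", jumbo, "frames")
print("reduction factor:", round(standard / jumbo, 2))
```

Fewer frames means fewer per-packet operations for the network stack, which is where the CPU saving comes from on sustained backup streams.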

Security Considerations

Because backup repositories are primary targets of ransomware attacks, security hardening is non-negotiable.

Encryption and Access Control

Data must be encrypted at rest (using AES-256) and in flight. Key management should be externalized where possible to prevent appliance compromise from exposing encryption keys. Regarding access, Role-Based Access Control (RBAC) and Multi-Factor Authentication (MFA) are mandatory to prevent unauthorized administrative access.

Compliance and Immutability

To meet compliance standards like HIPAA or GDPR, appliances should support WORM (Write Once, Read Many) or Object Locking capabilities. This ensures that backup data cannot be modified or deleted for a set retention period, so that ransomware which reaches the appliance cannot encrypt or remove existing recovery points.
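The retention-lock behavior can be modeled in a few lines. The class below is a conceptual sketch only, with hypothetical names; it is not any vendor's object-lock API. It shows the two rules that matter: an object is written once, and it cannot be deleted until its retention period has passed.

```python
from datetime import datetime, timedelta


class RetentionLockError(Exception):
    """Raised when a write or delete would violate the retention lock."""


class WormStore:
    """Toy write-once store with a per-object retain-until timestamp."""

    def __init__(self):
        self._objects = {}  # key -> (data, retain_until)

    def put(self, key, data, now, retention):
        if key in self._objects:
            raise RetentionLockError(f"{key} already exists; overwrite refused")
        self._objects[key] = (data, now + retention)

    def get(self, key):
        return self._objects[key][0]

    def delete(self, key, now):
        _, retain_until = self._objects[key]
        if now < retain_until:
            raise RetentionLockError(f"{key} is locked until {retain_until}")
        del self._objects[key]


store = WormStore()
t0 = datetime(2024, 1, 1)
store.put("backup-001", b"payload", now=t0, retention=timedelta(days=30))

try:
    store.delete("backup-001", now=t0 + timedelta(days=5))
except RetentionLockError as err:
    print("delete refused:", err)
```

In a real deployment the lock has to be enforced below the administrative interface, otherwise stolen administrator credentials could simply switch it off.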

Architecting for Resilience

Deploying a data backup appliance requires more than capacity planning; it demands a deep understanding of data flow, latency, and write mechanics. By leveraging variable-length deduplication, Redirect-on-Write snapshots, and immutable storage architectures, IT professionals can construct a data protection strategy that is resilient, compliant, and highly efficient.
