For enterprise architects and senior systems administrators, the initial deployment of HYCU Backup is merely the foundational step in a comprehensive data protection strategy. While the platform is renowned for its simplicity and purpose-built architecture, its true potential lies in its advanced configuration capabilities.

Optimizing HYCU Backup requires moving beyond standard policy assignment to understanding the underlying mechanics of data transfer, application consistency, and cross-platform integration. This article examines advanced methods for getting the most out of HYCU Backup in complex, high-demand IT environments.

Leveraging Granular Control and Impact-Free Backups

Experienced administrators understand that standard crash-consistent backups are insufficient for high-transaction workloads. HYCU excels in its agentless, application-aware processing, but optimal utilization requires precise configuration of the Application Framework.

For critical databases such as SAP HANA or Microsoft SQL Server, a generic crash-consistent snapshot can capture the database mid-transaction, which may force crash recovery or leave the data inconsistent after a restore. Advanced users should enforce strict application-consistent checkpoints. This involves configuring the specific discovery credentials and ensuring that the interaction between HYCU and the production storage controllers handles I/O quiescing correctly. When configured correctly, this approach allows transaction logs to be truncated after a successful backup, which prevents storage bloat and preserves point-in-time recovery integrity without requiring agents on the guest OS.

Furthermore, administrators should utilize HYCU’s ability to separate backup traffic from production networks. By configuring dedicated virtual interfaces for data transport, backup streams can be isolated, preventing latency spikes in user-facing applications during backup windows.

Complex Integration Scenarios

HYCU Backup does not operate in a vacuum. In mature DevOps and enterprise environments, backup operations must integrate seamlessly with existing orchestration tools and ITSM platforms.

API-Driven Automation

For environments using CI/CD pipelines, relying on the GUI for policy assignment is inefficient. Advanced integration involves leveraging the HYCU REST API. By embedding backup policy assignment into provisioning workflows (such as Terraform configurations or Ansible playbooks), data protection becomes an intrinsic part of the infrastructure lifecycle. This ensures that every new asset is protected immediately upon creation, eliminating the "protection gap" that often occurs between deployment and manual backup configuration.
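As a rough illustration of what such a provisioning hook might look like, the Python sketch below builds (but does not send) an HTTP request that assigns a policy to newly created VMs. The endpoint path, the `vmUuidList` payload field, and the use of basic authentication are placeholders assumed for illustration, not the documented HYCU REST API; the actual routes and authentication scheme should be taken from the HYCU REST API reference for the deployed version.

```python
import base64
import json
import urllib.request


def build_policy_assignment_request(base_url, policy_id, vm_ids, username, password):
    """Build (without sending) a request that assigns one policy to several VMs.

    ASSUMPTION: the URL path and JSON body shape are hypothetical
    placeholders, not the documented HYCU REST API.
    """
    # Hypothetical route: <base>/policies/<policy id>/assign
    url = f"{base_url.rstrip('/')}/policies/{policy_id}/assign"
    # Hypothetical payload: a list of VM identifiers to protect
    body = json.dumps({"vmUuidList": list(vm_ids)}).encode("utf-8")
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
        },
    )
```

A provisioning script could call this right after the VM is created and pass the result to `urllib.request.urlopen(request, timeout=30)`, failing the pipeline run if the call does not succeed so that an unprotected asset never reaches production.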

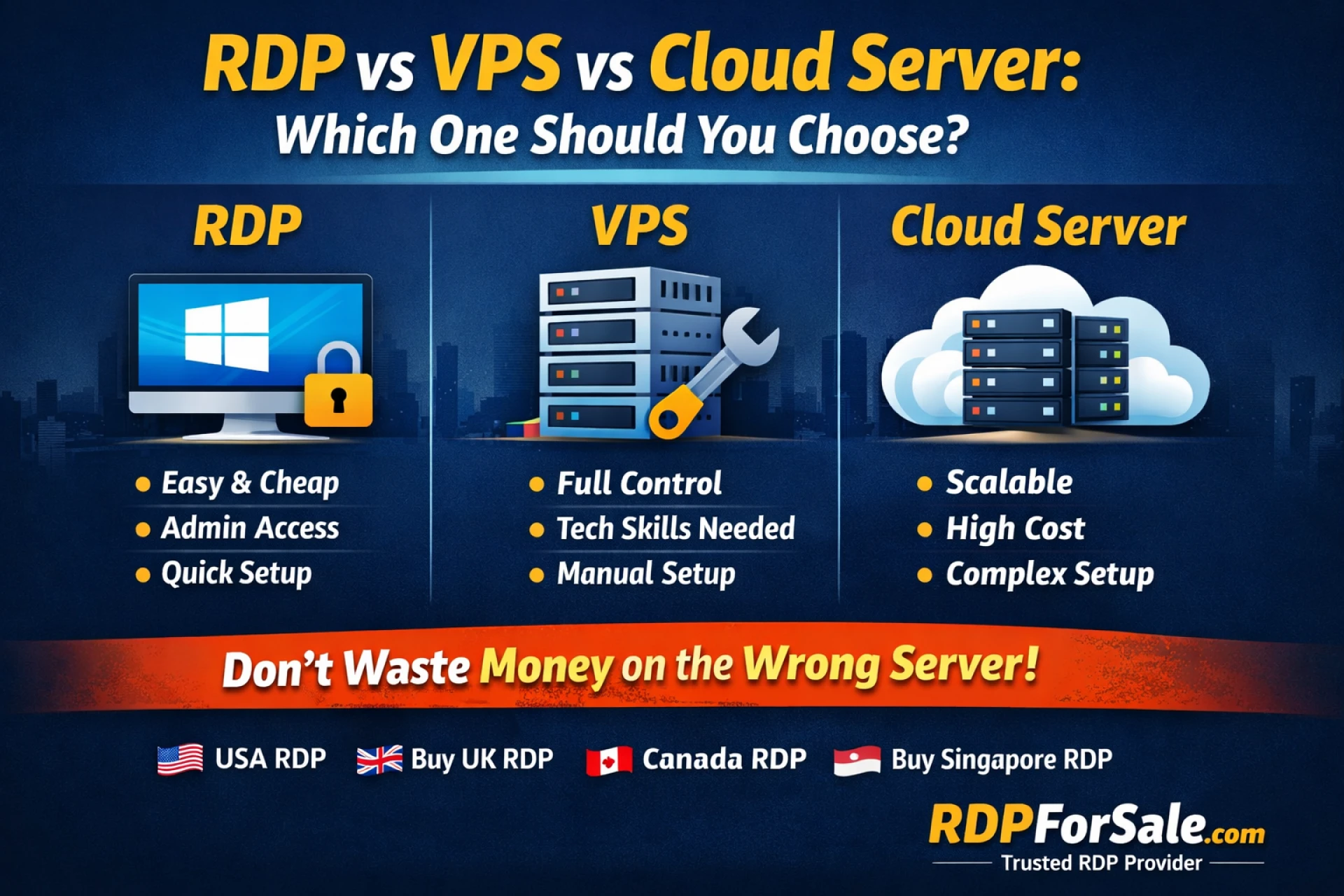

Cross-Cloud Mobility

HYCU’s native integration with public clouds (Azure, Google Cloud, AWS) allows for sophisticated disaster recovery architectures. Rather than simply using cloud storage as a dumb archive tier, advanced strategies involve configuring cross-cloud recovery targets. This enables the restoration of on-premises workloads directly into the public cloud as native instances, drastically reducing Recovery Time Objectives (RTO) during site-wide failures.

Performance Optimization in High-Change Environments

In environments with high data change rates, default configurations may lead to VM "stun" events (brief freezes while snapshots are taken or consolidated) or extended backup windows that bleed into production hours. Maximizing performance requires a deep understanding of storage I/O and network throughput.

Parallelism and Stream Management

HYCU automatically manages data streams, but manual intervention is often necessary for massive datasets. For multi-terabyte virtual machines or file shares, administrators should configure multi-stream data transfer. This parallelizes the read/write operations, saturating available bandwidth to minimize the duration of the backup job. However, this must be balanced against the storage array's IOPS capacity to avoid degrading production performance.
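The balance between bandwidth and IOPS can be reasoned about with a simple sizing calculation. The sketch below is a planning aid only: the function name, its parameters, and the default cap of 16 streams are hypothetical and do not correspond to any HYCU setting. It picks the smaller of two limits: the number of streams needed to fill the network link, and the number of streams the array can absorb from its spare IOPS.

```python
import math


def pick_stream_count(link_mbps, per_stream_mbps, iops_headroom, iops_per_stream,
                      max_streams=16):
    """Return a stream count capped by network bandwidth and spare array IOPS.

    All inputs are estimates supplied by the administrator (hypothetical
    planning values, not HYCU configuration keys).
    """
    if link_mbps <= 0 or per_stream_mbps <= 0 or iops_per_stream <= 0:
        raise ValueError("throughput and per-stream figures must be positive")
    # Streams needed to saturate the backup link
    by_network = math.ceil(link_mbps / per_stream_mbps)
    # Streams the array can serve without eating into production IOPS
    by_storage = int(iops_headroom // iops_per_stream)
    # Always run at least one stream, never more than the configured cap
    return max(1, min(by_network, by_storage, max_streams))
```

For example, with a 10,000 Mbps link, roughly 1,500 Mbps per stream, 20,000 spare IOPS, and about 4,000 IOPS consumed per stream, the network could use seven streams but the array only supports five, so the function returns 5.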

Smart Tiering Policies

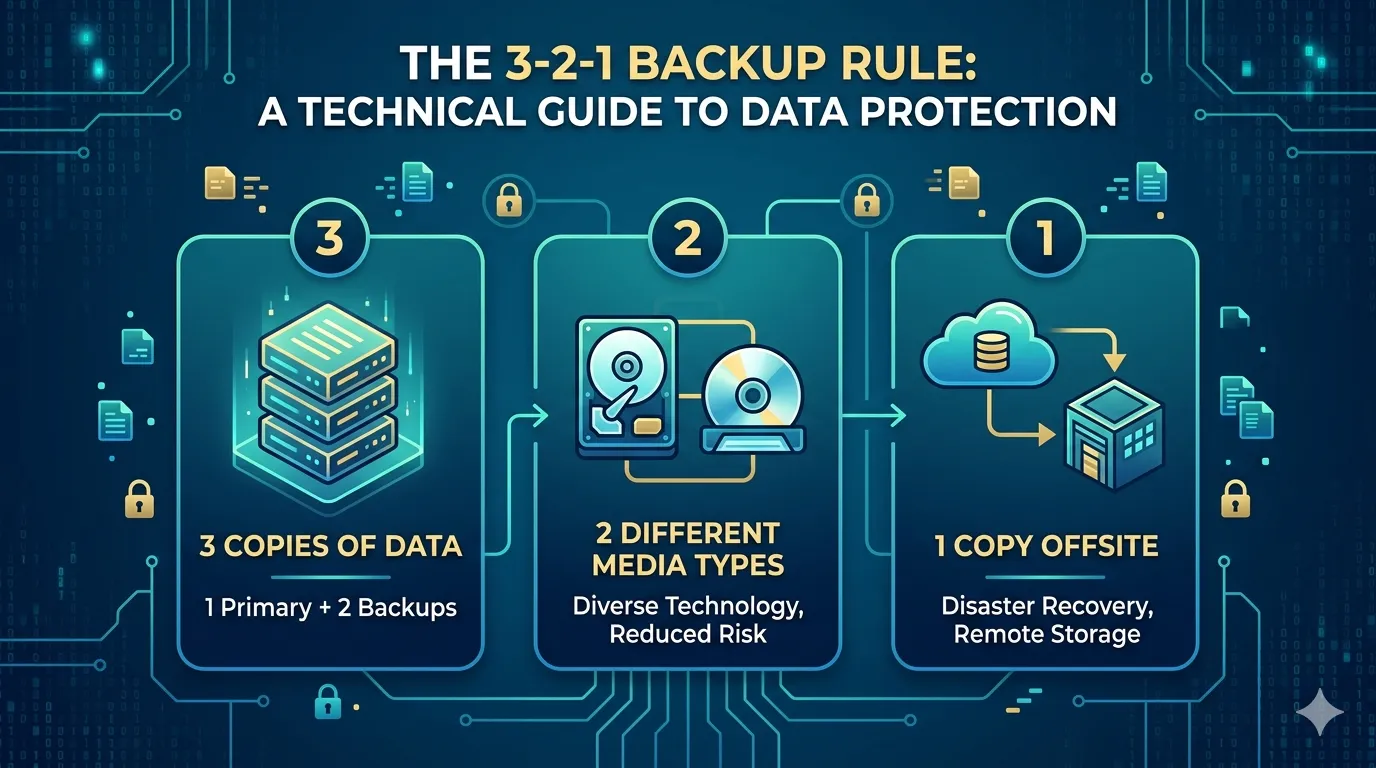

Optimizing storage costs without sacrificing RTO requires aggressive tiering policies. Configure HYCU to keep a short retention period (e.g., 24-48 hours) on high-performance, local flash storage for instant restores. Older restore points should automatically migrate to capacity-optimized object storage. This "hot/cold" architecture ensures that the most frequent recovery requests, which usually target recent data, are served from the fast tier, while long-term retention remains cost-effective.
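To make the hot/cold split concrete, the following sketch partitions a list of restore-point timestamps by age. It models the policy logic only; the function name and the 48-hour default are illustrative assumptions, and in practice the tiering is configured in HYCU rather than scripted this way.

```python
from datetime import datetime, timedelta, timezone


def split_by_tier(restore_points, now, hot_window=timedelta(hours=48)):
    """Partition restore-point timestamps into (hot, cold) lists by age.

    Points no older than `hot_window` stay on the fast tier; everything
    older is a candidate for capacity-optimized object storage.
    """
    hot, cold = [], []
    for taken_at in restore_points:
        if now - taken_at <= hot_window:
            hot.append(taken_at)
        else:
            cold.append(taken_at)
    return hot, cold


# Example: three restore points taken 6, 47, and 72 hours ago
now = datetime(2024, 1, 10, tzinfo=timezone.utc)
points = [now - timedelta(hours=h) for h in (6, 47, 72)]
hot, cold = split_by_tier(points, now)
```

Running the same check against a real restore-point inventory is a quick way to estimate how much capacity the flash tier needs for a given hot window.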

Troubleshooting Advanced Configuration Issues

Even in robust architectures, complex variables can cause backup failures. Troubleshooting at an advanced level moves beyond reading error codes to analyzing the infrastructure stack.

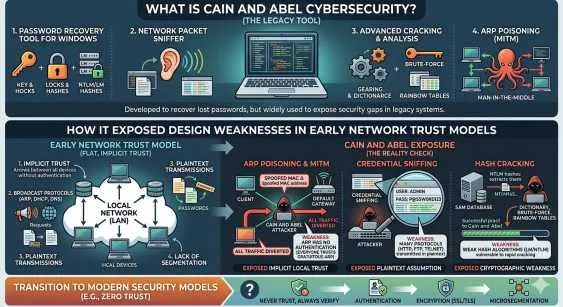

- VSS Writer Instability: For Windows workloads, backup failures are frequently caused by unstable Volume Shadow Copy Service (VSS) writers. If application-consistent backups fail, investigate the guest OS event logs for VSS timeout errors. Often, increasing the VSS timeout values in the registry or ensuring no conflicting backup agents are present resolves these issues.

- Snapshot Chain Overhead: On hyperconverged infrastructure, excessive snapshot retention at the storage layer can impact performance. Ensure that HYCU’s cleanup processes are completing successfully. If "orphan" snapshots accumulate, they can cause metadata bloat and slow down both production storage and backup operations.

- Network Segmentation Faults: If backup targets become unreachable, verify that the static routes configured within the HYCU controller align with recent network changes. Firewall rules must explicitly permit traffic on the necessary ports between the HYCU controller, the source hypervisor, and the target storage.
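For the network segmentation case, a quick TCP reachability check run from the relevant network segment can confirm whether a firewall or routing change is the culprit. The sketch below uses only the Python standard library; the host names and port numbers in the usage note are placeholders, and the actual ports must be taken from the HYCU documentation for the deployed version.

```python
import socket


def check_ports(targets, timeout=3.0):
    """Return {(host, port): bool} showing whether a TCP connection opened.

    `targets` is an iterable of (host, port) pairs. A False result means the
    connection was refused, timed out, or the host could not be resolved.
    """
    results = {}
    for host, port in targets:
        try:
            with socket.create_connection((host, port), timeout=timeout):
                results[(host, port)] = True
        except OSError:
            results[(host, port)] = False
    return results
```

A call such as `check_ports([("hypervisor.example.internal", 9440), ("target.example.internal", 443)])` (placeholder hosts and ports) gives a per-endpoint pass/fail map that can be compared before and after a network change.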

Strategic Data Resilience

HYCU Backup offers a potent combination of simplicity and depth. By mastering application-aware configurations, integrating protection via APIs, and optimizing data paths for performance, administrators transform backup from a passive insurance policy into an active component of infrastructure resilience. The focus for advanced practitioners must remain on aligning the technical capabilities of their backup infrastructure with business continuity requirements.
