Unstructured Data Is Winning. Your Storage Strategy Needs to Catch Up

File servers are groaning. SANs are expensive to expand. Meanwhile, by common industry estimates, around 80 percent of new data is unstructured: video, images, logs, backups, and telemetry. For most teams, the answer is modern object storage, which scales without the headaches of traditional hierarchies.

Object storage trades POSIX trees for flat buckets and unique IDs. That change removes the metadata bottlenecks that slow file systems down at scale. You get billions of objects, global access over HTTP, and built-in resilience. When you need to store more, you add nodes. No weekend migrations, no manual rebalancing.

How Object Storage Differs from File and Block Storage

Understanding the differences helps you pick the right tool for each workload.

1. Data Structure

File storage uses folders and paths. Block storage presents raw volumes to an OS. Object storage uses buckets and keys.

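Each object contains data, metadata, and a unique identifier. There are no directories to traverse, so fetching an object by its key takes roughly the same time whether the bucket holds ten objects or a billion. Listing is different: list operations return results page by page, so enumerating a billion keys still takes far longer than enumerating ten.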

2. Access Method

Files use NFS or SMB. Block uses iSCSI or Fibre Channel. Objects use HTTP with simple verbs: PUT, GET, DELETE, HEAD.

That makes them perfect for web apps, mobile clients, and backup tools. Any language with an HTTP library can talk to them.

3. Scale Model

File and block systems scale up until the controller chokes. Object storage scales out: add more servers and the system redistributes data automatically, so capacity and performance grow together.

Core Capabilities You Should Expect

Not all platforms are equal, but these features are table stakes today.

Durability Through Erasure Coding

Instead of mirroring data, the system breaks objects into chunks and adds parity. A common scheme like 12+4 can lose any four drives or nodes and still reconstruct data.

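With 12 data chunks and 4 parity chunks, the raw-capacity overhead is 4/12, or about 33 percent, compared with 200 percent for triple replication. Depending on drive failure rates, rebuild speed, and how chunks are spread across failure domains, schemes like this can reach around eleven nines of durability.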
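The overhead figures are simple arithmetic: a k+m scheme writes k+m chunks for every k chunks of data. This sketch (function names are illustrative) compares 12+4 erasure coding with triple replication and computes the raw disk needed for a given usable capacity.

```python
def ec_overhead(data_chunks: int, parity_chunks: int) -> float:
    """Extra raw capacity per unit of usable data for a k+m erasure code."""
    return parity_chunks / data_chunks


def replication_overhead(copies: int) -> float:
    """Extra raw capacity for N full copies (one copy is the data itself)."""
    return copies - 1


def raw_capacity_needed(usable_tb: float, data_chunks: int, parity_chunks: int) -> float:
    """Raw disk needed to hold `usable_tb` of data under a k+m scheme."""
    return usable_tb * (data_chunks + parity_chunks) / data_chunks


print(f"12+4 overhead: {ec_overhead(12, 4):.0%}")                  # 33%
print(f"3x replication overhead: {replication_overhead(3):.0%}")   # 200%
print(raw_capacity_needed(120, 12, 4))                             # 160.0
```

The fault tolerance of m = 4 assumes each of the 16 chunks lands on a separate drive or node; if two chunks share a failure domain, one failure can cost two chunks.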

Horizontal Scalability

Start with three nodes and 200 TB. Next year add three more and you have 400 TB with more throughput. The cluster handles placement and rebalancing.

Your apps keep using the same endpoint while capacity grows behind the scenes.
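Products differ in how they place data, but many rely on some form of consistent hashing so that adding nodes moves only a fraction of existing objects. The sketch below uses rendezvous (highest-random-weight) hashing as an illustration, not as the algorithm any particular platform uses; node names are hypothetical.

```python
import hashlib


def score(key: str, node: str) -> int:
    # Deterministic pseudo-random weight for a (key, node) pair.
    digest = hashlib.sha256(f"{node}:{key}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def place(key: str, nodes: list[str]) -> str:
    # Rendezvous hashing: the node with the highest score owns the key.
    return max(nodes, key=lambda n: score(key, n))


old_nodes = ["node1", "node2", "node3"]
new_nodes = old_nodes + ["node4", "node5", "node6"]
keys = [f"object-{i}" for i in range(10_000)]

moved = [k for k in keys if place(k, old_nodes) != place(k, new_nodes)]
# Expect roughly half to move, since 3 of the 6 nodes are new.
print(f"{len(moved) / len(keys):.0%} of objects moved")
# Every moved object lands on a new node; nothing shuffles between old nodes.
assert all(place(k, new_nodes) in new_nodes[3:] for k in moved)
```

Doubling the node count moves about half the data, and only onto the new nodes, which is why the cluster can rebalance in the background while the endpoint stays the same.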

Rich Metadata and Tagging

Each object can carry custom tags, JSON metadata, or content hashes. On platforms that index this metadata, storage starts to behave like a searchable data lake.

Backup apps use it for retention dates. Media teams tag projects, resolution, and rights. Where the platform supports metadata search, you can query it without a separate database.

Where Object Storage Fits in Your Architecture

Use it where scale, cost, and access matter more than low-latency block I/O.

Backup and Archive

Most modern backup software can write directly to object targets. Many tools also deduplicate at the source, before data leaves the client, so only unique blocks cross the network.
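Source-side deduplication comes down to hashing chunks and sending only those the target has not seen. A minimal sketch, assuming fixed-size chunks and a set of already-stored hashes; real backup tools often use variable-size (content-defined) chunking, and the 4 MiB size here is a hypothetical choice.

```python
import hashlib

CHUNK_SIZE = 4 * 1024 * 1024  # hypothetical fixed chunk size


def chunks(data: bytes, size: int = CHUNK_SIZE):
    for offset in range(0, len(data), size):
        yield data[offset:offset + size]


def dedupe_upload(data: bytes, already_stored: set[str],
                  size: int = CHUNK_SIZE) -> tuple[list[str], list[bytes]]:
    """Return the chunk-hash manifest for `data` and only the chunks the target lacks."""
    manifest, to_send = [], []
    for chunk in chunks(data, size):
        digest = hashlib.sha256(chunk).hexdigest()
        manifest.append(digest)
        if digest not in already_stored:
            already_stored.add(digest)  # also skips repeats within this backup
            to_send.append(chunk)
    return manifest, to_send


stored: set[str] = set()
manifest, sent = dedupe_upload(b"A" * 8 + b"B" * 4, stored, size=4)
print(len(manifest), len(sent))  # 3 2: the second "AAAA" chunk is not resent
```

The manifest lets a restore reassemble the file in order, while only new chunks consume bandwidth and capacity.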

Enable immutability on the bucket and you gain built-in ransomware protection: locked backups cannot be altered or deleted until their retention period ends. Restores can be fast because the platform serves thousands of objects in parallel.

Media and Entertainment

Video production creates massive files that don’t change often. Editing proxies can live on fast file storage while masters sit in object storage.

Global teams access content via presigned URLs without VPNs. Lifecycle policies move old projects to colder nodes automatically.
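A presigned URL embeds an expiry time and a signature, so the storage service can verify a request without a session or VPN. The sketch below shows the idea with a plain HMAC over the method, path, and expiry. It is a simplified illustration, not the S3 Signature Version 4 algorithm; the secret, endpoint, and query parameter names are hypothetical.

```python
import hashlib
import hmac
import time
from typing import Optional
from urllib.parse import parse_qs, urlencode, urlparse

# Hypothetical secret; a real deployment keeps this server-side in a secrets manager.
SECRET = b"example-signing-secret"


def _sign(method: str, path: str, expires: int) -> str:
    # Bind the signature to the HTTP method, object path, and expiry time.
    message = f"{method}\n{path}\n{expires}".encode()
    return hmac.new(SECRET, message, hashlib.sha256).hexdigest()


def presign(method: str, endpoint: str, path: str, ttl_seconds: int,
            now: Optional[float] = None) -> str:
    """Return a URL that grants `method` on `path` until now + ttl_seconds."""
    issued = time.time() if now is None else now
    expires = int(issued + ttl_seconds)
    query = urlencode({"expires": expires, "signature": _sign(method, path, expires)})
    return f"{endpoint}{path}?{query}"


def verify(method: str, url: str, now: Optional[float] = None) -> bool:
    """Server-side check: reject expired, malformed, or tampered URLs."""
    parsed = urlparse(url)
    params = parse_qs(parsed.query)
    try:
        expires = int(params["expires"][0])
        signature = params["signature"][0]
    except (KeyError, ValueError):
        return False
    current = time.time() if now is None else now
    if current > expires:
        return False
    return hmac.compare_digest(_sign(method, parsed.path, expires), signature)


url = presign("GET", "https://storage.example.com", "/media/master.mov",
              ttl_seconds=900, now=1_000_000)
print(verify("GET", url, now=1_000_100))  # True: inside the 15-minute window
print(verify("GET", url, now=1_002_000))  # False: expired
print(verify("PUT", url, now=1_000_100))  # False: signed for GET only
```

Short expiry windows limit the damage if a link leaks; the secret itself never leaves the server.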

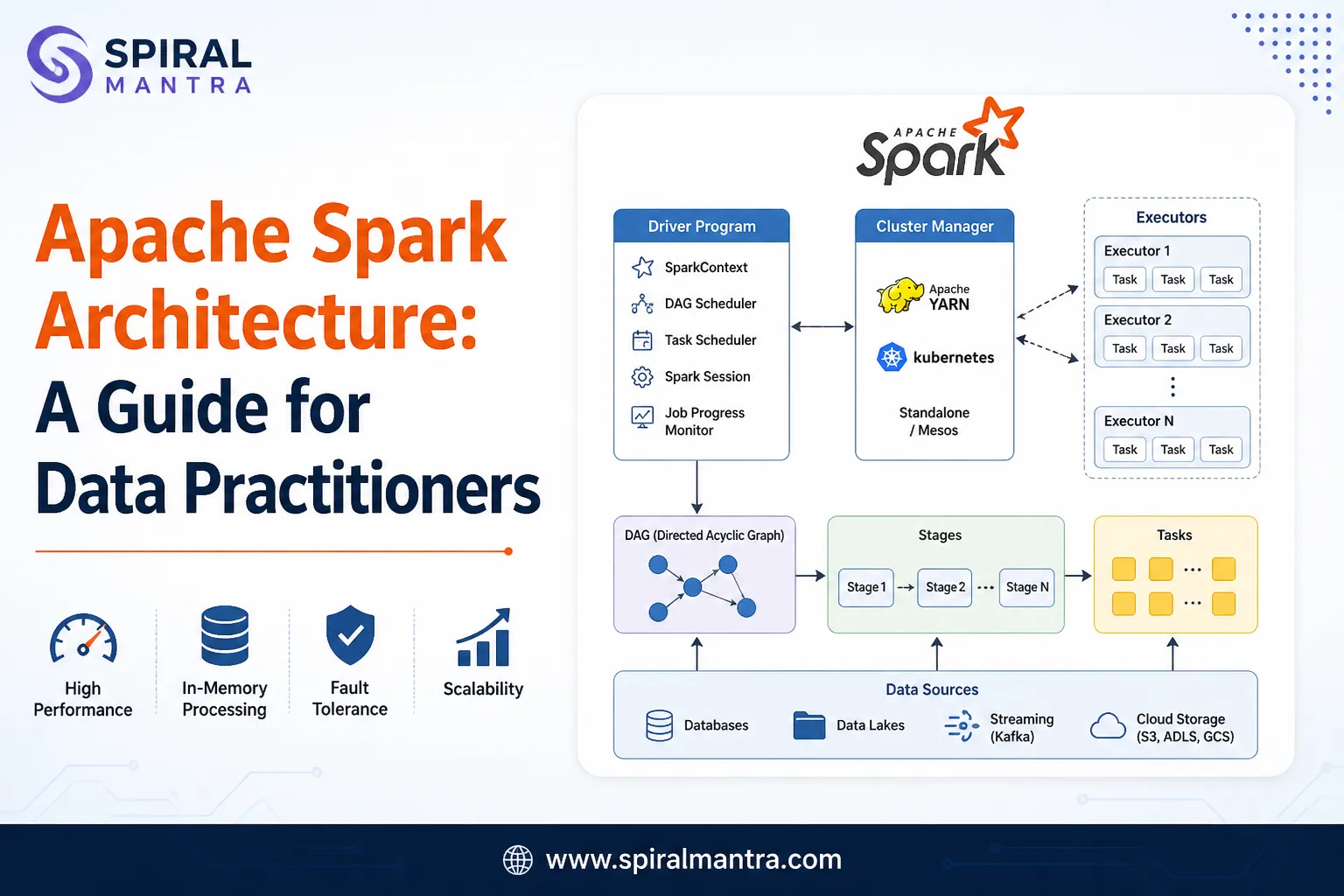

Analytics and AI Datasets

Data lakes are basically object stores with query engines on top. Dump logs, telemetry, and CSV files into a bucket.

Spark, Presto, or Python notebooks read them directly. No ETL into a warehouse just to explore data.

Application Data

Mobile apps upload images and user content straight to object storage using presigned URLs. Your app server never handles the bytes.

That reduces load and keeps large files out of your database. Lifecycle rules delete temp uploads after 7 days.
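The storage platform evaluates lifecycle rules itself, on its own schedule. This sketch only shows the logic of a prefix-plus-age expiration rule; the `tmp/` prefix and the `ExpirationRule` type are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class ExpirationRule:
    prefix: str  # hypothetical layout: temporary uploads live under "tmp/"
    days: int


def expired_keys(objects: dict[str, datetime], rule: ExpirationRule,
                 now: datetime) -> list[str]:
    """Keys under rule.prefix whose last-modified time is older than rule.days."""
    cutoff = now - timedelta(days=rule.days)
    return sorted(key for key, modified in objects.items()
                  if key.startswith(rule.prefix) and modified < cutoff)


utc = timezone.utc
objects = {
    "tmp/upload-1.jpg": datetime(2024, 6, 1, tzinfo=utc),
    "tmp/upload-2.jpg": datetime(2024, 6, 9, tzinfo=utc),
    "photos/avatar.jpg": datetime(2024, 1, 1, tzinfo=utc),
}
rule = ExpirationRule(prefix="tmp/", days=7)
print(expired_keys(objects, rule, now=datetime(2024, 6, 10, tzinfo=utc)))
# ['tmp/upload-1.jpg']
```

Scoping the rule by prefix keeps it from touching permanent content such as `photos/`.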

On-Prem vs. Third-Party Hosted: Making the Call

You have two paths. Both can use the same API.

| Factor | On-Prem Cluster | Managed Service |
|---|---|---|
| Cost Model | Capex upfront, low marginal cost | Opex per GB, per request |
| Control | You own hardware and location | Provider owns infrastructure |
| Latency | Single-digit ms on LAN | Depends on internet path |
| Scale Start | Usually 50 TB plus | Start at 1 GB |
| Compliance | Data stays in your facility | Review provider certifications |

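Many organizations use both. They run object storage locally for primary workloads and replicate a copy to a separate service for disaster recovery. Because both sides speak the same API, failover can be as simple as a DNS or endpoint change, provided credentials, bucket names, and replication lag have been accounted for.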

Security Practices That Actually Matter

Object storage is network-facing by design, so lock it down.

1. Network Segmentation

Put the cluster on a dedicated VLAN. Only backup servers and app gateways should reach it. Block inbound internet traffic, except through a proxy if external access is needed.

2. Identity and Policies

Use IAM-style policies with least privilege. App A can write to bucket A only. Read-only keys for analytics. Rotate keys every 90 days and store them in a secrets manager.
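Least-privilege policies boil down to a few evaluation rules: an explicit deny wins, an explicit allow grants access, and anything unmatched is denied. This sketch models that order. The action names (`s3:PutObject`, `s3:GetObject`, `s3:ListBucket`) follow S3 conventions, but the policy shape, principals, and bucket-style resource strings are simplified and hypothetical; real IAM policies use ARNs and support conditions this sketch ignores.

```python
from fnmatch import fnmatchcase

# Hypothetical policies in a simplified IAM-like shape.
POLICIES = {
    "app-a": [
        {"effect": "Allow", "actions": ["s3:PutObject", "s3:GetObject"],
         "resources": ["bucket-a/*"]},
    ],
    "analytics": [
        {"effect": "Allow", "actions": ["s3:GetObject", "s3:ListBucket"],
         "resources": ["*"]},
        {"effect": "Deny", "actions": ["*"], "resources": ["secrets/*"]},
    ],
}


def _matches(statement: dict, action: str, resource: str) -> bool:
    return (any(fnmatchcase(action, a) for a in statement["actions"])
            and any(fnmatchcase(resource, r) for r in statement["resources"]))


def is_allowed(principal: str, action: str, resource: str) -> bool:
    statements = [s for s in POLICIES.get(principal, [])
                  if _matches(s, action, resource)]
    if any(s["effect"] == "Deny" for s in statements):
        return False  # explicit deny always wins
    return any(s["effect"] == "Allow" for s in statements)  # default deny


print(is_allowed("app-a", "s3:PutObject", "bucket-a/photo.jpg"))  # True
print(is_allowed("app-a", "s3:PutObject", "bucket-b/photo.jpg"))  # False
print(is_allowed("analytics", "s3:PutObject", "bucket-a/report"))  # False
```

App A can write only to bucket A, and the analytics key can read but never write, matching the least-privilege pattern described above.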

3. Encryption Everywhere

TLS 1.3 for transit. AES-256 for at-rest encryption with keys in an HSM or external KMS. Never store keys on the same nodes as data.
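On the client side, enforcing TLS 1.3 is a small piece of configuration. A minimal sketch using Python's standard `ssl` module; the optional CA bundle path is an assumption for clusters that use an internal certificate authority. This covers transit only, since at-rest encryption is handled by the storage platform and its KMS.

```python
import ssl
from typing import Optional


def make_client_context(ca_file: Optional[str] = None) -> ssl.SSLContext:
    """TLS client context that refuses anything older than TLS 1.3."""
    # create_default_context enables certificate and hostname verification.
    ctx = ssl.create_default_context(cafile=ca_file)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx
```

The resulting context can be passed to standard clients, for example `http.client.HTTPSConnection(host, context=ctx)`, where `host` would be your storage endpoint.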

4. Immutability for Critical Buckets

Enable object lock with governance or compliance mode. Set retention based on legal needs. This stops both attackers and accidental deletes.
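The difference between the two modes shows up when someone tries to delete a locked object version. This is a simplified model following S3 Object Lock semantics: in governance mode, users with a bypass permission (`s3:BypassGovernanceRetention` in S3) can override retention, while in compliance mode nobody can until it expires. Legal holds and retention extensions are not modeled, and other platforms may differ in detail.

```python
from datetime import datetime, timezone


def can_delete(mode: str, retain_until: datetime, now: datetime,
               has_bypass_permission: bool = False) -> bool:
    """Simplified object-lock check for deleting one object version."""
    if now >= retain_until:
        return True                   # retention period has ended
    if mode == "GOVERNANCE":
        return has_bypass_permission  # privileged users may override
    if mode == "COMPLIANCE":
        return False                  # no one can bypass compliance retention
    raise ValueError(f"unknown retention mode: {mode}")


until = datetime(2025, 1, 1, tzinfo=timezone.utc)
today = datetime(2024, 6, 1, tzinfo=timezone.utc)
print(can_delete("GOVERNANCE", until, today, has_bypass_permission=True))  # True
print(can_delete("COMPLIANCE", until, today, has_bypass_permission=True))  # False
```

Governance mode suits protection against accidents; compliance mode suits regulatory retention where even administrators must not be able to delete data early.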

Performance Tuning Basics

Object storage isn’t slow, but its defaults are tuned for safety, not speed.

Concurrency: Use multipart upload for objects over 100 MB and run 8 to 32 parts in parallel. Throughput often scales close to linearly until the network or the cluster becomes the bottleneck.
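Multipart upload splits an object into numbered parts that can be sent concurrently. This sketch plans the parts and uploads them with bounded parallelism. The limits used (5 MiB minimum for every part except the last, at most 10,000 parts) are Amazon S3's published limits, which S3-compatible platforms usually mirror, but check yours. The 64 MiB default part size is an assumption, and `upload_part` is a hypothetical callable standing in for your SDK's part-upload call.

```python
import math
from concurrent.futures import ThreadPoolExecutor

MIB = 1024 * 1024
MIN_PART = 5 * MIB   # minimum size for every part except the last
MAX_PARTS = 10_000   # maximum number of parts per upload


def plan_parts(object_size: int, part_size: int = 64 * MIB) -> list[tuple[int, int, int]]:
    """Return (part_number, offset, length) for each part."""
    # Grow the part size if needed so the object fits within MAX_PARTS.
    part_size = max(part_size, MIN_PART, math.ceil(object_size / MAX_PARTS))
    return [(number, offset, min(part_size, object_size - offset))
            for number, offset in enumerate(range(0, object_size, part_size), start=1)]


def upload_all(parts, upload_part, concurrency: int = 16):
    """Call upload_part(number, offset, length) for each part, `concurrency` at a time."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        # pool.map preserves part order, which the final "complete" call needs.
        return list(pool.map(lambda p: upload_part(*p), parts))


parts = plan_parts(300 * MIB)
print(len(parts), parts[-1])  # 5 (5, 268435456, 46137344)
```

Keeping results in part order matters because completing a multipart upload requires listing the parts by number.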

Networking: Use 25 GbE or faster NICs and bond them for redundancy. Jumbo frames help if every switch on the path supports them end to end.

Client Placement: Put gateways or app servers in the same rack as the storage when possible. Every network hop adds latency.

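Bucket Layout: In systems that partition the keyspace by prefix, sequential keys such as date prefixes can funnel millions of writes onto the same partition. Prefixing keys with a short hash spreads that load across the cluster, at the cost of making date-range listings harder.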
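A short, deterministic hash prefix spreads otherwise sequential keys while still letting you rebuild the full key from the natural name for a GET. A minimal sketch; the four-character prefix length and the key names are assumptions.

```python
import hashlib


def hashed_key(natural_key: str, prefix_chars: int = 4) -> str:
    """Prepend a short hash so sequential names spread across partitions."""
    prefix = hashlib.sha256(natural_key.encode()).hexdigest()[:prefix_chars]
    return f"{prefix}/{natural_key}"


for day in range(1, 4):
    print(hashed_key(f"logs/2024-06-{day:02d}/app.log"))
```

The trade-off: listing one day's logs now means scanning every prefix or keeping a separate index, so use this layout where write throughput matters more than prefix listing.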

Migration Without Drama

You don’t need to rewrite apps to adopt object storage.

Step 1: Deploy a pilot cluster and create test buckets. Validate PUT, GET, list, and delete with your app or backup tool.

Step 2: Use a sync utility to copy existing data. Most tools support checksum verification and bandwidth throttling.

Step 3: Switch your app to the new endpoint in stages. Start with read-only workloads, then move writes.

Step 4: Turn on versioning and immutability, then retire the old storage.

Because the interface is standard, rollback is also simple: point the app back at the old endpoint, after copying back any writes made to the new cluster in the meantime.
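Step 2's copy-and-verify loop can be sketched in a few lines. Here a local target directory stands in for the bucket (for example, a gateway mount); a real sync utility would PUT objects over the API and compare the checksums the platform reports. The function names are hypothetical.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path) -> str:
    h = hashlib.sha256()
    with path.open("rb") as f:
        for block in iter(lambda: f.read(1024 * 1024), b""):
            h.update(block)
    return h.hexdigest()


def sync_and_verify(source: Path, target: Path) -> list[str]:
    """Copy new or changed files; return relative paths that fail verification."""
    failures = []
    for src in sorted(p for p in source.rglob("*") if p.is_file()):
        rel = src.relative_to(source)
        dst = target / rel
        src_hash = sha256_of(src)
        if dst.exists() and sha256_of(dst) == src_hash:
            continue  # already copied and identical
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)
        if sha256_of(dst) != src_hash:
            failures.append(str(rel))
    return failures
```

Because unchanged files are skipped, the same job can run repeatedly during the cutover to pick up only the latest changes.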

Conclusion

Unstructured data growth is not slowing down. File shares and LUNs were not designed for billions of objects, global access, and flat cost curves. Object storage was.

It gives you scale, resilience, and a simple HTTP API that most developers and backup tools already support. Start with one workload, like backups or media archives. Measure cost, speed, and admin time. Most teams expand quickly once they see how little operational overhead it takes. The future of storage is flat, durable, and addressable by URL.

FAQs

1. Can I run a database directly on object storage?

No. Databases need block or file storage for low-latency random reads, writes, and locking. Use object storage to hold database backups, exports, and Parquet files for analytics. Run the live database on SSD or NVMe volumes, then back it up to object storage.

2. How many objects can I store in one bucket?

For practical purposes, unlimited. Production clusters routinely hold billions of objects per bucket. Performance depends on key design and list patterns, not total count. Avoid sequential prefixes if you list frequently.

3. What happens during a node failure?

The cluster marks the node as down and rebuilds its data from parity chunks on other nodes. Clients keep reading and writing because there are still enough pieces to serve every object. You replace the node or disk and the system heals automatically.
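Schemes that survive several failures, such as 12+4, typically rely on Reed-Solomon-style codes. The sketch below uses single XOR parity instead (as in RAID 5), which tolerates only one missing chunk, purely to show how a rebuild recovers lost data from the surviving pieces.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data_chunks: list[bytes]) -> bytes:
    """Single parity chunk: XOR of all equal-length data chunks."""
    return reduce(xor_bytes, data_chunks)


def rebuild(surviving: list[bytes], parity: bytes) -> bytes:
    """Recover the one missing data chunk from the survivors plus parity."""
    return reduce(xor_bytes, surviving, parity)


chunks = [b"obj-", b"ect-", b"data"]
parity = encode(chunks)
# The node holding chunks[1] fails; rebuild it from the other two plus parity.
print(rebuild([chunks[0], chunks[2]], parity))  # b'ect-'
```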

4. Is object storage suitable for small files under 1 MB?

It works, but efficiency drops. Each object has metadata overhead. If you have millions of tiny files, consider tarring them into 100 MB objects or using a gateway that aggregates. For backups and logs, this is rarely a problem.
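Bundling small files is straightforward with Python's standard `tarfile` module. A minimal sketch that packs files into one in-memory archive ready to store as a single object; how you group files (for example, into roughly 100 MB batches) and how you index them are up to you, and the file names are hypothetical.

```python
import io
import tarfile


def pack_small_files(files: dict[str, bytes]) -> bytes:
    """Bundle many small files into one in-memory tar archive."""
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w") as archive:
        for name, data in files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(data)
            archive.addfile(info, io.BytesIO(data))
    return buffer.getvalue()


def read_member(archive_bytes: bytes, name: str) -> bytes:
    """Extract one file from a previously packed archive."""
    with tarfile.open(fileobj=io.BytesIO(archive_bytes), mode="r") as archive:
        return archive.extractfile(name).read()


blob = pack_small_files({"thumbs/a.jpg": b"aaa", "thumbs/b.jpg": b"bbbb"})
print(read_member(blob, "thumbs/b.jpg"))  # b'bbbb'
```

The trade-off is on reads: fetching one small file means downloading the archive or a byte range of it, so this pattern fits backups and logs better than frequently accessed individual files.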

5. How do I prevent surprise costs with on-prem object storage?

Track capacity growth per bucket and set quotas. Monitor request metrics so a buggy app doesn’t hammer the cluster. Unlike usage-based billing, your costs are power, support, and eventual expansion. Review quarterly and plan node adds 90 days ahead.
