PostgreSQL 17 brings native incremental backup support directly into the database core, eliminating the previous dependency on third-party tools like pgBackRest or Barman. Instead of copying the entire dataset on every run, incremental backups capture only the data blocks that changed since the last backup, whether full or incremental. This results in faster backups, reduced storage consumption, and lower system overhead.
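To make the block-level idea concrete, here is a conceptual Python sketch. It is not PostgreSQL's implementation. It assumes a data file can be modeled as a byte string split into 8 kB blocks (PostgreSQL's default page size), and it finds changed blocks by comparing them directly. PostgreSQL 17 instead learns which blocks changed from WAL summaries (enabled with `summarize_wal`), takes incrementals with `pg_basebackup --incremental`, and rebuilds a usable data directory with `pg_combinebackup`. The function names below are hypothetical.

```python
"""Conceptual sketch of block-level incremental backup and restore.

Assumption: a data file is modeled as a bytes object split into fixed-size
blocks. Changed blocks are found by direct comparison purely for illustration;
PostgreSQL 17 derives them from WAL summaries instead.
"""

BLOCK_SIZE = 8192  # PostgreSQL's default page size is 8 kB


def split_blocks(data: bytes, block_size: int = BLOCK_SIZE) -> list:
    """Split a file image into fixed-size blocks (the last block may be short)."""
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]


def take_incremental(previous: bytes, current: bytes, block_size: int = BLOCK_SIZE) -> dict:
    """Hypothetical helper: keep only blocks that differ from the previous backup."""
    old_blocks = split_blocks(previous, block_size)
    new_blocks = split_blocks(current, block_size)
    changed = {
        i: blk
        for i, blk in enumerate(new_blocks)
        if i >= len(old_blocks) or old_blocks[i] != blk  # new or modified block
    }
    # The new length lets a restore handle files that grew or shrank.
    return {"length": len(current), "blocks": changed}


def combine(full: bytes, increments: list, block_size: int = BLOCK_SIZE) -> bytes:
    """Hypothetical helper: replay a chain of incrementals on top of a full backup
    (similar in spirit to pg_combinebackup)."""
    blocks = split_blocks(full, block_size)
    length = len(full)
    for inc in increments:
        n = -(-inc["length"] // block_size)  # number of blocks, rounded up
        # Truncate if the file shrank; pad with placeholders if it grew.
        blocks = blocks[:n] + [b""] * max(0, n - len(blocks))
        for i, blk in inc["blocks"].items():
            blocks[i] = blk  # overwrite only the blocks that changed
        length = inc["length"]
    return b"".join(blocks)[:length]
```

Each incremental depends on the backup before it, which is why a restore must replay the whole chain in order, starting from a full backup.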

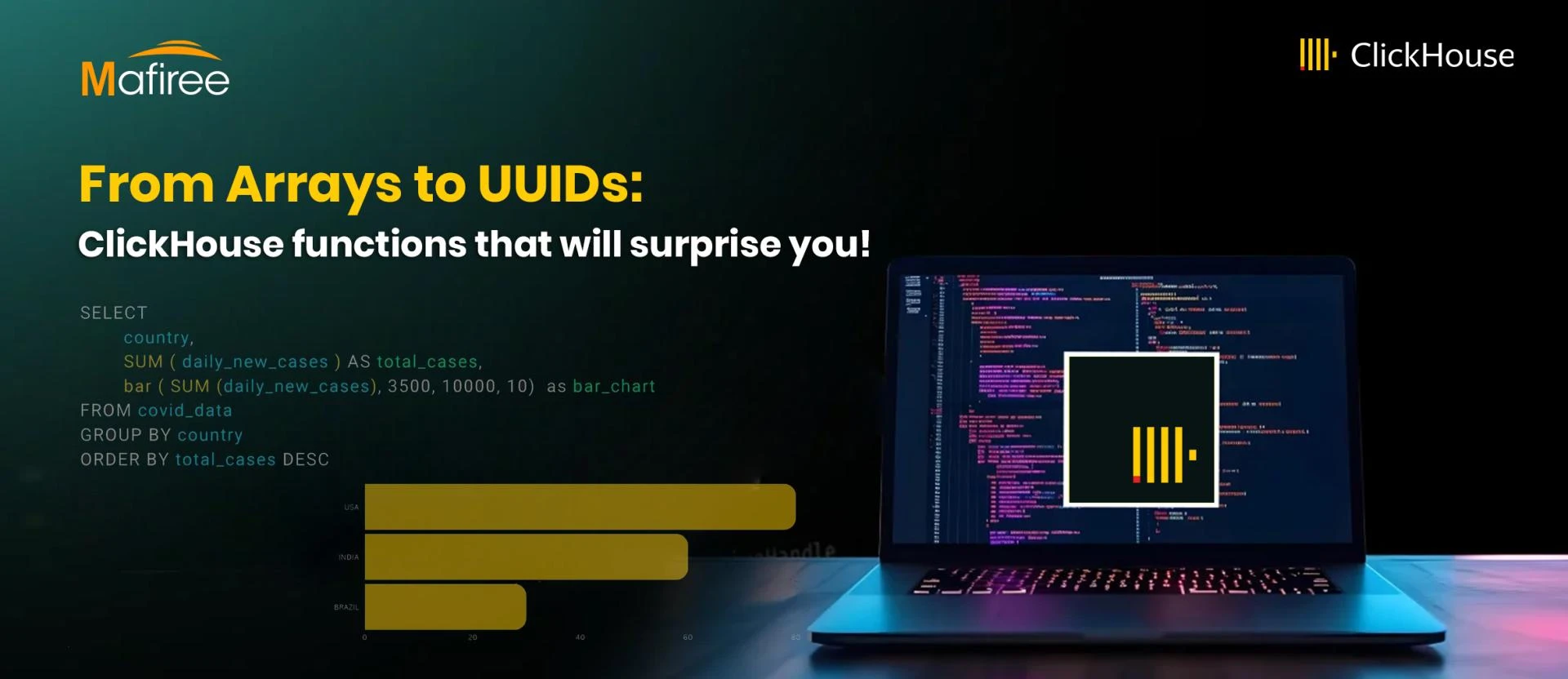

ClickHouse provides a powerful collection of built-in functions that simplify working with large datasets, from date arithmetic to unique ID generation. Here's a concise breakdown of the most essential categories.

Linux kernel live patching is no longer optional; it is becoming a foundational security strategy for modern infrastructure. As organizations move toward always-on systems, the ability to apply critical kernel updates immediately, without downtime, has redefined how security and operations teams work together.

MongoDB has long been valued for its flexibility and performance, and since version 4.0 it has also supported multi-document ACID transactions (extended to sharded clusters in 4.2). This gives developers both NoSQL scalability and the data consistency guarantees traditionally associated with relational databases.

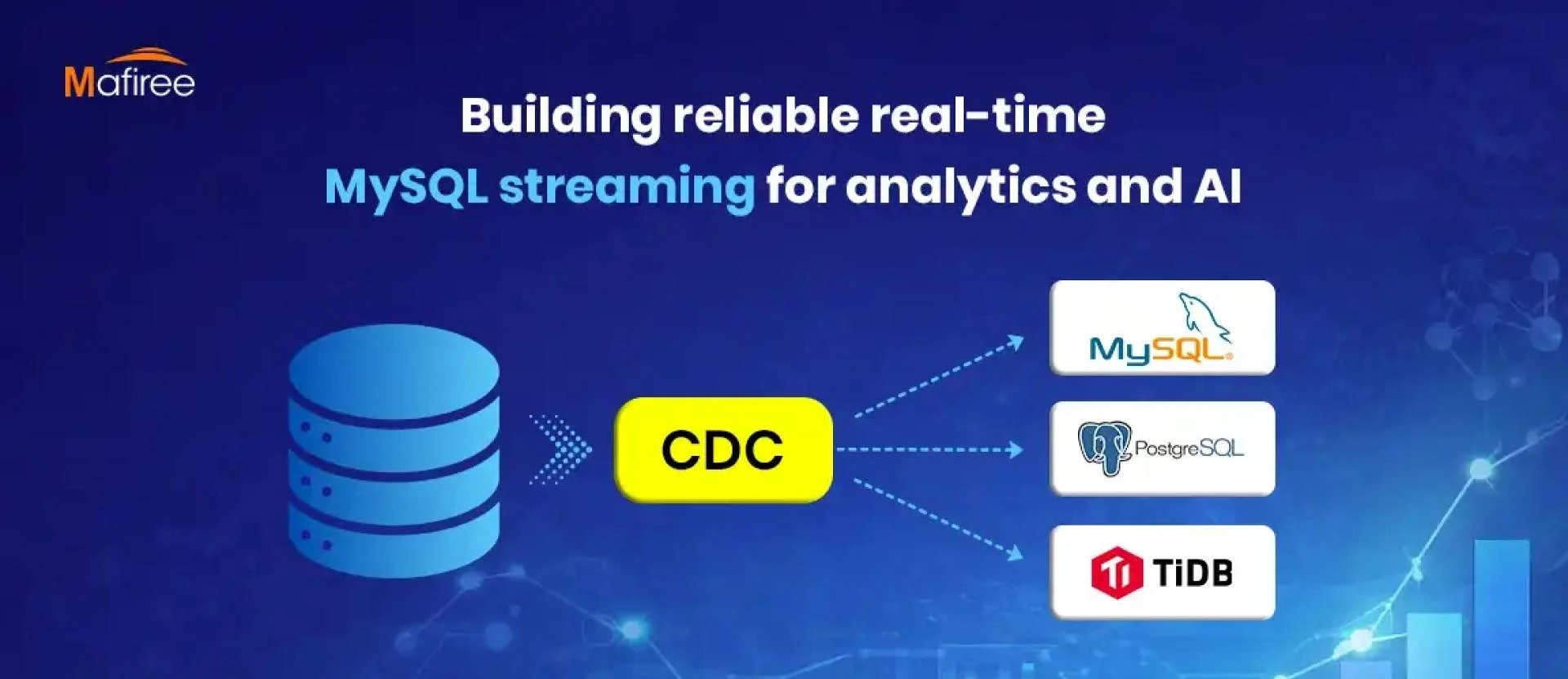

Real-time Change Data Capture (CDC) pipelines built on MySQL provide significant power

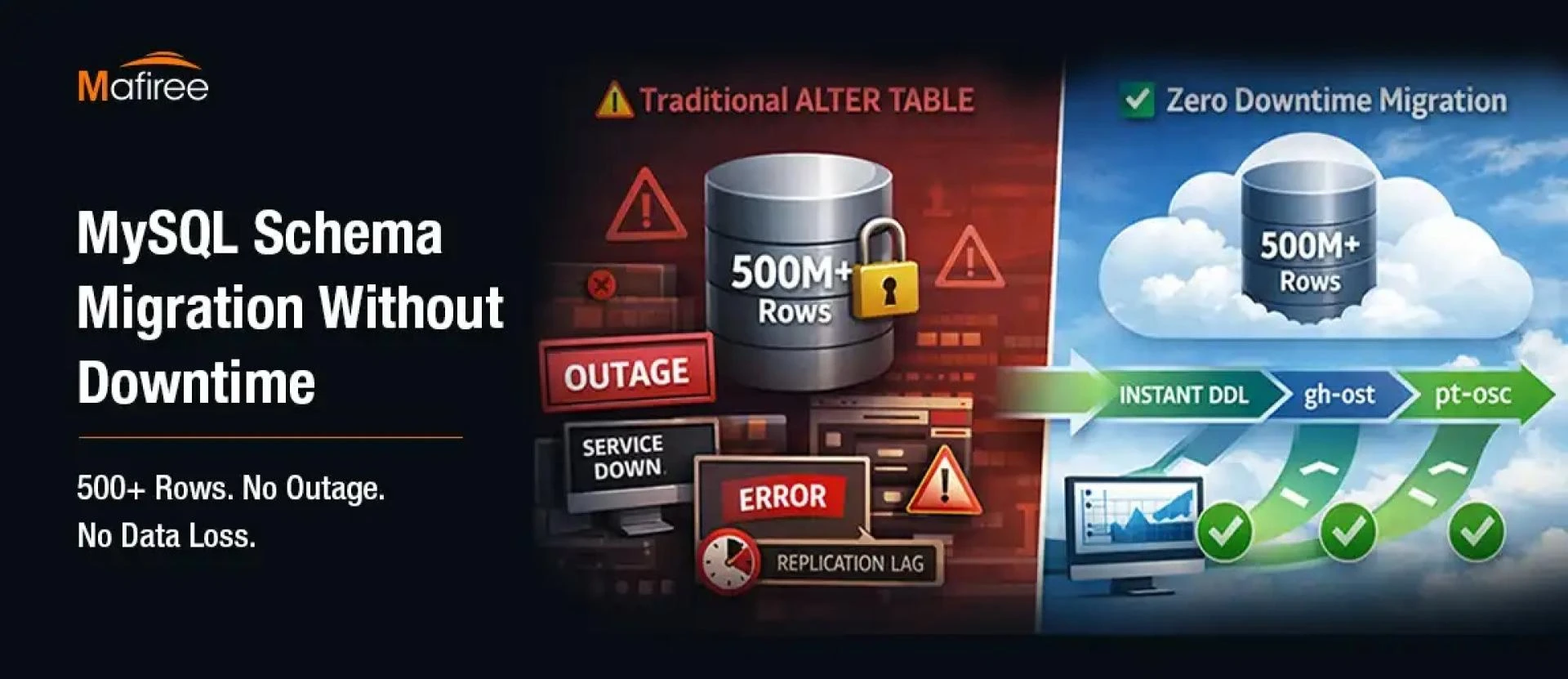

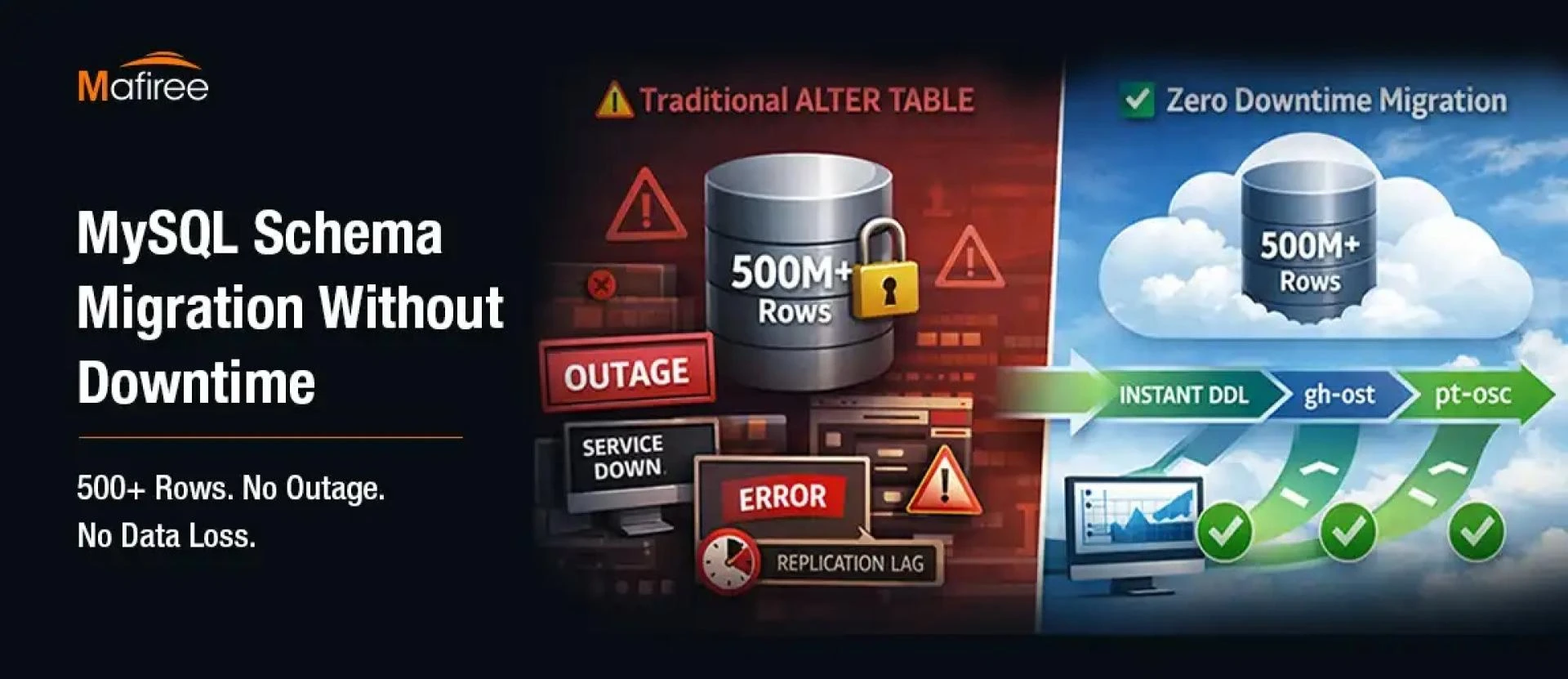

Modifying schemas on very large MySQL tables can disrupt live systems when traditional DDL operations are used.

Real-time MySQL streaming has become essential for analytics and AI in today’s data-driven landscape.

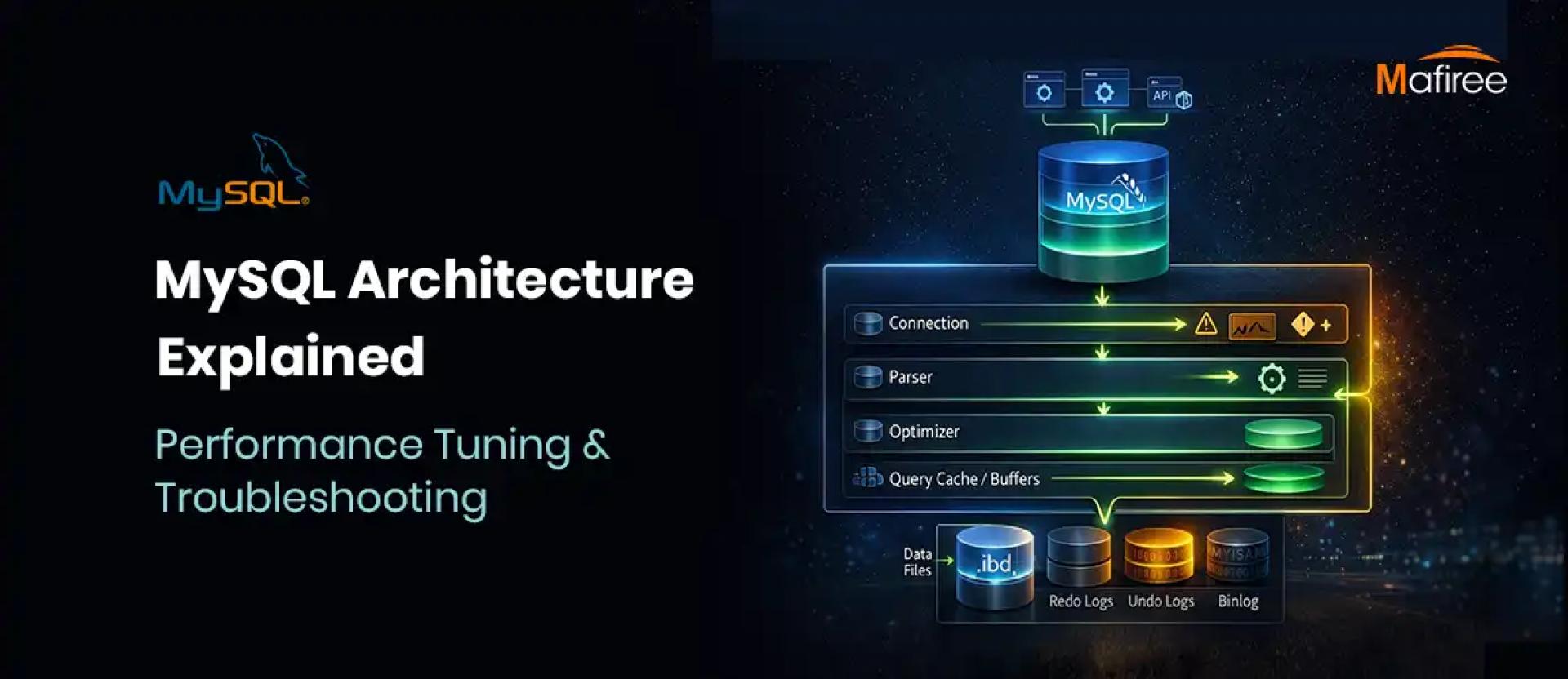

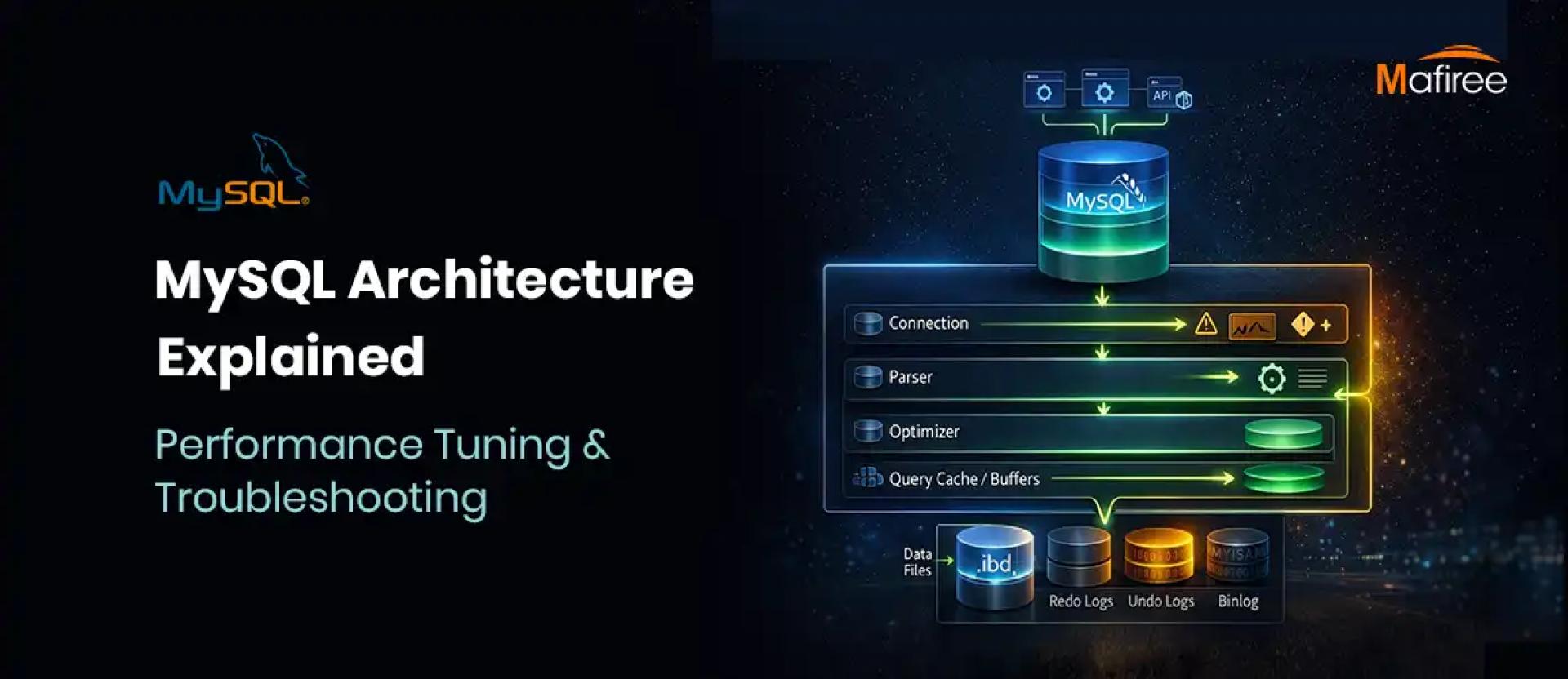

MySQL is one of the most popular relational database management systems (RDBMS) in use today.

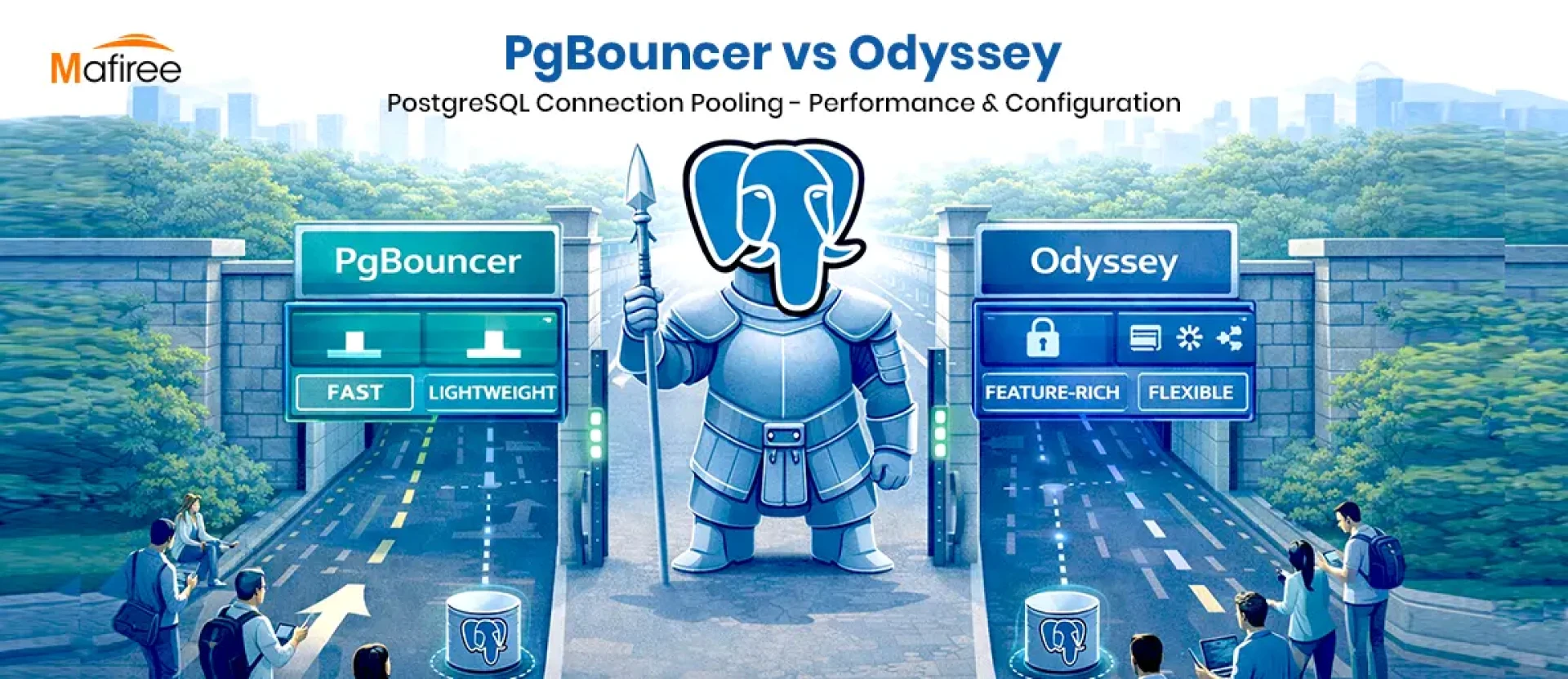

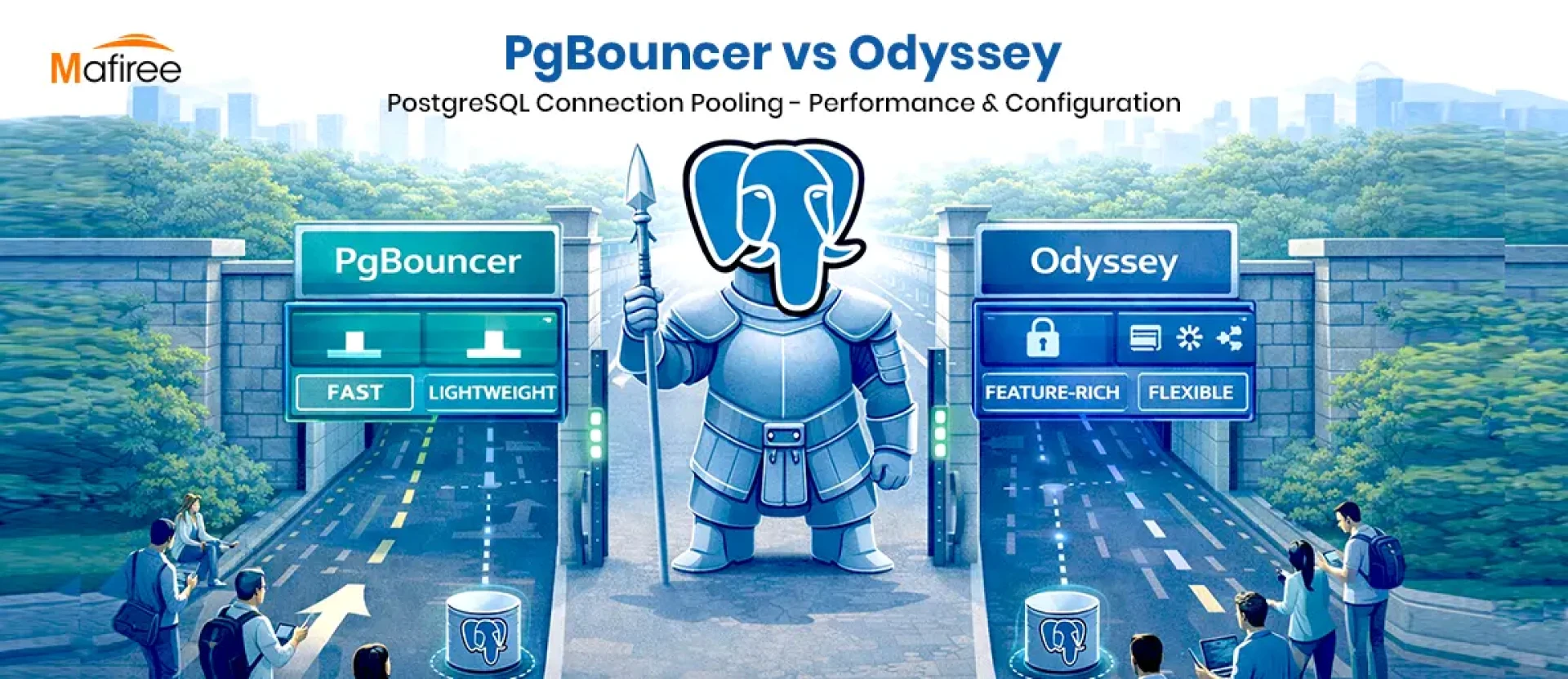

Have you ever wondered how to improve PostgreSQL performance under heavy load? The process-per-connection mechanism
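PostgreSQL serves each client connection with its own backend process, so a flood of connections becomes a flood of processes. A common mitigation is connection pooling: many clients share a small, bounded set of connections. The sketch below illustrates that idea in plain Python under stated assumptions. `BoundedPool` and `connection()` are hypothetical names, not a real library API, and `connect` stands in for any factory that opens a database connection. Production deployments typically rely on a dedicated pooler such as PgBouncer instead.

```python
"""Minimal bounded connection pool sketch (hypothetical, for illustration only).

Assumption: `connect` is any zero-argument factory returning a connection-like
object, for example a wrapper around a PostgreSQL driver's connect call.
"""
import queue
import threading
from contextlib import contextmanager
from typing import Callable, Generic, Iterator, Optional, TypeVar

T = TypeVar("T")


class BoundedPool(Generic[T]):
    """Reuse at most `max_size` connections instead of opening one per request."""

    def __init__(self, connect: Callable[[], T], max_size: int) -> None:
        if max_size < 1:
            raise ValueError("max_size must be at least 1")
        self._connect = connect
        self._idle: "queue.LifoQueue[T]" = queue.LifoQueue()  # idle connections
        self._slots = threading.BoundedSemaphore(max_size)  # caps open connections
        self._lock = threading.Lock()
        self.created = 0  # how many real connections were ever opened

    @contextmanager
    def connection(self, timeout: Optional[float] = None) -> Iterator[T]:
        # Wait for one of the max_size slots to free up (or give up after timeout).
        if not self._slots.acquire(timeout=timeout):
            raise TimeoutError("no pooled connection available")
        try:
            try:
                conn = self._idle.get_nowait()  # reuse an idle connection if any
            except queue.Empty:
                conn = self._connect()  # otherwise open a new one
                with self._lock:
                    self.created += 1
            try:
                yield conn
            finally:
                # Return the connection for reuse instead of closing it.
                # A real pool would also discard connections that became broken.
                self._idle.put(conn)
        finally:
            self._slots.release()
```

Because a slot is released only after its connection is back in the idle queue, the number of open connections never exceeds `max_size`, no matter how many callers are waiting.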

In an era where data fuels innovation, every business, whether in eCommerce or FinTech, needs efficient, high-performing database management services.