Introduction

Data lakes promise flexibility and scale, but many organizations hit a wall when performance starts to degrade. Queries slow down, dashboards lag, and teams lose trust in the data. What begins as a centralized analytics platform often turns into a bottleneck when not optimized properly.

Trino has become a popular query engine for modern data lakes because it can run fast, interactive SQL across multiple data sources. Yet simply deploying Trino does not guarantee performance. Without the right configuration, architecture, and query design, even Trino-based systems can struggle.

This post explores how to identify and fix common data lake performance issues with Trino, along with practical strategies used by experienced teams in production environments.

Understanding Data Lake Performance Challenges

Why Performance Issues Occur

Data lakes are designed to store vast volumes of structured and unstructured data. Over time, this scale introduces challenges such as:

- Fragmented data across multiple storage systems

- Poorly optimized file formats

- Inefficient query patterns

- Metadata overload

These issues often compound, leading to slow query execution and higher compute costs.

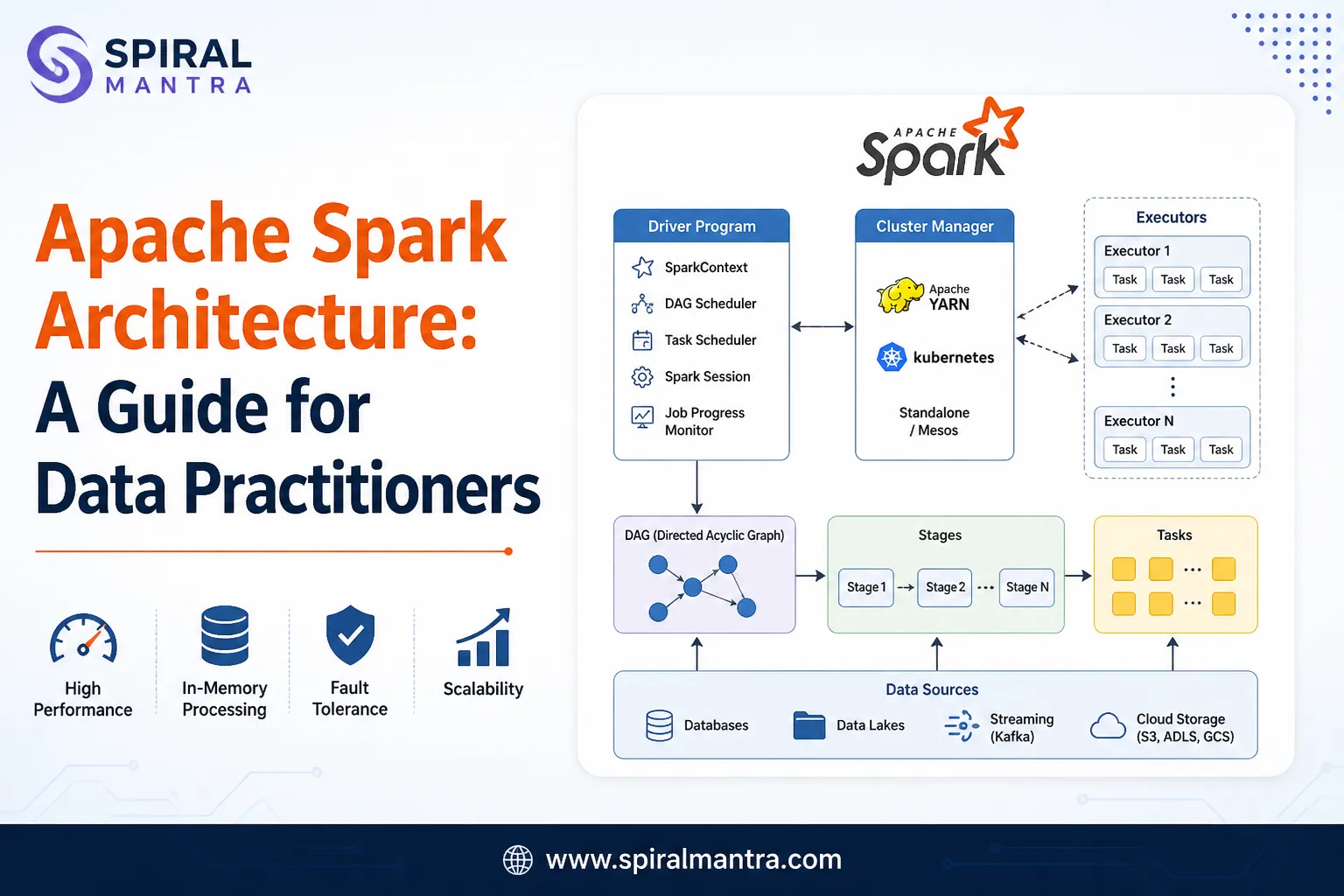

The Role of Trino

Trino is a distributed SQL query engine that connects to multiple data sources. It lets users query data where it lives, which avoids the delay and cost of copying it into a separate system and simplifies analytics workflows.

However, Trino performance depends heavily on how data is stored, accessed, and queried.

Common Data Lake Performance Issues

1. Small File Problem

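Large datasets broken into thousands of small files can slow down query execution. Each file adds fixed overhead during scanning: the engine must list it, open it, read its metadata, and schedule work for it, regardless of how little data it holds.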

Impact:

- Increased query latency

- Higher resource consumption
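
A rough cost model makes the effect of file count concrete. The sketch below assumes a fixed per-file overhead plus a throughput-bound transfer time; the function name, the 50 ms overhead, and the 200 MiB/s throughput are illustrative assumptions, not measured Trino figures.

```python
def estimated_scan_seconds(total_bytes, file_count,
                           per_file_overhead_s=0.05,
                           throughput_bytes_per_s=200 * 1024**2):
    """Rough single-reader model: fixed overhead per file plus transfer time.

    Both default values are assumptions chosen for illustration only.
    """
    transfer = total_bytes / throughput_bytes_per_s
    overhead = file_count * per_file_overhead_s
    return transfer + overhead


ONE_GIB = 1024**3

# Same 10 GiB of data, stored as many small files or as a few large ones.
many_small = estimated_scan_seconds(10 * ONE_GIB, file_count=100_000)
few_large = estimated_scan_seconds(10 * ONE_GIB, file_count=40)

print(f"100,000 small files: {many_small:.1f} s")
print(f"40 large files:      {few_large:.1f} s")
```

In this model the transfer time is identical in both cases, so the entire difference comes from per-file overhead. Real clusters parallelize reads across workers, which shrinks the absolute numbers but not the underlying ratio.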

2. Inefficient Data Formats

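Row-oriented text formats like CSV or JSON are slower to process than columnar formats. Every row must be parsed in full even when a query needs only a few columns, and the files carry no column statistics that would let the engine skip irrelevant data.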

Better alternative:

- Parquet

- ORC
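
The difference in bytes read can be illustrated without any Parquet library. The sketch below is a deliberately simplified model using only the Python standard library: it compares the bytes a reader must touch to sum one column in a CSV blob against a layout where each column is stored separately. The table and column names are made up for the example, and real columnar formats add encoding, compression, and statistics on top of this.

```python
import csv
import io

# Hypothetical orders table with one wide text column.
rows = [
    {"order_id": str(i), "customer": f"customer-{i}",
     "notes": "x" * 200, "amount": str(i * 1.5)}
    for i in range(1000)
]

# Row-oriented: a single CSV blob. Reaching "amount" means parsing every byte.
buf = io.StringIO()
writer = csv.DictWriter(buf, fieldnames=list(rows[0]))
writer.writeheader()
writer.writerows(rows)
row_bytes_read = len(buf.getvalue().encode("utf-8"))

# Column-oriented: values of each column are stored together,
# so a reader fetches only the "amount" column.
columns = {name: [r[name] for r in rows] for name in rows[0]}
column_bytes_read = sum(len(v.encode("utf-8")) for v in columns["amount"])

total = sum(float(v) for v in columns["amount"])

print(f"row layout bytes read:    {row_bytes_read}")
print(f"column layout bytes read: {column_bytes_read}")
print(f"sum(amount) = {total}")
```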

3. Poor Partitioning Strategy

Improper partitioning can result in scanning unnecessary data.

Example:

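If most queries filter on a date range, partitioning by a low-cardinality column such as status instead of date means the engine cannot skip any partitions, so every query still scans the full history.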
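
A small model shows how much a mismatched partition key costs. The sketch below assumes one hypothetical file per day and status combination and counts how many files a date-filtered query has to open under each partitioning choice; the function and column names are invented for illustration.

```python
from datetime import date, timedelta
from itertools import product

days = [date(2024, 1, 1) + timedelta(days=n) for n in range(30)]
statuses = ["open", "closed", "cancelled"]

# One hypothetical data file per (day, status) combination: 90 files in total.
files = [{"event_date": d, "status": s} for d, s in product(days, statuses)]


def files_scanned(files, partition_column, predicate_column, predicate_value):
    """Count files a reader must open when only partition_column can be pruned."""
    if partition_column == predicate_column:
        # The filter matches the partition key: non-matching partitions are skipped.
        return sum(1 for f in files if f[partition_column] == predicate_value)
    # The filter is on a non-partition column: every partition must be scanned.
    return len(files)


target = date(2024, 1, 15)
by_date = files_scanned(files, "event_date", "event_date", target)
by_status = files_scanned(files, "status", "event_date", target)

print(f"partitioned by date:   {by_date} files scanned")
print(f"partitioned by status: {by_status} files scanned")
```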

4. Lack of Query Optimization

Unoptimized queries often scan more data than required.

Common mistakes:

- Selecting all columns instead of required ones

- Missing filters

- Inefficient joins
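
Some of these mistakes can be caught before a query ever reaches the cluster. The sketch below is a hypothetical heuristic checker, not part of Trino: it flags a few textual patterns with regular expressions and will miss or misjudge complex SQL, so it complements rather than replaces reading the actual query plan.

```python
import re


def lint_query(sql):
    """Return warnings for textual patterns that tend to over-scan data.

    Purely heuristic: it looks at the SQL text, not at the query plan.
    """
    warnings = []
    normalized = " ".join(sql.split()).lower()
    if re.search(r"\bselect\s+\*", normalized):
        warnings.append("selects all columns")
    if not re.search(r"\bwhere\b", normalized):
        warnings.append("has no WHERE filter")
    if re.search(r"\bcross\s+join\b", normalized):
        warnings.append("uses CROSS JOIN")
    return warnings


# The table and column names below are examples only.
risky = "SELECT * FROM orders"
better = ("SELECT order_id, amount FROM orders "
          "WHERE order_date = DATE '2024-01-15'")

print(lint_query(risky))
print(lint_query(better))
```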

Best Practices to Improve Trino Performance

Optimize File Formats and Storage

Switching to columnar formats like Parquet or ORC can significantly improve performance.

Benefits:

- Faster query execution

- Reduced storage costs

- Better compression

Case Insight:

A retail company reduced query time by 40 percent after converting raw JSON data into Parquet format.

Implement Effective Partitioning

Partitioning should align with query patterns.

Best practices:

- Partition by frequently filtered columns

- Avoid over-partitioning

- Combine partitioning with bucketing if needed
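
As one way to apply these practices, the sketch below renders a CREATE TABLE statement that uses the Hive connector table properties `format`, `partitioned_by`, `bucketed_by`, and `bucket_count`. The helper function, the table name, the columns, and the bucket count of 32 are assumptions for illustration; other connectors, such as Iceberg, use different properties.

```python
def render_hive_ddl(table, columns, partitioned_by, bucketed_by=(), bucket_count=None):
    """Render a CREATE TABLE statement with Hive connector table properties.

    columns is a list of (name, sql_type) pairs. This helper is hypothetical;
    only the property names come from the Hive connector.
    """
    if bucketed_by and bucket_count is None:
        raise ValueError("bucket_count is required when bucketed_by is set")

    # The Hive connector expects partition columns to be listed last.
    regular = [c for c in columns if c[0] not in partitioned_by]
    partition = sorted((c for c in columns if c[0] in partitioned_by),
                       key=lambda c: partitioned_by.index(c[0]))
    column_sql = ",\n  ".join(f"{name} {sql_type}" for name, sql_type in regular + partition)

    def array(names):
        return "ARRAY[" + ", ".join(f"'{n}'" for n in names) + "]"

    props = ["format = 'PARQUET'", f"partitioned_by = {array(partitioned_by)}"]
    if bucketed_by:
        props.append(f"bucketed_by = {array(bucketed_by)}")
        props.append(f"bucket_count = {bucket_count}")
    return (f"CREATE TABLE {table} (\n  {column_sql}\n)\n"
            "WITH (\n  " + ",\n  ".join(props) + "\n)")


ddl = render_hive_ddl(
    "hive.analytics.events",                      # hypothetical catalog.schema.table
    [("event_date", "date"), ("user_id", "bigint"), ("payload", "varchar")],
    partitioned_by=["event_date"],                # matches the most common filter
    bucketed_by=["user_id"],                      # spreads rows within a partition
    bucket_count=32,                              # illustrative value
)
print(ddl)
```

Partitioning by the date column lets date filters skip whole directories, while bucketing on a high-cardinality column keeps the partition count manageable.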

Use Data Compaction

Compacting small files into larger ones reduces overhead.

Approach:

- Schedule compaction jobs regularly

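- Keep files large enough to amortize per-file overhead but small enough to read in parallel (targets in the hundreds of megabytes are common)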
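
The planning step of a compaction job can be sketched as a simple grouping problem. The example below greedily groups small files into batches no larger than a target size; the function name and the 128 MiB target are assumptions, and a real job would also rewrite the data and commit the change atomically.

```python
MIB = 1024**2


def plan_compaction(file_sizes, target_bytes):
    """Greedily group small files into batches of at most target_bytes each.

    Files already at or above the target are left alone, and batches holding
    a single file are dropped because rewriting them gains nothing.
    """
    batches, current, current_size = [], [], 0
    for size in sorted(file_sizes, reverse=True):
        if size >= target_bytes:
            continue  # already large enough
        if current and current_size + size > target_bytes:
            batches.append(current)
            current, current_size = [], 0
        current.append(size)
        current_size += size
    if current:
        batches.append(current)
    return [b for b in batches if len(b) > 1]


# 100 files of 4 MiB each plus one file that is already large.
sizes = [4 * MIB] * 100 + [256 * MIB]
batches = plan_compaction(sizes, target_bytes=128 * MIB)

for i, batch in enumerate(batches):
    print(f"batch {i}: {len(batch)} files, {sum(batch) // MIB} MiB")
```

In practice this planning is usually delegated to the table format. For Iceberg tables, for instance, Trino exposes compaction through `ALTER TABLE ... EXECUTE optimize`.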

Tune Trino Configuration

Proper configuration is essential for performance.

Key areas to optimize:

- Memory allocation

- Query concurrency limits

- Worker node scaling
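
For memory allocation, the two settings most teams start with are `query.max-memory-per-node` and `query.max-memory`. The sketch below derives illustrative values from worker heap size and worker count; the helper function and the 0.5 fraction are assumptions, and suitable values depend on the workload and should be checked against the Trino documentation.

```python
def render_memory_config(worker_heap_gb, worker_count, per_node_fraction=0.5):
    """Derive illustrative Trino memory limits from worker heap and count.

    per_node_fraction is an assumed share of the JVM heap that a single
    query may use on one worker; the rest is left as headroom.
    """
    per_node_gb = int(worker_heap_gb * per_node_fraction)
    cluster_gb = per_node_gb * worker_count
    return "\n".join([
        f"query.max-memory-per-node={per_node_gb}GB",
        f"query.max-memory={cluster_gb}GB",
    ])


# Example: 10 workers with a 64 GB heap each.
config_fragment = render_memory_config(worker_heap_gb=64, worker_count=10)
print(config_fragment)
```

The output is a fragment for `config.properties`. Setting the per-node limit too high risks workers running out of memory, while setting it too low causes large queries to fail early.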

Organizations often rely on end-to-end Trino support services to fine-tune these configurations based on workload requirements.

Optimize Queries

Even with the best infrastructure, poorly written queries can slow everything down.

Tips:

- Use selective filters

- Avoid unnecessary joins

- Limit result sets

- Use approximate aggregate functions such as approx_distinct or approx_percentile when exact results are not required
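
To show why approximate aggregates are cheaper, the sketch below estimates a distinct count while keeping only a small, fixed amount of state. It uses a K-minimum-values sketch, a simpler technique than the HyperLogLog algorithm behind Trino's `approx_distinct`, so it illustrates the idea rather than Trino's implementation. The function name and the choice of k are assumptions.

```python
import hashlib
import heapq


def approx_distinct_kmv(values, k=1024):
    """Estimate the number of distinct values with a K-minimum-values sketch.

    Each value is hashed to a number in [0, 1). Only the k smallest distinct
    hashes are kept, so memory stays O(k) regardless of input size.
    """
    smallest = []    # max-heap (negated values) holding the k smallest hashes
    members = set()  # the same hashes, for duplicate detection
    for value in values:
        digest = hashlib.sha1(str(value).encode("utf-8")).digest()
        h = int.from_bytes(digest[:8], "big") / 2**64
        if h in members:
            continue
        if len(smallest) < k:
            heapq.heappush(smallest, -h)
            members.add(h)
        elif h < -smallest[0]:
            evicted = -heapq.heappushpop(smallest, -h)
            members.discard(evicted)
            members.add(h)
    if len(smallest) < k:
        return len(smallest)  # fewer than k distinct values: the count is exact
    # The k-th smallest hash shrinks as the distinct count grows.
    return int((k - 1) / -smallest[0])


# 200,000 rows containing 50,000 distinct user ids.
user_ids = [i % 50_000 for i in range(200_000)]
estimate = approx_distinct_kmv(user_ids)
print(f"estimated distinct users: {estimate}")
```

An exact count must remember every distinct value it has seen, while the sketch keeps at most k hashes. The trade-off is a small relative error, which is usually acceptable for dashboards and exploratory analysis.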

Architecture Considerations for High Performance

Separate Storage and Compute

Modern architectures decouple storage from compute, allowing independent scaling.

Advantages:

- Cost efficiency

- Better performance control

Use Caching Mechanisms

Caching frequently accessed data can reduce query time.

Examples:

- In-memory caching

- Result caching
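
Result caching can also live in the application layer in front of the query engine. The sketch below is a minimal time-to-live cache keyed by SQL text; the class, the `run_query` callable, and the 300 second default are hypothetical and not a Trino feature. It is only appropriate when slightly stale results are acceptable.

```python
import time


class ResultCache:
    """Tiny TTL cache keyed by SQL text, wrapping any query-running callable."""

    def __init__(self, run_query, ttl_seconds=300, clock=time.monotonic):
        self._run_query = run_query   # hypothetical function: sql -> rows
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}            # sql -> (stored_at, rows)

    def execute(self, sql):
        key = sql.strip()
        now = self._clock()
        hit = self._entries.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]             # fresh enough: skip the engine entirely
        rows = self._run_query(sql)
        self._entries[key] = (now, rows)
        return rows


# Usage with a stand-in for a real client call.
executed = []


def fake_run_query(sql):
    executed.append(sql)
    return [(42,)]


cache = ResultCache(fake_run_query, ttl_seconds=60)
cache.execute("SELECT count(*) FROM orders")
cache.execute("SELECT count(*) FROM orders")  # served from the cache
print(f"queries sent to the engine: {len(executed)}")
```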

Leverage Metadata Optimization

Metadata plays a critical role in query planning.

Best practices:

- Use table formats like Iceberg or Delta Lake

- Maintain clean metadata

- Regularly update statistics, for example by running ANALYZE on tables whose data has changed significantly
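
One reason metadata matters is that per-file statistics let the planner skip files without opening them. The sketch below models that idea with minimum and maximum values per file; the class, function, and file names are invented for illustration and do not reflect any table format's actual metadata layout.

```python
from dataclasses import dataclass


@dataclass
class FileStats:
    """Hypothetical per-file metadata for one column."""
    path: str
    min_value: int
    max_value: int


def files_to_read(files, lower, upper):
    """Keep only files whose [min, max] range overlaps the filter range."""
    return [f.path for f in files
            if f.max_value >= lower and f.min_value <= upper]


manifest = [
    FileStats("part-0.parquet", 1, 1000),
    FileStats("part-1.parquet", 1001, 2000),
    FileStats("part-2.parquet", 2001, 3000),
]

# A filter such as: WHERE order_id BETWEEN 1500 AND 1600
selected = files_to_read(manifest, lower=1500, upper=1600)
print(selected)
```

Skipping works best when data is sorted or clustered on the filtered column. If every file spans the full value range, the statistics cannot rule anything out.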

Real-World Use Case

A global SaaS company faced slow dashboard performance due to inefficient data lake queries.

Challenges:

- Large number of small files

- Unoptimized queries

- Lack of partitioning

Solution:

- Migrated data to Parquet format

- Implemented partitioning by date

- Tuned Trino cluster configuration

Results:

- 50 percent faster query execution

- Improved dashboard responsiveness

- Reduced infrastructure costs

Trino vs Traditional Query Engines

| Feature | Trino | Traditional Engines |
| --- | --- | --- |
| Query Speed | High | Moderate |
| Data Source Integration | Multiple | Limited |
| Scalability | Strong | Moderate |
| Flexibility | High | Lower |

Trino stands out for its ability to query across distributed data sources efficiently, making it ideal for modern data lake environments.

When to Seek Expert Support

While many optimizations can be implemented internally, complex environments often require expert guidance.

Signs You Need Help

- Persistent slow queries

- Increasing infrastructure costs

- Difficulty scaling workloads

- Complex multi-source integrations

Value of Expert Support

- Tailored optimization strategies

- Faster issue resolution

- Improved system reliability

- Ongoing performance monitoring

Conclusion

Data lake performance issues can distort business decisions, slow down analytics, and increase operational costs. Trino offers a powerful solution, but achieving good performance requires the right combination of architecture, data design, and query optimization.

From addressing small file challenges to tuning configurations and improving query efficiency, each step plays a critical role in building a high-performing data ecosystem.

Organizations that invest in expert guidance can unlock the full potential of their data platforms. Partnering with an experienced Trino support services provider can help keep your data lake fast, scalable, and ready to support growing business demands.
