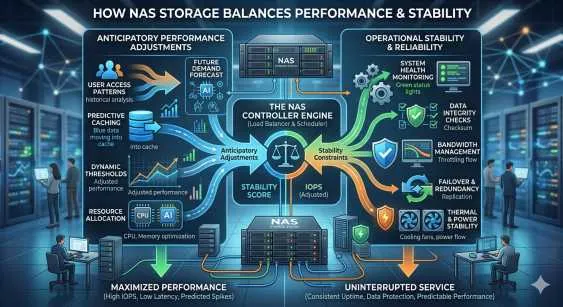

Modern enterprise environments demand data infrastructure that can dynamically scale resources while maintaining absolute reliability. IT architects frequently encounter a central tension: deploying aggressive, predictive optimization algorithms can sometimes introduce systemic risks, threatening the baseline uptime required by critical applications. Addressing this challenge requires sophisticated architecture capable of optimizing data pathways without compromising fault tolerance.

At the core of this balance is NAS storage. Historically viewed as simple file-level repositories, modern NAS systems have evolved into far more capable platforms. They use machine learning and heuristics to anticipate I/O demand, pre-fetching data into high-speed caches before an application explicitly requests it. When the predictions are accurate, this anticipatory approach reduces latency and improves throughput for data-heavy operations.

However, anticipating workload spikes is only half the equation. The infrastructure must also guarantee high availability and data integrity, and this is where well-designed network storage solutions prove their value. By isolating the predictive adjustment mechanisms from the foundational storage protocols, these systems ensure that a miscalculated performance adjustment does not cascade into a system-wide failure. The result is an environment that adapts to real-time demands while preserving operational stability.

The Mechanics of Anticipatory Performance Adjustments

To understand how modern architectures balance these competing priorities, it is necessary to examine the mechanisms driving performance optimization. Anticipatory adjustments rely on continuous telemetry data, analyzing read/write patterns, queue depths, and historical access frequencies.

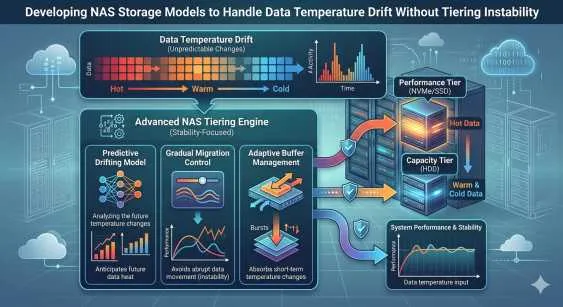

Predictive Caching and Automated Tiering

When a system detects a recognizable pattern—such as a specific database executing a heavy read operation at the same time every day—the storage controller proactively moves the required blocks from slower capacity drives to high-speed NVMe or SSD tiers. This automated storage tiering prevents bottlenecks during peak utilization phases.
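The pattern detection described above can be sketched roughly as follows. This is a minimal illustration, not any vendor's algorithm: the class `RecurringAccessPredictor`, the tier labels, and the thresholds `min_days` and `min_reads` are all hypothetical names chosen for the example. It flags an extent as "predictably hot" when it was read heavily in the same hour on several distinct days, then plans a promotion to the flash tier.

```python
from collections import defaultdict

# Hypothetical tier labels; real arrays expose their own tier names.
CAPACITY, FLASH = "capacity", "flash"


class RecurringAccessPredictor:
    """Flags extents read heavily in the same hour on several distinct days."""

    def __init__(self, min_days=3, min_reads=100):
        self.min_days = min_days    # distinct days needed to call it a pattern
        self.min_reads = min_reads  # reads within one hour that count as heavy
        # (extent_id, hour) -> {day: read_count}
        self._reads = defaultdict(lambda: defaultdict(int))

    def record_read(self, extent_id, day, hour, count=1):
        self._reads[(extent_id, hour)][day] += count

    def extents_to_promote(self, upcoming_hour):
        """Return extents expected to be hot during upcoming_hour."""
        hot = []
        for (extent_id, hour), per_day in self._reads.items():
            if hour != upcoming_hour:
                continue
            heavy_days = sum(1 for c in per_day.values() if c >= self.min_reads)
            if heavy_days >= self.min_days:
                hot.append(extent_id)
        return sorted(hot)


def plan_promotions(predictor, placement, upcoming_hour):
    """Build (extent_id, target_tier) moves without mutating placement."""
    return [
        (extent_id, FLASH)
        for extent_id in predictor.extents_to_promote(upcoming_hour)
        if placement.get(extent_id) == CAPACITY
    ]
```

Requiring several distinct days before acting is a deliberately conservative choice: a single large read burst does not trigger a migration, which keeps one-off events from churning the flash tier.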

Because network storage solutions handle multi-protocol traffic, these caching algorithms must operate across varying file types and access methods. The intelligence layer monitors each protocol and shifts resources accordingly. If a predictive model misinterprets a data spike, the system must gracefully degrade the caching operation, for example by scaling back or suspending pre-fetching, rather than locking up the storage array. The algorithms are designed to prioritize controller stability over aggressive, high-risk caching maneuvers.
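One way to picture graceful degradation is a small governor that watches how often pre-fetched blocks are actually used and switches speculation off when predictions mostly miss. The sketch below is an assumption about how such a safeguard could look; `PrefetchGovernor`, `window`, and `min_hit_ratio` are illustrative names, not part of any real controller firmware.

```python
from collections import deque


class PrefetchGovernor:
    """Disables speculative prefetching when recent predictions mostly miss."""

    def __init__(self, window=50, min_hit_ratio=0.3):
        self.min_hit_ratio = min_hit_ratio
        # Rolling record of outcomes; True means the prefetched block was used.
        self._outcomes = deque(maxlen=window)

    def record(self, prefetched_block_was_used):
        self._outcomes.append(bool(prefetched_block_was_used))

    @property
    def hit_ratio(self):
        if not self._outcomes:
            return 1.0  # no evidence yet, so do not penalise the model
        return sum(self._outcomes) / len(self._outcomes)

    def prefetch_allowed(self):
        # Require a full window before judging, to avoid reacting to noise.
        if len(self._outcomes) < self._outcomes.maxlen:
            return True
        return self.hit_ratio >= self.min_hit_ratio
```

When `prefetch_allowed()` returns `False`, the array in this sketch would simply serve reads on demand. Performance falls back to the non-predictive baseline, but nothing blocks or locks up.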

Load Balancing Across Distributed Nodes

Another aspect of anticipatory adjustment is dynamic load balancing. As traffic increases on a specific network interface, the storage system can preemptively route incoming requests to underutilized nodes, which prevents any single point of ingress from becoming overwhelmed. NAS storage systems typically manage this with virtual IP addresses and DNS-based routing, so the application layer remains unaware of the backend resource shifting.
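As a rough sketch of the routing decision, the function below picks the least-utilized node for the next incoming connection. The node names, the utilization figures, and the `saturation_threshold` parameter are assumptions made for illustration; a real system would feed this from live interface telemetry and then repoint a virtual IP or DNS answer at the chosen node.

```python
def pick_node(utilization, saturation_threshold=0.8):
    """Return the node that should receive the next client connection.

    utilization maps node name -> fraction of interface bandwidth in use
    (0.0 to 1.0). Nodes at or above the threshold are avoided when possible.
    """
    if not utilization:
        raise ValueError("no storage nodes available")
    healthy = {n: u for n, u in utilization.items() if u < saturation_threshold}
    candidates = healthy or utilization  # fall back if every node is busy
    # Tie-break on the node name so the choice is deterministic.
    return min(candidates, key=lambda n: (candidates[n], n))
```

For example, `pick_node({"node-a": 0.92, "node-b": 0.35, "node-c": 0.60})` selects `"node-b"`. If every node is above the threshold, the function still returns the least-loaded one rather than refusing the request.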

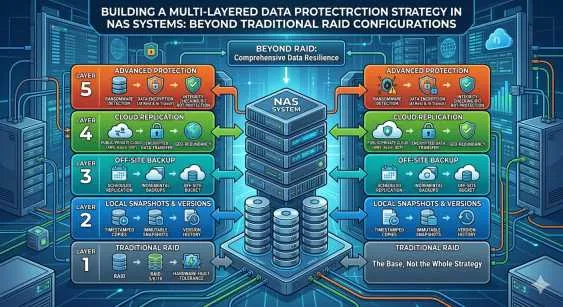

Sustaining Operational Stability

While predictive algorithms enhance speed, operational stability remains the primary mandate for enterprise data centers. Stability requires redundant hardware, rigorous snapshotting schedules, and protocol-level safeguards that protect data integrity during transmission.

Fault Tolerance and Redundancy

A core component of stability is the use of active-active storage controllers. If one controller experiences a hardware fault or becomes unresponsive due to a computationally heavy predictive task, its partner assumes the workload. Network storage solutions use multipath I/O configurations to keep the data pathways open during these failover events: I/O that fails on one path is reissued on a surviving path, so the host sees a brief delay rather than a failed request.
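The failover behaviour can be illustrated with a toy multipath submitter. This is a simplified model under stated assumptions: each path is represented as a `(name, send)` pair where `send` is a hypothetical callable that either returns an acknowledgment or raises `PathDown`. Real multipath stacks live in the host operating system and handle timeouts, path health checks, and queuing that are omitted here.

```python
class PathDown(Exception):
    """Raised by a path when its controller cannot service the request."""


def submit_io(request, paths):
    """Try each path in order; return (path_name, result) from the first success.

    paths is a list of (name, send) pairs, where send(request) either returns
    an acknowledgment or raises PathDown.
    """
    errors = []
    for name, send in paths:
        try:
            return name, send(request)
        except PathDown as exc:
            # Remember why this path failed, then fall through to the next one.
            errors.append(f"{name}: {exc}")
    raise PathDown("all paths failed: " + "; ".join(errors))
```

The caller only sees an error when every path has failed, which mirrors the idea that a single controller fault should be invisible to the application beyond added latency.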

iSCSI NAS Integration for Block-Level Consistency

Many enterprise applications, such as virtualization hypervisors and transactional databases, require block-level access rather than file-level protocols like NFS or SMB. Integrating iSCSI into a NAS platform bridges this gap. By provisioning logical unit numbers (LUNs) over the IP network, organizations get SAN-style block access from the same unified system that serves their file shares.

iSCSI supports operational stability because SCSI commands complete only when the target returns a status for them: a host treats a write as done once the target has acknowledged it, not before. The predictive algorithms discussed earlier operate behind this protocol boundary. Even as the system anticipates LUN utilization and moves blocks to faster tiers in the background, the iSCSI target continues to present the same volumes to the host over a stable connection. This separation of background optimization from frontend protocol presentation is vital for preventing data corruption.

Synergizing Agility with Uptime

The true measure of a storage platform is not just how fast it operates, but how it manages internal conflicts between speed and safety. High-end NAS storage resolves this through strict resource partitioning: the CPU and memory dedicated to predictive analytics are isolated from the compute resources required for basic read/write operations and protocol management.

If the machine learning model experiences a processing spike while calculating a complex tiering schedule, it hits a hard resource ceiling. The storage system is programmed to prioritize incoming client requests over background optimization, so internal housekeeping should not cause timeouts or latency spikes at the application layer. This fixed hierarchy of operations means that anticipatory adjustments enhance the environment without endangering baseline stability.
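A compact way to model this hierarchy is a priority queue in which client I/O always dispatches first and background work is capped per scheduling cycle. The sketch below is illustrative only: `IoScheduler`, the two priority classes, and `background_budget_per_cycle` are assumed names, and a production controller would enforce the ceiling with CPU and memory quotas rather than a simple task count.

```python
import heapq
import itertools

CLIENT_IO, BACKGROUND = 0, 1  # lower number = higher priority


class IoScheduler:
    """Dispatches client I/O first and caps background work per cycle."""

    def __init__(self, background_budget_per_cycle=2):
        self._queue = []
        self._seq = itertools.count()  # tie-breaker keeps FIFO order per class
        self.background_budget = background_budget_per_cycle

    def submit(self, priority, task_name):
        heapq.heappush(self._queue, (priority, next(self._seq), task_name))

    def run_cycle(self):
        """Dispatch all client I/O, then background work up to the budget."""
        dispatched, background_used = [], 0
        while self._queue:
            priority, _, task_name = self._queue[0]
            if priority == BACKGROUND and background_used >= self.background_budget:
                break  # hard ceiling reached; leftover work waits for next cycle
            heapq.heappop(self._queue)
            dispatched.append(task_name)
            if priority == BACKGROUND:
                background_used += 1
        return dispatched
```

With a budget of one, submitting two tiering tasks followed by two client requests yields the client requests first and a single tiering task in the first cycle; the second tiering task runs in the next cycle.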

Frequently Asked Questions

How does predictive caching impact the lifespan of solid-state drives?

Anticipatory algorithms are designed to minimize unnecessary write operations. By analyzing access patterns before moving blocks, NAS storage systems avoid promoting data that is unlikely to be reused, which limits the extra writes that tiering adds to the flash tier and helps preserve the endurance of SSD and NVMe components.

Can block-level and file-level protocols run simultaneously without degrading performance?

Yes. Modern network storage solutions use multi-core processors and segmented caching to handle heterogeneous traffic. On platforms that expose this control, administrators can assign specific CPU threads to file-sharing traffic while dedicating other resources to block-level requests.

What happens if the network connection drops during a data transfer?

With iSCSI, the initiator and target maintain a session. If the connection drops, commands that were never acknowledged are not treated as complete; the initiator waits for the path to be re-established (or fails over to another path) and retries them, which prevents a half-finished write from being reported as successful.

Are machine learning models required for automated tiering?

Not necessarily. Basic automated tiering relies on simple threshold policies (e.g., moving data that hasn't been accessed in 30 days). Advanced network storage solutions add machine learning to identify complex, non-linear usage patterns, which can yield higher cache hit ratios than fixed thresholds.
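For comparison, a threshold policy of the kind mentioned in this answer needs no model at all. The following sketch assumes a hypothetical mapping of object names to last-access timestamps and uses the 30-day figure from the example above as its default.

```python
from datetime import datetime, timedelta


def select_for_demotion(last_access, now, max_idle=timedelta(days=30)):
    """Return names of objects idle for longer than max_idle, sorted by name.

    last_access maps object name -> datetime of its most recent access.
    """
    return sorted(name for name, ts in last_access.items() if now - ts > max_idle)
```

A rule like this is easy to reason about and audit, but it reacts only after data has gone cold and cannot anticipate a recurring spike, which is the gap the learned approaches aim to close.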

Is it possible to disable anticipatory adjustments for sensitive workloads?

Yes. Administrators managing an iSCSI NAS environment can pin specific volumes or LUNs to a designated storage tier. This prevents the system from migrating the data, giving latency-sensitive databases predictable, consistent latency.
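Conceptually, pinning acts as a filter on whatever the tiering engine proposes. The sketch below assumes a hypothetical list of planned `(volume, target_tier)` moves and a dictionary of administrator pins; actual platforms expose pinning through their own management interfaces, which are not shown here.

```python
def apply_pinning(planned_moves, pinned):
    """Drop any planned move that would relocate a pinned volume.

    planned_moves: list of (volume, target_tier) tuples from the tiering engine.
    pinned: dict mapping volume -> tier the administrator fixed it to.
    """
    return [(vol, tier) for vol, tier in planned_moves if vol not in pinned]
```

For instance, with `pinned = {"lun-sql": "flash"}`, a proposal to move `lun-sql` to the capacity tier is discarded while moves for unpinned volumes pass through unchanged.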

Strategic Implementation for Future Workloads

Deploying infrastructure that correctly handles both dynamic performance scaling and unyielding reliability requires rigorous planning. IT leaders must evaluate their specific workload characteristics, identifying which applications benefit from aggressive pre-fetching and which require rigid, guaranteed access latency.

By leveraging intelligent NAS storage, organizations can build a foundation that adapts to unexpected traffic spikes without manual intervention. Understanding the technical boundaries of protocols like iSCSI ensures that these optimization features enhance the data center rather than introducing instability. Ultimately, successful deployment of these architectures relies on choosing platforms that prioritize data integrity and continuous uptime above all other metrics.
