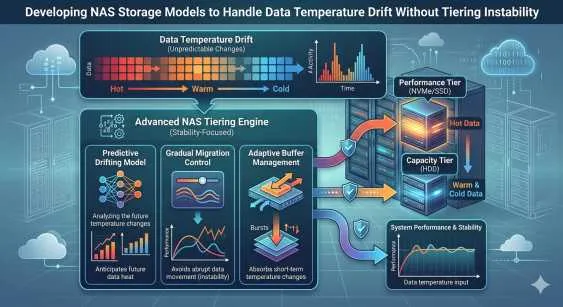

Enterprise data is rarely static. Over time, the frequency with which files are accessed changes continuously, a phenomenon known in the engineering sector as data temperature drift. Hot data requires immediate, high-performance access and typically resides on expensive NVMe or SSD drives. Conversely, cold data can safely reside on slower, cost-effective spinning disks or object storage. Managing this transition efficiently is a primary architectural challenge for modern IT infrastructure.

Traditionally, storage tiering algorithms move data between performance tiers based on rigid access thresholds. However, sudden shifts in workload patterns can easily trigger tiering instability. This phenomenon occurs when data is constantly migrated back and forth between performance tiers, consuming valuable processing cycles, congesting network bandwidth, and ultimately degrading overall system performance.

Resolving this instability requires a fundamental shift in how a NAS Appliance processes and evaluates data access patterns. By implementing predictive analytics and machine learning at the storage controller level, engineers can develop sophisticated models that accommodate temperature drift without overwhelming the system. This post examines methodologies for stabilizing tiering mechanisms while ensuring high data availability, robust performance, and stringent security compliance.

Understanding Data Temperature Drift in NAS Storage

Data temperature drift is an inevitable aspect of the enterprise data lifecycle. A project file might be accessed hundreds of times a day during active development, classifying it as strictly hot data. Weeks later, after a project concludes, that same file may only be read once a month. Standard NAS Storage arrays utilize policy-based tiering to push this cooling data to lower-cost drives, optimizing the return on hardware investments.

The engineering problem arises when cold data is suddenly requested again, perhaps during a compliance audit, a legal discovery process, or a system-wide search. The storage controller often overreacts by promoting the data back to the hot tier immediately. If a large batch of files constantly fluctuates in access frequency, the resulting input/output (I/O) penalty from moving data physically between disks drastically outstrips the cost savings of tiering. This thrashing effect heavily limits the throughput and latency consistency of the entire NAS Storage environment.

The Financial and Operational Impact

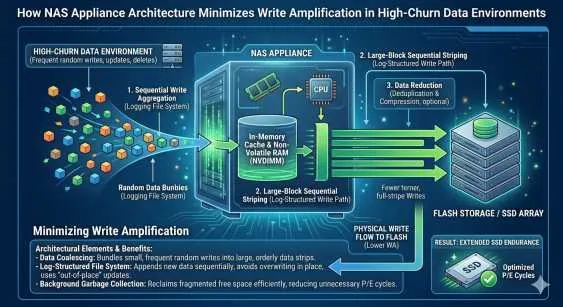

Tiering instability does more than reduce application performance. Every unnecessary migration adds writes to the flash tier, and that extra write amplification wears solid-state drives out faster. To prevent this destructive cycle, storage administrators must move away from rigid threshold policies. Instead of reacting to single access events, the NAS Storage system should evaluate the sustained access rate over a configurable moving time window. Keeping tier placement stable as data temperature drifts translates into longer hardware lifespans and reduced capital expenditure.
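As a rough illustration of evaluating a sustained access rate over a moving time window, here is a minimal Python sketch. The class name `SlidingWindowRate`, the helper `should_promote`, and the `min_rate` parameter are hypothetical names chosen for this example; a real storage controller would track this in its own metadata layer.

```python
from collections import deque


class SlidingWindowRate:
    """Tracks accesses to one file over a moving time window (hypothetical helper)."""

    def __init__(self, window_seconds: float):
        self.window_seconds = window_seconds
        self._timestamps = deque()

    def record(self, now: float) -> None:
        """Register one access at time `now` (in seconds)."""
        self._timestamps.append(now)

    def rate(self, now: float) -> float:
        """Return accesses per second over the most recent window."""
        cutoff = now - self.window_seconds
        # Drop accesses that have aged out of the window.
        while self._timestamps and self._timestamps[0] <= cutoff:
            self._timestamps.popleft()
        return len(self._timestamps) / self.window_seconds


def should_promote(tracker: SlidingWindowRate, now: float, min_rate: float) -> bool:
    """Promote only when the sustained rate, not a single access, is high enough."""
    return tracker.rate(now) >= min_rate
```

With a 60-second window and a `min_rate` of 0.1 accesses per second, one stray read does not qualify a file for promotion, while ten reads inside the same window do.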

Rethinking the NAS Appliance Architecture

To handle temperature drift gracefully, the architecture of the NAS Appliance must decouple metadata processing from the physical data migration path. When metadata is stored in high-speed, persistent memory, the system can quickly analyze access patterns without waiting for mechanical disk seeks or consuming standard I/O lanes.

Continuous Monitoring vs. Reactive Migration

Modern systems must employ continuous telemetry to build a predictive model of data temperature. A single read request on a cold file should not instantly trigger a costly promotion to the NVMe tier. The NAS Appliance must weigh the size of the file, the processing cost of the move, and the statistical likelihood of subsequent reads based on historical application behavior. By applying a smoothing function to the temperature signal, the storage controller avoids purely reactive migrations.
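One way to picture this weighing is an exponentially weighted moving average (EWMA) for the smoothing step, followed by a simple cost comparison. The sketch below is an assumption-laden example, not a vendor algorithm: `FileStats`, `update_temperature`, `promotion_is_worthwhile`, and all of the cost parameters are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FileStats:
    size_bytes: int
    smoothed_reads_per_hour: float = 0.0


def update_temperature(stats: FileStats, reads_this_hour: float, alpha: float = 0.2) -> float:
    """EWMA smoothing: a small alpha makes one burst move the temperature only slightly."""
    stats.smoothed_reads_per_hour = (
        alpha * reads_this_hour + (1 - alpha) * stats.smoothed_reads_per_hour
    )
    return stats.smoothed_reads_per_hour


def promotion_is_worthwhile(
    stats: FileStats,
    horizon_hours: float,
    saving_per_read_ms: float,
    move_cost_ms_per_mib: float,
) -> bool:
    """Compare the expected latency saved by promotion with the cost of the move.

    All cost figures are assumed inputs; a real controller would measure them.
    """
    expected_reads = stats.smoothed_reads_per_hour * horizon_hours
    expected_saving_ms = expected_reads * saving_per_read_ms
    move_cost_ms = (stats.size_bytes / 2**20) * move_cost_ms_per_mib
    return expected_saving_ms > move_cost_ms
```

Because the file size enters through `move_cost_ms`, a large file needs a much stronger sustained read rate than a small one before the move pays for itself.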

Furthermore, implementing read-ahead caching in volatile RAM for occasionally accessed cold files can satisfy spontaneous read requests without altering the file's physical tier location. This mechanism lets the NAS Appliance maintain high read throughput for those requests without invoking the migration path that causes tiering instability.
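The idea of serving occasional cold reads from RAM without touching tier placement can be sketched as follows. Note that this example is a plain LRU read cache, not true read-ahead prefetching, and that `ColdReadCache` and the `read_from_cold_tier` callback are hypothetical stand-ins for whatever the controller actually exposes.

```python
from collections import OrderedDict
from typing import Callable


class ColdReadCache:
    """Byte-bounded LRU cache in RAM; it never changes where a file lives on disk."""

    def __init__(self, capacity_bytes: int, read_from_cold_tier: Callable[[str], bytes]):
        self.capacity_bytes = capacity_bytes
        self._read_from_cold_tier = read_from_cold_tier  # assumed backend hook
        self._entries = OrderedDict()
        self._used = 0
        self.hits = 0
        self.misses = 0

    def read(self, path: str) -> bytes:
        if path in self._entries:
            # Cache hit: refresh recency and answer from RAM.
            self._entries.move_to_end(path)
            self.hits += 1
            return self._entries[path]
        # Cache miss: read from the cold tier, but do not promote the file.
        data = self._read_from_cold_tier(path)
        self.misses += 1
        if len(data) <= self.capacity_bytes:
            self._entries[path] = data
            self._used += len(data)
            # Evict least recently used entries until we fit again.
            while self._used > self.capacity_bytes:
                _, evicted = self._entries.popitem(last=False)
                self._used -= len(evicted)
        return data
```

Repeated reads during an audit or a search then hit RAM, and the tiering engine only sees the file as warm if the sustained rate stays high.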

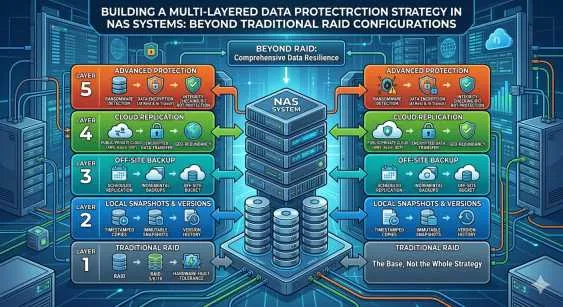

Securing NAS Appliances Against Ransomware Threats

Temperature drift models also intersect critically with enterprise data security. An unexpected, massive spike in write activity or read access across cold tiers is a classic indicator of a cyberattack in progress. In any comprehensive security posture, ransomware resilience for NAS appliances is a non-negotiable architectural requirement. Malware often attempts to encrypt old files first to avoid immediate detection by end-users.

Immutable Snapshots and Anomaly Detection

If an unauthorized process attempts to encrypt archived data en masse, the sudden artificial shift in data temperature can trigger automated defense mechanisms. A highly secure NAS Storage infrastructure uses these telemetry spikes to lock file systems, terminate suspicious user sessions, and alert administrators. Because ransomware attacks on NAS appliances often target backup repositories and cold storage first to prevent recovery, tying temperature drift monitoring directly to security protocols creates a powerful, passive intrusion detection system.
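A simple way to flag such a spike is to compare current cold-tier activity with a historical baseline. The sketch below uses a z-score test as one possible detector; the function names, the threshold of 4.0, and the action strings are all hypothetical placeholders rather than features of any particular product.

```python
from statistics import mean, stdev
from typing import List, Sequence


def is_temperature_anomaly(
    baseline_ops: Sequence[float], current_ops: float, z_threshold: float = 4.0
) -> bool:
    """Flag cold-tier activity that sits far above its historical baseline."""
    if len(baseline_ops) < 2:
        raise ValueError("need at least two baseline samples")
    mu = mean(baseline_ops)
    # Floor the deviation so a perfectly flat baseline cannot divide by zero.
    sigma = max(stdev(baseline_ops), 1.0)
    return (current_ops - mu) / sigma > z_threshold


def respond_to_sample(baseline_ops: Sequence[float], current_ops: float) -> List[str]:
    """Return the defensive actions to trigger (placeholder names only)."""
    if is_temperature_anomaly(baseline_ops, current_ops):
        return ["lock_file_system", "terminate_suspicious_sessions", "alert_administrators"]
    return []
```

A threshold this simple will produce false positives during legitimate bulk jobs such as audits or restores, so in practice it would need tuning against real workload baselines.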

By maintaining immutable snapshots at the block level, the system ensures that even if a threat actor bypasses initial perimeter defenses, a clean point-in-time copy of the data remains unalterable and recoverable. Administrators must ensure their ransomware mitigation strategies for NAS appliances are tightly integrated with their automated tiering logic. This integration prevents malicious encryption processes from generating artificial "hot" access patterns that force corrupted data into expensive, high-performance NVMe tiers.

Building a Stable Data Lifecycle Strategy

Developing a NAS Storage environment that handles drift seamlessly requires careful operational planning. First, administrators must establish baseline access metrics for standard operational workloads. Use these empirical baselines to train the storage controller's predictive models, teaching the system what normal temperature drift looks like for your specific applications.

Next, properly configure the hysteresis loop: the engineered buffer between a file crossing a temperature threshold and the actual migration taking place, usually a combination of separate promotion and demotion thresholds and a minimum delay. A wider hysteresis loop significantly reduces the chances of tiering instability, at the cost of reacting more slowly to genuine changes, and ensures that only files with proven, sustained changes in access frequency are physically moved between storage tiers.
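To make the hysteresis idea concrete, here is a minimal sketch that combines two separate thresholds with a dwell requirement. The class `HysteresisTierer` and its parameters (`promote_above`, `demote_below`, `dwell_samples`) are hypothetical names for this example.

```python
class HysteresisTierer:
    """Two thresholds plus a dwell count: a file moves only after a sustained change."""

    def __init__(self, promote_above: float, demote_below: float, dwell_samples: int):
        if demote_below >= promote_above:
            raise ValueError("demote_below must be lower than promote_above")
        self.promote_above = promote_above
        self.demote_below = demote_below
        self.dwell_samples = dwell_samples
        self.tier = "cold"
        self._streak = 0

    def observe(self, temperature: float) -> str:
        """Feed one temperature sample and return the tier the file should be on."""
        if self.tier == "cold":
            wants_move = temperature > self.promote_above
        else:
            wants_move = temperature < self.demote_below
        # Any sample that does not support the move resets the streak.
        self._streak = self._streak + 1 if wants_move else 0
        if self._streak >= self.dwell_samples:
            self.tier = "hot" if self.tier == "cold" else "cold"
            self._streak = 0
        return self.tier
```

Temperatures that fall between the two thresholds never trigger a move in either direction, which is what stops a file hovering near a single threshold from bouncing between tiers. Widening the gap or raising `dwell_samples` widens the loop.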

Finally, organizations must regularly audit their security protocols and storage telemetry. Ensure that the ransomware detection algorithms on your NAS appliances accurately interpret artificial temperature spikes as potential threats rather than confusing them with legitimate, authorized user activity.

Next Steps for Optimizing Enterprise Storage

Managing data temperature drift without triggering tiering instability requires a highly calibrated blend of predictive analytics, intelligent caching algorithms, and robust security integration. By upgrading legacy threshold systems to modern, machine-learning-driven storage controllers, organizations can maximize their hardware investments while maintaining high performance and strict data governance.

Evaluate your current IT infrastructure to identify signs of disk thrashing, high latency spikes, or inefficient storage tiering. Work alongside certified storage architects to implement moving-average threshold policies. Above all, verify that your environment is protected against emerging security threats, and that the ransomware safeguards on your NAS appliances are properly calibrated to read temperature drift telemetry as a primary line of defense.
