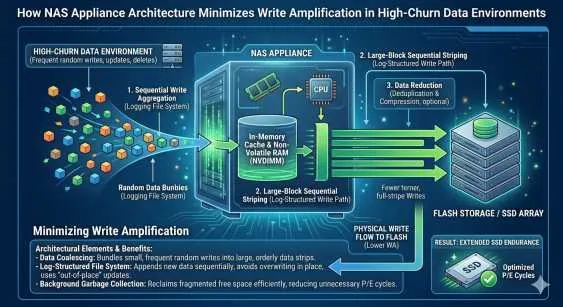

High-churn data environments place immense stress on enterprise storage media. Databases, virtual machines, and transactional systems constantly modify and overwrite existing data. This continuous cycle creates a significant hardware challenge known as write amplification. When a system attempts to write a small amount of logical data, the underlying solid-state drives (SSDs) must often erase and rewrite much larger physical blocks.

Over time, this process accelerates drive wear, increases latency, and degrades overall system performance. IT administrators are frequently forced to replace expensive flash media well before their expected end of life. Managing this physical degradation requires a strategic approach to storage architecture, file system management, and data layout.

Modern infrastructure demands a robust solution to mitigate unnecessary hardware wear. An optimized NAS appliance provides specific architectural advantages designed to handle high-frequency writes efficiently. By exploring the interaction between appliance architecture and data placement, organizations can drastically reduce write amplification and extend the operational lifespan of their flash-based arrays.

The Mechanics of Write Amplification

To resolve hardware degradation, one must first understand how flash memory records data. The solid-state drives in a NAS appliance write data in pages but can only erase data in much larger blocks. When an application modifies a small file, the SSD cannot simply overwrite the affected page in place. In the simplest case, it must read the entire block into memory, modify the specific page, erase the entire physical block, and then rewrite the updated block back to the drive.

This background operation means a single 4 KB logical write request might force the SSD to rewrite 1 MB or more of physical data. In environments with high data churn, this multiplier effect creates severe bottlenecks. Garbage collection routines, the processes that reclaim stale blocks, work constantly in the background and consume additional drive resources.
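The read-modify-write cycle and its cost can be illustrated with a small model. This is a minimal sketch, not a description of any particular drive: the `EraseBlock` class is hypothetical, and the geometry (4 KiB pages, 256 pages per 1 MiB erase block) is an assumption chosen to match the figures above.

```python
PAGE_SIZE = 4 * 1024       # 4 KiB page (assumed geometry)
PAGES_PER_BLOCK = 256      # 256 pages x 4 KiB = 1 MiB erase block (assumed)


class EraseBlock:
    """Toy model of one flash erase block that is updated in place."""

    def __init__(self) -> None:
        self.pages = [bytes(PAGE_SIZE) for _ in range(PAGES_PER_BLOCK)]
        self.bytes_programmed = 0   # physical bytes written to flash
        self.erase_count = 0        # number of block erases performed

    def update_page(self, index: int, data: bytes) -> None:
        if len(data) != PAGE_SIZE:
            raise ValueError("data must be exactly one page")
        staged = list(self.pages)               # 1. read the whole block
        staged[index] = data                    # 2. modify a single page
        self.pages = [None] * PAGES_PER_BLOCK   # 3. erase the block
        self.erase_count += 1
        self.pages = staged                     # 4. program every page back
        self.bytes_programmed += PAGE_SIZE * PAGES_PER_BLOCK


block = EraseBlock()
block.update_page(7, b"\xff" * PAGE_SIZE)       # one 4 KiB logical write

# Write amplification factor = physical bytes written / logical bytes written
waf = block.bytes_programmed / PAGE_SIZE
print(waf)  # 256.0
```

Under these assumptions, one 4 KiB update costs a full 1 MiB of programming plus one erase cycle, which is the multiplier the paragraph above describes.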

A standard NAS storage array without optimized write paths will quickly succumb to these inefficiencies. The controllers become overwhelmed by the sheer volume of background operations. Consequently, front-end application performance suffers, leading to unacceptable latency spikes for end-users.

How NAS Appliance Architecture Mitigates Drive Wear

Advanced storage systems deploy specific architectural frameworks to intercept and organize random writes before they reach the flash media. A purpose-built NAS appliance typically utilizes a log-structured file system or a highly optimized write cache. These mechanisms change the fundamental way data is committed to the drives.

Instead of writing data randomly across the SSD, the appliance aggregates small, random write requests in non-volatile memory (NVRAM) or high-speed dynamic RAM. The system then coalesces these small requests into large, sequential chunks. When the cache destages data to the underlying flash media, it writes in full, sequential stripes.
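The coalescing step described above might look roughly like the following. It is a simplified sketch under stated assumptions: `WriteCoalescer` and its attributes are hypothetical names, an in-memory `bytearray` stands in for NVRAM, and a Python list stands in for the flash tier. A real appliance would also track where each logical block landed and protect the buffer against power loss.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class WriteCoalescer:
    """Buffers small writes and destages them only as full stripes."""

    stripe_size: int
    _buffer: bytearray = field(default_factory=bytearray)
    flushed_stripes: List[bytes] = field(default_factory=list)

    def __post_init__(self) -> None:
        if self.stripe_size <= 0:
            raise ValueError("stripe_size must be positive")

    def write(self, payload: bytes) -> None:
        # Append the small write to the buffer (stand-in for NVRAM).
        self._buffer.extend(payload)
        # Destage whenever at least one full stripe has accumulated.
        while len(self._buffer) >= self.stripe_size:
            stripe = bytes(self._buffer[: self.stripe_size])
            del self._buffer[: self.stripe_size]
            self.flushed_stripes.append(stripe)   # one large sequential write

    def pending_bytes(self) -> int:
        return len(self._buffer)


coalescer = WriteCoalescer(stripe_size=64 * 1024)
for _ in range(40):                     # forty random 4 KiB writes arrive
    coalescer.write(b"x" * 4096)

print(len(coalescer.flushed_stripes))   # 2 full 64 KiB stripes destaged
print(coalescer.pending_bytes())        # 32768 bytes still buffered
```

Here the drives see two large sequential writes instead of forty small ones, and the remaining 32 KiB waits in the buffer until enough data arrives to fill another stripe.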

Sequential writes largely avoid the read-modify-write penalty. Because the SSD receives large, continuous blocks of data, it can write them directly to empty pages without triggering excessive garbage collection. This sequentialization is the primary method by which modern architectures reduce the write amplification factor from a high multiplier down to a ratio approaching 1:1.
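A back-of-the-envelope comparison shows how far the factor can fall. The model below is deliberately crude, and its assumptions matter: in the uncoalesced case every small write is assumed to land in a different erase block and trigger a full block rewrite (the worst case), while in the coalesced case stripes are assumed to be aligned to erase blocks and a partial final stripe is padded. The function names are illustrative only.

```python
import math


def uncoalesced_physical_bytes(num_writes: int, block_size: int) -> int:
    """Worst case: each small write forces a rewrite of one whole erase block."""
    return num_writes * block_size


def coalesced_physical_bytes(num_writes: int, write_size: int,
                             stripe_size: int) -> int:
    """Small writes are packed into full stripes; the last stripe is padded."""
    logical = num_writes * write_size
    return math.ceil(logical / stripe_size) * stripe_size


def waf(physical_bytes: int, logical_bytes: int) -> float:
    """Write amplification factor: physical bytes per logical byte."""
    return physical_bytes / logical_bytes


KIB, MIB = 1024, 1024 * 1024
num_writes, write_size, block_size = 1024, 4 * KIB, 1 * MIB
logical = num_writes * write_size                     # 4 MiB of host writes

random_waf = waf(uncoalesced_physical_bytes(num_writes, block_size), logical)
sequential_waf = waf(
    coalesced_physical_bytes(num_writes, write_size, block_size), logical)

print(random_waf)      # 256.0
print(sequential_waf)  # 1.0
```

Real drives sit somewhere between these extremes, since their controllers already redirect and batch some writes internally, but the gap illustrates why sequentialization has such a large effect on endurance.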

Distributing the Load with Scale-Out Storage

Addressing write amplification also requires managing the volume of data directed at any single storage controller. In traditional NAS storage architectures, a single pair of controllers handles all incoming write requests. Under heavy load, these controllers and their associated drives become both a performance bottleneck and a concentrated failure domain.

Implementing scale-out storage alters this dynamic by distributing the write workload across multiple independent nodes. When a high-churn application sends a massive influx of data, the scale-out cluster balances the inbound requests across its members. Each node receives a fraction of the total write load, which reduces the immediate pressure on any individual SSD cache or storage tier.
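One simple way to picture this balancing is hash-based placement, sketched below. This is an assumption made for illustration, not a description of how any specific product routes writes: production clusters often use consistent hashing or capacity-aware placement instead of a plain modulo, and the `pick_node` and `distribute` helpers and the file names are hypothetical.

```python
import hashlib
from collections import Counter
from typing import Iterable


def pick_node(object_key: str, node_count: int) -> int:
    """Map an object key to a node index using a stable hash."""
    digest = hashlib.sha256(object_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % node_count


def distribute(keys: Iterable[str], node_count: int) -> Counter:
    """Count how many writes each node would receive."""
    return Counter(pick_node(key, node_count) for key in keys)


# Hypothetical workload: 10,000 writes to distinct objects on a 4-node cluster.
keys = [f"vm-disk-{i}.img" for i in range(10_000)]
load = distribute(keys, node_count=4)

for node in sorted(load):
    print(f"node {node}: {load[node]} writes")
```

Each node ends up with roughly a quarter of the writes, so no single controller or SSD tier absorbs the whole burst.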

Furthermore, this distributed architecture allows the system to perform background tasks, such as garbage collection and metadata updates, more efficiently. Because the computational load is shared across the cluster, the system maintains consistent low latency even during periods of intense data modification.

Intelligent Data Reduction Strategies

Data reduction technologies, specifically deduplication and compression, play a critical role in managing flash endurance. However, the timing of these operations heavily influences write amplification.

Inline deduplication analyzes data blocks as they enter the NAS appliance. If the system detects a duplicate block, it discards the incoming data and merely updates a metadata pointer. By preventing redundant data from ever reaching the flash media, the system drastically reduces the total volume of physical writes.
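The inline path described above can be sketched in a few lines. This is a minimal illustration that assumes SHA-256 fingerprints and fixed-size blocks; the `DedupStore` class is hypothetical, a dictionary stands in for the flash media, and real systems add reference counting, collision handling policy, and persistent metadata.

```python
import hashlib
from typing import Dict


class DedupStore:
    """Toy inline-deduplicating block store."""

    def __init__(self) -> None:
        self.blocks: Dict[str, bytes] = {}   # fingerprint -> data ("flash")
        self.pointers: Dict[int, str] = {}   # logical address -> fingerprint
        self.physical_writes = 0

    def write(self, logical_addr: int, data: bytes) -> bool:
        """Store a block; return True only if flash was actually written."""
        fingerprint = hashlib.sha256(data).hexdigest()
        is_new = fingerprint not in self.blocks
        if is_new:
            self.blocks[fingerprint] = data      # unique data reaches flash
            self.physical_writes += 1
        # Duplicate or not, the logical address now points at the block.
        self.pointers[logical_addr] = fingerprint
        return is_new

    def read(self, logical_addr: int) -> bytes:
        return self.blocks[self.pointers[logical_addr]]


store = DedupStore()
store.write(0, b"A" * 4096)
store.write(1, b"B" * 4096)
store.write(2, b"A" * 4096)       # duplicate of block 0: metadata update only

print(store.physical_writes)      # 2
```

Three logical writes result in two physical writes, and the third block remains readable through its pointer.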

Compression operates on a similar principle, shrinking the data payload before it is committed to the drive. When combined with intelligent write coalescing, these data reduction techniques ensure that only unique, compressed, and sequentially ordered data blocks consume the limited write cycles of the SSDs.
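The effect of compressing before commit can be sketched with Python's standard `zlib` module. The choice of zlib, the compression level, and the helper names are assumptions made for illustration; storage appliances commonly use faster codecs. The sketch also stores a block uncompressed when compression would not shrink it, so that incompressible data never grows.

```python
import zlib
from typing import Tuple


def compress_block(data: bytes, level: int = 6) -> Tuple[bool, bytes]:
    """Return (is_compressed, payload) for the bytes that would hit flash."""
    compressed = zlib.compress(data, level)
    if len(compressed) < len(data):
        return True, compressed
    return False, data            # incompressible: store the block as-is


def decompress_block(is_compressed: bool, payload: bytes) -> bytes:
    """Reverse compress_block using the stored flag."""
    return zlib.decompress(payload) if is_compressed else payload


original = b"customer_id,order_id,status\n" * 200   # repetitive sample data
is_compressed, stored = compress_block(original)

print(len(original), len(stored), is_compressed)
```

For repetitive data like this sample, the stored payload is a small fraction of the original size, which translates directly into fewer bytes programmed to flash.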

Maximizing the Lifespan of Enterprise Flash Media

Deploying flash media in high-churn environments requires careful architectural planning. Relying on raw hardware performance without intelligent software management inevitably leads to premature drive failure and unpredictable performance.

By utilizing a sophisticated NAS appliance, organizations can restructure random I/O into sequential streams, largely avoiding the read-modify-write penalty. When integrated with scale-out storage, the architecture distributes the physical toll across a wider array of hardware, ensuring consistent performance. Through the careful application of write coalescing, inline data reduction, and distributed workload management, enterprise IT teams can protect their flash investments and maintain optimal performance under the most demanding data conditions.
