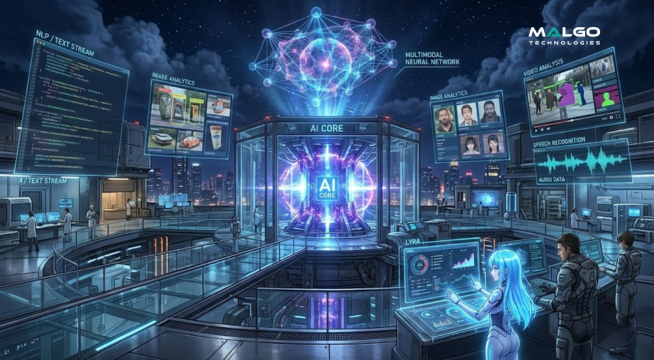

Multimodal AI is a type of artificial intelligence that processes and interprets several kinds of data, such as text, images, video, and audio, together. This can produce more accurate results than analyzing any single kind alone. The approach loosely mirrors how humans perceive the world through multiple senses, making it a powerful tool for businesses that deal with complex information. With multimodal AI development services, companies can build systems that not only read words but also recognize patterns in pictures and pick up nuances in speech.

What is Multimodal AI?

Multimodal AI refers to advanced systems that can handle multiple "modes" of data simultaneously. In the past, most AI models handled only one mode, such as a chatbot that understood only text or a security tool that only recognized faces. Today, a multimodal AI development company builds models that combine these inputs to get a fuller picture of a situation.

These systems typically use a separate neural network (an encoder) to process each data type and then fuse the resulting representations into a single shared context. For example, a multimodal system can watch a video of a person speaking and use both the audio of their voice and the movement of their lips to understand them better. Because one modality can compensate when another is noisy or incomplete, this approach tends to improve accuracy in real-world scenarios where data is often messy.
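As a rough illustration of the encode-then-merge idea, the sketch below replaces real neural networks with two hypothetical toy encoders (`encode_audio` and `encode_lips`) that turn raw audio samples and per-frame mouth-opening measurements into small feature vectors. It then joins those vectors by concatenation, which is only one of several common fusion strategies, and passes the joint vector to a single logistic layer. All names, features, and weights here are illustrative assumptions, not a production design.

```python
import math
from typing import List

Vector = List[float]


def encode_audio(samples: List[float]) -> Vector:
    """Toy stand-in for an audio encoder network: [mean energy, zero-crossing rate]."""
    n = len(samples)
    energy = sum(s * s for s in samples) / n
    # Count adjacent sample pairs whose sign differs.
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0))
    return [energy, crossings / max(n - 1, 1)]


def encode_lips(mouth_openings: List[float]) -> Vector:
    """Toy stand-in for a video encoder: [mean mouth opening, frame-to-frame motion]."""
    n = len(mouth_openings)
    mean_open = sum(mouth_openings) / n
    motion = sum(abs(b - a) for a, b in zip(mouth_openings, mouth_openings[1:]))
    return [mean_open, motion / max(n - 1, 1)]


def fuse(*embeddings: Vector) -> Vector:
    """Concatenation fusion: join per-modality embeddings into one joint vector."""
    joint: Vector = []
    for emb in embeddings:
        joint.extend(emb)
    return joint


def speech_score(joint: Vector, weights: Vector, bias: float = 0.0) -> float:
    """Logistic layer standing in for the model that reasons over the joint vector."""
    z = sum(x * w for x, w in zip(joint, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))


audio = [0.0, 0.4, -0.3, 0.5, -0.2, 0.1]  # hypothetical audio samples
lips = [0.1, 0.5, 0.2, 0.6, 0.3, 0.1]     # hypothetical mouth opening per video frame
joint = fuse(encode_audio(audio), encode_lips(lips))

# Hypothetical weights; a real system would learn these from labeled data.
score = speech_score(joint, [1.5, 2.0, 1.0, 3.0], bias=-2.0)
print(len(joint), round(score, 3))
```

Concatenation keeps every modality's features available to the downstream model, so it can learn how audio and lip movement relate. The trade-off is that it needs all inputs to be present at prediction time.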

Why Enterprises Need Multimodal AI Development Solutions

Businesses generate vast amounts of data in various formats every day, including emails, CCTV footage, voice recordings, and PDF documents. Traditional single-modality AI often fails to connect the dots between these different sources, leaving gaps in business intelligence. Multimodal AI development solutions help close these gaps by analyzing the available data sources together and surfacing relationships that would be missed if each format were reviewed in isolation.

By adopting these solutions, an enterprise can automate tasks that were once too complex for machines. Instead of having staff manually cross-reference a written report with a set of photos, the AI can perform the comparison in a fraction of the time. This shift allows teams to focus on strategy and creative work while the technology handles the heavy lifting of data processing and cross-modal analysis.

Why Multimodal AI is Growing Fast

The rapid growth of this technology is driven by the need for more natural interactions between humans and machines. People do not communicate through text alone; they use gestures, tone of voice, and visual aids. As companies strive to create better customer experiences, they turn to a multimodal AI development company to build interfaces that feel more human and responsive.

Another reason for this growth is the large increase in computing power and data availability. Modern hardware can now support the intensive processing required to run several models at once. This makes it possible for industries like healthcare, retail, and manufacturing to use multimodal AI development services to solve problems that were impractical to tackle just a few years ago.

Key Features of Multimodal AI Development Services

One main feature of these services is the ability to perform cross-modal retrieval, which lets a user search for one type of data using another. For instance, a user could upload a photo and ask the AI to find all text documents that describe similar items. This creates a much more flexible way to manage large databases and digital assets within a corporate environment.
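Cross-modal retrieval generally depends on a model that maps images and text into a shared embedding space, where matching content lands close together. The minimal sketch below assumes those embeddings already exist. The three-dimensional vectors and the file names are made up for illustration. It ranks text documents by cosine similarity to a photo's embedding. `search_text_by_image` is a hypothetical helper, not a specific product's API.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two vectors in the shared embedding space."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def search_text_by_image(
    image_embedding: Vector,
    text_index: Dict[str, Vector],
    top_k: int = 2,
) -> List[Tuple[str, float]]:
    """Rank text documents by similarity to an image query (cross-modal retrieval)."""
    scored = [(doc_id, cosine(image_embedding, emb)) for doc_id, emb in text_index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical embeddings; in practice both would come from a jointly trained
# image/text model rather than being written by hand.
text_index = {
    "spec_sheet_red_chair.txt": [0.9, 0.1, 0.0],
    "invoice_office_desk.txt": [0.2, 0.8, 0.1],
    "catalog_armchairs.txt": [0.8, 0.3, 0.1],
}
photo_of_chair = [1.0, 0.2, 0.0]

for doc_id, sim in search_text_by_image(photo_of_chair, text_index):
    print(f"{doc_id}: {sim:.3f}")
```

With these toy vectors, the two chair-related documents rank above the desk invoice. For large collections, the linear scan would usually be replaced by an approximate nearest-neighbor index.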

Another important feature is emotion and sentiment detection across different modalities. The AI can analyze a customer's facial expression in a video call together with the tone of their voice to estimate whether they are frustrated or happy. These multimodal AI development solutions offer a level of understanding that goes beyond what a text-only sentiment analysis tool can provide.
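One simple way to combine face and voice signals is late fusion. Each modality gets its own classifier, and their probability outputs are then merged. The sketch below assumes hypothetical classifiers that return probabilities over three made-up labels, and it uses assumed weights that would normally be tuned on validation data. If a modality is missing, such as a camera being switched off, it is skipped and the remaining weights are renormalized.

```python
from typing import Dict, Optional

Distribution = Dict[str, float]


def late_fusion(
    predictions: Dict[str, Optional[Distribution]],
    weights: Dict[str, float],
) -> Distribution:
    """Weighted average of per-modality label probabilities.

    Modalities whose prediction is None are skipped, and the remaining
    weights are renormalized so the fused probabilities still sum to 1.
    """
    available = {m: p for m, p in predictions.items() if p is not None}
    if not available:
        raise ValueError("no modality produced a prediction")
    total_weight = sum(weights[m] for m in available)
    fused: Distribution = {}
    for modality, dist in available.items():
        share = weights[modality] / total_weight
        for label, prob in dist.items():
            fused[label] = fused.get(label, 0.0) + share * prob
    return fused


# Hypothetical outputs from separate face and voice classifiers.
face = {"frustrated": 0.6, "neutral": 0.3, "happy": 0.1}
voice = {"frustrated": 0.8, "neutral": 0.15, "happy": 0.05}
weights = {"face": 0.4, "voice": 0.6}  # assumed weights, not tuned values

both = late_fusion({"face": face, "voice": voice}, weights)
voice_only = late_fusion({"face": None, "voice": voice}, weights)
print(max(both, key=both.get), round(both["frustrated"], 2))
```

Compared with merging raw features, late fusion is easier to make robust to a missing input. The trade-off is that it cannot learn fine-grained interactions between facial cues and vocal tone.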

Benefits of Using Multimodal AI in Business

One of the biggest benefits of these systems is improved decision-making accuracy. When an AI can see a product defect on a factory line and read the sensor data at the same time, it can cross-check the two signals and make fewer mistakes than a system using only one input. This leads to higher quality standards and less waste in production, which directly affects the bottom line.

Efficiency is another major gain for companies using multimodal AI development services. By automating the interpretation of complex data sets, businesses reduce the time spent on manual data entry and analysis. This speed allows enterprises to respond to market changes or customer needs much faster, giving them a competitive edge in their respective fields.

Why Choose Malgo for Multimodal AI Development

Malgo brings a deep understanding of how to integrate various data streams into a unified AI architecture. The focus stays on creating practical tools that solve specific business problems without adding unnecessary complexity to existing workflows. Malgo helps organizations move from basic automation to advanced intelligence by building systems that see, hear, and read like experts.

The team at Malgo prioritizes data security and model reliability in every project. Since multimodal AI often handles sensitive information like video feeds and voice prints, Malgo uses strong protocols to keep data safe. Choosing Malgo means getting a partner that values clarity and results, ensuring that the technology delivers real value to the enterprise.

Getting Started with Multimodal AI Development Solutions

Starting the process requires a clear review of the data assets already available within the organization. A company should identify where data goes unused or where staff spend too much time manually connecting different types of information. These areas are usually the best candidates for multimodal AI and the fastest return on investment.

Once the goals are set, working with a specialized multimodal AI development company helps build a roadmap. This includes selecting the right models, training them on industry-specific data, and integrating them into the company’s current software stack. This structured approach helps the new AI tools work smoothly with the systems the team already uses every day.

The Future of Enterprise Innovation with AI

As multimodal AI continues to evolve, it is likely to become the standard way businesses interact with their data. Future systems may handle even more modes, including tactile data from robots or spatial data from augmented reality. Companies that start using these services now will be better prepared for the next wave of digital change.

Innovation no longer comes from just doing things faster; it comes from understanding things better. Multimodal AI gives enterprises the "eyes" and "ears" they need to fully grasp the world around them. This leads to new products, better services, and a much deeper connection with customers across every digital and physical touchpoint.
