Real-time genomic sequencing generates data at high volume and velocity. Next-generation sequencing (NGS) instruments can produce terabytes of raw genomic data in a single run. Ingesting this data reliably requires storage infrastructure tuned for sustained write throughput. General-purpose enterprise storage systems often struggle under the sustained throughput and input/output operations per second (IOPS) that modern sequencers and their downstream pipelines demand.

Simultaneously, bioinformatics pipelines demand massive concurrent read and write operations. Researchers utilize high-performance computing (HPC) clusters to align sequences, identify variants, and perform complex analyses. If the underlying storage layer cannot feed data to the compute nodes fast enough, expensive processor cycles sit idle. This bottleneck delays critical clinical diagnostics and vital biological research.

This article examines how IT architects can construct a high-throughput storage environment. We will analyze the specific I/O patterns of genomic workloads and detail how specific storage protocols resolve latency issues. By understanding these architectural principles, organizations can successfully manage real-time genomic data ingestion and parallel processing tasks.

The I/O Profile of Genomic Workloads

Understanding the data lifecycle is critical for designing appropriate NAS infrastructure. The sequencing process begins with the instrument writing raw base call (BCL) files directly to the NAS. This phase is characterized by continuous, sequential write operations. An interruption or latency spike during ingestion can cause the sequencer software to abort, ruining the sequencing run and wasting thousands of dollars in reagents.

Once the sequencing run completes, the bioinformatics pipeline starts. Software converts the BCL files into FASTQ format. Next, alignment algorithms compare these sequences against a reference genome, generating BAM or CRAM files. Finally, variant calling identifies genetic mutations and produces VCF files.

This analytical phase shifts the storage workload entirely. It generates highly randomized, parallel read requests across the compute cluster, followed by large sequential writes as the pipeline outputs the final alignment files. A successful architecture must handle these extreme shifts in data access patterns without degrading performance.
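The difference between the two phases can be made concrete by scoring an I/O trace for how sequential it is. The following is a minimal sketch, not a production tracing tool: the function name `sequential_fraction` and both sample traces are hypothetical, and a real trace would come from whatever monitoring the storage platform exposes.

```python
from typing import Iterable, Tuple

MIB = 1024 * 1024


def sequential_fraction(requests: Iterable[Tuple[int, int]]) -> float:
    """Share of requests that begin exactly where the previous one ended.

    Each request is an (offset, length) pair in bytes, in arrival order.
    """
    reqs = list(requests)
    if len(reqs) < 2:
        return 1.0  # zero or one request is trivially sequential
    sequential = 0
    for (prev_off, prev_len), (off, _) in zip(reqs, reqs[1:]):
        if off == prev_off + prev_len:
            sequential += 1
    return sequential / (len(reqs) - 1)


# Hypothetical ingest trace: a sequencer streaming back-to-back 1 MiB writes.
ingest_trace = [(i * MIB, MIB) for i in range(8)]

# Hypothetical analysis trace: small reads scattered across a large file.
align_trace = [
    (40 * MIB, 4096),
    (3 * MIB, 4096),
    (3 * MIB + 4096, 4096),
    (90 * MIB, 4096),
]

print(f"ingest:   {sequential_fraction(ingest_trace):.2f}")
print(f"analysis: {sequential_fraction(align_trace):.2f}")
```

A score near 1.0 suggests a streaming workload that rewards raw bandwidth, while a low score points to a random workload where IOPS and latency matter more.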

Architecting the Storage Infrastructure

Meeting the demands of genomic sequencing requires a multi-tiered approach to storage design. The system must provide a unified namespace for applications while handling diverse network protocols effectively.

Leveraging Scale-Out NAS

Traditional scale-up storage arrays hit performance ceilings quickly. Adding performance usually means replacing the controller head, which leads to expensive forklift upgrades and disruptive data migrations. A scale-out NAS architecture removes this limitation.

In a scale-out deployment, organizations add independent storage nodes to an existing cluster. Each new node contributes disk capacity, CPU power, and network bandwidth to the collective system. As the volume of genomic data grows, capacity and aggregate throughput scale close to linearly with node count. This architecture allows hundreds of compute nodes to access a single, unified file system concurrently. Distributed metadata management keeps file lookups and directory operations from becoming a centralized bottleneck during parallel processing jobs.

Integrating iSCSI NAS

While the majority of genomic data relies on file-based access protocols like NFS or SMB, complete bioinformatics environments contain diverse application requirements. Laboratory Information Management Systems (LIMS) and relational databases are necessary to track sample metadata, patient records, and specific variant annotations.

These transactional applications often perform poorly over file protocols because of file-locking semantics and protocol overhead. An iSCSI NAS configuration allows the same physical storage hardware to present block-level access over a standard Ethernet network. The initiator on the database server treats the iSCSI target as a locally attached disk. On flash-backed volumes, this configuration can deliver the strict consistency and sub-millisecond latency required for high-speed database transactions while consolidating the infrastructure footprint.

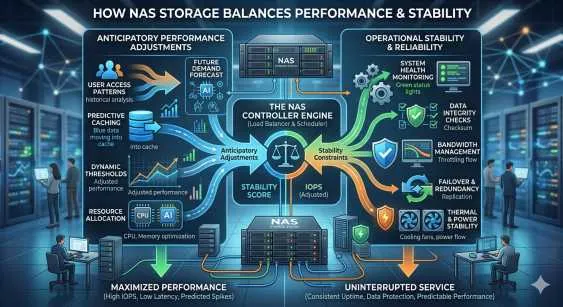

Optimizing NAS Storage

The hardware configuration chosen within the NAS storage platform largely determines overall pipeline efficiency. All-flash NVMe arrays provide the highest IOPS and lowest latency, but storing petabytes of genomic data entirely on flash is cost-prohibitive for most research institutions.

A hybrid architecture offers a practical balance of performance and cost. An NVMe solid-state drive (SSD) tier operates as an ingestion zone and active working directory. Sequencers write directly to this high-speed tier, which minimizes the risk of stalled writes during the sequencing run. Parallel alignment jobs also read from this flash tier. Once the pipeline completes, automated data lifecycle management policies migrate the inactive BAM and VCF files to a high-capacity, cost-effective hard disk drive (HDD) tier for long-term archiving.
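Most NAS platforms ship their own policy engine for this kind of tiering, so the following is only an illustrative sketch of the selection logic. The function name `archive_candidates`, the suffix list, and the 30-day idle threshold in the usage line are assumptions for illustration, not settings from any particular product.

```python
import time
from pathlib import Path
from typing import List, Optional

# Assumption: only finished pipeline outputs are eligible for the HDD tier.
# Compressed variants such as .vcf.gz would need their own rule.
ARCHIVE_SUFFIXES = {".bam", ".vcf"}
SECONDS_PER_DAY = 86400


def archive_candidates(
    flash_root: Path, min_idle_days: float, now: Optional[float] = None
) -> List[Path]:
    """List BAM/VCF files under flash_root not modified for min_idle_days."""
    now = time.time() if now is None else now
    cutoff = now - min_idle_days * SECONDS_PER_DAY
    candidates = []
    for path in flash_root.rglob("*"):
        if not path.is_file():
            continue
        if path.suffix.lower() not in ARCHIVE_SUFFIXES:
            continue
        if path.stat().st_mtime <= cutoff:
            candidates.append(path)
    return sorted(candidates)


if __name__ == "__main__":
    # Hypothetical mount point for the flash tier.
    for path in archive_candidates(Path("/mnt/flash/runs"), min_idle_days=30):
        print(path)
```

The sketch only reports candidates. Moving data between tiers is best left to the platform's own migration mechanism, which can keep the unified namespace intact.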

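Network fabric tuning is equally important. Setting the maximum transmission unit (MTU) to jumbo frames (typically 9000 bytes) on all storage nodes, switches, and compute clients reduces per-packet TCP/IP overhead. The setting must match end to end, because a single device left at the default 1500 bytes can cause fragmentation or dropped frames. Applied consistently, this change can noticeably increase sustained throughput and lower CPU load on both clients and storage nodes.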
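The effect of frame size can be estimated with simple arithmetic. This sketch assumes IPv4 and TCP headers without options (40 bytes) and standard Ethernet framing overhead on the wire (38 bytes, counting preamble, header, frame check sequence, and inter-frame gap). VLAN tags, TCP options, or IPv6 would shift the numbers slightly.

```python
ETHERNET_OVERHEAD = 38  # preamble/SFD 8 + header 14 + FCS 4 + inter-frame gap 12
IP_TCP_HEADERS = 40     # IPv4 20 + TCP 20, assuming no options
GIB = 2**30


def payload_efficiency(mtu: int) -> float:
    """Fraction of on-the-wire bytes that carry application payload."""
    return (mtu - IP_TCP_HEADERS) / (mtu + ETHERNET_OVERHEAD)


def packets_per_gib(mtu: int) -> int:
    """Packets needed to move 1 GiB of payload (ceiling division)."""
    payload = mtu - IP_TCP_HEADERS
    return -(-GIB // payload)


for mtu in (1500, 9000):
    print(
        f"MTU {mtu}: efficiency {payload_efficiency(mtu):.1%}, "
        f"{packets_per_gib(mtu):,} packets per GiB"
    )
```

Under these assumptions, wire efficiency rises from roughly 95% to roughly 99%, and the packet count per gibibyte drops by about a factor of six. The lower packet rate is often the larger benefit, since every packet costs interrupt and protocol processing time on both ends.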

Feeding Parallel Compute Clusters

High-throughput bioinformatics pipelines rely on job schedulers to distribute analytical tasks across thousands of CPU cores. The efficiency of this parallel processing depends entirely on data availability.

When a job scheduler launches an alignment task across fifty compute nodes simultaneously, the storage system sees a sudden burst of requests often called an "I/O storm." A properly architected scale-out environment absorbs this burst by distributing the data layout across multiple physical drives and network interfaces. This striping technique keeps any single storage node from being overwhelmed by read requests. Consequently, compute nodes spend far less time waiting on I/O, and processing times decrease.
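A toy model shows why striping spreads the load. This sketch assumes a simple round-robin layout in which stripe unit k lives on node k modulo the node count; real scale-out file systems use more elaborate placement, and the names `node_for_offset` and `read_load` are hypothetical.

```python
from collections import Counter

MIB = 1024 * 1024


def node_for_offset(offset: int, stripe_size: int, node_count: int) -> int:
    """Round-robin placement: stripe unit k is stored on node k % node_count."""
    return (offset // stripe_size) % node_count


def read_load(
    file_size: int, stripe_size: int, node_count: int, readers: int
) -> Counter:
    """Count stripe units served per node when readers split a file evenly."""
    load: Counter = Counter()
    slice_size = file_size // readers
    for reader in range(readers):
        start = reader * slice_size
        # The last reader also picks up any remainder at the end of the file.
        end = file_size if reader == readers - 1 else start + slice_size
        for offset in range(start - start % stripe_size, end, stripe_size):
            load[node_for_offset(offset, stripe_size, node_count)] += 1
    return load


# Fifty readers each take an 8 MiB slice of a 400 MiB file, 1 MiB stripes.
striped = read_load(400 * MIB, MIB, node_count=8, readers=50)
single = read_load(400 * MIB, MIB, node_count=1, readers=50)

print("8 nodes:", dict(sorted(striped.items())))
print("1 node: ", dict(single))
```

With eight nodes, each node serves an equal share of the stripe units. With a single node, every request lands on the same controller, which is the bottleneck a scale-up array runs into.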

Advancing Bioinformatics Infrastructure

Genomic sequencing technologies continue to evolve, driving higher data volumes and more complex analytical requirements. Designing a scale-out NAS infrastructure capable of handling these workloads requires precise planning and a deep understanding of bioinformatics I/O patterns. Standard enterprise file servers are unlikely to keep up with these specialized demands.

Organizations must transition to distributed, highly scalable architectures to maintain operational efficiency. The next step for IT leaders is to conduct a comprehensive audit of their current sequencing workloads. Measure the peak write throughput during instrument runs and the peak read IOPS during pipeline execution. Use these specific metrics to correctly size your next-generation storage fabric.
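Once those two measurements exist, a first-pass node count follows from simple division. The sketch below is a rough sizing aid under stated assumptions: the per-node ratings, the 25% headroom, and every figure in the example are hypothetical placeholders, and real ratings should come from vendor specifications or, better, from benchmarks run with your own workload.

```python
import math


def nodes_needed(
    peak_write_mb_s: float,
    peak_read_iops: float,
    node_write_mb_s: float,
    node_read_iops: float,
    headroom: float = 0.25,
) -> int:
    """Smallest node count that covers both measured peaks plus headroom."""
    if node_write_mb_s <= 0 or node_read_iops <= 0:
        raise ValueError("per-node ratings must be positive")
    by_throughput = peak_write_mb_s * (1 + headroom) / node_write_mb_s
    by_iops = peak_read_iops * (1 + headroom) / node_read_iops
    # Whichever resource runs out first dictates the cluster size.
    return max(1, math.ceil(max(by_throughput, by_iops)))


# Hypothetical audit results and per-node ratings.
count = nodes_needed(
    peak_write_mb_s=3200,    # measured during instrument runs
    peak_read_iops=400_000,  # measured during pipeline execution
    node_write_mb_s=1000,    # assumed sustained write rate per node
    node_read_iops=60_000,   # assumed random read IOPS per node
)
print(f"Estimated storage nodes: {count}")
```

In this example the read IOPS requirement, not ingest bandwidth, sets the node count, which is a common outcome when many alignment jobs run in parallel.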
