In the fast‑evolving world of artificial intelligence, frameworks that let developers build, manage, and scale autonomous agents are becoming central to innovation. The comparison of LangGraph, CrewAI, and AutoGen has become one of the most talked‑about topics among developers, CTOs, and technology strategists exploring how to build next‑generation AI systems that coordinate tasks, automate workflows, and collaborate effectively with humans or other agents. Each platform embodies a different architectural philosophy, level of maturity, and set of ideal use cases, and understanding these differences leads to smarter investment, adoption, and technical decisions.

This article explores how these modern AI agent platforms work, what trends are shaping their adoption, and how organizations can choose among them for real‑world applications.

The Rise of Agent‑Based AI

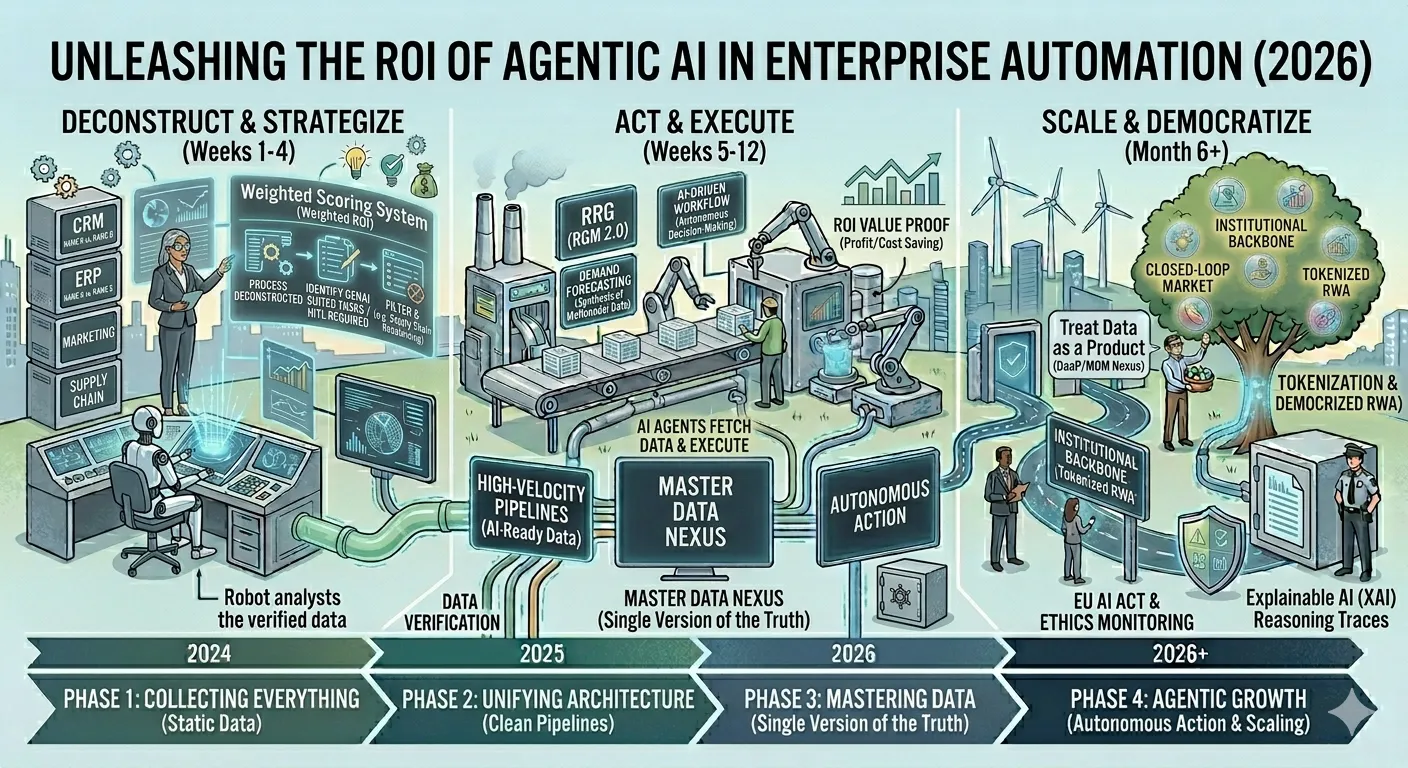

Autonomous and semi‑autonomous AI agents are at the forefront of advanced AI development. Rather than working as isolated models responding to prompts, these agents can carry out multi‑step tasks, coordinate among multiple sub‑agents, and adapt workflows based on outcomes and context. Gartner predicts that by the end of this decade, a significant portion of enterprise applications will integrate agentic AI, making robust frameworks and governance tools vital for production success.

In practical terms, agencies and enterprises want AI platforms that do more than generate text. They want systems that can evaluate data, call external tools, manage state over time, and maintain structured workflows with error handling, logging, and observability — features that modern agent platforms have begun to address.

What Constitutes an AI Agent Development Platform?

Before diving into specific frameworks, it helps to define what an agent platform typically provides. At its core, an agent development platform:

- Integrates one or more large language models (LLMs)

- Facilitates communication between agents or sub‑agents

- Manages state, memory, and decision processes across multiple steps

- Provides tooling for orchestration, error handling, and observability

- Supports integration with external APIs, databases, and enterprise systems

These capabilities extend AI beyond simple question‑and‑answer interactions to systems that can plan, execute, and refine complex tasks on their own.
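To make these capabilities concrete, here is a framework‑agnostic sketch in plain Python of the loop that agent platforms typically wrap: the model picks an action, the runtime calls a tool, the result is stored in state, and the loop stops when the model gives a final answer. The `fake_llm` stub stands in for a real LLM call, and every name here (`TOOLS`, `AgentState`, `run_agent`) is hypothetical. This is not the API of LangGraph, CrewAI, or AutoGen.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split("+"))),
}

@dataclass
class AgentState:
    """Memory carried across steps: the task plus a log of every step taken."""
    task: str
    history: list[tuple[str, str]] = field(default_factory=list)

def fake_llm(state: AgentState) -> tuple[str, str]:
    """Stand-in for an LLM that returns (action, argument).

    A real model would decide from a prompt; this stub calls the 'add'
    tool once and then answers with the most recent tool result.
    """
    tool_results = [out for kind, out in state.history if kind == "tool"]
    if not tool_results:
        return "add", state.task
    return "final", tool_results[-1]

def run_agent(task: str, max_steps: int = 5) -> tuple[str, AgentState]:
    state = AgentState(task)
    for _ in range(max_steps):
        action, arg = fake_llm(state)
        if action == "final":
            state.history.append(("final", arg))
            return arg, state
        try:
            result = TOOLS[action](arg)
        except Exception as exc:  # error handling: record the failure instead of crashing
            result = f"error: {exc}"
        state.history.append(("tool", result))  # observability: every step is logged
    raise RuntimeError("step budget exhausted")
```

Calling `run_agent("2+3")` returns `"5"` after a single tool call. Passing `"2+x"` makes the tool raise, and the loop records the error as a step instead of crashing, which is the kind of behavior a platform's error handling formalizes.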

LangGraph: Workflow‑Centric and Graph‑Based

Architecture and Philosophy

LangGraph originated as an extension within the LangChain ecosystem, transforming chains of actions into stateful graphs where tasks, tools, and decisions are modeled as nodes with clear transitions. This graph‑centric design offers explicit state management, robust control flow with branching and loops, and high transparency into how an agent reaches a conclusion.

Rather than treating the agent as a black box, LangGraph encourages developers to visualize and manage the full workflow, which can be crucial for enterprise scenarios where auditability, error handling, and predictable behavior matter.
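The graph idea can be illustrated without any library: nodes are functions that read and update a shared state, and edges, including conditional ones, decide which node runs next. The sketch below is a hypothetical mini‑engine (`MiniGraph`) written for illustration, not LangGraph's API, although LangGraph's `StateGraph` class plays the analogous role. It models a draft‑and‑review workflow with a branch and a loop and records every transition so the path to the result can be audited. The `review` node uses a simple rule in place of an LLM judgment.

```python
from typing import Callable

State = dict
END = "__end__"

class MiniGraph:
    """Hypothetical minimal state-graph engine (not the LangGraph API)."""

    def __init__(self) -> None:
        self.nodes: dict[str, Callable[[State], State]] = {}
        self.edges: dict[str, Callable[[State], str]] = {}

    def add_node(self, name: str, fn: Callable[[State], State]) -> None:
        self.nodes[name] = fn

    def add_edge(self, src: str, dst: str) -> None:
        self.edges[src] = lambda _state: dst  # unconditional transition

    def add_conditional_edge(self, src: str, router: Callable[[State], str]) -> None:
        self.edges[src] = router  # next node is chosen from the current state

    def run(self, start: str, state: State, max_steps: int = 20) -> tuple[State, list[str]]:
        trace: list[str] = []  # ordered list of visited nodes, for auditing
        node = start
        for _ in range(max_steps):
            trace.append(node)
            state = self.nodes[node](state)
            node = self.edges[node](state)
            if node == END:
                return state, trace
        raise RuntimeError("graph did not terminate")

# Example workflow: draft -> review -> (revise -> review)* -> publish
def draft(s: State) -> State:
    return {**s, "text": "draft", "revisions": 0}

def review(s: State) -> State:
    return {**s, "approved": s["revisions"] >= 2}  # stand-in for an LLM or rule check

def revise(s: State) -> State:
    return {**s, "revisions": s["revisions"] + 1}

def publish(s: State) -> State:
    return {**s, "published": True}

g = MiniGraph()
for name, fn in [("draft", draft), ("review", review), ("revise", revise), ("publish", publish)]:
    g.add_node(name, fn)
g.add_edge("draft", "review")
g.add_conditional_edge("review", lambda s: "publish" if s["approved"] else "revise")
g.add_edge("revise", "review")
g.add_edge("publish", END)

final_state, trace = g.run("draft", {})
```

Running it visits `draft`, alternates `review` and `revise` twice, and then publishes once the review passes. Because each step lands in `trace`, the path to the result can be inspected afterwards. Allowing one outgoing edge per node (a later edge from the same source replaces the earlier one) keeps the sketch short.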

Strengths and Use Cases

LangGraph shines in scenarios demanding:

- Complex, conditional workflows

- Traceable state transitions

- Integration with external services and structured data flows

Its strength lies in explicit, deterministic control flow and fine‑grained control over each step (the model calls inside individual nodes remain non‑deterministic), making it suitable for financial operations, compliance workflows, and systems where outcomes must be transparent and reproducible.

Challenges

The trade‑off for this control is added complexity in setup and maintenance. Crafting precise graphs for intricate tasks can take considerable effort, and without proper observability tooling, debugging can become difficult.

CrewAI: Role‑Based Collaboration

Design Philosophy

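CrewAI seeks to simulate team dynamics among AI agents through role‑based orchestration. Instead of a monolithic workflow or an explicit state graph, agents in CrewAI are organized as a crew, each with specific goals, responsibilities, and tools. Tasks are assigned to these agents and carried out through a process, such as running them in sequence or having a manager agent delegate them, so the overall result comes from the agents' collaboration rather than from a hand‑drawn graph.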

This design reflects how human teams function: researchers gather information, analysts interpret the results, and reviewers refine or validate the output.
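A sequential crew of this kind can be sketched as agents with a role, a goal, and a function that does their part, where each task's output becomes the context for the next. This is a plain‑Python illustration under that assumption, not CrewAI's API. The `RoleAgent`, `Assignment`, and `run_sequential_crew` names are hypothetical, and the `work` lambdas stand in for LLM calls. (CrewAI's own `Agent`, `Task`, and `Crew` classes accept more options, such as tools and model settings.)

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class RoleAgent:
    role: str
    goal: str
    work: Callable[[str, str], str]  # (task description, context) -> output; stands in for an LLM

@dataclass
class Assignment:
    description: str
    agent: RoleAgent

def run_sequential_crew(assignments: list[Assignment]) -> list[str]:
    """Run tasks in order, passing each output to the next agent as context."""
    context = ""
    outputs: list[str] = []
    for a in assignments:
        context = a.agent.work(a.description, context)
        outputs.append(f"{a.agent.role}: {context}")
    return outputs

researcher = RoleAgent("Researcher", "Gather facts",
                       lambda desc, ctx: "3 sources found")
analyst = RoleAgent("Analyst", "Interpret findings",
                    lambda desc, ctx: f"summary of ({ctx})")
reviewer = RoleAgent("Reviewer", "Validate the output",
                     lambda desc, ctx: f"approved: {ctx}")

log = run_sequential_crew([
    Assignment("Find sources on agent frameworks", researcher),
    Assignment("Summarize the sources", analyst),
    Assignment("Check the summary", reviewer),
])
```

The reviewer's entry reads `approved: summary of (3 sources found)`, showing how context flows down the chain. A hierarchical variant would replace the fixed loop with a manager agent that decides who handles each task.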

Strengths and Use Cases

CrewAI’s strengths include:

- Intuitive task delegation

- Parallel workflows with specialized agents

- Low barrier for prototyping collaborative behavior

These qualities make it appealing for content generation pipelines, research assistants, or business intelligence workflows where distinct agent roles contribute different expertise.

CrewAI has also gained traction thanks to its developer friendliness, with an emphasis on enterprise‑ready tooling and simplified abstractions that lower the learning curve.
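The parallel side of this pattern, in which independent specialists work at the same time and a final step merges their results, can be sketched with Python's standard `asyncio` library. The `specialist` coroutine and its `asyncio.sleep` delays are hypothetical placeholders for network‑bound LLM calls. The example shows the concurrency pattern, not how CrewAI schedules work internally.

```python
import asyncio

async def specialist(role: str, topic: str, delay: float) -> str:
    """Placeholder for an LLM-backed agent; sleeping simulates a network call."""
    await asyncio.sleep(delay)
    return f"{role} notes on {topic}"

async def run_parallel(topic: str) -> str:
    # Independent subtasks run concurrently; gather returns results in argument order.
    notes = await asyncio.gather(
        specialist("market", topic, 0.02),
        specialist("technical", topic, 0.01),
        specialist("legal", topic, 0.03),
    )
    # A synthesizer step (here a simple join) combines the partial results.
    return " | ".join(notes)

report = asyncio.run(run_parallel("agent frameworks"))
```

Because the three placeholder calls overlap, the run takes roughly as long as the slowest one rather than the sum of all three. `asyncio.gather` still returns the notes in the order the coroutines were passed, so the merged report is stable.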

Challenges

While CrewAI is powerful, it may lack the transparent state control and deep debugging facilities found in graph‑centric frameworks. Some users report that internal operations are opaque, which can pose challenges as projects scale or require tight integration with existing observability systems.

AutoGen: Conversational Multi‑Agent Systems

Core Approach

AutoGen takes a different route: it emphasizes asynchronous conversational interaction among multiple agents. Rather than a structured workflow or role‑based assignment, agents in AutoGen communicate with one another — exchanging messages, negotiating decisions, and coordinating actions in a dialogue‑driven architecture.

This dynamic approach mimics human collaboration, where agents interact conversationally to solve problems or refine outcomes.
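The dialogue‑driven style boils down to agents taking turns replying to a shared message history until one of them signals that the job is done. The plain‑Python sketch below assumes a hypothetical stop keyword, `TERMINATE`, simple round‑robin turn‑taking, and stub `writer` and `critic` functions in place of LLM calls. It illustrates the pattern, not AutoGen's API.

```python
from typing import Callable

Message = tuple[str, str]  # (speaker, text)

def writer(history: list[Message]) -> str:
    """Stub for an LLM agent that drafts and revises."""
    drafts = sum(1 for who, _ in history if who == "writer")
    return f"draft v{drafts + 1}"

def critic(history: list[Message]) -> str:
    """Stub for an LLM agent that critiques; it approves the second draft."""
    last = history[-1][1]
    return "TERMINATE" if last == "draft v2" else f"please improve {last}"

def converse(agents: dict[str, Callable[[list[Message]], str]],
             opening: str, max_turns: int = 10) -> list[Message]:
    history: list[Message] = [("user", opening)]
    names = list(agents)
    for turn in range(max_turns):
        speaker = names[turn % len(names)]  # round-robin turn-taking
        reply = agents[speaker](history)
        history.append((speaker, reply))
        if "TERMINATE" in reply:  # an agent signals that the task is finished
            break
    return history

chat = converse({"writer": writer, "critic": critic}, "Write a tagline")
```

The conversation ends when the critic approves the second draft. The `max_turns` cap is the safety net if no agent ever says `TERMINATE`, which points to the unpredictability discussed under Challenges.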

Strengths and Use Cases

AutoGen is particularly effective for:

- Multi‑agent problem solving via iterative conversations

- Collaborative reasoning in which agents take on roles dynamically

- Scenarios where flexibility and emergent behavior are desirable

Those building analytical systems, exploratory tools, or solutions that benefit from back‑and‑forth negotiation among agents can find AutoGen’s paradigm well suited to their needs.

Challenges

The open‑ended conversational model can introduce unpredictability and make state tracking and debugging more complex than in structured frameworks. Developers must build additional instrumentation to maintain oversight and ensure outcomes align with business requirements.
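One lightweight form of that instrumentation is a wrapper that logs every reply with its turn number and raises an alert when an agent keeps repeating itself, a common symptom of a stuck conversation. The `instrument` helper below is a hypothetical plain‑Python example that works with any reply function taking a message history, and the repeat threshold is an assumption. A production system would send these events to a logging or tracing backend rather than a list.

```python
from typing import Callable

Reply = Callable[[list], str]

def instrument(name: str, reply_fn: Reply, events: list[dict],
               max_repeats: int = 2) -> Reply:
    """Wrap an agent's reply function to log each turn and detect loops."""
    seen: dict[str, int] = {}

    def wrapped(history: list) -> str:
        reply = reply_fn(history)
        seen[reply] = seen.get(reply, 0) + 1
        events.append({"agent": name, "turn": len(history), "reply": reply})
        if seen[reply] > max_repeats:
            raise RuntimeError(f"{name} repeated {reply!r} {seen[reply]} times")
        return reply

    return wrapped

# Usage: an agent stub that always says the same thing gets stopped.
events: list[dict] = []
stuck = instrument("stuck_agent", lambda history: "let me think...", events)
history: list = []
alert = ""
try:
    for _ in range(5):
        history.append(stuck(history))
except RuntimeError as err:
    alert = str(err)
```

Here the third identical reply trips the check, leaving three logged events and an alert that names the stalled agent.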

Market Trends and Adoption

Industry surveys and adoption data reveal several broad trends shaping agent framework usage:

Migration from Prompting to Orchestration

Many AI projects have moved beyond simple prompt engineering to system orchestration. Organizations now expect governance, observability, and error handling to be built into agent frameworks, and the platforms are evolving accordingly.

Growth in Multi‑Agent Collaboration

A notable shift has been toward multi‑agent systems where specialized agents coordinate tasks rather than a single monolithic agent executing a linear workflow. This mirrors microservices patterns in traditional software, providing modularity and resilience.

Enterprise Focus and Production Readiness

Companies are increasingly evaluating frameworks not just on technical features but on production maturity, compliance, and enterprise support. Factors like security, deployment tooling, and the ability to scale across teams now weigh as heavily in platform choices as raw performance or flexibility.

Ecosystem and Community Momentum

Frameworks with strong community activity, rich plugin ecosystems, and extensive documentation naturally attract broader usage. This in turn improves long‑term sustainability through contributions, integrations, and ecosystem tooling.

How to Choose the Right Platform

There is no single “best” solution — the optimal choice depends on project needs:

1. Complex Orchestration with Transparency

Choose a graph‑based platform such as LangGraph if workflows require detailed state tracking, robust error handling, and an auditable record of how each result was produced.

2. Collaborative Multi‑Agent Projects

Select a role‑based framework such as CrewAI when a task breaks down naturally into parts that specialized agents can handle, often in parallel, before their contributions are combined.

3. Exploratory or Conversational Logic

Use a conversational framework such as AutoGen for tasks that benefit from dynamic dialogue, negotiation among agents, and flexible reasoning paths.

Organizations should weigh long‑term maintainability, community support, and observability tooling alongside baseline technical capabilities. Combining qualitative user feedback with repository activity, enterprise case studies, and project requirements leads to better decisions.

Final Thoughts

Modern AI agent platforms are transforming how applications are built, moving from simple automation toward intelligent systems that plan, reason, and collaborate autonomously. The current ecosystem — from graph‑based orchestration to conversational multi‑agent frameworks — reflects diverse approaches to solving increasingly complex problems with AI.

Choosing among these tools requires a clear understanding of their strengths, limitations, and alignment with project goals. By considering the architectural trade‑offs and evolving trends in agent systems, organizations can leverage the right platform to build scalable, robust, and intelligent AI solutions that deliver long‑term value.
