Building an AI agent that works in a demo is easy.

Building one that scales across users, systems, and time is where most teams fail.

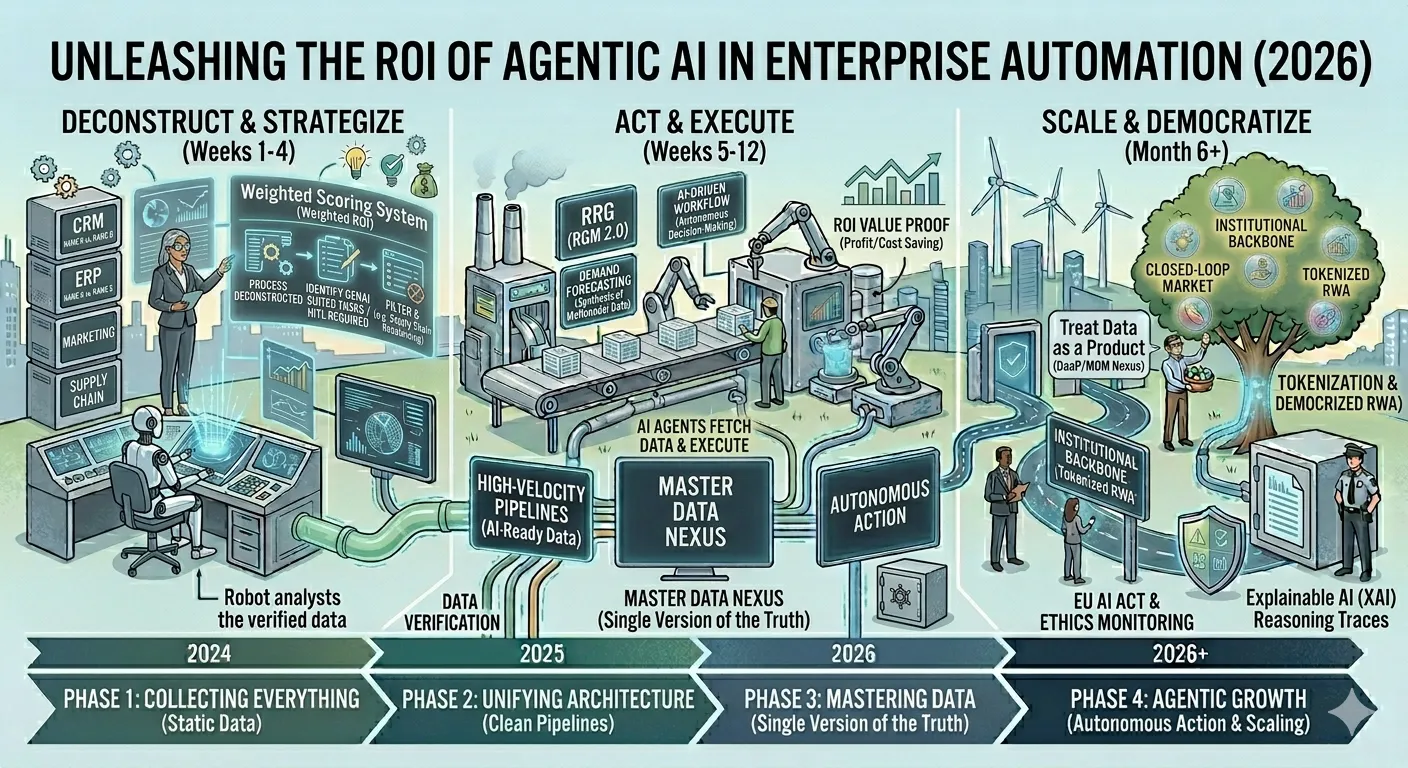

In 2026, scalability in AI agents is not about “bigger models.” It’s about architecture, control, and operational discipline. This guide breaks down how modern teams build scalable agents using LLMs and where AI agent development services actually earn their value.

Scalable AI agents built with LLMs require six core components: a reasoning-optimized model, an orchestration layer, modular memory, secure tool execution, governance controls, and observability infrastructure. Without all six, agents fail under real-world load.

Step 1: Choose LLMs for Reasoning, Not Conversation

The first scalability mistake teams make is selecting models based on chat quality.

What matters instead:

- Long-horizon reasoning stability

- Tool-use reliability

- Instruction adherence under load

- Predictable cost curves

In 2026, many production agents use smaller, specialized reasoning models rather than monolithic general LLMs.

Rule of thumb:

If your agent can’t reliably plan across 10+ steps, it won’t scale.
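To see why the 10-step mark matters, here is a back-of-the-envelope sketch. It assumes each step succeeds or fails independently with the same probability, which is a simplification (real agent steps are often correlated), and `chain_success` is a hypothetical helper name.

```python
def chain_success(per_step_success: float, steps: int) -> float:
    """Chance that every step in a plan succeeds.

    Assumption: steps fail independently and with equal probability.
    """
    if not 0.0 <= per_step_success <= 1.0:
        raise ValueError("per_step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_success ** steps


if __name__ == "__main__":
    for p in (0.90, 0.95, 0.99):
        print(f"per-step {p:.2f} -> 10-step plan {chain_success(p, 10):.2f}")
```

Under that assumption, a model that gets 95% of individual steps right finishes a 10-step plan only about 60% of the time, while 99% per step gives roughly 90%. Small per-step gains compound quickly.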

Step 2: Add an Agent Orchestration Layer (Non-Negotiable)

Scalability begins above the model.

An orchestration layer is responsible for:

- Goal decomposition

- Task scheduling

- Retry and fallback logic

- Multi-agent coordination

This is the difference between an “LLM with tools” and a true agent system.

Modern orchestration patterns include planner–executor architectures and supervisor agents that monitor execution quality.
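A minimal sketch of the executor half of a planner–executor loop, with retry and fallback logic, might look like the following. The `Step` and `run_plan` names are hypothetical, and the plan is hard-coded here where a real system would ask a model to decompose the goal.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Step:
    name: str
    action: Callable[[], str]
    fallback: Optional[Callable[[], str]] = None
    max_retries: int = 2


def run_plan(steps: list[Step]) -> dict[str, str]:
    """Run steps in order; retry each one, then fall back, then give up."""
    results: dict[str, str] = {}
    for step in steps:
        last_error: Optional[Exception] = None
        for _attempt in range(1 + step.max_retries):
            try:
                results[step.name] = step.action()
                break
            except Exception as exc:  # a real system would catch narrower errors
                last_error = exc
        else:
            # Every attempt failed: use the fallback if one exists.
            if step.fallback is None:
                raise RuntimeError(f"step {step.name!r} failed") from last_error
            results[step.name] = step.fallback()
    return results


def make_flaky(fail_times: int, value: str) -> Callable[[], str]:
    """Test helper: an action that fails `fail_times` times, then succeeds."""
    calls = {"n": 0}

    def action() -> str:
        calls["n"] += 1
        if calls["n"] <= fail_times:
            raise TimeoutError("transient failure")
        return value

    return action


if __name__ == "__main__":
    plan = [
        Step("fetch", make_flaky(1, "data")),
        Step("summarize", make_flaky(5, "full summary"), fallback=lambda: "short summary"),
    ]
    print(run_plan(plan))
```

A supervisor agent would sit one level above this loop, inspecting `results` and deciding whether to re-plan. That part is left out of the sketch.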

Step 3: Design Memory as a System, Not a Log

Scalable agents don’t just remember—they curate memory.

Production-grade memory layers:

- Working memory (current task context)

- Episodic memory (past actions and outcomes)

- Semantic memory (domain knowledge)

- Preference memory (user/system patterns)

Vector databases alone are insufficient. The key is policy-driven memory retrieval that prevents drift and repetition as usage scales.
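One way to picture policy-driven retrieval is as a filter that sits between the retriever and the prompt. The sketch below is illustrative only: `MemoryItem`, `Policy`, and `select_memories` are hypothetical names, and the relevance scores are assumed to come from whatever retriever you already use.

```python
from dataclasses import dataclass, field
from enum import Enum


class Kind(Enum):
    WORKING = "working"
    EPISODIC = "episodic"
    SEMANTIC = "semantic"
    PREFERENCE = "preference"


@dataclass(frozen=True)
class MemoryItem:
    kind: Kind
    text: str
    relevance: float  # assumed 0..1 score from an upstream retriever
    age_days: float


@dataclass
class Policy:
    max_items: dict[Kind, int]  # per-kind quota; kinds not listed get 0
    max_age_days: dict[Kind, float] = field(default_factory=dict)  # unlisted = no limit
    min_relevance: float = 0.5


def select_memories(candidates: list[MemoryItem], policy: Policy) -> list[MemoryItem]:
    """Keep the most relevant items that pass the policy, without repeats."""
    seen: set[str] = set()
    counts = {kind: 0 for kind in Kind}
    chosen: list[MemoryItem] = []
    for item in sorted(candidates, key=lambda m: m.relevance, reverse=True):
        key = item.text.strip().lower()
        if key in seen:
            continue  # repetition guard
        if item.relevance < policy.min_relevance:
            continue
        if item.age_days > policy.max_age_days.get(item.kind, float("inf")):
            continue  # stale items are a common source of drift
        if counts[item.kind] >= policy.max_items.get(item.kind, 0):
            continue
        seen.add(key)
        counts[item.kind] += 1
        chosen.append(item)
    return chosen
```

The design choice worth noting is that the quotas and age limits live in data (`Policy`), not in the retrieval code, so they can be tuned per agent or per tenant without redeploying.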

Step 4: Treat Tool Execution as a First-Class Risk Surface

An agent that can act is powerful—and dangerous.

Scalable AI agents require:

- Permission-scoped tool access

- Explicit action validation

- Rate and cost limits

- Human-in-the-loop escalation paths

This is where many companies rely on experienced AI agent development companies to avoid production failures and security incidents.
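The four requirements above can be sketched as a single gateway that every tool call must pass through. All names here (`Tool`, `ToolGateway`, `ToolDenied`, `NeedsApproval`, the `payments:write` scope) are hypothetical, and a production version would persist its counters and route approvals to a real review queue.

```python
from dataclasses import dataclass
from typing import Any, Callable


class ToolDenied(Exception):
    """The call was blocked by scope, validation, or limits."""


class NeedsApproval(Exception):
    """The call must be reviewed by a human before it runs."""


@dataclass
class Tool:
    name: str
    func: Callable[..., Any]
    required_scope: str
    cost: float = 0.0
    validate: Callable[[dict], bool] = lambda args: True
    needs_human: Callable[[dict], bool] = lambda args: False


@dataclass
class ToolGateway:
    scopes: set[str]
    budget: float
    max_calls: int
    spent: float = 0.0
    calls: int = 0

    def call(self, tool: Tool, **args: Any) -> Any:
        if tool.required_scope not in self.scopes:
            raise ToolDenied(f"missing scope {tool.required_scope!r}")
        if not tool.validate(args):
            raise ToolDenied(f"invalid arguments for {tool.name!r}")
        if self.calls >= self.max_calls:
            raise ToolDenied("call limit reached")
        if self.spent + tool.cost > self.budget:
            raise ToolDenied("cost budget exceeded")
        if tool.needs_human(args):
            raise NeedsApproval(f"{tool.name!r} requires human review")
        # Only calls that actually run count against the limits.
        self.calls += 1
        self.spent += tool.cost
        return tool.func(**args)
```

In this sketch the agent never holds a reference to `tool.func` directly; it only sees the gateway, which makes the permission check impossible to skip by accident.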

Step 5: Implement RAG for Decisions, Not Just Answers

By 2026, Retrieval-Augmented Generation (RAG) has evolved significantly.

In scalable agents, retrieval is:

- Conditional (used only when needed)

- Source-ranked and confidence-scored

- Time-aware and continuously refreshed

Instead of flooding prompts with documents, agents decide when retrieval improves decision quality, a key scalability unlock.
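As a rough illustration, conditional retrieval can be as simple as a gate in front of the retriever plus a scoring function that blends source trust, similarity, and freshness. Everything specific here is an assumption: the `Doc` fields, the 0.8 confidence threshold, the 180-day half-life, and the idea that the agent can produce a usable `model_confidence` value at all.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Doc:
    text: str
    source_rank: float  # assumed 0..1 trust score for the source
    similarity: float   # assumed 0..1 match against the query
    published: date


def score(doc: Doc, today: date, half_life_days: float = 180.0) -> float:
    """Blend trust, similarity, and freshness; freshness halves every half-life."""
    age_days = max((today - doc.published).days, 0)
    freshness = 0.5 ** (age_days / half_life_days)
    return doc.source_rank * doc.similarity * freshness


def maybe_retrieve(
    model_confidence: float,
    docs: list[Doc],
    today: date,
    confidence_threshold: float = 0.8,
    min_score: float = 0.3,
    top_k: int = 3,
) -> list[tuple[float, Doc]]:
    """Return scored documents only when the agent is not already confident."""
    if model_confidence >= confidence_threshold:
        return []  # conditional: skip retrieval entirely
    scored = sorted(((score(d, today), d) for d in docs), key=lambda p: p[0], reverse=True)
    return [(s, d) for s, d in scored if s >= min_score][:top_k]
```

Returning the score alongside each document lets the downstream prompt, or a later audit, see how much weight each source deserved.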

Step 6: Build Governance Into the Core Architecture

If governance is bolted on later, the agent won’t scale.

Modern AI agents include:

- Action-level permissions

- Policy-aware refusal logic

- Full audit trails

- Rollback and kill-switch mechanisms
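Here is a small sketch of how those four controls can live in one wrapper around every action. `GovernedExecutor` and its method names are hypothetical, and the audit log is an in-memory list where a real deployment would use append-only storage.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class GovernedExecutor:
    allowed_actions: set[str]
    killed: bool = False
    audit_log: list[dict] = field(default_factory=list)
    _undo_stack: list[Callable[[], None]] = field(default_factory=list, repr=False)

    def execute(self, action: str, do: Callable[[], None], undo: Callable[[], None]) -> bool:
        """Run `do` if policy allows it; every decision is written to the audit log."""
        if self.killed:
            self._record(action, "refused: kill switch")
            return False
        if action not in self.allowed_actions:
            self._record(action, "refused: not permitted")
            return False
        do()
        self._undo_stack.append(undo)
        self._record(action, "executed")
        return True

    def rollback(self) -> int:
        """Undo executed actions in reverse order; returns how many were undone."""
        count = 0
        while self._undo_stack:
            self._undo_stack.pop()()
            count += 1
        self._record("rollback", f"undid {count} action(s)")
        return count

    def kill(self) -> None:
        self.killed = True
        self._record("kill", "kill switch engaged")

    def _record(self, action: str, outcome: str) -> None:
        self.audit_log.append(
            {"seq": len(self.audit_log) + 1, "action": action, "outcome": outcome}
        )
```

Note that refusals are logged too. An audit trail that only records successful actions cannot answer the question "what did the agent try to do?"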

This is why agentic AI is now preferred over pure generative AI for enterprise use cases.

Step 7: Add Observability and Agent Ops from Day One

Scalable agents are measurable agents.

Teams track:

- Task success rates

- Decision latency

- Tool failure frequency

- Cost per completed objective

- Reasoning drift over time

Without observability, agents degrade silently and fail publicly.
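The metrics listed above can be computed from one record per task. The sketch below assumes a hypothetical `TaskRecord` shape and uses a drop in success rate between two windows as a crude stand-in for reasoning drift, which in practice needs richer signals than a single number.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class TaskRecord:
    succeeded: bool
    latency_s: float
    tool_calls: int
    tool_failures: int
    cost_usd: float


def summarize(records: list[TaskRecord]) -> dict[str, float]:
    """Aggregate per-task records into the headline agent metrics."""
    if not records:
        raise ValueError("need at least one record")
    completed = sum(r.succeeded for r in records)
    total_calls = sum(r.tool_calls for r in records)
    total_failures = sum(r.tool_failures for r in records)
    total_cost = sum(r.cost_usd for r in records)
    return {
        "task_success_rate": completed / len(records),
        "mean_decision_latency_s": mean(r.latency_s for r in records),
        "tool_failure_rate": total_failures / total_calls if total_calls else 0.0,
        # Cost is divided by completed objectives, not attempts.
        "cost_per_completed_objective": total_cost / completed if completed else float("inf"),
    }


def success_drift(baseline: list[TaskRecord], recent: list[TaskRecord]) -> float:
    """Positive values mean the recent window succeeds less often than the baseline."""
    return summarize(baseline)["task_success_rate"] - summarize(recent)["task_success_rate"]
```

Dividing cost by completed objectives, instead of by attempts, keeps failed runs from making an agent look cheaper than it is.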

Step 8: Prepare for Multi-Agent and Hybrid Deployment

By 2026, many scalable systems use:

- Multiple cooperating agents

- Hybrid cloud / on-prem inference

- Partial edge execution for latency and compliance

This architecture allows agents to scale horizontally without increasing cognitive or operational risk.
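To make the hybrid idea concrete, here is one hypothetical routing policy. The rules, the 100 ms cutoff, and the `Request` fields are all assumptions for illustration; real placement decisions depend on your regulatory and hardware constraints.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    EDGE = "edge"
    ON_PREM = "on_prem"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Request:
    contains_regulated_data: bool
    latency_budget_ms: int
    needs_large_model: bool


def route(req: Request, edge_latency_cutoff_ms: int = 100) -> Target:
    """Pick where to run inference for one request."""
    fits_on_edge = (
        req.latency_budget_ms <= edge_latency_cutoff_ms and not req.needs_large_model
    )
    if fits_on_edge:
        return Target.EDGE  # tight latency, small model: stay close to the user
    if req.contains_regulated_data:
        return Target.ON_PREM  # regulated data never leaves owned infrastructure
    return Target.CLOUD
```

Under this policy regulated data only ever lands on edge or on-prem targets, which is the compliance property the sketch is meant to show.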

Bottom Line: Scaling AI Agents Is an Architecture Problem

LLMs enable intelligence, but systems enable scale.

Scalable AI agents require deliberate design across reasoning, orchestration, memory, execution, governance, and observability. This is why organizations increasingly partner with specialized AI agent development services: not to build smarter demos, but to deploy reliable autonomous systems that survive real usage.
