An Unexpected Judicial Intervention in AI Supply Chain Oversight

On a spring morning in 2026, the AI community was taken aback when a federal judge issued an injunction halting the U.S. government's designation of Anthropic, a leading AI startup, as a "supply chain risk." This legal pause has sent ripples across the AI and automation sectors, raising critical questions about the intersection of national security, technological innovation, and regulatory oversight. For those tracking the trajectory of AI governance, this development marks a pivotal moment: the judiciary stepping in to recalibrate the balance between governmental caution and industry growth.

Anthropic, a key player in large language models and AI safety research, was flagged by the Department of Defense (DoD) and related agencies under a designation that signals potential vulnerabilities in its supply chain. This move followed a broader government initiative aimed at mitigating risks posed by critical technology suppliers amid escalating geopolitical tensions and concerns over AI’s strategic implications. However, the company challenged the designation in court, arguing that the government’s classification was based on incomplete assessments and lacked due process.

"This injunction signals the judiciary's recognition of the need for thorough, evidence-based evaluation before imposing sweeping designations that can impact innovation and national interests," legal analyst Karen Wu noted in a recent briefing.

With the designation temporarily lifted by the injunction, industry stakeholders are closely watching the court proceedings and their implications for AI supply chain policy. This article explores the background of the designation, analyzes the core arguments on both sides, details recent developments in 2026, and discusses the broader impact on AI companies navigating supply chain risks.

Background and Context: How Supply Chain Risk Became a Flashpoint in AI

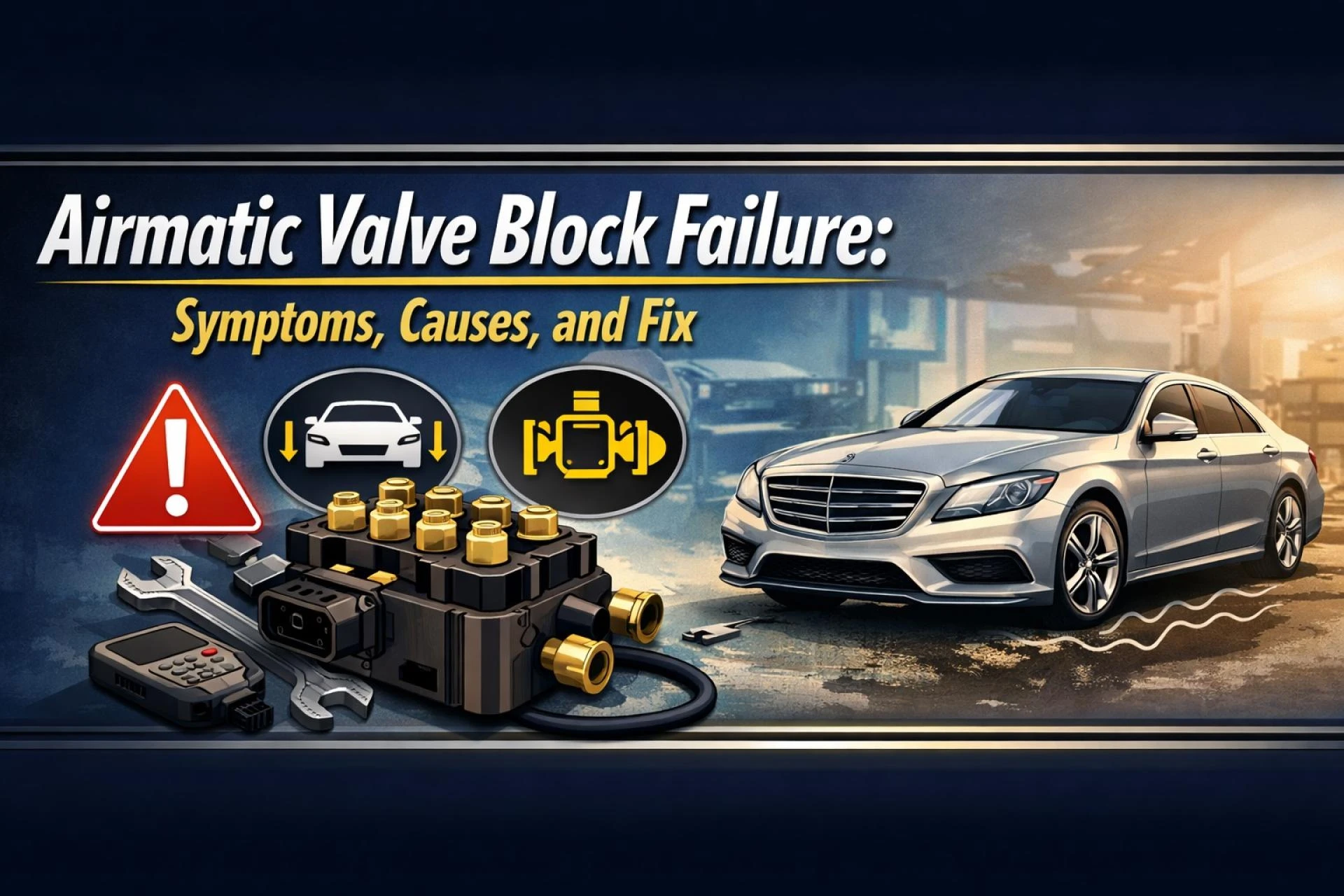

The origins of this dispute trace back to the U.S. government's increasing scrutiny of technology providers deemed critical to national security. In late 2024, amid growing concerns about AI’s dual-use potential and supply chain vulnerabilities, the Pentagon and the National Security Agency (NSA) began deploying a framework to identify and manage suppliers that might pose risks, whether from foreign influence, espionage, or operational dependencies on components perceived as insecure.

Anthropic, founded in 2021 by former OpenAI researchers, quickly ascended to prominence with its focus on AI safety and ethical deployment, developing proprietary models competing with giants like OpenAI and Google DeepMind. However, its reliance on certain hardware and software vendors, some with international ties, triggered alarms within U.S. defense circles. By mid-2025, Anthropic was formally designated as a "supply chain risk," a status that restricted its access to certain government contracts and raised questions among private sector clients.

As reported by MSN, the designation cited concerns over components sourced through supply chains vulnerable to foreign interference, although specifics were classified.

This designation fits a broader geopolitical context. The U.S.-China technology rivalry has intensified scrutiny of AI suppliers, especially those whose supply chains might incorporate hardware or software from adversarial nations. The government’s policy aimed to preempt the risk of compromised AI systems being weaponized or manipulated, but it also ignited debates about overreach and the unintended consequences for innovation.

The designation's impact was immediate and tangible: Anthropic lost several government contracts, faced investor uncertainty, and encountered operational hurdles. The company’s lawsuit argued that the government did not provide sufficient transparency or evidence supporting the designation and that the process infringed on its due process rights.

Core Analysis: Legal and Technical Dimensions of the Supply Chain Risk Halt

The central legal question revolves around whether the government followed procedural fairness and had adequate grounds to impose the supply chain risk designation on Anthropic. The judge’s injunction emphasized gaps in the government’s justification and the significant harm the designation inflicted on Anthropic’s business and reputation.

From a technical perspective, the supply chain designation is rooted in risk management methodologies that assess vulnerabilities based on hardware provenance, software dependencies, and third-party vendor security postures. However, AI companies’ supply chains are inherently complex and globalized, often involving semiconductor fabrication in East Asia, cloud infrastructure from multinational providers, and open-source software components.
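To make the idea of scoring suppliers on these three factors concrete, here is a minimal sketch in Python. It is not the government's methodology, which the article does not describe; the `Supplier` fields, the `WEIGHTS` values, and the 0-to-1 scales are all assumptions chosen purely for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights, for illustration only. The article does not say how
# any agency actually weights these factors.
WEIGHTS = {"provenance": 0.4, "dependencies": 0.3, "vendor_posture": 0.3}


@dataclass
class Supplier:
    name: str
    provenance: float      # 0.0 = well-documented origin, 1.0 = unknown origin
    dependencies: float    # 0.0 = few, audited dependencies, 1.0 = many, unaudited
    vendor_posture: float  # 0.0 = strong security posture, 1.0 = weak posture


def risk_score(supplier: Supplier) -> float:
    """Return a weighted average of the three factor scores, in [0, 1]."""
    factors = {
        "provenance": supplier.provenance,
        "dependencies": supplier.dependencies,
        "vendor_posture": supplier.vendor_posture,
    }
    for key, value in factors.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{key} must be between 0 and 1, got {value}")
    return sum(WEIGHTS[key] * value for key, value in factors.items())


def rank_suppliers(suppliers: list[Supplier]) -> list[Supplier]:
    """Sort suppliers from highest to lowest risk score."""
    return sorted(suppliers, key=risk_score, reverse=True)


if __name__ == "__main__":
    # Made-up suppliers; the names and scores are not real data.
    suppliers = [
        Supplier("chip-fab-a", provenance=0.2, dependencies=0.1, vendor_posture=0.3),
        Supplier("oss-library-b", provenance=0.6, dependencies=0.8, vendor_posture=0.5),
    ]
    for s in rank_suppliers(suppliers):
        print(f"{s.name}: {risk_score(s):.2f}")
```

A static weighted score like this is easy to audit but says nothing about how the inputs were gathered, which is where the evidentiary dispute in this case actually lies.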

Anthropic’s defense highlighted the company’s rigorous internal security protocols, extensive audits of its suppliers, and investments in supply chain transparency. Moreover, it noted that some government agencies, including the NSA, had continued to use Anthropic’s Mythos AI model despite the designation, a contradiction that complicates the government’s position.

According to eWeek, the NSA’s ongoing use of Mythos AI underscores the nuanced balance between risk and utility.

This discrepancy fueled Anthropic’s argument that the designation was not only procedurally flawed but also inconsistent with practical government application. The judge’s ruling underscored the necessity for a more transparent, evidence-based approach before imposing such impactful classifications.

Examining the data reveals:

- Supply chain audits by Anthropic: Conducted bi-annually with independent verification since 2023.

- Percentage of foreign components: Estimated 18% of hardware components sourced internationally, primarily from Taiwan and South Korea, regions with strong U.S. alliances.

- Government contracts at risk: Approximately $150 million in AI-related contracts suspended due to the designation.

- Legal precedents: Few cases have challenged supply chain risk designations in the AI sector, making this a landmark ruling.

Current Developments in 2026: The Unfolding Legal Battle and Industry Responses

Following the injunction, Anthropic has aggressively pursued its case, filing motions to have the designation set aside permanently and requesting detailed disclosures from the government regarding the evidence behind it. The legal battle has attracted attention from AI developers, investors, and policymakers alike. Many are watching to see whether this case will establish new standards for how supply chain risks are defined and enforced in AI.

Simultaneously, the administration has reiterated its commitment to securing AI supply chains but signaled openness to refining the designation process to incorporate clearer criteria and due process safeguards. This shift reflects mounting pressure from the tech industry and legal experts concerned about regulatory overreach stifling innovation.

Industry groups have mobilized, issuing white papers and hosting forums to discuss best practices for supply chain security tailored to AI. These groups include global AI leaders, semiconductor manufacturers, and cloud service providers, and they emphasize collaborative risk assessment rather than unilateral government mandates.

In South Korea, where Samsung and other tech giants have significant AI hardware production, there is keen interest in how these developments will affect international supply chains and partnerships. Seoul’s smart city initiatives increasingly rely on secure AI infrastructures, making supply chain integrity a top priority.

As detailed in a WriteUpCafe analysis, "Advanced Strategies After Anthropic’s Supply-Chain Risk Halt in 2026," companies are adapting by implementing multi-layered verification and transparency tools across their supply chains.

Meanwhile, Anthropic continues to enhance its supply chain transparency, publishing detailed reports and adopting blockchain-based tracking for critical components. These efforts aim to reassure clients and regulators of their commitment to security without compromising agility.
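The article does not describe how Anthropic's tracking system works. As a rough illustration of the underlying idea behind ledger-style component tracking, the sketch below builds a simple hash-chained log in Python: each entry's hash covers the previous entry's hash, so altering any earlier record is detectable. The record fields and function names are hypothetical, and a real blockchain deployment would add distribution, signatures, and consensus on top of this.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(chain: list, record: dict) -> None:
    """Append a record, linking it to the current tail of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append(
        {"record": record, "prev": prev_hash, "hash": entry_hash(prev_hash, record)}
    )


def verify_chain(chain: list) -> bool:
    """Recompute every hash and link; return False on any mismatch."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    # Made-up component records, for illustration only.
    log = []
    append_entry(log, {"component": "accelerator-001", "event": "manufactured"})
    append_entry(log, {"component": "accelerator-001", "event": "shipped"})
    print(verify_chain(log))  # chain is intact

    log[0]["record"]["event"] = "refurbished"  # tamper with history
    print(verify_chain(log))  # tampering is detected
```

The design choice here is that integrity comes from chaining alone; it shows that records were not changed after the fact, not that they were accurate when first written.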

Expert Perspectives and Industry Impact: Navigating Between Security and Innovation

The Anthropic case has drawn commentary from legal scholars, AI ethicists, and supply chain experts who emphasize the delicate balance required in regulating AI technology. On one hand, national security imperatives justify stringent oversight of AI suppliers; on the other, overly broad or opaque designations risk chilling innovation and disadvantaging domestic AI firms in the global market.

Professor Min-Jae Lee, an expert in AI policy at Seoul National University, remarked, "This injunction reflects a necessary corrective in how governments handle complex AI supply chains. Transparency and evidence must underpin risk designations to avoid undermining technological leadership." His perspective resonates with many in South Korea’s burgeoning AI sector, which closely watches U.S. regulatory trends.

Meanwhile, industry leaders stress that supply chain risk management must evolve beyond static lists and incorporate dynamic, data-driven risk assessments that reflect real-time changes in geopolitical and technological landscapes. This approach requires close collaboration between private companies, governments, and international partners.

JD Supra’s legal analysis highlights that "Anthropic’s challenge exposes the need for clear guidelines and practical precautions for AI companies operating under supply chain risk frameworks."

For AI firms, the case serves as a cautionary tale and a call to action: robust internal controls, transparent supply chain documentation, and legal preparedness are no longer optional but prerequisites for survival in a security-conscious environment.

What to Watch: Future Outlook and Strategic Takeaways for AI Supply Chains

As the Anthropic case progresses, several key factors will shape the future landscape of AI supply chain risk management. First, judicial decisions will clarify the limits of executive power in designating supply chain risks and set procedural standards for affected companies. This will influence how swiftly and fairly governments can act to mitigate real or perceived threats.

Second, technological advancements in supply chain transparency—such as blockchain verification, AI-driven anomaly detection, and secure hardware provenance tracking—will become mainstream tools to demonstrate compliance and reduce risks. Companies investing early in these technologies will gain competitive advantages.

Third, international cooperation will be critical. Given the globalized nature of AI supply chains, unilateral designations can disrupt alliances and trade. Frameworks that harmonize security standards across allies, including South Korea, the U.S., and the EU, will be essential.

For AI enterprises and investors, the following strategic priorities emerge:

- Enhance end-to-end supply chain visibility leveraging emerging technologies.

- Engage proactively with regulators to shape fair and transparent policies.

- Develop contingency plans to mitigate designation risks, including diversified suppliers.

- Invest in legal expertise to navigate evolving regulatory landscapes.

As noted in the WriteUpCafe piece "Judge Halts Anthropic Supply-Chain Risk Designation 2026," "This case redefines how AI companies must strategize around regulatory risks, embedding supply chain security into their core operational DNA."

Ultimately, the Anthropic case exemplifies the complex interplay between cutting-edge technology, national security, and legal frameworks. It underscores that safeguarding AI supply chains requires nuanced, transparent, and collaborative approaches that protect both innovation and security.

For those invested in AI and automation tools, monitoring this case offers invaluable insights into the evolving governance of one of the most transformative technologies of our era.
