Understanding Database Migration

Effective database migration strategies begin with a clear understanding of what migration actually means. Database migration refers to transferring data from one storage system or environment to another, whether that is a move to the cloud or a complete switch of database engines. The core challenge lies in maintaining data integrity and application availability at the same time. Legacy "Big Bang" approaches often result in hours or days of downtime, which businesses operating 24/7 cannot afford.

The Database Migration Process

A structured migration involves three foundational steps:

Assessment and Planning comes first — evaluating data volumes, schema compatibility (homogeneous vs. heterogeneous), and network latency to confirm the infrastructure can support replication traffic.
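
As a rough illustration of the capacity check in this step, the sketch below estimates how long an initial copy would take and whether the link can absorb ongoing replication traffic. The function names and the 0.7 efficiency factor are assumptions made for this example, not measured values; real planning should use benchmarked throughput.

```python
def estimate_transfer_hours(data_gb: float, link_mbps: float,
                            efficiency: float = 0.7) -> float:
    """Rough hours needed to copy data_gb over a link rated at link_mbps.

    efficiency is an assumed fraction of nominal bandwidth that is actually
    usable once protocol overhead and competing traffic are accounted for.
    """
    if data_gb < 0 or link_mbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("data_gb >= 0, link_mbps > 0, 0 < efficiency <= 1")
    data_megabits = data_gb * 8 * 1000  # decimal gigabytes -> megabits
    seconds = data_megabits / (link_mbps * efficiency)
    return seconds / 3600


def replication_keeps_up(change_rate_mbps: float, link_mbps: float,
                         efficiency: float = 0.7) -> bool:
    """True if the usable bandwidth exceeds the rate at which changes arrive."""
    return change_rate_mbps < link_mbps * efficiency


if __name__ == "__main__":
    hours = estimate_transfer_hours(data_gb=500, link_mbps=1000)
    print(f"Initial copy: about {hours:.1f} hours")
    print("Replication sustainable:", replication_keeps_up(120, 1000))
```

If the second check fails, replication lag will grow without bound regardless of how the rest of the migration is tuned.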

Schema Conversion follows for heterogeneous migrations, such as moving from MongoDB to PostgreSQL, where NoSQL BSON documents must be mapped to relational SQL schemas using tools like AWS SCT or custom scripts.
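
To make the document-to-relational mapping concrete, here is a minimal custom-script sketch in Python. It flattens nested sub-documents into column-style keys and turns an embedded array into rows for a child table. The `order` document, the field names, and the `parent_id`/`position` columns are hypothetical, and the sketch assumes array elements are themselves documents; a real conversion would also need to handle BSON-specific types and type mapping.

```python
from typing import Any


def flatten_document(doc: dict[str, Any], parent: str = "",
                     sep: str = "_") -> dict[str, Any]:
    """Flatten nested dicts into column-like keys (customer.name -> customer_name)."""
    row: dict[str, Any] = {}
    for key, value in doc.items():
        column = f"{parent}{sep}{key}" if parent else key
        if isinstance(value, dict):
            row.update(flatten_document(value, column, sep))
        else:
            row[column] = value
    return row


def split_child_rows(doc: dict[str, Any], array_field: str,
                     parent_key: str = "_id") -> list[dict[str, Any]]:
    """Turn an embedded array of documents into child-table rows with a foreign key."""
    parent_id = doc[parent_key]
    return [
        {"parent_id": parent_id, "position": i, **flatten_document(item)}
        for i, item in enumerate(doc.get(array_field, []))
    ]


# Hypothetical source document, shaped like something stored in MongoDB.
order = {
    "_id": "o1",
    "customer": {"name": "Ada", "address": {"city": "Paris"}},
    "items": [{"sku": "A", "qty": 2}, {"sku": "B", "qty": 1}],
}

# Row for a parent "orders" table: everything except the embedded array.
parent_row = flatten_document({k: v for k, v in order.items() if k != "items"})
# Rows for a child "order_items" table.
item_rows = split_child_rows(order, "items")
```

Here `parent_row` holds `_id`, `customer_name`, and `customer_address_city`, while each entry of `item_rows` carries the parent's `_id` as a foreign key.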

Data Cleansing wraps up the pre-migration phase by archiving obsolete records and normalizing data, ensuring only clean, relevant data makes the journey.

The Three Database Migration Strategies

Selection depends on your Recovery Time Objective (RTO) and available budget:

Big Bang Migration moves everything in one go during a scheduled maintenance window. It's straightforward but risky, demanding significant downtime — best reserved for small, non-critical databases.

Trickle (Phased) Migration shifts data incrementally while both systems operate in parallel. It reduces risk and enables real-time validation, but requires complex bi-directional synchronization to prevent data drift.

Zero-Downtime Migration (Live Replication) is the enterprise-grade approach. It uses Change Data Capture (CDC) to stream changes from the source to the target so the two stay continuously in sync until cutover. Downtime shrinks to the brief cutover itself, rollback stays straightforward as long as the source is kept live, and the approach suits systems that must never go offline.
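
The core of CDC is applying an ordered stream of change events to the target in a way that is safe to replay. The sketch below illustrates that idea only: the event shape (`seq`, `op`, `id`, `email`) and the `users` table are invented for the example, and SQLite stands in for the target database. Real CDC tools define their own event formats and position tracking.

```python
import sqlite3


def apply_changes(target: sqlite3.Connection, events: list[dict],
                  last_applied: int = 0) -> int:
    """Apply change events in sequence order and return the new high-water mark.

    Events at or below last_applied are skipped, so replaying a batch after a
    crash or retry does not apply any change twice.
    """
    for event in sorted(events, key=lambda e: e["seq"]):
        if event["seq"] <= last_applied:
            continue  # already applied on a previous run
        if event["op"] in ("insert", "update"):
            # Upsert keeps the apply step idempotent for inserts and updates.
            target.execute(
                "INSERT OR REPLACE INTO users (id, email) VALUES (?, ?)",
                (event["id"], event["email"]),
            )
        elif event["op"] == "delete":
            target.execute("DELETE FROM users WHERE id = ?", (event["id"],))
        else:
            raise ValueError(f"unknown operation: {event['op']!r}")
        last_applied = event["seq"]
    target.commit()
    return last_applied


if __name__ == "__main__":
    target = sqlite3.connect(":memory:")
    target.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
    batch = [
        {"seq": 1, "op": "insert", "id": 1, "email": "a@example.com"},
        {"seq": 2, "op": "update", "id": 1, "email": "a2@example.com"},
    ]
    mark = apply_changes(target, batch)
    print(mark, target.execute("SELECT * FROM users").fetchall())
```

Persisting the returned high-water mark alongside the target data is what lets the process resume after an interruption without losing or duplicating changes.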

Zero-Downtime Approaches by Database Engine

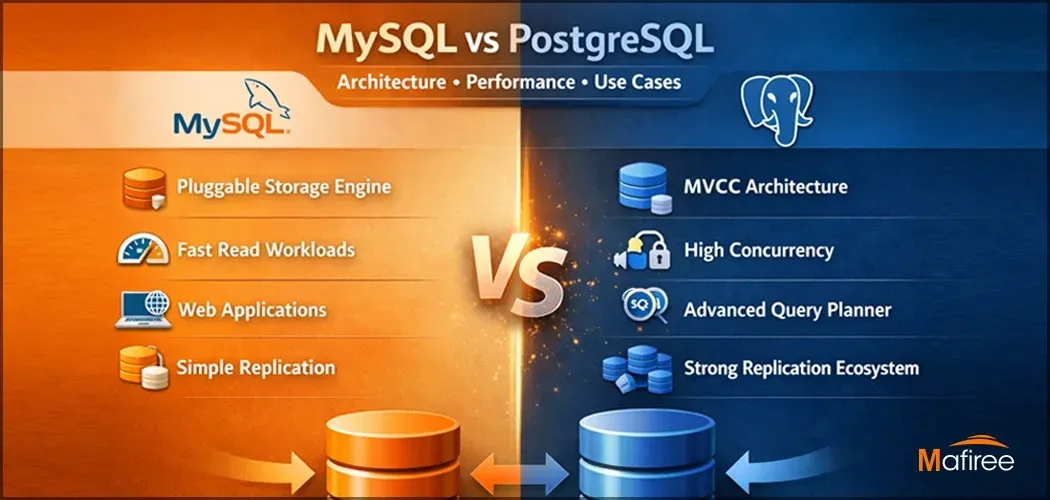

MySQL uses a Primary-Replica Switchover. An initial dump taken with mysqldump or Percona XtraBackup is restored to the target, then Binary Log (Binlog) replication catches up on live changes. Once replication lag reaches zero and writes to the source are paused, traffic is redirected to the new database.

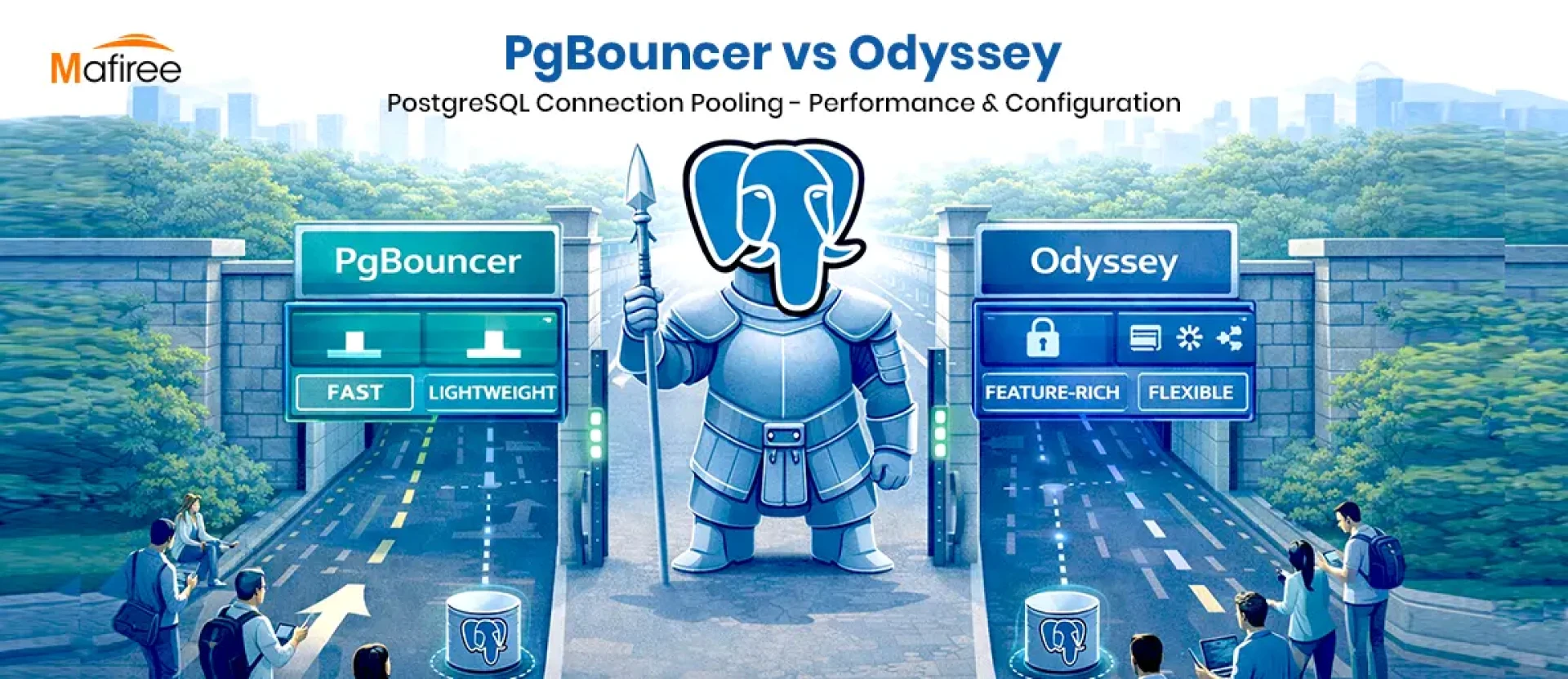

PostgreSQL leverages Logical Replication, which enables migration across major versions (e.g., PG 12 to PG 16) with near-zero downtime. Unlike physical replication, it works at table-level granularity, so you can choose exactly which tables to replicate.
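
Logical replication is configured with a publication on the source and a subscription on the target. The Python helper below is a small sketch that only builds those two SQL statements; the publication, subscription, and table names and the connection string are placeholders. It assumes the source runs with `wal_level = logical` and that the schema already exists on the target, since logical replication does not copy DDL.

```python
import re

# Accept only simple lowercase identifiers so names can be interpolated safely.
_IDENT = re.compile(r"^[a-z_][a-z0-9_]*$")


def _check(name: str) -> str:
    if not _IDENT.match(name):
        raise ValueError(f"unsafe identifier: {name!r}")
    return name


def publication_sql(pub_name: str, tables: list[str]) -> str:
    """Statement to run on the source (publisher) database."""
    table_list = ", ".join(_check(t) for t in tables)
    return f"CREATE PUBLICATION {_check(pub_name)} FOR TABLE {table_list};"


def subscription_sql(sub_name: str, pub_name: str, conninfo: str) -> str:
    """Statement to run on the target (subscriber) database."""
    escaped = conninfo.replace("'", "''")  # escape quotes inside the literal
    return (
        f"CREATE SUBSCRIPTION {_check(sub_name)} "
        f"CONNECTION '{escaped}' PUBLICATION {_check(pub_name)};"
    )


if __name__ == "__main__":
    # All names and the connection string below are placeholders.
    print(publication_sql("migration_pub", ["orders", "customers"]))
    print(subscription_sql(
        "migration_sub", "migration_pub",
        "host=old-db.example.internal dbname=app user=replicator",
    ))
```

Listing tables explicitly in the publication is what gives the table-level control described above; tables left out simply are not replicated.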

MongoDB relies on Replica Set Oplog Tailing: new nodes on the target infrastructure are added to the existing replica set, complete an initial sync, and then stay current by replaying the primary's oplog. For cross-platform moves such as migrations to MongoDB Atlas, tools like mongomirror can automate the process end to end.

Common Failure Patterns and Their Fixes

Even carefully planned migrations can go wrong. Six recurring failure scenarios to watch for:

- Replication Lag Spike: rows are missing at cutover. Fix: alert when replication lag exceeds 5 seconds, and do not cut over until it drops back below that threshold.

- Schema Mismatch Post-Cutover: the application crashes or hits constraint errors. Fix: always run a schema compatibility audit, especially for heterogeneous migrations.

- Silent Data Corruption: row counts appear correct but values are wrong. Fix: compare MD5/SHA checksums between source and target on all critical tables.

- Insufficient Network Bandwidth: replication falls behind and never recovers. Fix: benchmark network throughput against data volume and change rate before the migration begins.

- No Rollback Plan: a failed cutover leaves no recovery path. Fix: keep the source database live as a fallback with a documented, time-boxed rollback window.

- Index Bloat Post-Cutover: query performance degrades after go-live. Fix: run VACUUM/ANALYZE (PostgreSQL) or OPTIMIZE TABLE (MySQL) immediately after cutover to refresh planner statistics, and rebuild indexes (REINDEX in PostgreSQL) if they are actually bloated.
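
The checksum fix for silent data corruption can be sketched as follows. The example hashes every row of a table in key order and returns the row count together with a SHA-256 digest, so two tables with equal counts but different values are still caught. SQLite stands in for both databases, and the `accounts` table and `table_checksum` helper are hypothetical. Across different engines, values would first need to be normalized to a common representation (for example, timestamps and decimals), which this sketch does not attempt.

```python
import hashlib
import sqlite3


def table_checksum(conn: sqlite3.Connection, table: str,
                   key_column: str) -> tuple[int, str]:
    """Return (row_count, sha256_hex) for a table, reading rows in key order.

    table and key_column are interpolated into the query, so they must come
    from trusted configuration, never from user input.
    """
    digest = hashlib.sha256()
    count = 0
    for row in conn.execute(f"SELECT * FROM {table} ORDER BY {key_column}"):
        digest.update(repr(row).encode("utf-8"))
        count += 1
    return count, digest.hexdigest()


def make_db(rows: list[tuple[int, str]]) -> sqlite3.Connection:
    """Build an in-memory database with a small hypothetical accounts table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance TEXT)")
    conn.executemany("INSERT INTO accounts VALUES (?, ?)", rows)
    return conn


if __name__ == "__main__":
    source = make_db([(1, "100.00"), (2, "250.50")])
    target = make_db([(1, "100.00"), (2, "250.05")])  # same count, one wrong value
    src = table_checksum(source, "accounts", "id")
    tgt = table_checksum(target, "accounts", "id")
    print("row counts match:", src[0] == tgt[0])
    print("checksums match:", src[1] == tgt[1])
```

For very large tables, the same idea is usually applied per key range so that a mismatch can be narrowed down to a small chunk instead of forcing a full re-copy.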

Testing Strategy

Testing at every phase is what protects against a failed migration:

Pre-Migration: Verify backups, audit schema compatibility, test network throughput, complete at least two full dry runs in a staging environment, and document the rollback plan.

During Migration: Continuously monitor replication lag and CDC error rates; confirm lag remains below 5 seconds before proceeding to cutover; keep the source database live as a safety net.
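
A single low reading can be misleading, so one way to implement the lag check is to require several consecutive samples under the threshold before allowing cutover. In the sketch below, `read_lag_seconds` is a hypothetical callable supplied by the caller; for MySQL it might wrap the `Seconds_Behind_Source` value from `SHOW REPLICA STATUS`. The sample count, polling interval, and timeout are illustrative defaults, not recommendations.

```python
import time
from typing import Callable, Optional


def wait_for_stable_lag(
    read_lag_seconds: Callable[[], Optional[float]],
    threshold: float = 5.0,
    required_samples: int = 3,
    interval: float = 1.0,
    timeout: float = 300.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Return True once lag stays below threshold for required_samples in a row.

    A reading of None (for example, replication stopped) resets the streak.
    Returns False if the timeout expires first.
    """
    deadline = time.monotonic() + timeout
    streak = 0
    while time.monotonic() < deadline:
        lag = read_lag_seconds()
        if lag is not None and lag < threshold:
            streak += 1
        else:
            streak = 0  # one bad sample restarts the count
        if streak >= required_samples:
            return True
        sleep(interval)
    return False


if __name__ == "__main__":
    # Simulated lag readings standing in for a real monitoring query.
    samples = iter([12.0, 4.0, 6.0, 3.0, 2.0, 1.0])
    ok = wait_for_stable_lag(lambda: next(samples), interval=0.0)
    print("safe to cut over:", ok)
```

Resetting the streak on any bad sample is a deliberately conservative choice: it delays cutover slightly but avoids switching traffic during a brief dip between lag spikes.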

Post-Migration: Validate row counts, run checksum verification, conduct User Acceptance Testing (UAT) with stakeholders, and perform index rebuilds alongside query plan reviews.

Key Best Practices

Always keep a verified off-site backup before starting. Lower DNS TTLs well ahead of the cutover so clients pick up the new endpoint quickly, and script the cutover steps to shorten the transition window. Run at least two production-equivalent dry runs. Monitor for index bloat and cache misses right after go-live.

The takeaway: migration failures are preventable. Success hinges on thorough preparation, the right tooling, and a disciplined, phase-by-phase testing strategy.
