Data has become the new currency, and unlike gold, more of it is created every second. From customer information and operational data to the endless stream of content powering our digital lives, the sheer volume of data is staggering. This explosion presents a significant challenge for businesses: where and how do we store all this information securely, accessibly, and cost-effectively?

Traditional storage methods are struggling to keep up. Relying solely on on-premise servers or a single cloud provider is no longer enough. The future of network storage solutions is about creating a smarter, more flexible ecosystem. This means embracing a combination of technologies like edge computing, hybrid cloud models, and intelligent data tiering to build a robust and efficient storage infrastructure.

This post will explore the trends shaping the future of data storage. We will examine how edge computing is bringing data processing closer to the source, why hybrid cloud is becoming the go-to strategy for enterprises, and how intelligent data tiering is optimizing costs and performance. Understanding these shifts is crucial for any organization looking to build a data strategy that's ready for tomorrow.

The Challenge: Managing the Data Deluge

The amount of data created and consumed globally keeps growing year after year, often faster than storage budgets do. This growth puts immense pressure on existing network storage solutions. Businesses are grappling with several key issues:

- Scalability: How can storage systems expand seamlessly to accommodate terabytes, or even petabytes, of new data without a complete overhaul?

- Performance: How can we ensure low-latency access to critical data, especially for real-time applications and analytics?

- Cost: What is the most cost-effective way to store vast amounts of data, much of which may be infrequently accessed but must be retained for compliance?

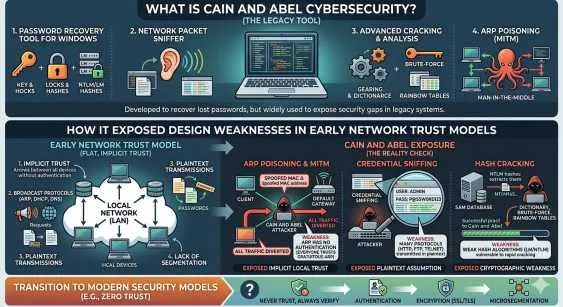

- Security: How do we protect sensitive data across different environments, from on-premise servers to multiple cloud platforms?

Simply buying more hard drives or increasing cloud storage subscriptions isn't a sustainable answer. A more strategic approach is required, one that leverages the unique strengths of different storage technologies.

Edge Computing: Bringing Storage Closer to the Action

Edge computing represents a fundamental shift in where data is processed and stored. Instead of sending all data to a centralized cloud or data center, edge computing performs computation closer to the physical location where the data is generated. Think of IoT sensors on a factory floor, smart cameras in a retail store, or medical devices in a hospital.

Why Edge Storage Matters

By processing and storing data at the "edge" of the network, organizations can achieve significant benefits:

- Reduced Latency: For applications that require immediate responses, like autonomous vehicles or industrial automation, sending data to a distant cloud is too slow. Edge storage provides the near-instant access needed for these time-sensitive operations.

- Bandwidth Conservation: Transmitting massive datasets to the cloud can be expensive and strain network resources. Edge devices can process data locally and only send essential information or summaries to the central server, reducing bandwidth consumption.

- Improved Reliability: Edge systems can continue to operate even if the connection to the central cloud is lost. This is critical for remote or mobile deployments where network connectivity may be unreliable.

Edge computing doesn't replace the cloud; it complements it. It acts as a crucial first line of data processing, filtering, and temporary storage, making the entire network more efficient.
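To make the bandwidth point concrete, here is a minimal sketch of an edge device collapsing a window of raw readings into one small summary before anything leaves the site. The sensor name, the sample values, and the alert threshold are all assumptions made up for illustration, not part of any particular product.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Summary:
    sensor_id: str
    count: int
    minimum: float
    maximum: float
    average: float


def summarize(sensor_id: str, readings: list[float]) -> Summary:
    """Collapse a window of raw readings into one compact record."""
    if not readings:
        raise ValueError("need at least one reading")
    return Summary(sensor_id, len(readings), min(readings), max(readings), mean(readings))


def anomalies(readings: list[float], limit: float) -> list[float]:
    """Keep only readings above a hypothetical alert threshold."""
    return [r for r in readings if r > limit]


# Hypothetical window of temperature readings collected on the device.
window = [20.0, 20.5, 21.0, 95.0, 20.5]
summary = summarize("temp-01", window)
alerts = anomalies(window, limit=80.0)

# Only `summary` and `alerts` would be sent upstream; the raw window stays local.
print(summary)
print(alerts)
```

In this sketch the central server receives one record and one alert instead of every reading. A real deployment would also decide how long the raw window is kept on the device.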

Hybrid Cloud: The Best of Both Worlds

For years, the debate was "public cloud vs. private cloud." Today, the answer for most enterprises is "both." A hybrid cloud strategy combines an organization's private cloud or on-premise infrastructure with one or more public cloud services, allowing data and applications to be shared between them.

The Power of Hybrid Network Attached Storage (NAS)

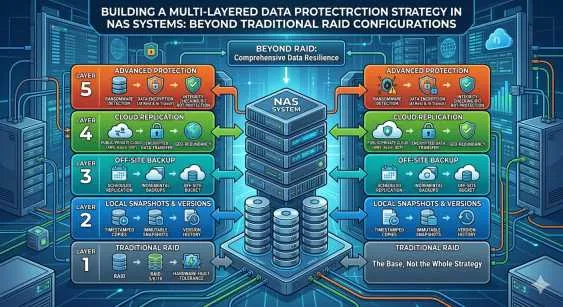

An enterprise NAS system is a cornerstone of modern data management, and its evolution into a hybrid model is a game-changer. A hybrid cloud approach allows businesses to:

- Maintain Control: Keep sensitive data, such as customer records or intellectual property, on a private, on-premise enterprise NAS for maximum security and control.

- Leverage Cloud Scalability: Use the public cloud for less sensitive data and development environments, or to absorb unexpected spikes in demand. That last pattern, known as "cloud bursting," provides flexibility without the need for massive upfront investment in hardware.

- Optimize NAS Backup and Disaster Recovery: A hybrid model is ideal for creating a robust NAS backup strategy. You can automatically back up data from your on-premise NAS to the cloud, ensuring it's protected from local disasters like hardware failure, fires, or floods. This provides a secure and cost-effective off-site backup solution.

This balanced approach allows organizations to tailor their network storage solutions to their specific needs, optimizing for performance, security, and cost simultaneously.
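As a rough illustration, the placement rules described above can be written down as a small policy function. Everything here is hypothetical: the `sensitive` flag, the `Dataset` and `Placement` types, and the two location names stand in for whatever classification scheme and storage targets your organization actually uses.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Location(Enum):
    ON_PREM_NAS = "on-premise NAS"
    PUBLIC_CLOUD = "public cloud"


@dataclass
class Dataset:
    name: str
    sensitive: bool  # hypothetical flag set by your own classification process


@dataclass
class Placement:
    primary: Location
    offsite_backup: Optional[Location]


def plan_placement(dataset: Dataset) -> Placement:
    """Sensitive data stays on the NAS and is backed up off-site to the cloud."""
    if dataset.sensitive:
        return Placement(Location.ON_PREM_NAS, Location.PUBLIC_CLOUD)
    # Less sensitive data lives in the cloud; this sketch does not model its backup.
    return Placement(Location.PUBLIC_CLOUD, None)


for ds in [Dataset("customer-records", True), Dataset("dev-sandbox", False)]:
    p = plan_placement(ds)
    print(f"{ds.name}: primary={p.primary.value}")
```

The point of the sketch is only that the decision can be made explicit and automated. Real policies usually weigh more than a single flag, such as compliance rules and access patterns.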

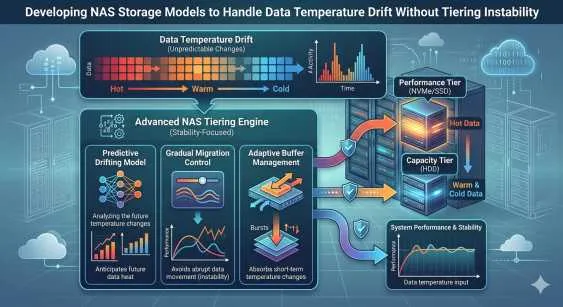

Intelligent Data Tiering: Smart Storage for Every Byte

Not all data is created equal. Some data, like active project files or recent transaction records, needs to be accessed instantly. Other data, such as old emails or archived financial records, might be needed only for compliance and can be stored in a slower, less expensive medium.

This is where intelligent data tiering comes in. It's an automated process that moves data between different storage tiers based on predefined policies, access frequency, and business value.

How Data Tiering Works

A typical tiered storage architecture might look like this:

- Tier 1 (Hot Tier): High-performance, expensive storage like solid-state drives (SSDs) for mission-critical data that is accessed frequently.

- Tier 2 (Warm Tier): A balance of performance and cost, often using traditional hard disk drives (HDDs) for data that is accessed less frequently but still needs to be readily available.

- Tier 3 (Cold Tier): Low-cost, high-capacity storage like cloud archival services (e.g., Amazon S3 Glacier) for long-term data retention and NAS backup. Access times are slower, but the cost per gigabyte is significantly lower.
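A tiering policy based on access frequency can be as simple as the sketch below. The 30-day and 180-day cutoffs are assumptions chosen for illustration. Real systems usually let you configure them and may also consider file type or business value.

```python
from datetime import datetime, timedelta

# Assumed cutoffs; tune these to your own access patterns.
HOT_MAX_AGE = timedelta(days=30)
WARM_MAX_AGE = timedelta(days=180)


def choose_tier(last_access: datetime, now: datetime) -> str:
    """Pick a storage tier from the time elapsed since the last access."""
    age = now - last_access
    if age <= HOT_MAX_AGE:
        return "hot"
    if age <= WARM_MAX_AGE:
        return "warm"
    return "cold"


now = datetime(2024, 1, 1)
for days in (5, 90, 400):
    print(days, "days since last access:", choose_tier(now - timedelta(days=days), now))
```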

By automatically moving data to the appropriate tier, intelligent data tiering ensures that you're not paying for high-speed storage for data that doesn't need it. This can substantially reduce overall storage costs while keeping frequently accessed, critical data on the fastest storage.
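A quick back-of-the-envelope calculation shows why this matters. The per-gigabyte prices and the 10/30/60 split below are invented for illustration and are not quotes from any vendor, so substitute your own figures.

```python
# Hypothetical prices in dollars per GB per month; substitute your own quotes.
PRICE_PER_GB = {"hot": 0.10, "warm": 0.03, "cold": 0.004}


def monthly_cost(gb_by_tier: dict[str, float]) -> float:
    """Sum the monthly cost of the data held in each tier."""
    return sum(PRICE_PER_GB[tier] * gb for tier, gb in gb_by_tier.items())


total_gb = 100_000  # roughly 100 TB
all_hot = monthly_cost({"hot": total_gb})
tiered = monthly_cost({"hot": 10_000, "warm": 30_000, "cold": 60_000})

print(f"all hot: ${all_hot:,.0f}/month, tiered: ${tiered:,.0f}/month")
```

With these made-up numbers, the tiered layout comes to about $2,140 a month against $10,000 for keeping everything on the hot tier. The sketch ignores retrieval and data-transfer fees, which archival services often charge and which can change the picture for data that is read back frequently.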

Building Your Future-Ready Storage Strategy

The future of network storage solutions is not about choosing one single technology but about integrating multiple solutions into a cohesive, intelligent system. Edge, hybrid cloud, and data tiering work together to create an infrastructure that is scalable, efficient, and secure.

As you plan for your organization's data needs, consider these steps:

- Assess Your Data: Understand the different types of data you have, how frequently it's accessed, and its security requirements.

- Embrace a Hybrid Model: Design a storage architecture that combines the security of an on-premise enterprise NAS with the flexibility of the public cloud.

- Implement Tiering: Use automated data tiering to optimize your storage costs without sacrificing performance.

- Plan for the Edge: Identify opportunities where edge computing can reduce latency and improve the efficiency of your operations.
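For the first step, a small script can give a rough picture of how much of your data is actually active. The sketch below totals file sizes under a directory by age. It uses last-modified time as a stand-in for last access, because access times are often not updated on production file systems. The bucket boundaries are assumptions.

```python
import os
import time
from collections import Counter
from typing import Optional


def bytes_by_age(root: str, now: Optional[float] = None) -> Counter:
    """Total file sizes under `root`, bucketed by days since last modification."""
    now = time.time() if now is None else now
    totals: Counter = Counter()
    for folder, _dirs, files in os.walk(root):
        for name in files:
            info = os.stat(os.path.join(folder, name))
            age_days = (now - info.st_mtime) / 86400
            if age_days <= 30:
                totals["under 30 days"] += info.st_size
            elif age_days <= 180:
                totals["30 to 180 days"] += info.st_size
            else:
                totals["over 180 days"] += info.st_size
    return totals


if __name__ == "__main__":
    # Scans the current directory; point it at a share you want to assess.
    for bucket, size in bytes_by_age(".").items():
        print(f"{bucket}: {size / 1e9:.2f} GB")
```

If most of the bytes land in the oldest bucket, that share is a likely candidate for a colder tier.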

By embracing these trends, your organization can move beyond simply storing data and start leveraging it as a true strategic asset.
